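Reflections

Although I have already completed 25 lessons, I am still learning.

This lesson is as beginner-friendly as the earlier ones.

The visualization is well done, so waiting for a run to finish does not cause anxiety.

It shows progress, elapsed time, estimated remaining time, processing speed, and intermediate results.

As I noticed earlier, these lessons focus less on explaining the model itself and more on how the model is implemented on the MindSpore platform. The underlying theory probably has to be studied after class.

This lesson covers the implementation of the BERT model on the MindSpore platform.

As before, the lesson walks through every step one at a time. By following along, you learn how the model is implemented and can then put it to use on the platform.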

Check-in screenshot

Environment setup

[1]:

%%capture captured_output

# The lab environment ships with mindspore==2.2.14 preinstalled; to use a different MindSpore version, change the version number below

!pip uninstall mindspore -y

!pip install -i https://pypi.mirrors.ustc.edu.cn/simple mindspore==2.2.14

[2]:

# This example was adapted for mindnlp 0.3.1; if it fails to run, pin the version with `!pip install mindnlp==0.3.1`

!pip install mindnlp

Successfully installed addict-2.4.0 aiohttp-3.9.5 aiosignal-1.3.1 async-timeout-4.0.3 datasets-2.20.0 evaluate-0.4.2 frozenlist-1.4.1 fsspec-2024.5.0 hypothesis-6.108.2 jieba-0.42.1 mindnlp-0.3.1 ml-dtypes-0.4.0 multidict-6.0.5 multiprocess-0.70.16 pyarrow-17.0.0 pyarrow-hotfix-0.6 pyctcdecode-0.5.0 pygtrie-2.5.0 pytest-7.2.0 regex-2024.5.15 safetensors-0.4.3 sentencepiece-0.2.0 sortedcontainers-2.4.0 tokenizers-0.19.1 xxhash-3.4.1 yarl-1.9.4

[3]:

!pip show mindspore

Name: mindspore
Version: 2.2.14
Summary: MindSpore is a new open source deep learning training/inference framework that could be used for mobile, edge and cloud scenarios.
Home-page: https://www.mindspore.cn
Author: The MindSpore Authors
Author-email: [email protected]
License: Apache 2.0
Location: /home/nginx/miniconda/envs/jupyter/lib/python3.9/site-packages
Requires: asttokens, astunparse, numpy, packaging, pillow, protobuf, psutil, scipy
Required-by: mindnlp

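BERT Dialogue Emotion Recognition with MindSpore

Model overview

BERT stands for Bidirectional Encoder Representations from Transformers, a language model developed and released by Google in late 2018. Pre-trained language models like BERT play an important role in many natural language processing tasks, such as question answering, named entity recognition, natural language inference, and text classification. The model is built on the Transformer encoder with a bidirectional structure, so a solid understanding of the Transformer encoder is essential.

BERT's main innovations lie in its pre-training method: it uses Masked Language Model and Next Sentence Prediction to capture word-level and sentence-level representations, respectively.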

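When BERT is trained with the Masked Language Model objective, 15% of the tokens in the corpus are randomly selected for masking. Each selected token is handled in one of three ways: 80% are replaced with [Mask], 10% are replaced with another random token, and 10% are left unchanged.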
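The 80/10/10 rule can be sketched in a few lines of plain Python. This is only an illustration: the function name `mask_tokens`, the toy vocabulary, and the per-token coin flip used to select roughly 15% of positions are assumptions, not code from the course or from BERT's reference implementation.

```python
import random

MASK_TOKEN = "[MASK]"


def mask_tokens(tokens, vocab, mask_prob=0.15, seed=0):
    """Apply BERT-style masking and return (masked_tokens, labels).

    labels[i] holds the original token at selected positions, None elsewhere.
    """
    rng = random.Random(seed)
    masked = list(tokens)
    labels = [None] * len(tokens)
    for i, token in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # position not selected for prediction
        labels[i] = token
        roll = rng.random()
        if roll < 0.8:
            masked[i] = MASK_TOKEN         # 80%: replace with the mask token
        elif roll < 0.9:
            masked[i] = rng.choice(vocab)  # 10%: replace with a random token
        # remaining 10%: keep the original token unchanged
    return masked, labels


# Toy input (hypothetical): a short sentence repeated to get enough positions.
tokens = ["我", "见", "到", "你", "很", "高", "兴"] * 20
vocab = sorted(set(tokens))
masked, labels = mask_tokens(tokens, vocab)
selected = sum(label is not None for label in labels)
print(f"selected {selected} of {len(tokens)} positions")
```

Only the selected positions contribute to the loss, which is why `labels` stays `None` everywhere else.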

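Because tasks such as Question Answering (QA) and Natural Language Inference (NLI) depend on how two sentences relate to each other, the Next Sentence Prediction pre-training task was added so that the model learns the relationship between two sentences. Compared with the Masked Language Model task, Next Sentence Prediction is simpler: the training input is a pair of sentences A and B, where B has a 50% chance of being the sentence that actually follows A, and BERT predicts whether B is the next sentence after A.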
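As a rough sketch, Next Sentence Prediction training pairs could be built as follows. The helper `make_nsp_pairs` is hypothetical, and drawing the negative sentence from the same document is a simplification chosen to keep the example self-contained.

```python
import random


def make_nsp_pairs(sentences, seed=0):
    """Build (sentence_a, sentence_b, is_next) examples from an ordered document.

    Assumes at least three distinct sentences so a negative can always be drawn.
    """
    rng = random.Random(seed)
    pairs = []
    for i in range(len(sentences) - 1):
        sentence_a = sentences[i]
        if rng.random() < 0.5:
            # Positive example: B really is the sentence that follows A.
            pairs.append((sentence_a, sentences[i + 1], 1))
        else:
            # Negative example: any sentence except A itself and the true next one.
            candidates = [s for j, s in enumerate(sentences) if j not in (i, i + 1)]
            pairs.append((sentence_a, rng.choice(candidates), 0))
    return pairs


doc = ["s0", "s1", "s2", "s3"]  # placeholder sentences
pairs = make_nsp_pairs(doc)
for sentence_a, sentence_b, is_next in pairs:
    print(sentence_a, sentence_b, is_next)
```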

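After pre-training, BERT's embedding table and Transformer weights are saved: 12 layers for BERT-BASE or 24 layers for BERT-LARGE. The pre-trained model can then be fine-tuned for downstream tasks such as text classification, similarity judgment, and reading comprehension.

Dialogue emotion recognition (Emotion Detection, EmoTect for short) focuses on identifying the user's emotion in intelligent dialogue scenarios. Given the user's text, it automatically determines the emotion category and gives a corresponding confidence score; the categories are positive, negative, and neutral. It applies to chat, customer service, and similar scenarios. It helps companies monitor dialogue quality and improve the user interaction experience, and it can also be used to analyze customer service quality and reduce the cost of manual quality inspection.

The rest of this lesson uses a text sentiment classification task to illustrate the whole process of applying the BERT model.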

[4]:

import os

import mindspore

from mindspore.dataset import text, GeneratorDataset, transforms

from mindspore import nn, context

from mindnlp._legacy.engine import Trainer, Evaluator

from mindnlp._legacy.engine.callbacks import CheckpointCallback, BestModelCallback

from mindnlp._legacy.metrics import Accuracy

Building prefix dict from the default dictionary ...
Dumping model to file cache /tmp/jieba.cache
Loading model cost 1.018 seconds.
Prefix dict has been built successfully.

[5]:

# prepare dataset
class SentimentDataset:
    """Sentiment Dataset"""

    def __init__(self, path):
        self.path = path
        self._labels, self._text_a = [], []
        self._load()

    def _load(self):
        with open(self.path, "r", encoding="utf-8") as f:
            dataset = f.read()
        lines = dataset.split("\n")
        # skip the header row and the empty string left after the final newline
        for line in lines[1:-1]:
            label, text_a = line.split("\t")
            self._labels.append(int(label))
            self._text_a.append(text_a)

    def __getitem__(self, index):
        return self._labels[index], self._text_a[index]

    def __len__(self):
        return len(self._labels)

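Dataset

A labeled, pre-tokenized chatbot conversation dataset from Baidu's PaddlePaddle team is provided here. The data has two columns separated by a tab ('\t'): the first column is the emotion label (0 for negative, 1 for neutral, 2 for positive), and the second column is Chinese text segmented by spaces. The file is UTF-8 encoded; sample rows are shown below.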

label--text_a

0--谁骂人了?我从来不骂人,我骂的都不是人,你是人吗 ?

1--我有事等会儿就回来和你聊

2--我见到你很高兴谢谢你帮我

This part mainly covers reading the dataset, converting the data format, tokenizing the text, and padding.
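To make the two-column layout concrete, here is a small sketch that parses the same format from an in-memory string instead of a file. The helper `parse_tsv` and the English label names are assumptions for illustration; the three rows reuse the sample texts shown above.

```python
import io

# Header row plus three rows in the "label<TAB>text_a" layout described above.
SAMPLE_TSV = (
    "label\ttext_a\n"
    "0\t谁骂人了?我从来不骂人,我骂的都不是人,你是人吗 ?\n"
    "1\t我有事等会儿就回来和你聊\n"
    "2\t我见到你很高兴谢谢你帮我\n"
)

LABEL_NAMES = {0: "negative", 1: "neutral", 2: "positive"}


def parse_tsv(stream):
    """Return (labels, texts) from a tab-separated stream with a header row."""
    labels, texts = [], []
    next(stream)  # skip the header line
    for line in stream:
        line = line.rstrip("\n")
        if not line:
            continue  # tolerate blank lines at the end of the file
        label, text = line.split("\t", 1)
        labels.append(int(label))
        texts.append(text)
    return labels, texts


labels, texts = parse_tsv(io.StringIO(SAMPLE_TSV))
for label, text in zip(labels, texts):
    print(LABEL_NAMES[label], text)
```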

[6]:

# download dataset

!wget https://baidu-nlp.bj.bcebos.com/emotion_detection-dataset-1.0.0.tar.gz -O emotion_detection.tar.gz

!tar xvf emotion_detection.tar.gz

--2024-07-18 02:26:09-- https://baidu-nlp.bj.bcebos.com/emotion_detection-dataset-1.0.0.tar.gz
Resolving baidu-nlp.bj.bcebos.com (baidu-nlp.bj.bcebos.com)... 113.200.2.111, 119.249.103.5, 2409:8c04:1001:1203:0:ff:b0bb:4f27
Connecting to baidu-nlp.bj.bcebos.com (baidu-nlp.bj.bcebos.com)|113.200.2.111|:443... connected.
HTTP request sent, awaiting response... 200 OK
Length: 1710581 (1.6M) [application/x-gzip]
Saving to: ‘emotion_detection.tar.gz’
emotion_detection.t 100%[===================>] 1.63M 4.42MB/s in 0.4s
2024-07-18 02:26:09 (4.42 MB/s) - ‘emotion_detection.tar.gz’ saved [1710581/1710581]
data/
data/test.tsv
data/infer.tsv
data/dev.tsv
data/train.tsv
data/vocab.txt

Data loading and preprocessing

Define a process_dataset function for loading and preprocessing the data; see the comments in the code below for details.

[7]:

import numpy as np

def process_dataset(source, tokenizer, max_seq_len=64, batch_size=32, shuffle=True):
    is_ascend = mindspore.get_context('device_target') == 'Ascend'

    column_names = ["label", "text_a"]
    dataset = GeneratorDataset(source, column_names=column_names, shuffle=shuffle)
    # transforms
    type_cast_op = transforms.TypeCast(mindspore.int32)

    def tokenize_and_pad(text):
        if is_ascend:
            # static shape: pad or truncate every sample to max_seq_len
            tokenized = tokenizer(text, padding='max_length', truncation=True, max_length=max_seq_len)
        else:
            tokenized = tokenizer(text)
        return tokenized['input_ids'], tokenized['attention_mask']

    # map dataset
    dataset = dataset.map(operations=tokenize_and_pad, input_columns="text_a", output_columns=['input_ids', 'attention_mask'])
    dataset = dataset.map(operations=[type_cast_op], input_columns="label", output_columns='labels')
    # batch dataset
    if is_ascend:
        dataset = dataset.batch(batch_size)
    else:
        # dynamic shape: pad each batch to the length of its longest sample
        dataset = dataset.padded_batch(batch_size, pad_info={'input_ids': (None, tokenizer.pad_token_id),
                                                             'attention_mask': (None, 0)})

    return dataset

Dynamic shapes are not yet supported on Ascend NPUs, so the preprocessing step uses static shapes in that environment:

[8]:

from mindnlp.transformers import BertTokenizer

tokenizer = BertTokenizer.from_pretrained('bert-base-chinese')


[9]:

tokenizer.pad_token_id

[9]:

0

[10]:

dataset_train = process_dataset(SentimentDataset("data/train.tsv"), tokenizer)

dataset_val = process_dataset(SentimentDataset("data/dev.tsv"), tokenizer)

dataset_test = process_dataset(SentimentDataset("data/test.tsv"), tokenizer, shuffle=False)

[11]:

dataset_train.get_col_names()

[11]:

['input_ids', 'attention_mask', 'labels']

[12]:

print(next(dataset_train.create_tuple_iterator()))

[Tensor(shape=[32, 64], dtype=Int64, value= [[ 101, 2769, 1348 ... 0, 0, 0], [ 101, 2894, 7509 ... 0, 0, 0], [ 101, 3300, 6443 ... 0, 0, 0], ... [ 101, 3221, 2616 ... 0, 0, 0], [ 101, 1536, 2769 ... 0, 0, 0], [ 101, 3612, 6589 ... 0, 0, 0]]), Tensor(shape=[32, 64], dtype=Int64, value= [[1, 1, 1 ... 0, 0, 0], [1, 1, 1 ... 0, 0, 0], [1, 1, 1 ... 0, 0, 0], ... [1, 1, 1 ... 0, 0, 0], [1, 1, 1 ... 0, 0, 0], [1, 1, 1 ... 0, 0, 0]]), Tensor(shape=[32], dtype=Int32, value= [1, 1, 1, 1, 1, 1, 1, 1, 1, 0, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 2, 1, 1, 0, 1, 1, 0, 1, 1, 1])]
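The `[32, 64]` shape above is what static padding produces: every sample is padded or truncated to `max_seq_len`. The plain-Python sketch below contrasts that with padding each batch to its longest sample. The helper names and token ids are made up for illustration, and the code only mirrors the idea behind the two branches of `process_dataset`, not MindSpore's implementation.

```python
def pad_to_length(ids, length, pad_id=0):
    """Truncate or right-pad one sequence to exactly `length` tokens.

    Returns (padded_ids, attention_mask), where the mask is 1 for real tokens.
    """
    ids = ids[:length]
    padding = length - len(ids)
    return ids + [pad_id] * padding, [1] * len(ids) + [0] * padding


def static_batch(batch, max_seq_len, pad_id=0):
    """Static shape: every sequence in every batch gets the same fixed length."""
    return [pad_to_length(ids, max_seq_len, pad_id) for ids in batch]


def dynamic_batch(batch, pad_id=0):
    """Dynamic shape: pad only up to the longest sequence in this batch."""
    longest = max(len(ids) for ids in batch)
    return [pad_to_length(ids, longest, pad_id) for ids in batch]


# Illustrative token ids only.
batch = [[101, 2769, 102], [101, 2769, 1348, 3300, 102]]
static = static_batch(batch, max_seq_len=8)
dynamic = dynamic_batch(batch)
print(len(static[0][0]), len(dynamic[0][0]))  # 8 5
```

Static padding spends compute on pad tokens but keeps every batch the same shape, which is the property the Ascend branch relies on.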

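Model construction

Use BertForSequenceClassification to build a BERT model for sentiment classification: load the pre-trained weights and set the number of labels for the three-class task, and the model is built automatically. Automatic mixed precision is then applied to the model to speed up training. Next, instantiate the optimizer and the evaluation metric, configure the checkpoint-saving strategy, and finally build the trainer and start training.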

[13]:

from mindnlp.transformers import BertForSequenceClassification, BertModel

from mindnlp._legacy.amp import auto_mixed_precision

# set bert config and define parameters for training

model = BertForSequenceClassification.from_pretrained('bert-base-chinese', num_labels=3)

model = auto_mixed_precision(model, 'O1')

optimizer = nn.Adam(model.trainable_params(), learning_rate=2e-5)


The following parameters in checkpoint files are not loaded: ['cls.predictions.bias', 'cls.predictions.transform.dense.bias', 'cls.predictions.transform.dense.weight', 'cls.seq_relationship.bias', 'cls.seq_relationship.weight', 'cls.predictions.transform.LayerNorm.bias', 'cls.predictions.transform.LayerNorm.weight']
The following parameters in models are missing parameter: ['classifier.weight', 'classifier.bias']

[14]:

metric = Accuracy()

# define callbacks to save checkpoints

ckpoint_cb = CheckpointCallback(save_path='checkpoint', ckpt_name='bert_emotect', epochs=1, keep_checkpoint_max=2)

best_model_cb = BestModelCallback(save_path='checkpoint', ckpt_name='bert_emotect_best', auto_load=True)

trainer = Trainer(network=model, train_dataset=dataset_train,
                  eval_dataset=dataset_val, metrics=metric,
                  epochs=5, optimizer=optimizer, callbacks=[ckpoint_cb, best_model_cb])

[15]:

%%time

# start training

trainer.run(tgt_columns="labels")

The train will start from the checkpoint saved in 'checkpoint'.

Epoch 0: 100%

302/302 [03:58<00:00, 1.91s/it, loss=0.35274565]

Checkpoint: 'bert_emotect_epoch_0.ckpt' has been saved in epoch: 0.

Evaluate: 100%

34/34 [00:08<00:00, 1.31s/it]

Evaluate Score: {'Accuracy': 0.9166666666666666}

---------------Best Model: 'bert_emotect_best.ckpt' has been saved in epoch: 0.---------------

Epoch 1: 100%

302/302 [02:36<00:00, 1.92it/s, loss=0.18815893]

Checkpoint: 'bert_emotect_epoch_1.ckpt' has been saved in epoch: 1.

Evaluate: 100%

34/34 [00:04<00:00, 7.63it/s]

Evaluate Score: {'Accuracy': 0.95}

---------------Best Model: 'bert_emotect_best.ckpt' has been saved in epoch: 1.---------------

Epoch 2: 100%

302/302 [02:37<00:00, 1.96it/s, loss=0.12937787]

The maximum number of stored checkpoints has been reached. Checkpoint: 'bert_emotect_epoch_2.ckpt' has been saved in epoch: 2.

Evaluate: 100%

34/34 [00:04<00:00, 8.17it/s]

Evaluate Score: {'Accuracy': 0.9675925925925926}

---------------Best Model: 'bert_emotect_best.ckpt' has been saved in epoch: 2.---------------

Epoch 3: 100%

302/302 [02:38<00:00, 1.98it/s, loss=0.094353676]

The maximum number of stored checkpoints has been reached. Checkpoint: 'bert_emotect_epoch_3.ckpt' has been saved in epoch: 3.

Evaluate: 100%

34/34 [00:04<00:00, 8.16it/s]

Evaluate Score: {'Accuracy': 0.987037037037037}

---------------Best Model: 'bert_emotect_best.ckpt' has been saved in epoch: 3.---------------

Epoch 4: 100%

302/302 [02:38<00:00, 1.90it/s, loss=0.06653374]

The maximum number of stored checkpoints has been reached. Checkpoint: 'bert_emotect_epoch_4.ckpt' has been saved in epoch: 4.

Evaluate: 100%

34/34 [00:04<00:00, 8.03it/s]

Evaluate Score: {'Accuracy': 0.9907407407407407}

---------------Best Model: 'bert_emotect_best.ckpt' has been saved in epoch: 4.---------------

Loading best model from 'checkpoint' with '['Accuracy']': [0.9907407407407407]...

---------------The model is already load the best model from 'bert_emotect_best.ckpt'.---------------

CPU times: user 22min 28s, sys: 13min 42s, total: 36min 10s

Wall time: 15min 11s
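The log above is consistent with two separate policies: a rolling window of recent checkpoints (`keep_checkpoint_max=2`) and a single best checkpoint chosen by validation accuracy. The sketch below illustrates that bookkeeping with a hypothetical `CheckpointTracker` class; it is not how MindNLP's callbacks are implemented, and the scores are rounded from the run above.

```python
from collections import deque


class CheckpointTracker:
    """Tracks the newest `keep_max` checkpoint names and the best-scoring epoch."""

    def __init__(self, keep_max=2):
        # A bounded deque silently drops the oldest name once it is full.
        self.recent = deque(maxlen=keep_max)
        self.best_epoch = None
        self.best_score = float("-inf")

    def update(self, epoch, score):
        self.recent.append(f"bert_emotect_epoch_{epoch}.ckpt")
        if score > self.best_score:
            self.best_epoch, self.best_score = epoch, score


tracker = CheckpointTracker(keep_max=2)
# Validation accuracies per epoch, rounded from the training log.
for epoch, score in enumerate([0.9167, 0.95, 0.9676, 0.9870, 0.9907]):
    tracker.update(epoch, score)

print(list(tracker.recent), tracker.best_epoch)
```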

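Model evaluation

Feed the evaluation data (here the test split, dataset_test) into the trained model to see how well the model performs on data it was not trained on. The metric used here is accuracy.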

[16]:

evaluator = Evaluator(network=model, eval_dataset=dataset_test, metrics=metric)

evaluator.run(tgt_columns="labels")

Evaluate: 100%

33/33 [00:07<00:00, 1.17it/s]

Evaluate Score: {'Accuracy': 0.9025096525096525}
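For reference, accuracy is simply the share of samples whose highest-scoring class matches the label. A minimal pure-Python sketch follows; the `accuracy` helper and the toy logits are assumptions for illustration, not the `Accuracy` metric class used above.

```python
def accuracy(logits, labels):
    """Fraction of rows whose highest-scoring class index equals the label."""
    correct = 0
    for row, label in zip(logits, labels):
        predicted = max(range(len(row)), key=row.__getitem__)  # argmax over classes
        correct += int(predicted == label)
    return correct / len(labels)


# Four toy samples with three class scores each (negative, neutral, positive).
logits = [
    [2.0, 0.1, -1.0],  # predicts 0
    [0.2, 1.5, 0.3],   # predicts 1
    [0.1, 0.2, 0.0],   # predicts 1, but the label is 2
    [1.0, 0.0, 3.0],   # predicts 2
]
labels = [0, 1, 2, 2]
print(accuracy(logits, labels))  # 0.75
```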

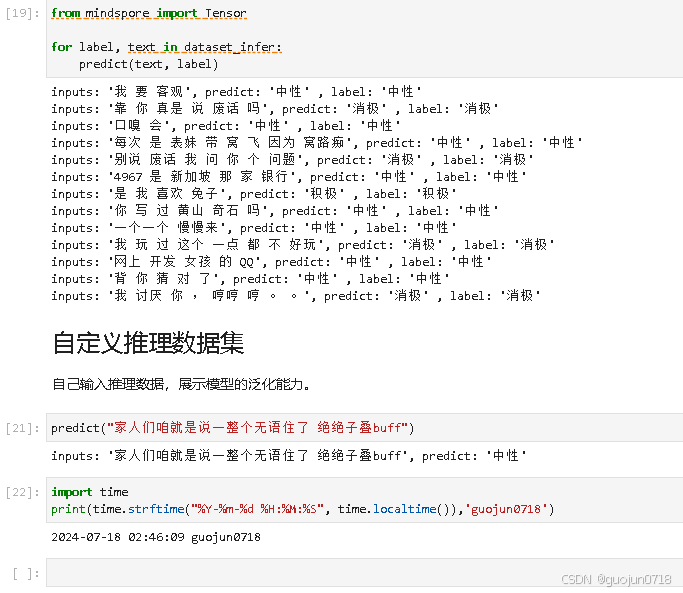

Model inference

Iterate over the inference dataset and display each prediction alongside its label.

[17]:

dataset_infer = SentimentDataset("data/infer.tsv")

[18]:

from mindspore import Tensor

def predict(text, label=None):
    label_map = {0: "消极", 1: "中性", 2: "积极"}  # negative, neutral, positive

    text_tokenized = Tensor([tokenizer(text).input_ids])
    logits = model(text_tokenized)
    # logits[0] holds the class scores; argmax picks the highest-scoring class
    predict_label = logits[0].asnumpy().argmax()
    info = f"inputs: '{text}', predict: '{label_map[predict_label]}'"
    if label is not None:
        info += f" , label: '{label_map[label]}'"
    print(info)

[19]:

from mindspore import Tensor

for label, text in dataset_infer:
    predict(text, label)

inputs: '我 要 客观', predict: '中性' , label: '中性'
inputs: '靠 你 真是 说 废话 吗', predict: '消极' , label: '消极'
inputs: '口嗅 会', predict: '中性' , label: '中性'
inputs: '每次 是 表妹 带 窝 飞 因为 窝路痴', predict: '中性' , label: '中性'
inputs: '别说 废话 我 问 你 个 问题', predict: '消极' , label: '消极'
inputs: '4967 是 新加坡 那 家 银行', predict: '中性' , label: '中性'
inputs: '是 我 喜欢 兔子', predict: '积极' , label: '积极'
inputs: '你 写 过 黄山 奇石 吗', predict: '中性' , label: '中性'
inputs: '一个一个 慢慢来', predict: '中性' , label: '中性'
inputs: '我 玩 过 这个 一点 都 不 好玩', predict: '消极' , label: '消极'
inputs: '网上 开发 女孩 的 QQ', predict: '中性' , label: '中性'
inputs: '背 你 猜 对 了', predict: '中性' , label: '中性'
inputs: '我 讨厌 你 , 哼哼 哼 。 。', predict: '消极' , label: '消极'
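The predictions above report only the winning class. If a confidence score is also wanted, one common approach is to pass the class scores through a softmax. The sketch below assumes raw logits for the three classes; the helper names and the example scores are hypothetical and not part of the course code.

```python
import math

LABEL_MAP = {0: "negative", 1: "neutral", 2: "positive"}


def softmax(logits):
    """Convert raw class scores into probabilities that sum to 1."""
    shift = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_with_confidence(logits):
    """Return (label_name, probability) for the highest-scoring class."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return LABEL_MAP[best], probs[best]


# Made-up scores for one sentence, in the order negative, neutral, positive.
label, confidence = predict_with_confidence([-1.2, 3.4, 0.3])
print(label, round(confidence, 3))
```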

Custom inference data

Enter your own text to see how well the model generalizes.

[21]:

predict("家人们咱就是说一整个无语住了 绝绝子叠buff")

inputs: '家人们咱就是说一整个无语住了 绝绝子叠buff', predict: '中性'

[22]:

import time

print(time.strftime("%Y-%m-%d %H:%M:%S", time.localtime()),'guojun0718')

2024-07-18 02:46:09 guojun0718
