Classification Sample Program

Tools

- HALCON development tool (HDevelop)

- HALCON labeling tool (MVTec Deep Learning Tool)

- Visual Studio 2022 Community Edition

Labeling the images

Sample images

The sample images are taken from the HALCON demo images:

C:\Users\Public\Documents\MVTec\HALCON-20.11-Steady\examples\images\food

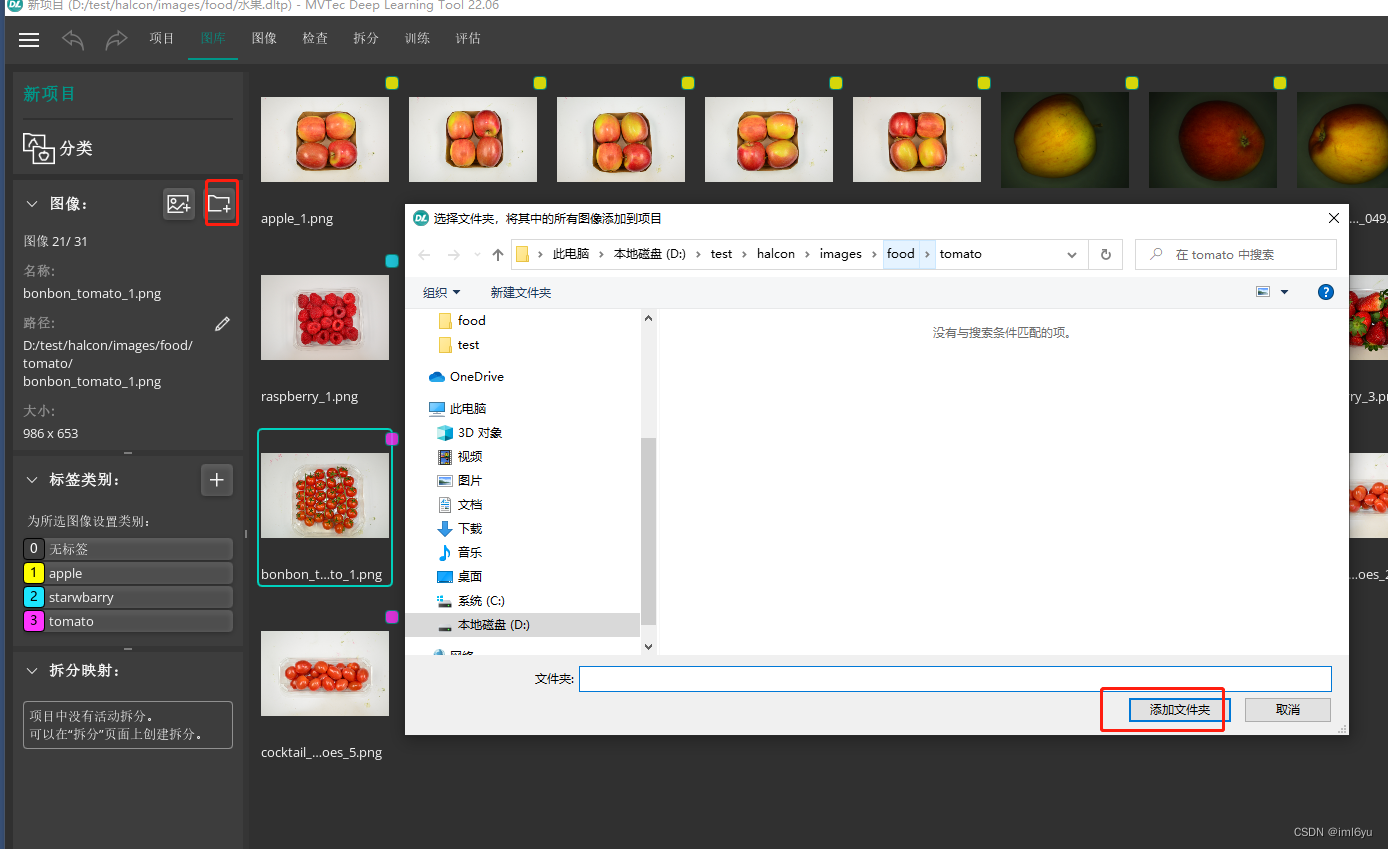

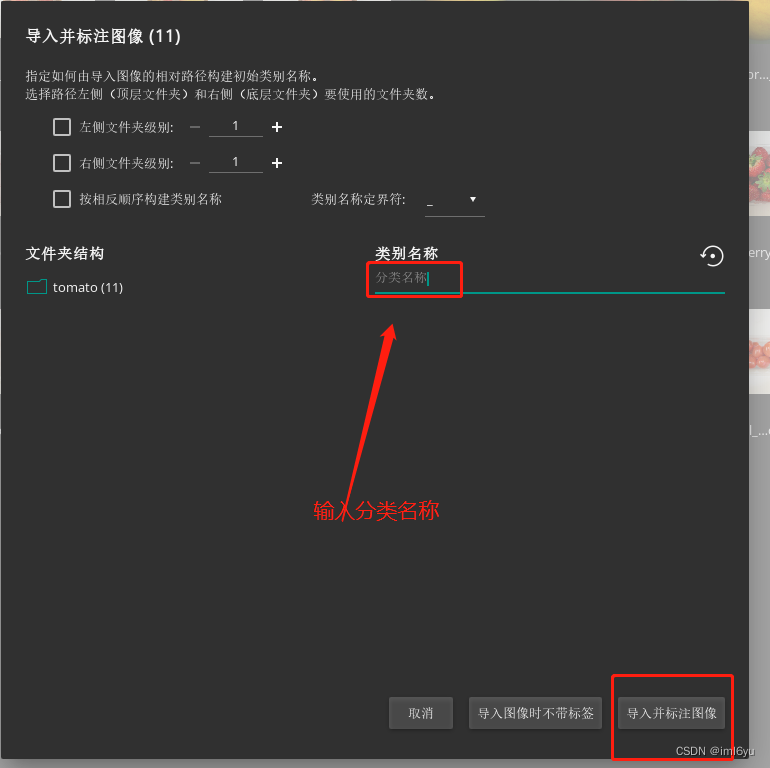

Importing and labeling the images

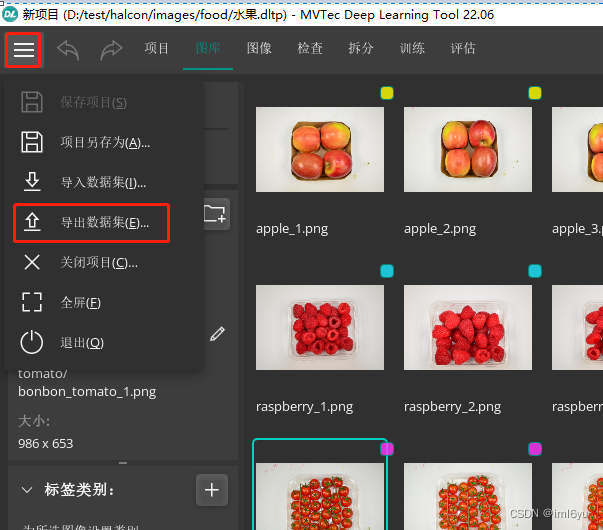

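In the DL tool (the HALCON labeling tool), create a new classification project, then import and label the images as shown in the figure.

Repeat these steps until the images of every class are labeled, then export the data.

Exporting the data

Export the data as shown in the figure.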

Before preprocessing

To avoid writing the code from scratch, we can start from HALCON's demo programs and modify them. The demo programs are located at:

C:\Users\Public\Documents\MVTec\HALCON-20.11-Steady\examples\hdevelop\Deep-Learning

Copy the Classification folder from that path to a convenient working location and modify the copy. It contains four scripts:

- classify_pill_defects_deep_learning_1_preprocess.hdev (preprocessing)

- classify_pill_defects_deep_learning_2_train.hdev (training)

- classify_pill_defects_deep_learning_3_evaluate.hdev (evaluation)

- classify_pill_defects_deep_learning_4_infer.hdev (inference)
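The four scripts hand data to each other through files in a shared output folder. The sketch below is only a bookkeeping aid in Python, not part of HALCON: the folder and file names are copied from the scripts in this document, while the short script labels and the `inputs_of` helper are made up for illustration.

```python
from pathlib import PurePosixPath

# Folder and file names copied from the scripts in this document.
EXAMPLE_DATA_DIR = PurePosixPath("defects_data")
DATA_DIRECTORY = EXAMPLE_DATA_DIR / "dldataset"

# For each file: (script that writes it, scripts or programs that read it).
# The labels such as "2_train" are illustrative shorthand for the .hdev files.
PIPELINE_FILES = {
    DATA_DIRECTORY / "dl_dataset.hdict": ("1_preprocess", ["2_train", "3_evaluate"]),
    DATA_DIRECTORY / "dl_preprocess_param.hdict": ("1_preprocess", ["2_train", "4_infer", "C# client"]),
    EXAMPLE_DATA_DIR / "best_dl_model_classification.hdl": ("2_train", ["3_evaluate", "4_infer"]),
    EXAMPLE_DATA_DIR / "final_dl_model_classification.hdl": ("2_train", ["C# client"]),
}


def inputs_of(script):
    """Return the files a given script expects to find on disk, sorted by path."""
    return sorted(
        str(path)
        for path, (_writer, readers) in PIPELINE_FILES.items()
        if script in readers
    )


if __name__ == "__main__":
    for path, (writer, readers) in PIPELINE_FILES.items():
        print(f"{path}: written by {writer}, read by {', '.join(readers)}")
```

If a later script reports a missing file, a table like this shows which earlier script has to be run first.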

Data preprocessing

Open classify_pill_defects_deep_learning_1_preprocess.hdev and modify it. The modified code is as follows:

*

* This example is part of a series of examples, which summarizes

* the workflow for DL classification. It uses the MVTec pill dataset.

*

* The four parts are:

* 1. Dataset preprocessing.

* 2. Training of the model.

* 3. Evaluation of the trained model.

* 4. Inference on new images.

*

* Hint: For a concise version of the workflow please have a look at the example:

* dl_classification_workflow.hdev

*

* This example contains part 1: 'Dataset preprocessing'.

*

dev_update_off ()

*

* *********************************

* ** Set Input/Output paths ***

* *********************************

*

* All example data is written to this folder.

ExampleDataDir := 'defects_data'

* Dataset directory basename for any outputs written by preprocess_dl_dataset.

DataDirectoryBaseName := ExampleDataDir + '/dldataset'

*

* Percentages for splitting the dataset.

TrainingPercent := 70

ValidationPercent := 15

*

* Image dimensions the images are rescaled to during preprocessing.

ImageWidth := 986

ImageHeight := 653

ImageNumChannels := 3

*

* Further parameters for image preprocessing.

NormalizationType := 'none'

DomainHandling := 'full_domain'

*

* In order to get a reproducible split we set a random seed.

* This means that re-running the script results in the same split of DLDataset.

SeedRand := 1

*

* *****************************************************************************

* ** Read the labeled data and split it into train, validation and test ***

* *****************************************************************************

*

* Set the random seed.

set_system ('seed_rand', SeedRand)

*

* Read the dataset with the procedure read_dl_dataset_classification.

* Alternatively, you can read a DLDataset dictionary

* as created by e.g., the MVTec Deep Learning Tool using read_dict().

* read_dl_dataset_classification (RawImageBaseFolder, LabelSource, DLDataset)

read_dict ('D:/test/halcon/images/food/水果.hdict', [], [], DLDataset)

* Generate the split.

split_dl_dataset (DLDataset, TrainingPercent, ValidationPercent, [])

*

*

* *********************************

* ** Preprocess the dataset ***

* *********************************

*

* Create the output directory if it does not exist yet.

file_exists (ExampleDataDir, FileExists)

if (not FileExists)

make_dir (ExampleDataDir)

endif

stop()

*

* Create preprocess parameters.

create_dl_preprocess_param ('classification', ImageWidth, ImageHeight, ImageNumChannels, -127, 128, NormalizationType, DomainHandling, [], [], [], [], DLPreprocessParam)

*

* Dataset directory for any outputs written by preprocess_dl_dataset.

DataDirectory := DataDirectoryBaseName

*

* Preprocess the dataset. This might take a few seconds.

create_dict (GenParam)

set_dict_tuple (GenParam, 'overwrite_files', true)

preprocess_dl_dataset (DLDataset, DataDirectory, DLPreprocessParam, GenParam, DLDatasetFileName)

*

* Store preprocess params separately in order to use it e.g. during inference.

PreprocessParamFileBaseName := DataDirectory + '/dl_preprocess_param.hdict'

write_dict (DLPreprocessParam, PreprocessParamFileBaseName, [], [])

dev_open_window (0, 0, 512, 512, 'black', WindowHandle)

disp_continue_message (WindowHandle, 'black', 'true')

*

* *******************************************

* ** Preview the preprocessed dataset ***

* *******************************************

*

* Before moving on to training, it is recommended to check the preprocessed dataset.

*

* Display the DLSamples for 10 randomly selected train images.

get_dict_tuple (DLDataset, 'samples', DatasetSamples)

find_dl_samples (DatasetSamples, 'split', 'train', 'match', SampleIndices)

tuple_shuffle (SampleIndices, ShuffledIndices)

read_dl_samples (DLDataset, ShuffledIndices[0:9], DLSampleBatchDisplay)

*

create_dict (WindowHandleDict)

for Index := 0 to |DLSampleBatchDisplay| - 1 by 1

* Loop over samples in DLSampleBatchDisplay.

dev_display_dl_data (DLSampleBatchDisplay[Index], [], DLDataset, 'classification_ground_truth', [], WindowHandleDict)

Text := 'Press Run (F5) to continue'

dev_disp_text (Text, 'window', 'bottom', 'right', 'black', [], [])

stop ()

endfor

*

* Close windows that have been used for visualization.

dev_close_window_dict (WindowHandleDict)

*

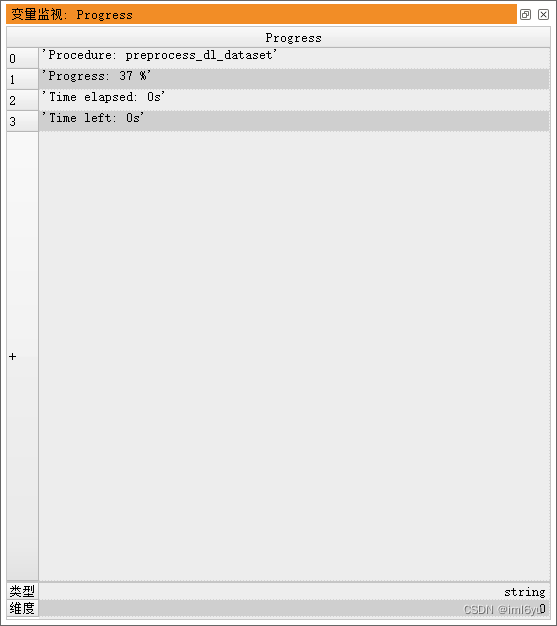

Press F5 to run the preprocessing script. The result is shown in the figure.
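The preprocessing script sets TrainingPercent := 70 and ValidationPercent := 15, so the remaining 15% of the samples become the test split, and the fixed seed makes the split repeatable. The Python sketch below only illustrates that arithmetic. It does not reproduce how split_dl_dataset distributes the classes, and the function name `split_indices` is hypothetical.

```python
import random


def split_indices(num_samples, training_percent, validation_percent, seed):
    """Shuffle sample indices reproducibly and cut them into train/validation/test."""
    indices = list(range(num_samples))
    # A dedicated generator with a fixed seed gives the same order on every run.
    random.Random(seed).shuffle(indices)
    num_train = num_samples * training_percent // 100
    num_validation = num_samples * validation_percent // 100
    train = indices[:num_train]
    validation = indices[num_train:num_train + num_validation]
    test = indices[num_train + num_validation:]  # everything that is left over
    return train, validation, test


if __name__ == "__main__":
    train, validation, test = split_indices(100, 70, 15, seed=1)
    print(len(train), len(validation), len(test))  # prints "70 15 15"
```

Changing the seed changes which images land in which split, so results from different seeds are not directly comparable.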

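Training

Training needs a computer with a GPU. Without one, training on the CPU is not recommended because it takes far too long.

GPU training requires CUDA and cuDNN. Once both are installed, run the training.

The code below is a modified version of classify_pill_defects_deep_learning_2_train.hdev: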

*

* This example is part of a series of examples, which summarizes

* the workflow for DL classification. It uses the MVTec pill dataset.

*

* The four parts are:

* 1. Dataset preprocessing.

* 2. Training of the model.

* 3. Evaluation of the trained model.

* 4. Inference on new images.

*

* This example contains part 2: 'Training of the model'.

*

* Please note: This script requires the output of part 1:

* classify_pill_defects_deep_learning_1_preprocess.hdev

*

dev_update_off ()

*

*

* Training can be performed on a GPU or CPU.

* See the respective system requirements in the Installation Guide.

* If possible a GPU is used in this example.

* In case you explicitly wish to run this example on the CPU,

* choose the CPU device instead.

query_available_dl_devices (['runtime','runtime'], ['gpu','cpu'], DLDeviceHandles)

if (|DLDeviceHandles| == 0)

throw ('No supported device found to continue this example.')

endif

* Due to the filter used in query_available_dl_devices, the first device is a GPU, if available.

DLDevice := DLDeviceHandles[0]

get_dl_device_param (DLDevice, 'type', DLDeviceType)

if (DLDeviceType == 'cpu')

* The number of used threads may have an impact

* on the training duration.

NumThreadsTraining := 4

set_system ('thread_num', NumThreadsTraining)

endif

*

* *************************************

* ** Set input and output paths ***

* *************************************

*

* All example data is written to this folder.

ExampleDataDir := 'defects_data'

* File path of the initialized model.

ModelFileName := 'pretrained_dl_classifier_compact.hdl'

* File path of the preprocessed DLDataset.

* Note: Adapt DataDirectory after preprocessing with another image size.

DataDirectory := ExampleDataDir + '/dldataset'

DLDatasetFileName := DataDirectory + '/dl_dataset.hdict'

DLPreprocessParamFileName := DataDirectory + '/dl_preprocess_param.hdict'

* Output path of the best evaluated model.

BestModelBaseName := ExampleDataDir + '/best_dl_model_classification'

* Output path for the final trained model.

FinalModelBaseName := ExampleDataDir + '/final_dl_model_classification'

*

* *******************************

* ** Set basic parameters ***

* *******************************

* The following parameters need to be adapted frequently.

*

* Model parameters.

* Batch size. In case this example is run on a GPU,

* you can set BatchSize to 'maximum' and it will be

* determined automatically.

BatchSize := 2

* Initial learning rate.

InitialLearningRate := 0.0001

* Momentum should be high if batch size is small.

Momentum := 0.9

*

* Parameters used by train_dl_model.

* Number of epochs to train the model.

NumEpochs := 20

* Evaluation interval (in epochs) to calculate evaluation measures on the validation split.

EvaluationIntervalEpochs := 1

* Change the learning rate in the following epochs, e.g. [4, 8, 12].

* Set it to [] if the learning rate should not be changed.

ChangeLearningRateEpochs := []

* Change the learning rate to the following values, e.g. InitialLearningRate * [0.1, 0.01, 0.001].

* The tuple has to be of the same length as ChangeLearningRateEpochs.

ChangeLearningRateValues := InitialLearningRate * [0.1,0.01,0.001]

*

* **********************************

* ** Set advanced parameters ***

* **********************************

* The following parameters might need to be changed in rare cases.

*

* Model parameter.

* Set the weight prior.

WeightPrior := 0.0005

*

* Parameters used by train_dl_model.

* Control whether training progress is displayed (true/false).

EnableDisplay := true

* Set a random seed for training.

RandomSeed := 42

set_system ('seed_rand', RandomSeed)

*

* In order to obtain nearly deterministic training results on the same GPU

* (system, driver, cuda-version) you could specify "cudnn_deterministic" as

* "true". Note, that this could slow down training a bit.

* set_system ('cudnn_deterministic', 'true')

*

* Set generic parameters of create_dl_train_param.

* Please see the documentation of create_dl_train_param for an overview on all available parameters.

GenParamName := []

GenParamValue := []

*

* Augmentation parameters.

* If samples should be augmented during training, create the dict required by augment_dl_samples.

* Here, we set the augmentation percentage and method.

create_dict (AugmentationParam)

* Percentage of samples to be augmented.

set_dict_tuple (AugmentationParam, 'augmentation_percentage', 50)

* Mirror images along row and column.

set_dict_tuple (AugmentationParam, 'mirror', 'rc')

GenParamName := [GenParamName,'augment']

GenParamValue := [GenParamValue,AugmentationParam]

*

* Change strategies.

* It is possible to change model parameters during training.

* Here, we change the learning rate if specified above.

if (|ChangeLearningRateEpochs| > 0)

create_dict (ChangeStrategy)

* Specify the model parameter to be changed, here the learning rate.

set_dict_tuple (ChangeStrategy, 'model_param', 'learning_rate')

* Start the parameter value at 'initial_value'.

set_dict_tuple (ChangeStrategy, 'initial_value', InitialLearningRate)

* Reduce the learning rate in the following epochs.

set_dict_tuple (ChangeStrategy, 'epochs', ChangeLearningRateEpochs)

* Reduce the learning rate to the following values.

set_dict_tuple (ChangeStrategy, 'values', ChangeLearningRateValues)

* Collect all change strategies as input.

GenParamName := [GenParamName,'change']

GenParamValue := [GenParamValue,ChangeStrategy]

endif

*

* Serialization strategies.

* There are several options for saving intermediate models to disk (see create_dl_train_param).

* Here, we save the best and the final model to the paths set above.

create_dict (SerializationStrategy)

set_dict_tuple (SerializationStrategy, 'type', 'best')

set_dict_tuple (SerializationStrategy, 'basename', BestModelBaseName)

GenParamName := [GenParamName,'serialize']

GenParamValue := [GenParamValue,SerializationStrategy]

create_dict (SerializationStrategy)

set_dict_tuple (SerializationStrategy, 'type', 'final')

set_dict_tuple (SerializationStrategy, 'basename', FinalModelBaseName)

GenParamName := [GenParamName,'serialize']

GenParamValue := [GenParamValue,SerializationStrategy]

*

* Display parameters.

* In this example, 20% of the training split are selected to display the

* evaluation measure for the reduced training split during the training. A lower percentage

* helps to speed up the evaluation/training. If the evaluation measure for the training split

* shall not be displayed, set this value to 0 (default).

SelectedPercentageTrainSamples := 20

* Set the x-axis argument of the training plots.

XAxisLabel := 'epochs'

create_dict (DisplayParam)

set_dict_tuple (DisplayParam, 'selected_percentage_train_samples', SelectedPercentageTrainSamples)

set_dict_tuple (DisplayParam, 'x_axis_label', XAxisLabel)

GenParamName := [GenParamName,'display']

GenParamValue := [GenParamValue,DisplayParam]

*

*

* *****************************************

* ** Read initial model and dataset ***

* *****************************************

*

* Check if all necessary files exist.

check_data_availability (ExampleDataDir, DLDatasetFileName, DLPreprocessParamFileName)

*

* Read in the model that was initialized during preprocessing.

read_dl_model (ModelFileName, DLModelHandle)

stop()

*

* Read in the preprocessed DLDataset file.

read_dict (DLDatasetFileName, [], [], DLDataset)

*

* *******************************

* ** Set model parameters ***

* *******************************

*

* Set model hyper-parameters as specified in the settings above.

set_dl_model_param (DLModelHandle, 'learning_rate', InitialLearningRate)

set_dl_model_param (DLModelHandle, 'momentum', Momentum)

* Set the class names for the model.

get_dict_tuple (DLDataset, 'class_names', ClassNames)

set_dl_model_param (DLModelHandle, 'class_names', ClassNames)

* Get image dimensions from preprocess parameters and set them for the model.

read_dict (DLPreprocessParamFileName, [], [], DLPreprocessParam)

get_dict_tuple (DLPreprocessParam, 'image_width', ImageWidth)

get_dict_tuple (DLPreprocessParam, 'image_height', ImageHeight)

get_dict_tuple (DLPreprocessParam, 'image_num_channels', ImageNumChannels)

set_dl_model_param (DLModelHandle, 'image_dimensions', [ImageWidth,ImageHeight,ImageNumChannels])

if (BatchSize == 'maximum' and DLDeviceType == 'gpu')

set_dl_model_param_max_gpu_batch_size (DLModelHandle, 100)

else

set_dl_model_param (DLModelHandle, 'batch_size', BatchSize)

endif

* When the batch size is determined, set the device.

set_dl_model_param (DLModelHandle, 'device', DLDevice)

if (|WeightPrior| > 0)

set_dl_model_param (DLModelHandle, 'weight_prior', WeightPrior)

endif

* Set class weights to counteract unbalanced training data. In this example

* we choose the default values, since the classes are evenly distributed in the dataset.

tuple_gen_const (|ClassNames|, 1.0, ClassWeights)

set_dl_model_param (DLModelHandle, 'class_weights', ClassWeights)

*

* **************************

* ** Train the model ***

* **************************

*

* Create training parameters.

create_dl_train_param (DLModelHandle, NumEpochs, EvaluationIntervalEpochs, EnableDisplay, RandomSeed, GenParamName, GenParamValue, TrainParam)

*

* Start the training by calling the training operator

* train_dl_model_batch () within the following procedure.

train_dl_model (DLDataset, DLModelHandle, TrainParam, 0, TrainResults, TrainInfos, EvaluationInfos)

*

* Stop after the training has finished, before closing the windows.

dev_disp_text ('Press Run (F5) to continue', 'window', 'bottom', 'right', 'black', [], [])

stop ()

*

* Close training windows.

dev_close_window ()

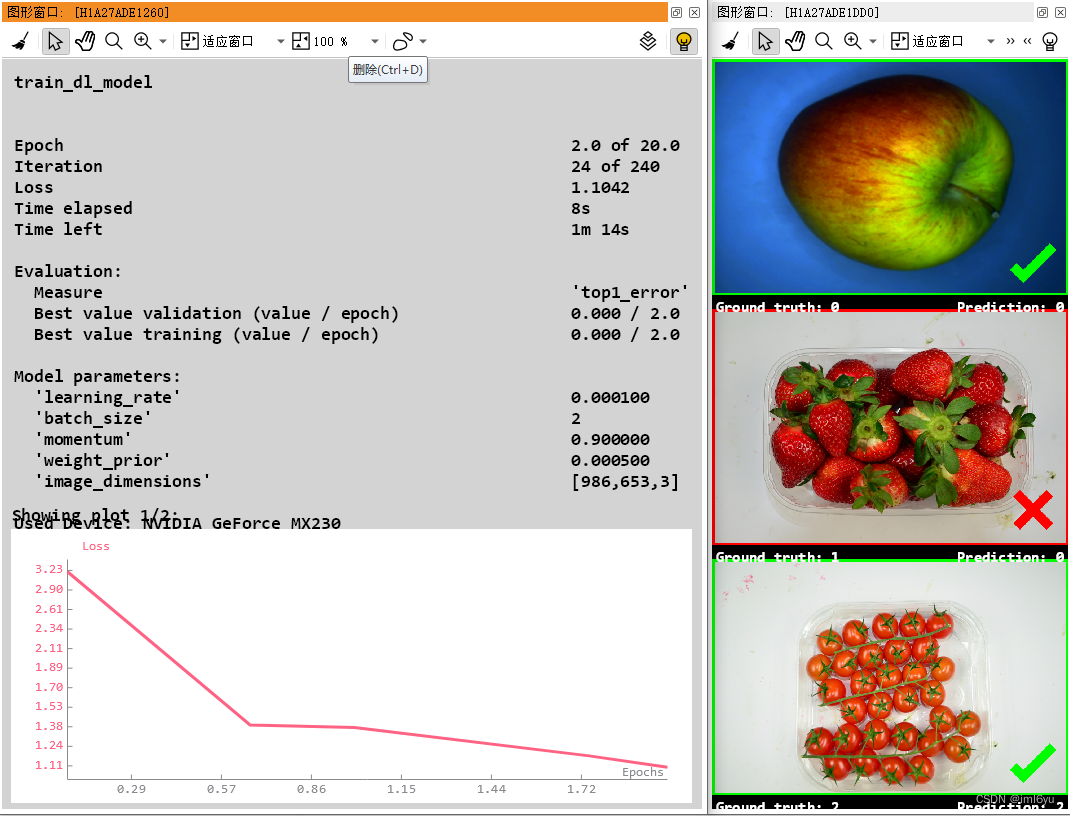

The result of the training run is shown in the figure.
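The training script leaves ChangeLearningRateEpochs empty, so the change strategy is skipped and the learning rate stays at InitialLearningRate for all 20 epochs. The Python sketch below shows what a step schedule such as the one in the script comments (epochs [4, 8, 12]) would look like. It assumes the change epochs are sorted in ascending order and that a new value applies from its epoch onwards. The function `learning_rate_at` is hypothetical and is not a HALCON procedure.

```python
def learning_rate_at(epoch, initial_learning_rate, change_epochs, change_values):
    """Return the learning rate in effect at `epoch` for a step schedule."""
    # No change epochs: the schedule is skipped, as in the script's if-condition.
    if not change_epochs:
        return initial_learning_rate
    if len(change_epochs) != len(change_values):
        raise ValueError("change_epochs and change_values must have the same length")
    rate = initial_learning_rate
    # Assumes change_epochs is sorted ascending; the last matching entry wins.
    for change_epoch, value in zip(change_epochs, change_values):
        if epoch >= change_epoch:
            rate = value
    return rate


if __name__ == "__main__":
    initial = 0.0001
    values = [initial * factor for factor in (0.1, 0.01, 0.001)]
    # Settings used in this document: no change epochs, constant rate.
    print(learning_rate_at(10, initial, [], values))
    # Example from the script comments: reduce at epochs 4, 8 and 12.
    for epoch in (0, 4, 8, 12):
        print(epoch, learning_rate_at(epoch, initial, [4, 8, 12], values))
```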

Model evaluation

Modify classify_pill_defects_deep_learning_3_evaluate.hdev.

The code is as follows:

dev_update_off ()

*

* The evaluation can be performed on GPU or CPU.

* See the respective system requirements in the Installation Guide.

* If possible a GPU is used in this example.

* In case you explicitly wish to run this example on the CPU,

* choose the CPU device instead.

query_available_dl_devices (['runtime','runtime'], ['gpu','cpu'], DLDeviceHandles)

if (|DLDeviceHandles| == 0)

throw ('No supported device found to continue this example.')

endif

* Due to the filter used in query_available_dl_devices, the first device is a GPU, if available.

DLDevice := DLDeviceHandles[0]

*

*

* ******************************************************

* ** Set paths and parameters for the evaluation ***

* ******************************************************

*

* Paths.

*

* Project directory for any outputs written by HALCON.

ExampleDataDir := 'defects_data'

* File path of the preprocessed DLDataset.

* Note: Adapt DataDirectory after preprocessing with another image size.

DataDirectory := ExampleDataDir + '/dldataset'

DLDatasetFileName := DataDirectory + '/dl_dataset.hdict'

*

* Path of the retrained classification model.

RetrainedModelFileName := ExampleDataDir + '/best_dl_model_classification.hdl'

*

* Evaluation parameters.

*

* Evaluation measures.

ClassificationMeasures := ['top1_error','precision','recall','f_score','absolute_confusion_matrix','relative_confusion_matrix']

* Batch size used during evaluation.

BatchSize := 2

*

* **********************************

* ** Evaluation of the model ***

* **********************************

*

* Check if all necessary files exist.

check_data_availability (ExampleDataDir, DLDatasetFileName, RetrainedModelFileName, false)

*

* Read the retrained model.

read_dl_model (RetrainedModelFileName, DLModelHandle)

*

set_dl_model_param (DLModelHandle, 'batch_size', BatchSize)

*

set_dl_model_param (DLModelHandle, 'device', DLDevice)

*

* Read the preprocessed DLDataset file.

read_dict (DLDatasetFileName, [], [], DLDataset)

*

* Set parameters for evaluation.

create_dict (GenParamEval)

set_dict_tuple (GenParamEval, 'measures', ClassificationMeasures)

set_dict_tuple (GenParamEval, 'show_progress', 'true')

stop()

*

* Evaluate the retrained model.

evaluate_dl_model (DLDataset, DLModelHandle, 'split', 'test', GenParamEval, EvaluationResult, EvalParams)

*

*

* ******************************

* ** Display the results ***

* ******************************

*

* Display measures.

create_dict (WindowHandleDict)

create_dict (GenParamEvalDisplay)

set_dict_tuple (GenParamEvalDisplay, 'display_mode', ['measures','pie_charts_precision','pie_charts_recall','absolute_confusion_matrix'])

dev_display_classification_evaluation (EvaluationResult, EvalParams, GenParamEvalDisplay, WindowHandleDict)

dev_disp_text ('Press F5 to continue', 'window', 'bottom', 'right', 'black', [], [])

*

stop ()

dev_close_window_dict (WindowHandleDict)

*

* Call interactive confusion matrix.

dev_display_dl_interactive_confusion_matrix (DLDataset, EvaluationResult, [])

*

* Close window handles.

dev_close_window_dict (WindowHandleDict)

*

*

* **************************************

* ** Visual inspection of images ***

* **************************************

*

* To inspect some examples more precisely,

* calculate and display a heatmap.

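* Here, we choose the samples

* labeled and classified as 'contamination'.

* Note: 'contamination' is a class name of the pill dataset. For another

* dataset, set both names below to a class name that exists in that dataset.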

*

SelectedHeatmapGTClassName := 'contamination'

SelectedHeatmapInfClassName := 'contamination'

*

* Get information from DLDataset and EvaluationResult.

get_dict_tuple (EvaluationResult, 'evaluated_samples', EvaluatedSamples)

get_dict_tuple (EvaluatedSamples, 'image_ids', ImageIDs)

get_dict_tuple (EvaluatedSamples, 'image_label_ids', ImageLabelIDs)

get_dict_tuple (EvaluatedSamples, 'top1_predictions', Predictions)

get_dict_tuple (DLDataset, 'class_names', ClassNames)

get_dict_tuple (DLDataset, 'class_ids', ClassIDs)

* Get class IDs for selected classes.

PredictedClassID := ClassIDs[find(ClassNames,SelectedHeatmapInfClassName)]

GroundTruthClassID := ClassIDs[find(ClassNames,SelectedHeatmapGTClassName)]

* Get tuple position of selected classes.

GTIndices := find(ImageLabelIDs [==] GroundTruthClassID,1)

PredictionIndices := find(Predictions [==] PredictedClassID,1)

* Get image IDs for selected combination.

ImageIDsSelected := []

if (PredictionIndices != -1 and PredictionIndices != [])

ImageIDsSelected := ImageIDs[intersection(GTIndices,PredictionIndices)]

endif

*

* We offer two heatmap options:

* 1) a fast heatmap operator supporting the method 'grad_cam'

* 2) a confidence-based approach implemented as procedure.

* In this example, set HeatmapMethod to 'heatmap_grad_cam'

* or 'heatmap_confidence_based' to switch between the heatmap options.

HeatmapMethod := 'heatmap_grad_cam'

*

* Set the target class ID or [] to show the heatmap

* for the classified class.

TargetClassID := []

create_dict (HeatmapParam)

if (HeatmapMethod == 'heatmap_grad_cam')

* Set generic parameters for operator heatmap.

set_dict_tuple (HeatmapParam, 'use_conv_only', 'false')

set_dict_tuple (HeatmapParam, 'scaling', 'scale_after_relu')

elseif (HeatmapMethod == 'heatmap_confidence_based')

* Set target class ID.

set_dict_tuple (HeatmapParam, 'target_class_id', TargetClassID)

* Set the feature size and the sampling size for the

* confidence based approach.

FeatureSize := 30

SamplingSize := 10

set_dict_tuple (HeatmapParam, 'feature_size', FeatureSize)

set_dict_tuple (HeatmapParam, 'sampling_size', SamplingSize)

else

throw ('Unsupported heatmap method.')

endif

*

* Heatmaps are displayed in sequence, hence set batch size to 1.

set_dl_model_param (DLModelHandle, 'batch_size', 1)

*

* Visualize heatmaps for selected samples.

create_dict (WindowHandleDict)

get_dict_tuple (DLDataset, 'samples', DatasetSamples)

for Index := 0 to min([|ImageIDsSelected| - 1,10]) by 1

* Select the corresponding DLSample.

find_dl_samples (DatasetSamples, 'image_id', ImageIDsSelected[Index], 'match', DLSampleIndex)

read_dl_samples (DLDataset, DLSampleIndex, DLSample)

*

if (HeatmapMethod == 'heatmap_grad_cam')

gen_dl_model_heatmap (DLModelHandle, DLSample, 'grad_cam', TargetClassID, HeatmapParam, DLResult)

else

* Create temporary DLResult for display.

create_dict (DLResult)

gen_dl_model_classification_heatmap (DLModelHandle, DLSample, DLResult, HeatmapParam)

endif

dev_display_dl_data (DLSample, DLResult, DLDataset, HeatmapMethod, [], WindowHandleDict)

dev_disp_text ('Press F5 to continue.', 'window', 'bottom', 'right', 'black', [], [])

stop ()

endfor

*

* Optimize the memory consumption.

set_dl_model_param (DLModelHandle, 'optimize_for_inference', 'true')

write_dl_model (DLModelHandle, RetrainedModelFileName)

* Close the windows.

dev_close_window_dict (WindowHandleDict)

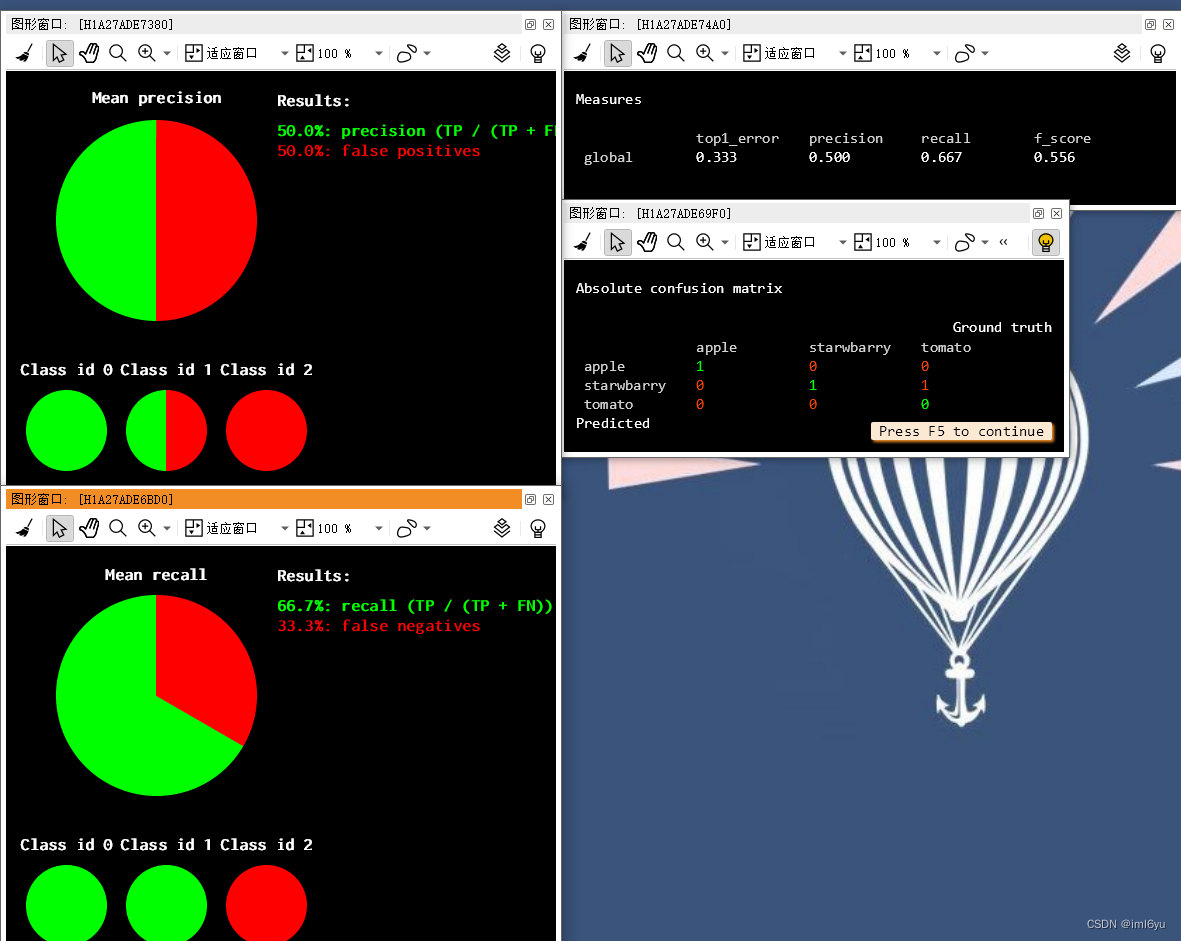

The result of the evaluation run is shown in the figure.
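The evaluation script requests the measures top1_error, precision, recall and f_score. As a rough guide to what these numbers mean, the Python sketch below computes them per class from ground-truth and predicted class IDs using the textbook definitions. How HALCON averages or reports them may differ, and the function name and the sample IDs are hypothetical.

```python
def classification_measures(ground_truth, predictions, class_ids):
    """Compute the top-1 error plus per-class precision, recall and F-score."""
    if not ground_truth or len(ground_truth) != len(predictions):
        raise ValueError("need two non-empty lists of equal length")
    pairs = list(zip(ground_truth, predictions))
    # Top-1 error: fraction of samples whose predicted class is wrong.
    top1_error = sum(1 for gt, pred in pairs if gt != pred) / len(pairs)
    per_class = {}
    for class_id in class_ids:
        tp = sum(1 for gt, pred in pairs if gt == class_id and pred == class_id)
        fp = sum(1 for gt, pred in pairs if gt != class_id and pred == class_id)
        fn = sum(1 for gt, pred in pairs if gt == class_id and pred != class_id)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        total = precision + recall
        f_score = 2 * precision * recall / total if total else 0.0
        per_class[class_id] = {"precision": precision, "recall": recall, "f_score": f_score}
    return top1_error, per_class


if __name__ == "__main__":
    # Four made-up samples of two classes; one sample of class 0 is misclassified.
    error, measures = classification_measures([0, 0, 1, 1], [0, 1, 1, 1], [0, 1])
    print(error)
    print(measures)
```

In this made-up case class 0 has perfect precision but only half of its samples are found, which is the kind of imbalance the confusion matrix in the script makes visible.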

Inference test

The code is as follows:

*

* This example is part of a series of examples, which summarizes

* the workflow for DL classification. It uses the MVTec pill dataset.

* The four parts are:

* 1. Dataset preprocessing.

* 2. Training of the model.

* 3. Evaluation of the trained model.

* 4. Inference on new images.

*

* This example covers part 4: 'Inference on new images'.

*

* It explains how to apply a trained model on new images and shows

* an application based on the MVTec pill dataset.

*

* Please note: This script requires the outputs of part 1 and part 2 of this

* example series (the preprocessing parameters and the trained model).

*

dev_update_off ()

*

* Inference can be done on any deep learning device available.

* See the respective system requirements in the Installation Guide.

* If possible a GPU is used in this example.

* In case you explicitly wish to run this example on the CPU,

* choose the CPU device instead.

query_available_dl_devices (['runtime','runtime'], ['gpu','cpu'], DLDeviceHandles)

if (|DLDeviceHandles| == 0)

throw ('No supported device found to continue this example.')

endif

* Due to the filter used in query_available_dl_devices, the first device is a GPU, if available.

DLDevice := DLDeviceHandles[0]

* *************************************************

* ** Set paths and parameters for inference ***

* *************************************************

*

* We will demonstrate the inference on the example images.

* In a real application newly incoming images (not used for training or evaluation)

* would be used here.

*

* Set the paths of the retrained model and the corresponding preprocessing parameters.

* Example data folder containing the outputs of the previous example series.

ExampleDataDir := 'defects_data'

DataDirectory := ExampleDataDir + '/dldataset'

PreprocessParamFileName := DataDirectory + '/dl_preprocess_param.hdict'

* File name of the finetuned classification model.

RetrainedModelFileName := ExampleDataDir + '/best_dl_model_classification.hdl'

*

* Batch Size used during inference.

BatchSizeInference := 1

*

* ********************

* ** Inference ***

* ********************

*

* Check if all necessary files exist.

check_data_availability (ExampleDataDir, PreprocessParamFileName, RetrainedModelFileName, false)

*

* Read in the retrained model.

read_dl_model (RetrainedModelFileName, DLModelHandle)

*

* Set the batch size.

set_dl_model_param (DLModelHandle, 'batch_size', BatchSizeInference)

*

* Initialize the model for inference.

set_dl_model_param (DLModelHandle, 'device', DLDevice)

*

* Get the class names and IDs from the model.

get_dl_model_param (DLModelHandle, 'class_names', ClassNames)

get_dl_model_param (DLModelHandle, 'class_ids', ClassIDs)

*

* Get the parameters used for preprocessing.

read_dict (PreprocessParamFileName, [], [], DLPreprocessParam)

*

* Create window dictionary for displaying results.

create_dict (WindowHandleDict)

* Create dictionary with dataset parameters necessary for displaying.

create_dict (DLDataInfo)

set_dict_tuple (DLDataInfo, 'class_names', ClassNames)

set_dict_tuple (DLDataInfo, 'class_ids', ClassIDs)

* Set generic parameters for visualization.

create_dict (GenParam)

set_dict_tuple (GenParam, 'scale_windows', 1.1)

*

* List the files the model should be applied to (e.g., using list_image_files).

* For this example, we select some images randomly.

ImageDir := 'D:/test/halcon/images/food'

get_example_inference_images (ImageDir, ImageFiles)

*

* Loop over all images in batches of size BatchSizeInference for inference.

for BatchIndex := 0 to floor(|ImageFiles| / real(BatchSizeInference)) - 1 by 1

*

* Get the paths to the images of the batch.

Batch := ImageFiles[BatchIndex * BatchSizeInference:(BatchIndex + 1) * BatchSizeInference - 1]

* Read the images of the batch.

read_image (ImageBatch, Batch)

*

* Generate the DLSampleBatch.

gen_dl_samples_from_images (ImageBatch, DLSampleBatch)

*

* Preprocess the DLSampleBatch.

preprocess_dl_samples (DLSampleBatch, DLPreprocessParam)

*

* Apply the DL model on the DLSampleBatch.

apply_dl_model (DLModelHandle, DLSampleBatch, [], DLResultBatch)

*

* Postprocessing and visualization.

* Loop over each sample in the batch.

for SampleIndex := 0 to BatchSizeInference - 1 by 1

*

* Get sample and according results.

DLSample := DLSampleBatch[SampleIndex]

DLResult := DLResultBatch[SampleIndex]

*

* Display results and text.

dev_display_dl_data (DLSample, DLResult, DLDataInfo, 'classification_result', GenParam, WindowHandleDict)

get_dict_tuple (WindowHandleDict, 'classification_result', WindowHandles)

dev_set_window (WindowHandles[0])

set_display_font (WindowHandles[0], 16, 'mono', 'true', 'false')

dev_disp_text ('Press Run (F5) to continue', 'window', 'bottom', 'right', 'black', [], [])

stop ()

endfor

endfor

*

* Close windows used for visualization.

dev_close_window_dict (WindowHandleDict)

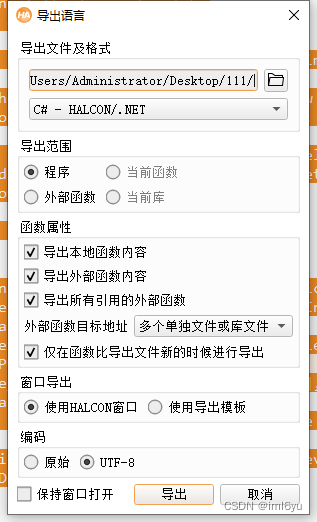
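The inference loop runs floor(|ImageFiles| / BatchSizeInference) times, so only complete batches are processed. With BatchSizeInference := 1 every image is used, but with a larger batch size any images left over at the end are skipped. The Python sketch below mirrors that index arithmetic. The function `full_batches` is hypothetical.

```python
def full_batches(image_files, batch_size):
    """Split a list of files into complete batches and return the leftover files."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    num_batches = len(image_files) // batch_size
    batches = [
        image_files[index * batch_size:(index + 1) * batch_size]
        for index in range(num_batches)
    ]
    # Files that do not fill a whole batch are never passed to the model.
    leftover = image_files[num_batches * batch_size:]
    return batches, leftover


if __name__ == "__main__":
    files = ["a.png", "b.png", "c.png", "d.png", "e.png"]
    print(full_batches(files, 2))
    print(full_batches(files, 1))
```

If every image has to be classified with a batch size above 1, the leftover list needs a final, smaller pass of its own.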

Exporting the language file (C#)

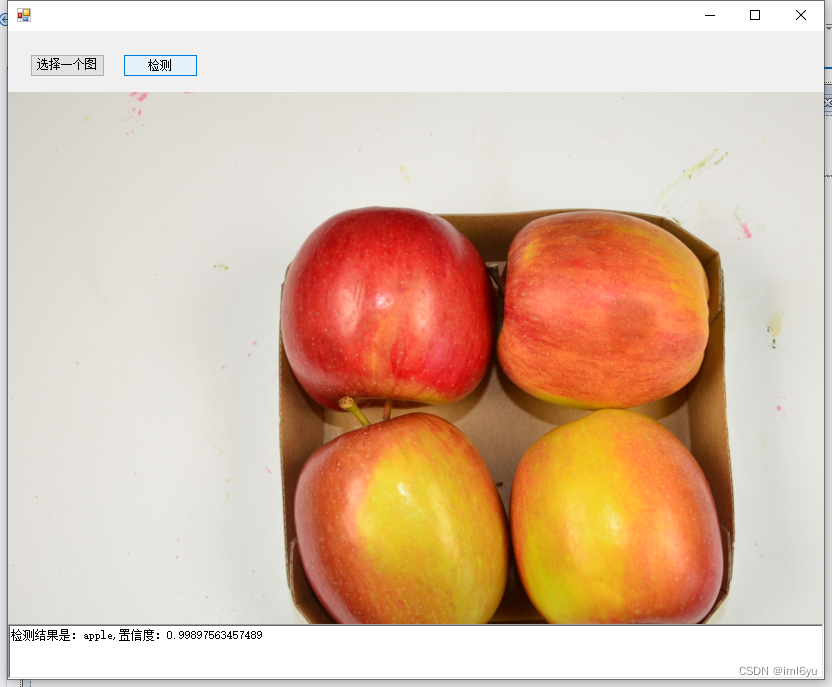

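Using the model for recognition in a C# client

Creating the project

- Create a new desktop (Windows Forms) project

- Add the exported .cs file to the project

- Create the form

The form is laid out as follows

The form code is as follows: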

using HalconDotNet;

using System;

using System.Collections.Generic;

using System.ComponentModel;

using System.Data;

using System.Drawing;

using System.Linq;

using System.Text;

using System.Threading.Tasks;

using System.Windows.Forms;

namespace WindowsForms水果分类

{

public partial class Form1 : Form

{

private HTuple hv_DLModelHandle;

private HTuple hv_DLPreprocessParam;

private HTuple hv_WindowHandleDict;

private HTuple hv_ClassNames;

private HTuple hv_ClassIDs;

private HTuple hv_DLDataInfo;

private HTuple hv_GenParam;

private HDevelopExport hDevelop = new HDevelopExport();

private HTuple hv_DLSampleBatch;

private HTuple hv_DLResultBatch;

private HTuple hv_DLDeviceHandles;

private HTuple hv_DLDevice;

public Form1()

{

InitializeComponent();

// Assign the fields declared above. Redeclaring them here with a type

// would create locals that shadow the fields and leave the fields null.

hv_DLModelHandle = new HTuple();

hv_DLPreprocessParam = new HTuple();

hv_WindowHandleDict = new HTuple();

hv_ClassNames = new HTuple();

hv_ClassIDs = new HTuple();

hv_DLDataInfo = new HTuple();

hv_GenParam = new HTuple();

hv_DLSampleBatch = new HTuple();

hv_DLResultBatch = new HTuple();

this.Load += Form1_Load;

}

private void Form1_Load(object sender, EventArgs e)

{

openFileDialog1.FileOk += OpenFileDialog1_FileOk;

}

private void OpenFileDialog1_FileOk(object sender, CancelEventArgs e)

{

pictureBox1.Image = new Bitmap(openFileDialog1.FileName);

}

private void button1_Click(object sender, EventArgs e)

{

openFileDialog1.ShowDialog();

}

private void button2_Click(object sender, EventArgs e)

{

if (!string.IsNullOrEmpty(openFileDialog1.FileName))

action(openFileDialog1.FileName);

}

private void action(string filename)

{

// Local iconic variables

HObject ho_ImageBatch = null;

// Local control variables

// Initialize local and output iconic variables

HOperatorSet.GenEmptyObj(out ho_ImageBatch);

try

{

hv_DLDeviceHandles?.Dispose();

HOperatorSet.QueryAvailableDlDevices((new HTuple("runtime")).TupleConcat("runtime"),

(new HTuple("gpu")).TupleConcat("cpu"), out hv_DLDeviceHandles);

if ((int)(new HTuple((new HTuple(hv_DLDeviceHandles.TupleLength())).TupleEqual(

0))) != 0)

{

throw new HalconException("No supported device found to continue this example.");

}

//Due to the filter used in query_available_dl_devices, the first device is a GPU, if available.

hv_DLDevice?.Dispose();

using (HDevDisposeHelper dh = new HDevDisposeHelper())

{

hv_DLDevice = hv_DLDeviceHandles.TupleSelect(

0);

}

//

HOperatorSet.ReadDlModel("D:\\test\\halcon\\Classification\\defects_data\\final_dl_model_classification.hdl", out hv_DLModelHandle);

//

//Set the batch size.

HOperatorSet.SetDlModelParam(hv_DLModelHandle, "batch_size", 1);

//

//Initialize the model for inference.

HOperatorSet.SetDlModelParam(hv_DLModelHandle, "device", hv_DLDevice);

//

//Get the class names and IDs from the model.

hv_ClassNames?.Dispose();

HOperatorSet.GetDlModelParam(hv_DLModelHandle, "class_names", out hv_ClassNames);

hv_ClassIDs?.Dispose();

HOperatorSet.GetDlModelParam(hv_DLModelHandle, "class_ids", out hv_ClassIDs);

//

//Get the parameters used for preprocessing.

hv_DLPreprocessParam?.Dispose();

HOperatorSet.ReadDict("D:\\test\\halcon\\Classification\\defects_data\\dldataset\\dl_preprocess_param.hdict", new HTuple(), new HTuple(),

out hv_DLPreprocessParam);

//

//Create window dictionary for displaying results.

hv_WindowHandleDict?.Dispose();

HOperatorSet.CreateDict(out hv_WindowHandleDict);

//Create dictionary with dataset parameters necessary for displaying.

hv_DLDataInfo?.Dispose();

HOperatorSet.CreateDict(out hv_DLDataInfo);

HOperatorSet.SetDictTuple(hv_DLDataInfo, "class_names", hv_ClassNames);

HOperatorSet.SetDictTuple(hv_DLDataInfo, "class_ids", hv_ClassIDs);

//Set generic parameters for visualization.

hv_GenParam?.Dispose();

HOperatorSet.CreateDict(out hv_GenParam);

HOperatorSet.SetDictTuple(hv_GenParam, "scale_windows", 1.1);

ho_ImageBatch?.Dispose();

HOperatorSet.ReadImage(out ho_ImageBatch, filename);

//

//Generate the DLSampleBatch.

hv_DLSampleBatch?.Dispose();

hDevelop.gen_dl_samples_from_images(ho_ImageBatch, out hv_DLSampleBatch);

//

//Preprocess the DLSampleBatch.

hDevelop.preprocess_dl_samples(hv_DLSampleBatch, hv_DLPreprocessParam);

//

//Apply the DL model on the DLSampleBatch.

hv_DLResultBatch?.Dispose();

HOperatorSet.ApplyDlModel(hv_DLModelHandle, hv_DLSampleBatch, new HTuple(),

out hv_DLResultBatch);

HOperatorSet.GetDictTuple(hv_DLResultBatch, "classification_class_names" , out HTuple hv_row);

HOperatorSet.GetDictTuple(hv_DLResultBatch, "classification_confidences", out HTuple hv_conf);

richTextBox1.AppendText($"Result: {hv_row.SArr.FirstOrDefault()}, confidence: {hv_conf.DArr.FirstOrDefault()}\r\n");

}

catch (HalconException HDevExpDefaultException)

{

ho_ImageBatch?.Dispose();

hv_DLModelHandle?.Dispose();

hv_ClassNames?.Dispose();

hv_ClassIDs?.Dispose();

hv_DLPreprocessParam?.Dispose();

hv_WindowHandleDict?.Dispose();

hv_DLDataInfo?.Dispose();

hv_GenParam?.Dispose();

hv_DLSampleBatch?.Dispose();

hv_DLResultBatch?.Dispose();

throw;

}

ho_ImageBatch?.Dispose();

hv_DLModelHandle?.Dispose();

hv_ClassNames?.Dispose();

hv_ClassIDs?.Dispose();

hv_DLPreprocessParam?.Dispose();

hv_WindowHandleDict?.Dispose();

hv_DLDataInfo?.Dispose();

hv_GenParam?.Dispose();

hv_DLSampleBatch?.Dispose();

hv_DLResultBatch?.Dispose();

}

}

}
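The C# client reads the class names and confidences from the result and prints the first entry of each as the detection result. The Python sketch below shows the same selection step without relying on the order of the entries: it picks the entry with the highest confidence and falls back to a placeholder when the result is empty. The function name, the output wording and the class names are hypothetical.

```python
def format_top1(class_names, confidences):
    """Format the most confident class, or a placeholder if there is no result."""
    if not class_names or len(class_names) != len(confidences):
        return "No result"
    # Pick the index of the largest confidence instead of assuming it is first.
    best = max(range(len(confidences)), key=lambda index: confidences[index])
    return f"Result: {class_names[best]}, confidence: {confidences[best]:.3f}"


if __name__ == "__main__":
    # Made-up class names and confidences for illustration only.
    print(format_top1(["apple", "peach"], [0.1, 0.9]))
    print(format_top1([], []))
```

Handling the empty case explicitly avoids printing a blank class name with a confidence of 0 when the model returns nothing.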