Kubernetes cluster types

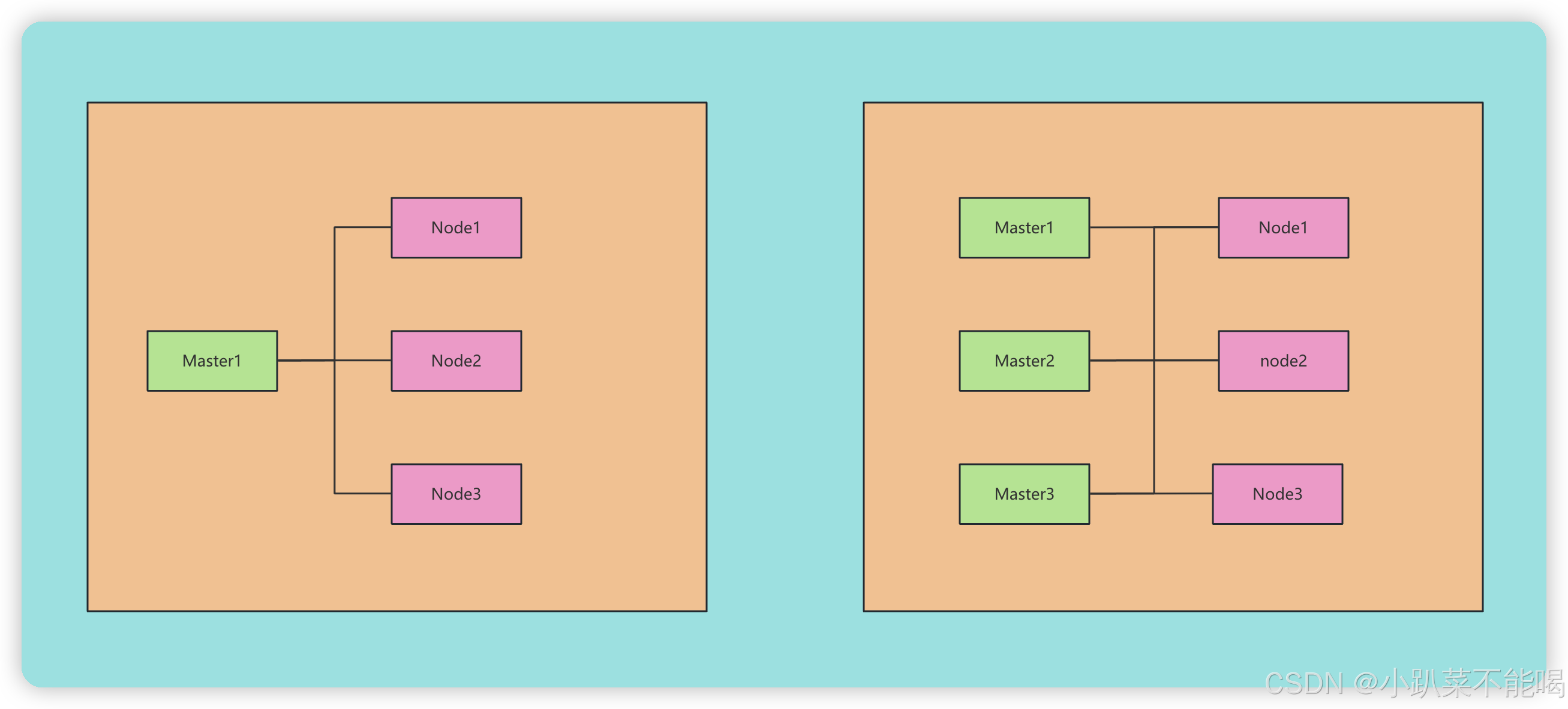

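Kubernetes clusters broadly fall into two types: one master with multiple workers, and multiple masters with multiple workers.

- One master, multiple workers: one Master node and several Node nodes. Simple to set up, but the master is a single point of failure; suited to test environments.

- Multiple masters, multiple workers: several Master nodes and several Node nodes. More work to set up, but much more robust; suited to production environments.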

Kubernetes installation methods

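Kubernetes can be deployed in several ways. The mainstream options today are kubeadm, minikube, and binary packages.

- kubeadm: a tool for quickly setting up a Kubernetes cluster

- minikube: a tool for quickly setting up a single-node Kubernetes

- Binary packages: download each component's binary package from the official site and install them one by one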

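Kubernetes environment installation: server configuration

I used three real cloud servers rather than virtual machines; a VM-based setup needs additional configuration. If a server already has Docker installed and the version is too new, uninstall it and install a stable version.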

| Role | IP | Components |
| --- | --- | --- |
| master | 123.249.4.17 | docker, kubectl, kubeadm, kubelet |
| Node1 | 120.46.9.98 | docker, kubectl, kubeadm, kubelet |
| Node2 | 120.46.51.145 | docker, kubectl, kubeadm, kubelet |

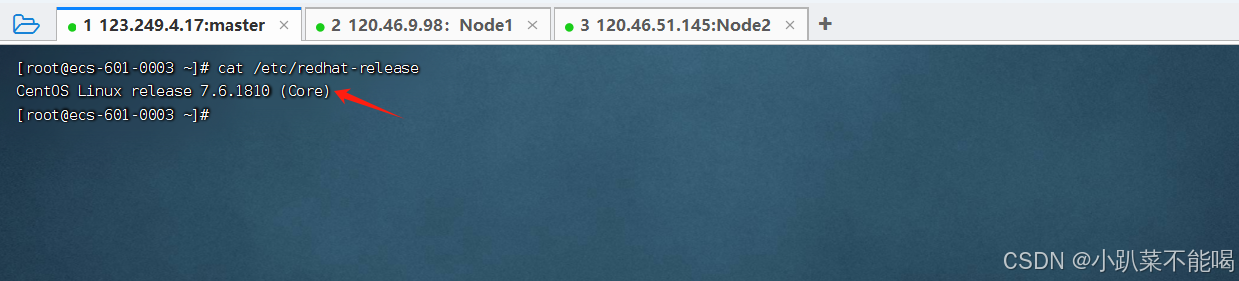

Check the OS version

This Kubernetes installation requires CentOS 7.5 or later.

cat /etc/redhat-release

Hostname resolution

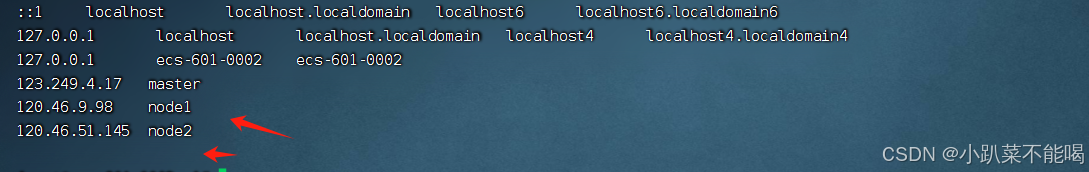

vim /etc/hosts

123.249.4.17 master

120.46.9.98 node1

120.46.51.145 node2

Note: leave an empty line at the end of the file, otherwise the last entry may not take effect
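If you would rather script this than edit the file in vim, something like the sketch below could work. It is only an illustration: the function name add_host_entry is made up, and it simply skips a host name that is already mapped so the step can be re-run safely. Each entry is written with a trailing newline, which also covers the note about the last line.

```shell
#!/usr/bin/env bash
# add_host_entry FILE IP NAME  (hypothetical helper, not part of any tool)
# Appends "IP NAME" to FILE unless a non-comment line already maps NAME.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  # NAME must appear as a whole whitespace-delimited field.
  if grep -Eq "^[^#]*[[:space:]]${name}([[:space:]]|\$)" "$file" 2>/dev/null; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Example, run as root on every server:
#   add_host_entry /etc/hosts 123.249.4.17 master
#   add_host_entry /etc/hosts 120.46.9.98 node1
#   add_host_entry /etc/hosts 120.46.51.145 node2
```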

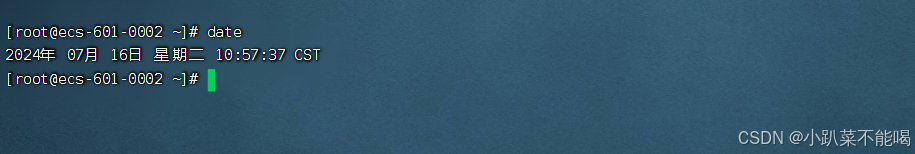

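Time synchronization

Kubernetes requires the clocks on all nodes in the cluster to be precisely in sync. Here the chronyd service is used to sync time from the network.

# Start the chronyd service

systemctl start chronyd

# Enable chronyd at boot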

systemctl enable chronyd

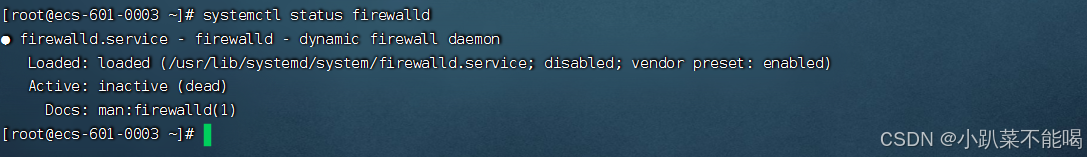

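Disable the iptables and firewalld services

Kubernetes and Docker generate a large number of iptables rules at runtime. To keep the system's own firewall rules from getting mixed up with them, turn the system firewall services off.

# Stop the firewalld service

systemctl stop firewalld

systemctl disable firewalld

# Stop the iptables service

systemctl stop iptables

systemctl disable iptables

Disable SELinux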

SELinux is a security service on Linux. If it is left enabled, all sorts of odd problems can show up while installing the cluster.

vim /etc/selinux/config

# Set the value to disabled

SELINUX=disabled
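The same change can also be scripted instead of made by hand. This is a minimal sketch, assuming GNU sed (as on CentOS); the function name disable_selinux_config is made up for illustration. It rewrites only the SELINUX= line and leaves SELINUXTYPE= alone.

```shell
#!/usr/bin/env bash
# disable_selinux_config [FILE]  (hypothetical helper)
# Rewrites the SELINUX= line in FILE (default /etc/selinux/config) to "disabled".
disable_selinux_config() {
  local file="${1:-/etc/selinux/config}"
  # "SELINUX=" must be followed directly by '=', so SELINUXTYPE= does not match.
  sed -i -E 's/^[[:space:]]*SELINUX=.*/SELINUX=disabled/' "$file"
}

# Example, run as root:
#   disable_selinux_config
#   setenforce 0   # optional: switch to permissive mode until the reboot
```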

# Reboot the system after making the change

Disable the swap partition

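The swap partition is a virtual-memory partition: once physical memory is used up, disk space is used as if it were memory.

Enabling swap hurts system performance, so Kubernetes requires swap to be disabled on every node. If swap really cannot be turned off, that has to be declared explicitly through a parameter during cluster installation.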

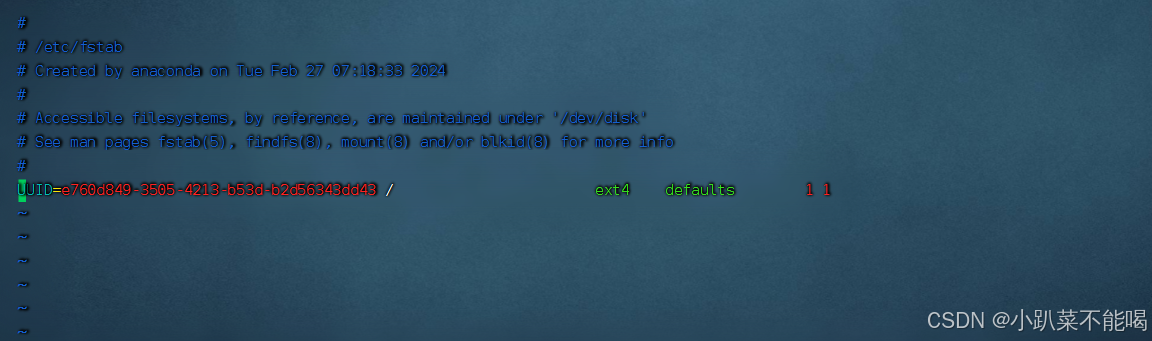

vim /etc/fstab

Comment out the swap entry in the partition list. This change also requires a reboot to take effect.
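For reference, the fstab edit can be scripted roughly as follows. This is a sketch under assumptions: GNU sed, and swap entries that contain the word swap as a whitespace-delimited field. The function name comment_out_swap is made up. Running swapoff -a as well turns swap off right away, without waiting for the reboot.

```shell
#!/usr/bin/env bash
# comment_out_swap [FILE]  (hypothetical helper)
# Prefixes every active swap entry in FILE (default /etc/fstab) with '#'.
comment_out_swap() {
  local file="${1:-/etc/fstab}"
  # Skip lines that are already comments, then comment out lines with a "swap" field.
  sed -i -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$file"
}

# Example, run as root:
#   comment_out_swap
#   swapoff -a   # disable swap immediately for the running system
```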

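Adjust Linux kernel parameters

Kubernetes requires the following kernel parameters to be set.

Edit /etc/sysctl.d/kubernetes.conf (create the file if it does not exist) and add the following settings: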

vim /etc/sysctl.d/kubernetes.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

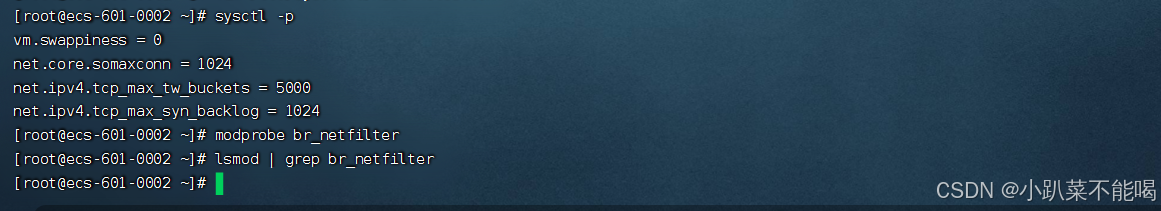

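net.ipv4.ip_forward = 1

Load the bridge netfilter module first, because the net.bridge.* keys only exist once it is loaded

modprobe br_netfilter

Check that the bridge netfilter module loaded successfully

lsmod | grep br_netfilter

Reload the configuration. A bare sysctl -p only reads /etc/sysctl.conf, so pass the file explicitly

sysctl -p /etc/sysctl.d/kubernetes.conf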
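One thing to watch: modprobe only loads the module until the next reboot, and the servers are rebooted later in this guide. A sketch of one way to make it persistent, assuming a systemd-based system where systemd-modules-load reads /etc/modules-load.d/*.conf at boot. The file name k8s.conf and the function name persist_module are my own choices.

```shell
#!/usr/bin/env bash
# persist_module MODULE [DIR]  (hypothetical helper)
# Records MODULE in DIR/k8s.conf (default DIR: /etc/modules-load.d) so that
# it is loaded again at boot. Calling it twice does not duplicate the entry.
persist_module() {
  local module="$1" dir="${2:-/etc/modules-load.d}"
  local file="$dir/k8s.conf"
  mkdir -p "$dir"
  grep -qx "$module" "$file" 2>/dev/null || printf '%s\n' "$module" >> "$file"
}

# Example, run as root:
#   persist_module br_netfilter
```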

Configure IPVS

A Kubernetes Service supports two proxy modes, one based on iptables and one based on IPVS. IPVS generally performs better, but its kernel modules have to be loaded manually before it can be used.

# Install ipset and ipvsadm

yum install ipset ipvsadm -y

# Write the modules to load into a script file

cat <<EOF > /etc/sysconfig/modules/ipvs.modules
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack_ipv4
EOF

# Make the script executable

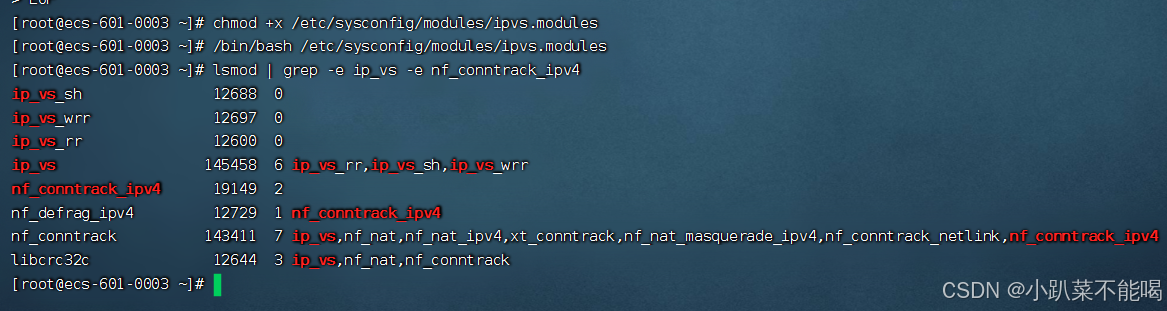

chmod +x /etc/sysconfig/modules/ipvs.modules

# Run the script

/bin/bash /etc/sysconfig/modules/ipvs.modules

# Check that the modules loaded successfully

lsmod | grep -e ip_vs -e nf_conntrack_ipv4
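The nf_conntrack_ipv4 module name is fine on the stock CentOS 7 kernel used here, but from Linux 4.19 onward it was merged into nf_conntrack, so the modprobe fails on newer kernels. If you run these steps on a different kernel, a small check along these lines might help. It is a sketch only, and the function name pick_conntrack_module is made up.

```shell
#!/usr/bin/env bash
# pick_conntrack_module  (hypothetical helper)
# Prints nf_conntrack_ipv4 if the running kernel still ships that module,
# otherwise falls back to nf_conntrack.
pick_conntrack_module() {
  if modinfo nf_conntrack_ipv4 >/dev/null 2>&1; then
    echo nf_conntrack_ipv4
  else
    echo nf_conntrack
  fi
}

# Example, run as root:
#   modprobe -- "$(pick_conntrack_module)"
```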

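With all of the steps above complete, reboot every server.

# Reboot the server

reboot

Kubernetes environment installation

Install Docker (18.06.3.ce-3.el7) and kubeadm, kubelet, and kubectl (v1.17.4) on every server. Unless stated otherwise, run each step on all three machines.

Install Docker

# Switch the package mirror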

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

# List the Docker versions available from the current mirror

yum list docker-ce --showduplicates

# Install a specific docker-ce version. --setopt=obsoletes=0 is required, otherwise yum automatically installs a newer version

yum install --setopt=obsoletes=0 docker-ce-18.06.3.ce-3.el7 -y

# If you would rather install the latest version, a plain yum install docker is enough for a normal installation

# Add a configuration file. Docker uses cgroupfs as its cgroup driver by default, while Kubernetes recommends systemd instead

mkdir -p /etc/docker

cat <<EOF > /etc/docker/daemon.json
{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "registry-mirrors": ["https://qaisuteo.mirror.aliyuncs.com"]
}
EOF

# Start Docker

systemctl start docker

# Enable Docker at boot

systemctl enable docker

Check whether Docker is using the systemd cgroup driver

docker info | grep Cgroup

Install the Kubernetes components

# The Kubernetes package repository is hosted overseas and is slow from here, so switch to a domestic mirror

# Edit /etc/yum.repos.d/kubernetes.repo and add the configuration below

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

# Install kubeadm, kubelet, and kubectl

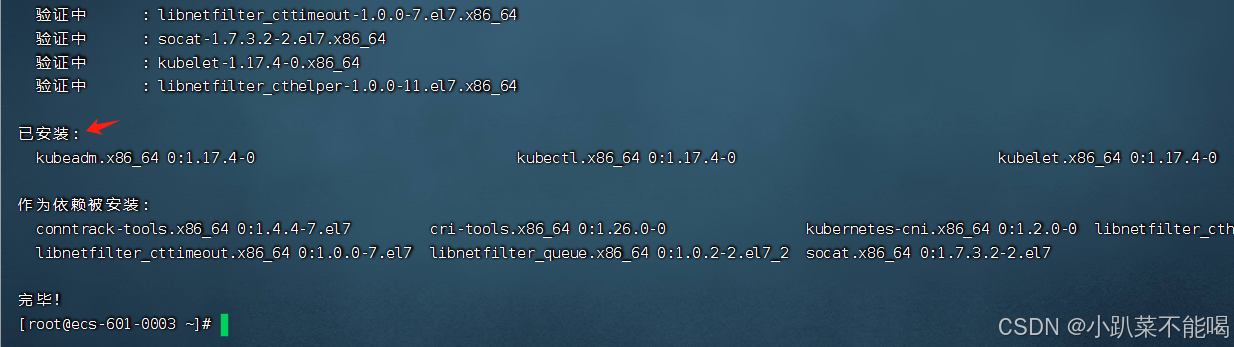

yum install --setopt=obsoletes=0 kubeadm-1.17.4-0 kubelet-1.17.4-0 kubectl-1.17.4-0 -y

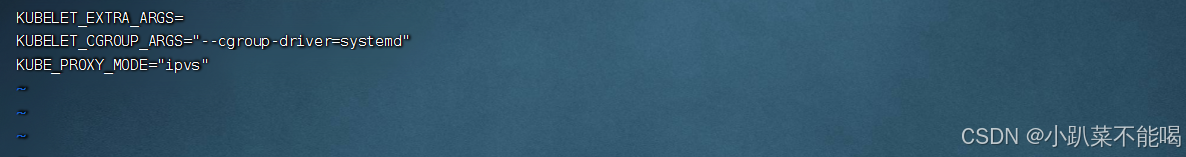

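# Configure the kubelet cgroup driver: edit /etc/sysconfig/kubelet and add the settings below. The file ships with one empty setting, which can be deleted

KUBELET_CGROUP_ARGS="--cgroup-driver=systemd"

KUBE_PROXY_MODE="ipvs"

# Enable kubelet at boot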

systemctl enable kubelet

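Prepare the cluster images

# The images the cluster needs must be available before installing Kubernetes; list them with the command below

kubeadm config images list

# Download the images

# These images live in the Kubernetes registry, which is unreachable here for network reasons; the loop below is a workaround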

images=(

kube-apiserver:v1.17.4

kube-controller-manager:v1.17.4

kube-scheduler:v1.17.4

kube-proxy:v1.17.4

pause:3.1

etcd:3.4.3-0

coredns:1.6.5

)

for imageName in ${images[@]};do

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/$imageName

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/$imageName k8s.gcr.io/$imageName

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/$imageName

done

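Initialize the cluster

The following only needs to be run on the master node. One important caveat: on a public cloud, use the private (intranet) address, not the public one (a big pitfall).

# Create the cluster; replace the apiserver address with your own master's address

kubeadm init \
--kubernetes-version=v1.17.4 \
--pod-network-cidr=10.244.0.0/16 \
--service-cidr=10.96.0.0/12 \
--apiserver-advertise-address=192.168.0.12

Copy the key information from the output and run it as instructed. Do not copy the commands below; copy the ones printed when your own cluster is created successfully.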

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.0.12:6443 --token lkjig2.ti134s5qc59pypb2 \
--discovery-token-ca-cert-hash sha256:8693c29dda251a4f45306426178c90cae9294e19650dc7e1915564cebe80558d

[root@ecs-601-0003 ~]# mkdir -p $HOME/.kube

[root@ecs-601-0003 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@ecs-601-0003 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

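To re-initialize the cluster

kubeadm reset

Clean up the leftover state, then run the initialization again

cd ~ # go to the home directory

ll -a # check whether a .kube directory exists

rm -rf /root/.kube

systemctl restart docker # restart docker

systemctl restart kubelet # restart kubelet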

rm -rf /etc/cni/net.d

The following only needs to be run on the worker nodes

kubeadm join 192.168.0.12:6443 --token lkjig2.ti134s5qc59pypb2 \
--discovery-token-ca-cert-hash sha256:8693c29dda251a4f45306426178c90cae9294e19650dc7e1915564cebe80558d
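The token in the join command expires (after 24 hours by default), so the exact line from the init output eventually stops working. Running kubeadm token create --print-join-command on the master prints a fresh one. The CA certificate hash can also be recomputed by hand; the sketch below wraps the openssl pipeline from the kubeadm documentation in a function. The name ca_cert_hash is my own, and it assumes the CA uses an RSA key, which is what kubeadm generates by default.

```shell
#!/usr/bin/env bash
# ca_cert_hash [CERT]  (hypothetical helper)
# Prints the SHA-256 hash of the CA public key in CERT
# (default /etc/kubernetes/pki/ca.crt), as used after "sha256:" in
# --discovery-token-ca-cert-hash.
ca_cert_hash() {
  local cert="${1:-/etc/kubernetes/pki/ca.crt}"
  openssl x509 -pubkey -in "$cert" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Example, run on the master:
#   echo "sha256:$(ca_cert_hash)"
```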

View the node information on the master

kubectl get nodes

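Install a network plugin

Kubernetes supports many network plugins, such as flannel, calico, and canal. Any one of them will do; this guide uses flannel.

Run this on the master node only. The plugin is deployed through a DaemonSet controller, so it runs on every node.

Use the kube-flannel.yml below directly; the image addresses in https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml are not reachable.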

cat <<EOF > kube-flannel.yml

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
    - configMap
    - secret
    - emptyDir
    - hostPath
  allowedHostPaths:
    - pathPrefix: "/etc/cni/net.d"
    - pathPrefix: "/etc/kube-flannel"
    - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
    - min: 0
      max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
rules:
  - apiGroups: ['extensions']
    resources: ['podsecuritypolicies']
    verbs: ['use']
    resourceNames: ['psp.flannel.unprivileged']
  - apiGroups:
      - ""
    resources:
      - pods
    verbs:
      - get
  - apiGroups:
      - ""
    resources:
      - nodes
    verbs:
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - nodes/status
    verbs:
      - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
  - kind: ServiceAccount
    name: flannel
    namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-amd64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - amd64
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: quay.io/coreos/flannel:v0.11.0-amd64
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: quay.io/coreos/flannel:v0.11.0-amd64
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - arm64
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: quay.io/coreos/flannel:v0.11.0-arm64
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: quay.io/coreos/flannel:v0.11.0-arm64
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - arm
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: quay.io/coreos/flannel:v0.11.0-arm
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: quay.io/coreos/flannel:v0.11.0-arm
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-ppc64le
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - ppc64le
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: quay.io/coreos/flannel:v0.11.0-ppc64le
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: quay.io/coreos/flannel:v0.11.0-ppc64le
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-s390x
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - s390x
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: quay.io/coreos/flannel:v0.11.0-s390x
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: quay.io/coreos/flannel:v0.11.0-s390x
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

EOF

kubectl apply -f kube-flannel.yml

# Check the status

kubectl get pod -n kube-system

# If it does not work, flannel can be removed

kubectl delete -f kube-flannel.yml

kubectl get nodes

If the coredns pods stay in the Pending state, download the package below and extract it into /opt/cni/bin. This has to be done on every node.

https://github.com/containernetworking/plugins/releases/download/v0.8.6/cni-plugins-linux-amd64-v0.8.6.tgz

tar zxvf cni-plugins-linux-amd64-v0.8.6.tgz -C /opt/cni/bin

Check the node status again

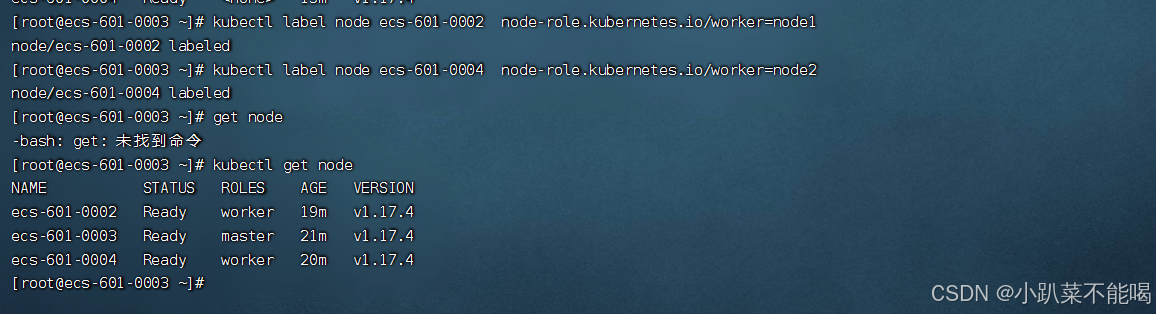

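The node role shows <none>, so give it a label

kubectl label node <nodename> node-role.kubernetes.io/worker=worker

That completes the cluster setup. There are plenty of pitfalls and caveats along the way, behavior may differ between versions, and an offline installation would be harder still.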

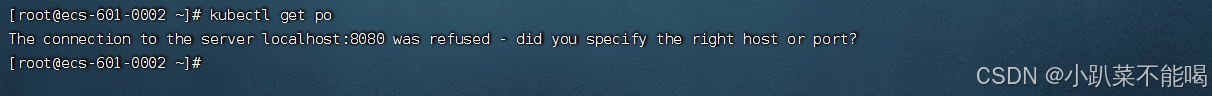

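Using kubectl on a worker node

kubectl works on the master node and can be used on worker nodes as well. Right now, running it on a worker node looks like this:

The reason is that kubectl needs configuration to run, and that configuration lives in $HOME/.kube. To run kubectl on a worker node, copy the .kube directory from the master to that node first.

scp -r $HOME/.kube node1:$HOME/

Test the Kubernetes cluster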

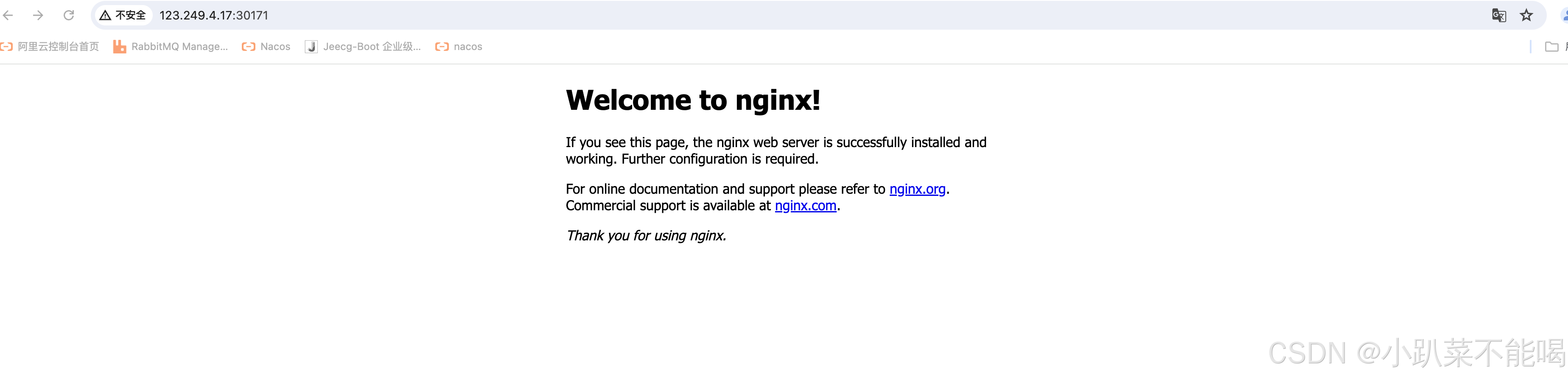

Deploy an nginx service in the cluster and access it from outside.

# 1. Deploy nginx

kubectl create deployment nginx --image=nginx:1.18.0

# 2. Expose the port

kubectl expose deployment nginx --port=80 --type=NodePort

# 3. Check the service status (svc is short for service)

kubectl get pods,svc

30171 is the port exposed for external access; try opening it.
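The NodePort is assigned per cluster, so 30171 is just the value from my run. As a rough sketch, the port can be pulled out of the PORT(S) column that kubectl get svc prints (for example 80:30171/TCP); the function name node_port_from_ports is made up for illustration. Querying the Service directly with kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}' is another option.

```shell
#!/usr/bin/env bash
# node_port_from_ports PORTS  (hypothetical helper)
# Extracts the NodePort from a PORT(S) value such as "80:30171/TCP".
node_port_from_ports() {
  local ports="$1"
  local after_colon="${ports#*:}"   # drop the service port and the colon
  echo "${after_colon%%/*}"         # drop the protocol suffix
}

# Example, using the value shown above and a node's public IP:
#   port=$(node_port_from_ports "80:30171/TCP")
#   curl "http://<node-ip>:${port}"
```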