I. Prometheus

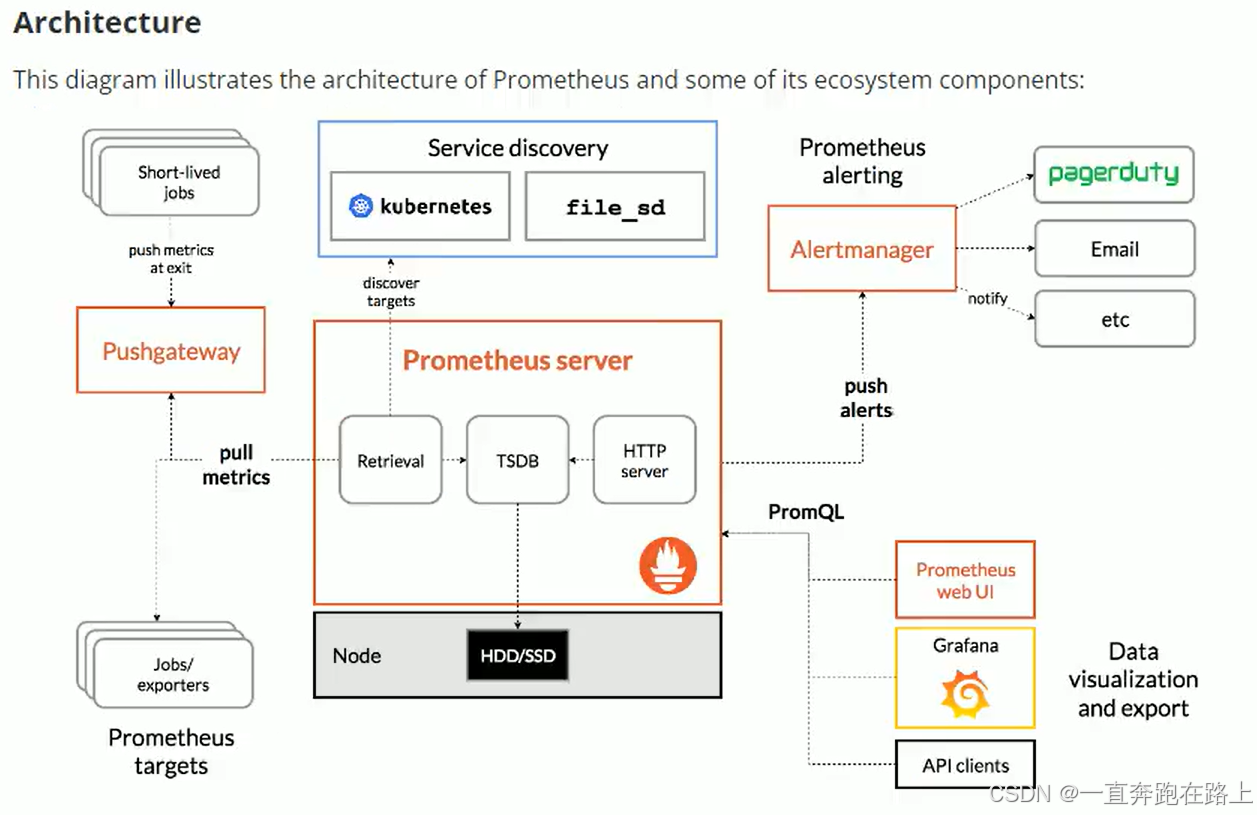

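Prometheus is an open-source system for monitoring and alerting.

It collects data through a wide range of exporters, accepts pushed metrics through Pushgateway, and performs well enough to monitor clusters of more than ten thousand machines.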

Resources:

1. Prometheus configuration: https://prometheus.io/docs/prometheus/latest/configuration/configuration/

2. Exporters for the components Prometheus can monitor:

https://prometheus.io/docs/instrumenting/exporters/

3. Reference configuration for Kubernetes service discovery:

https://github.com/prometheus/prometheus/blob/release-2.31/documentation/examples/prometheus-kubernetes.yml

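The four metric types:

1. Counter: a cumulative value that only ever increases (it starts again from zero only when the process restarts).

2. Gauge: an ordinary measured value that can go up or down.

3. Histogram: counts observations into configurable buckets of value ranges.

4. Summary: reports quantiles of the observations over a sliding time window.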
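To make the difference between a Counter and a Gauge concrete, here is a minimal sketch. The sample values and the 15-second gap are invented for illustration. A Counter is usually read as a rate between two scrapes (inside Prometheus the `rate()` function does this), while a Gauge is read as it is.

```shell
# counter_rate: per-second increase of a Counter between two scrapes.
# usage: counter_rate <earlier_value> <later_value> <seconds_between>
counter_rate() {
  awk -v a="$1" -v b="$2" -v s="$3" 'BEGIN { printf "%.2f\n", (b - a) / s }'
}

# Hypothetical samples: a request counter read 1200, then 1230 fifteen seconds later.
counter_rate 1200 1230 15

# A Gauge needs no such step; its latest sample is already the answer.
memory_free_bytes=524288000   # invented sample value
echo "$memory_free_bytes"
```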

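1. Installing node-exporter

node-exporter collects monitoring metrics from machines (physical machines, virtual machines, cloud hosts, and so on), including CPU, memory, disk, network, and file counts.

1-1 Create the namespace

Create the monitor-sa namespace to hold the Prometheus components and configuration.

Import the image file: node-exporter.tar.gz

# Create the namespace

kubectl create ns monitor-sa

# Import the image

ctr -n=k8s.io images import node-exporter.tar.gz

[Extension]:

To keep the formatting intact when pasting into vim, run :set paste first.

1-2 Deploy to every node with a DaemonSet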

# vim node-exporter.yaml

apiVersion: apps/v1

kind: DaemonSet # ensures that every node in the cluster runs an identical pod

metadata:

name: node-exporter

namespace: monitor-sa

labels:

name: node-exporter

spec:

selector:

matchLabels:

name: node-exporter

template:

metadata:

labels:

name: node-exporter

spec:

hostPID: true

hostIPC: true

hostNetwork: true

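# With hostNetwork, hostIPC, and hostPID all set to true, every container in this Pod uses the host's network directly, communicates with the host over IPC, and can see all processes running on the host. hostNetwork: true also exposes port 9100 directly on the host, so no Service is needed.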

containers:

- name: node-exporter

image: prom/node-exporter:v0.16.0

imagePullPolicy: IfNotPresent

ports:

- containerPort: 9100

resources:

requests:

cpu: 0.15 # the container requests at least 0.15 CPU cores

securityContext:

privileged: true # run in privileged mode

args:

- --path.procfs # where the host's (node's) /proc is mounted

- /host/proc

- --path.sysfs # where the host's (node's) /sys is mounted

- /host/sys

- --collector.filesystem.ignored-mount-points

- '^/(sys|proc|dev|host|etc)($|/)'

# Regular expression for the filesystem mount points to exclude from collection

volumeMounts:

- name: dev

mountPath: /host/dev

- name: proc

mountPath: /host/proc

- name: sys

mountPath: /host/sys

- name: rootfs

mountPath: /rootfs

tolerations:

- key: "node-role.kubernetes.io/master" # set this to the taint actually present on your master node

operator: "Exists"

effect: "NoSchedule"

volumes:

- name: proc

hostPath:

path: /proc

- name: dev

hostPath:

path: /dev

- name: sys

hostPath:

path: /sys

- name: rootfs

hostPath:

path: /

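# Mount the host's /dev, /proc, and /sys into the container, because much of the node data is read from files under these directories

[Note]:

Set the toleration according to the taints actually present on your master node; otherwise the pod cannot be scheduled onto the master.

kubectl apply -f node-exporter.yaml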
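The mount-point filter passed to --collector.filesystem.ignored-mount-points can be tried out locally before deploying. The sketch below applies the same pattern (without any surrounding quote characters) with `grep -E`; the list of paths is invented for illustration, and `is_ignored` is a hypothetical helper name.

```shell
# The regular expression from the DaemonSet arguments.
ignored_mount_re='^/(sys|proc|dev|host|etc)($|/)'

# is_ignored: succeeds when the given mount point would be skipped.
is_ignored() {
  printf '%s\n' "$1" | grep -Eq "$ignored_mount_re"
}

# Invented sample mount points.
for m in / /sys /proc/sys/fs /data /etc/hosts /system; do
  if is_ignored "$m"; then
    echo "$m ignored"
  else
    echo "$m kept"
  fi
done
```

Note that /system is kept: the pattern requires the matched directory name to be followed by either the end of the path or a slash.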

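node-exporter listens on port 9100 by default. To see every metric the current host exposes, run curl http://<host-ip>:9100/metrics, for example:

# Show the CPU usage metrics of host 192.168.40.180

curl http://192.168.40.180:9100/metrics | grep node_cpu_seconds

node-exporter is now installed.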
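The raw /metrics output is plain text with one sample per line, so it can be summarized with standard tools. As a rough sketch, the hypothetical helper below adds up node_cpu_seconds_total across all CPUs for one mode; the sample lines fed to it here are invented.

```shell
# sum_cpu_seconds: total node_cpu_seconds_total for one CPU mode.
# stdin: Prometheus text exposition; $1: mode such as idle or user.
sum_cpu_seconds() {
  awk -v mode="$1" '
    index($1, "node_cpu_seconds_total{") == 1 && index($1, "mode=\"" mode "\"") > 0 { total += $2 }
    END { printf "%.1f\n", total }'
}

# Invented sample in the exposition format.
sum_cpu_seconds idle <<'EOF'
# TYPE node_cpu_seconds_total counter
node_cpu_seconds_total{cpu="0",mode="idle"} 1000.5
node_cpu_seconds_total{cpu="0",mode="user"} 20.25
node_cpu_seconds_total{cpu="1",mode="idle"} 999.5
EOF

# Against a real host (not executed here):
#   curl -s http://192.168.40.180:9100/metrics | sum_cpu_seconds idle
```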

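2. Installing Prometheus

2-1 Create a service account and grant permissions to it and to the user

When Prometheus is deployed, a user is created automatically and must also be authorized.

# Create the service account

kubectl create serviceaccount monitor -n monitor-sa

# Bind the service account to a cluster role (RBAC)

kubectl create clusterrolebinding monitor-clusterrolebinding -n monitor-sa --clusterrole=cluster-admin --serviceaccount=monitor-sa:monitor

# Run the next command in advance to avoid authorization errors from Prometheus later

# The user system:serviceaccount:monitor:monitor-sa is the one created automatically for Prometheus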

kubectl create clusterrolebinding monitor-clusterrolebinding-1 -n monitor-sa --clusterrole=cluster-admin --user=system:serviceaccount:monitor:monitor-sa
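When writing a --user binding it helps to recall how Kubernetes names a service account as a user: system:serviceaccount:<namespace>:<name>. The hypothetical helper below only builds that string, so the name can be compared with the one that appears in an authorization error.

```shell
# sa_username: user name Kubernetes assigns to a service account.
# usage: sa_username <namespace> <serviceaccount-name>
sa_username() {
  printf 'system:serviceaccount:%s:%s\n' "$1" "$2"
}

# The account created above: name "monitor" in namespace "monitor-sa".
sa_username monitor-sa monitor

# On the cluster, permissions can then be checked (not executed here):
#   kubectl auth can-i list nodes --as="$(sa_username monitor-sa monitor)"
```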

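Create the data directory /data/ on node03 (or any node you choose):

# Create the Prometheus data directory and open up its permissions

mkdir /data

chmod 777 /data/

2-2 Manage the Prometheus configuration with a ConfigMap

Create a ConfigMap volume that holds the Prometheus configuration. The file is mostly fixed and needs no changes unless you have special requirements: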

# vim prometheus-cfg.yaml

---

kind: ConfigMap

apiVersion: v1

metadata:

labels:

app: prometheus

name: prometheus-config

namespace: monitor-sa

data:

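prometheus.yml: |- # keep the "|" here and remove the "-"

global:

scrape_interval: 15s # how often targets are scraped; usually kept no larger than evaluation_interval

scrape_timeout: 10s # scrape timeout, default 10s

evaluation_interval: 1m # how often alerting rules are evaluated, default 1 minute

scrape_configs: # scrape targets

- job_name: 'kubernetes-node' # name of the scrape job; its targets come from static configuration or service discovery

kubernetes_sd_configs: # use Kubernetes service discovery

- role: node # node role: discovers every node in the cluster, by default through the HTTP port the kubelet serves

relabel_configs: # relabeling

- source_labels: [__address__] # source label to match: the target address

regex: '(.*):10250' # match addresses that end in port 10250

replacement: '${1}:9100' # keep the IP captured from ip:10250

target_label: __address__ # the new address is the captured IP plus :9100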

action: replace

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- job_name: 'kubernetes-node-cadvisor'

kubernetes_sd_configs:

- role: node

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- target_label: __address__

replacement: kubernetes.default.svc:443

- source_labels: [__meta_kubernetes_node_name]

regex: (.+)

target_label: __metrics_path__

replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

- job_name: 'kubernetes-apiserver'

kubernetes_sd_configs:

- role: endpoints

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]

action: keep

regex: default;kubernetes;https

- job_name: 'kubernetes-service-endpoints'

kubernetes_sd_configs:

- role: endpoints

relabel_configs:

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]

action: keep

regex: true

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]

action: replace

target_label: __scheme__

regex: (https?)

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]

action: replace

target_label: __metrics_path__

regex: (.+)

- source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]

action: replace

target_label: __address__

regex: ([^:]+)(?::\d+)?;(\d+)

replacement: $1:$2

- action: labelmap

regex: __meta_kubernetes_service_label_(.+)

- source_labels: [__meta_kubernetes_namespace]

action: replace

target_label: kubernetes_namespace

- source_labels: [__meta_kubernetes_service_name]

action: replace

target_label: kubernetes_name
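The two address rewrites in this configuration can be approximated locally with `sed -E`. This is only a sketch: Prometheus uses RE2 and anchors the whole expression, so the sed patterns add ^ and $ explicitly, write \d as [0-9], and use a capturing group where the original has a non-capturing one (hence \3 instead of $2). The function names and sample addresses are invented.

```shell
# kubernetes-node job: swap the kubelet port 10250 for node-exporter's 9100.
relabel_node_address() {
  printf '%s\n' "$1" | sed -E 's/^(.*):10250$/\1:9100/'
}

# kubernetes-service-endpoints job: input is "<address>;<annotation port>".
# The existing port, if any, is replaced with the annotated one.
rewrite_endpoint_address() {
  printf '%s\n' "$1" | sed -E 's/^([^:]+)(:[0-9]+)?;([0-9]+)$/\1:\3/'
}

relabel_node_address 192.168.40.180:10250
rewrite_endpoint_address '10.244.1.5:8080;9102'
```

An input that does not match is printed unchanged, which mirrors how a replace action leaves the label alone when the regex does not match.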

2-3 Deploy Prometheus with a Deployment

Import the image file: prometheus-2-2-1.tar.gz

ctr -n=k8s.io images import prometheus-2-2-1.tar.gz

# vim prometheus-deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: prometheus-server

namespace: monitor-sa

labels:

app: prometheus

spec:

replicas: 1

selector:

matchLabels:

app: prometheus

component: server

#matchExpressions:

#- {key: app, operator: In, values: [prometheus]}

#- {key: component, operator: In, values: [server]}

template:

metadata:

labels:

app: prometheus

component: server

annotations:

prometheus.io/scrape: 'false'

spec:

nodeName: node03 # set this to the name of your own node

serviceAccountName: monitor

containers:

- name: prometheus

image: prom/prometheus:v2.2.1

imagePullPolicy: IfNotPresent

command:

- prometheus

- --config.file=/etc/prometheus/prometheus.yml

- --storage.tsdb.path=/prometheus # data storage directory

- --storage.tsdb.retention=720h # how long data is kept before deletion (the default is 15 days; 720h is 30 days)

- --web.enable-lifecycle # enable hot reloading

ports:

- containerPort: 9090

protocol: TCP

volumeMounts:

- mountPath: /etc/prometheus

name: prometheus-config

- mountPath: /prometheus/

name: prometheus-storage-volume

volumes:

- name: prometheus-config

configMap:

name: prometheus-config

- name: prometheus-storage-volume

hostPath:

path: /data

type: Directory

[Note]:

Schedule the pod onto whichever node holds the /data directory; change nodeName to match your actual host.
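Because the volume is a hostPath of type Directory, the directory has to exist on the chosen node before the pod starts. A small pre-flight check, run on that node, might look like the sketch below; `check_data_dir` is a hypothetical helper, and the demonstration uses a temporary directory instead of /data.

```shell
# check_data_dir: report whether a directory exists and is writable.
check_data_dir() {
  dir="$1"
  if [ -d "$dir" ] && [ -w "$dir" ]; then
    echo "ok: $dir"
  else
    echo "missing or not writable: $dir"
    return 1
  fi
}

# Demonstration on a throwaway directory.
demo=$(mktemp -d)
check_data_dir "$demo"
rmdir "$demo"

# On the node that will run Prometheus (not executed here):
#   check_data_dir /data
```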

2-4 Create a Service for the pod (the front end of the Prometheus pod)

# cat prometheus-svc.yaml

apiVersion: v1

kind: Service

metadata:

name: prometheus

namespace: monitor-sa

labels:

app: prometheus

spec:

type: NodePort

ports:

- port: 9090

targetPort: 9090

protocol: TCP

selector:

app: prometheus

component: server

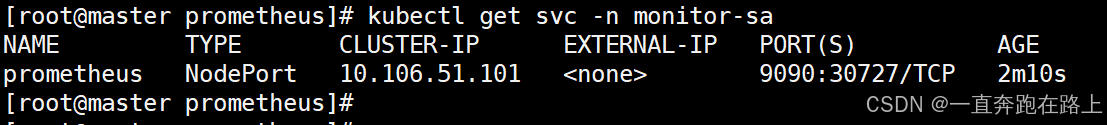

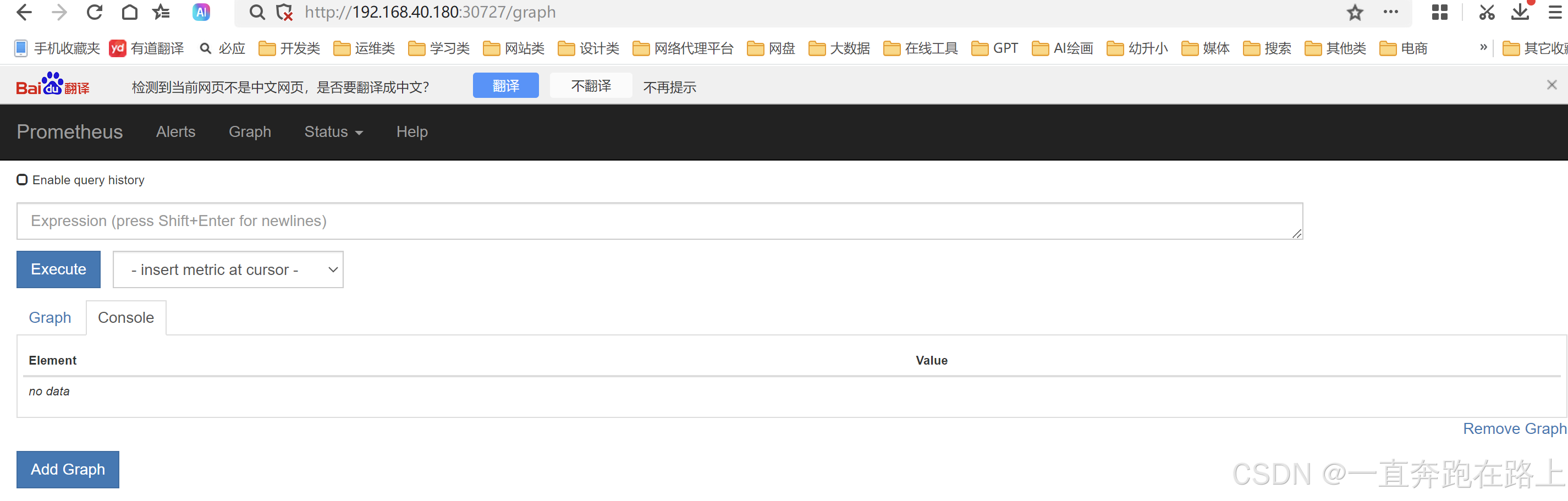

Access: [IP of the cluster's master1 node]:30727
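The port after the node IP is the NodePort that Kubernetes assigned to the Service, so it will differ between clusters. As a sketch, the hypothetical helper below extracts it from the PORT(S) column that `kubectl get svc` prints, such as 9090:30727/TCP.

```shell
# nodeport_of: take "port:nodePort/PROTO" and print the nodePort.
nodeport_of() {
  printf '%s\n' "$1" | sed -E 's/^[0-9]+:([0-9]+)\/[A-Z]+$/\1/'
}

nodeport_of 9090:30727/TCP

# Directly from the cluster (not executed here):
#   kubectl get svc prometheus -n monitor-sa -o jsonpath='{.spec.ports[0].nodePort}'
```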

Prometheus is now installed.

[Extension]:

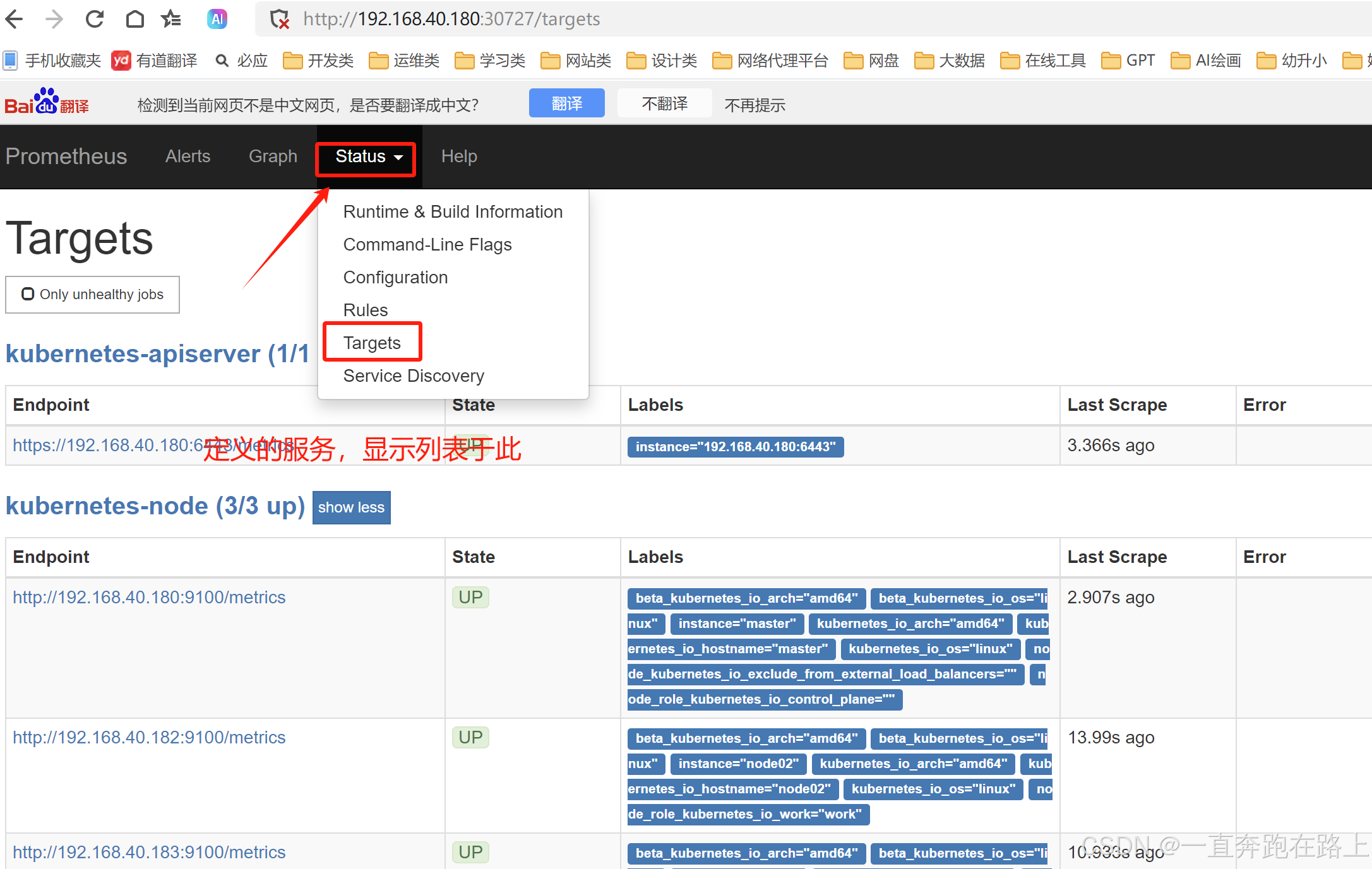

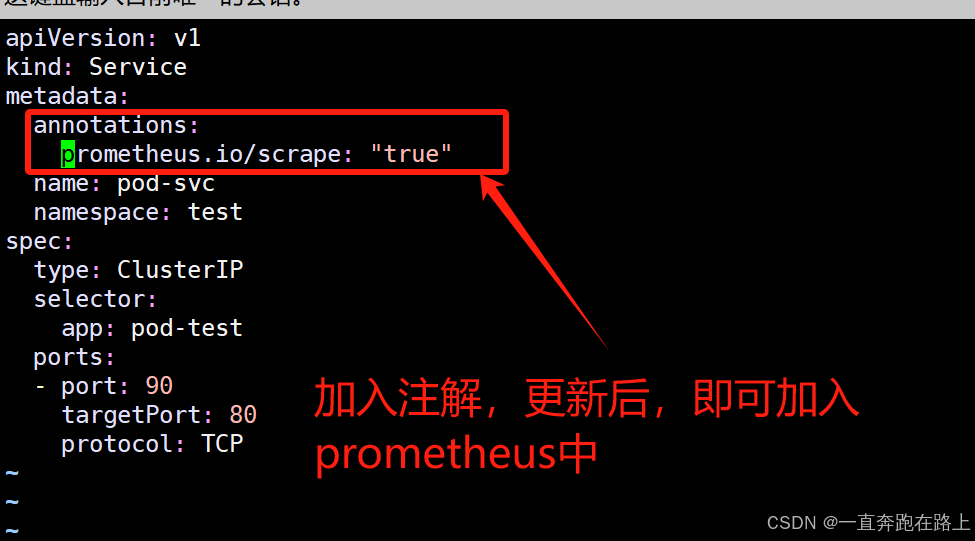

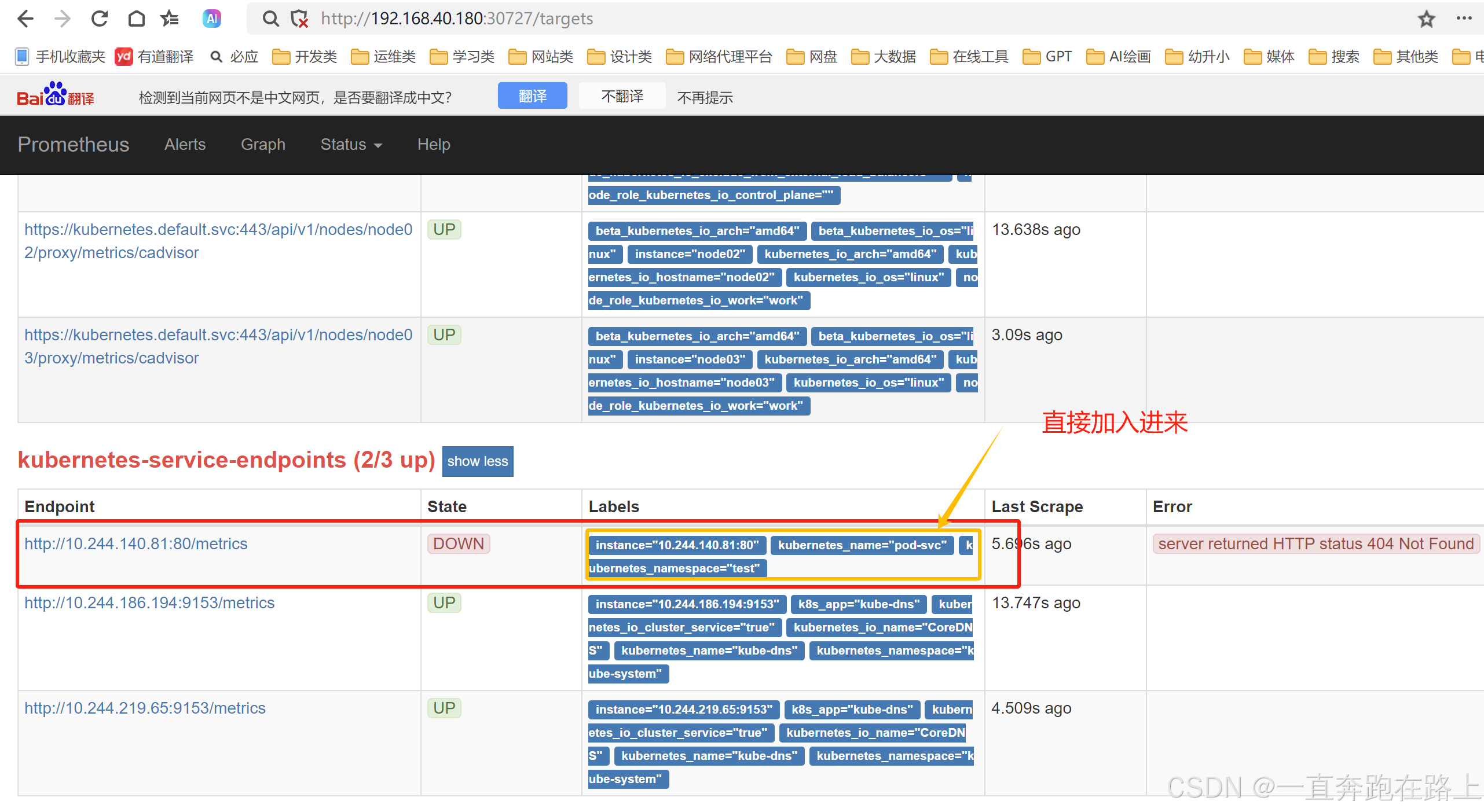

To have Prometheus scrape a Service, add the scrape annotation to the Service's YAML file:

Then update the Prometheus configuration and refresh the page:
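The kubernetes-service-endpoints job keeps only Services annotated with prometheus.io/scrape: 'true' and reads the port from prometheus.io/port. For a Service that already exists, the annotations can also be added from the command line. The sketch below only prints the `kubectl annotate` command so it can be reviewed first; the service name my-app, the namespace default, and port 8080 are placeholders.

```shell
# annotate_cmd: print the kubectl command that marks a Service for scraping.
# usage: annotate_cmd <service> <namespace> <metrics-port>
annotate_cmd() {
  printf 'kubectl annotate service %s -n %s --overwrite prometheus.io/scrape=true prometheus.io/port=%s\n' "$1" "$2" "$3"
}

annotate_cmd my-app default 8080

# To run it for real, pipe the printed line to sh (not executed here):
#   annotate_cmd my-app default 8080 | sh
```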

2-5 Hot-reloading Prometheus

After each change to the configuration file, a hot reload applies it without stopping the Prometheus service.

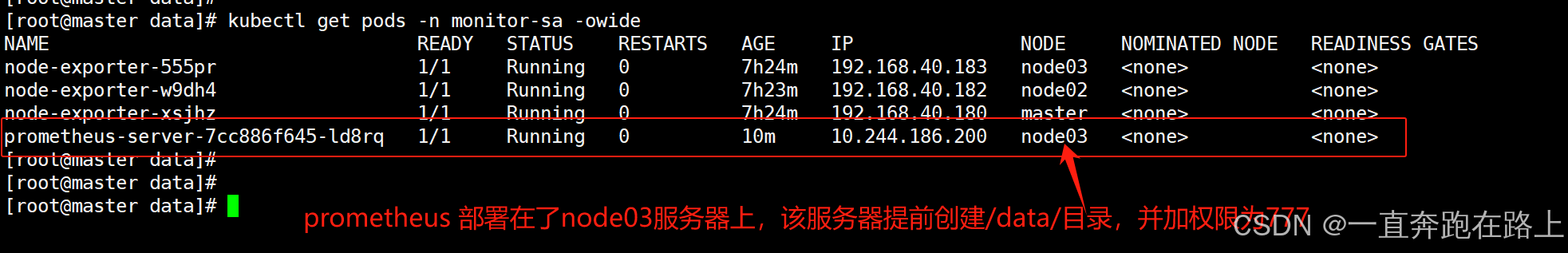

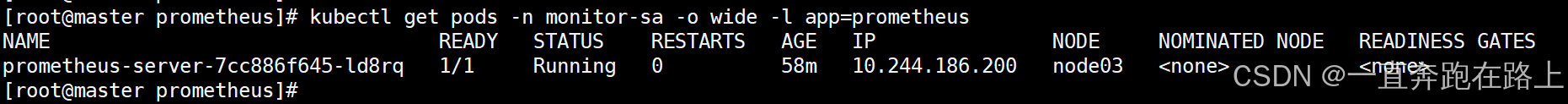

(1) Find the IP of the Prometheus pod

kubectl get pods -n monitor-sa -o wide -l app=prometheus

The Prometheus pod's IP address is 10.244.186.200

(2) Hot-reload command:

curl -X POST http://10.244.186.200:9090/-/reload
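The two steps above can be combined into a small script so that the pod IP never has to be copied by hand. This is a sketch that assumes a single Prometheus pod and that the machine running it can reach pod IPs; `reload_url` and `reload_prometheus` are hypothetical helper names.

```shell
# reload_url: build the lifecycle endpoint for a given pod IP.
reload_url() {
  printf 'http://%s:9090/-/reload\n' "$1"
}

# reload_prometheus: POST to the endpoint and report the HTTP status.
reload_prometheus() {
  code=$(curl -s -o /dev/null -w '%{http_code}' -X POST "$(reload_url "$1")")
  if [ "$code" = "200" ]; then
    echo "reloaded"
  else
    echo "reload failed: HTTP $code"
    return 1
  fi
}

reload_url 10.244.186.200

# On the cluster (not executed here):
#   ip=$(kubectl get pods -n monitor-sa -l app=prometheus -o jsonpath='{.items[0].status.podIP}')
#   reload_prometheus "$ip"
```

The endpoint is only available because the Deployment starts Prometheus with --web.enable-lifecycle.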

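(3) Forced delete and recreate (not recommended)

Hot reloading can be slow to take effect, so you can instead force a restart of Prometheus.

After editing the prometheus-cfg.yaml file above, delete the resources:

kubectl delete -f prometheus-cfg.yaml

kubectl delete -f prometheus-deployment.yaml

Then recreate them with apply:

kubectl apply -f prometheus-cfg.yaml

kubectl apply -f prometheus-deployment.yaml

[Note]:

In production, prefer hot reloading; a forced delete can lose monitoring data.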

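II. Grafana

Grafana is a cross-platform, open-source tool for metrics analysis and visualization. It displays the collected data visually and notifies alert receivers promptly.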

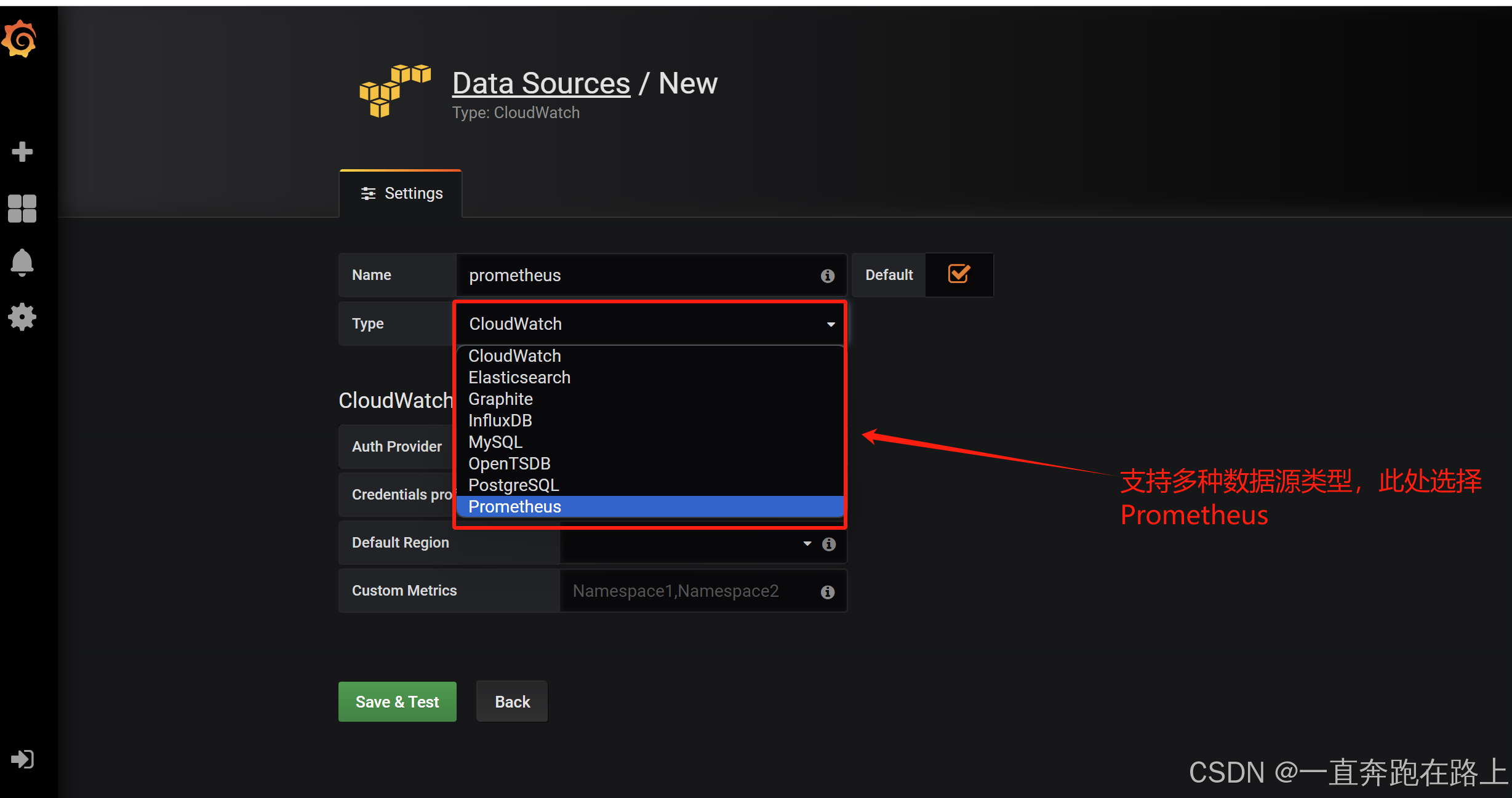

Data sources: Graphite, InfluxDB, OpenTSDB, Prometheus, Elasticsearch, CloudWatch, KairosDB, and others.

1. Installing Grafana

On the worker node, import the image file: heapster-grafana-amd64_v5_0_4.tar.gz

ctr -n=k8s.io images import heapster-grafana-amd64_v5_0_4.tar.gz

# vim grafana.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: monitoring-grafana

namespace: kube-system # deployed into the kube-system namespace

spec:

replicas: 1

selector:

matchLabels:

task: monitoring

k8s-app: grafana

template:

metadata:

labels:

task: monitoring

k8s-app: grafana

spec:

containers:

- name: grafana

image: k8s.gcr.io/heapster-grafana-amd64:v5.0.4

imagePullPolicy: IfNotPresent

ports:

- containerPort: 3000

protocol: TCP

volumeMounts:

- mountPath: /etc/ssl/certs

name: ca-certificates

readOnly: true

- mountPath: /var

name: grafana-storage

env:

- name: INFLUXDB_HOST

value: monitoring-influxdb

- name: GF_SERVER_HTTP_PORT

value: "3000"

# The following env variables are required to make Grafana accessible via

# the kubernetes api-server proxy. On production clusters, we recommend

# removing these env variables, setup auth for grafana, and expose the grafana

# service using a LoadBalancer or a public IP.

- name: GF_AUTH_BASIC_ENABLED

value: "false"

- name: GF_AUTH_ANONYMOUS_ENABLED

value: "true"

- name: GF_AUTH_ANONYMOUS_ORG_ROLE

value: Admin

- name: GF_SERVER_ROOT_URL

# If you're only using the API Server proxy, set this value instead:

# value: /api/v1/namespaces/kube-system/services/monitoring-grafana/proxy

value: /

volumes:

- name: ca-certificates

hostPath:

path: /etc/ssl/certs

- name: grafana-storage

emptyDir: {}

---

apiVersion: v1

kind: Service

metadata:

labels:

# For use as a Cluster add-on (https://github.com/kubernetes/kubernetes/tree/master/cluster/addons)

# If you are NOT using this as an addon, you should comment out this line.

kubernetes.io/cluster-service: 'true'

kubernetes.io/name: monitoring-grafana

name: monitoring-grafana

namespace: kube-system # deployed into the kube-system namespace

spec:

# In a production setup, we recommend accessing Grafana through an external Loadbalancer

# or through a public IP.

# type: LoadBalancer

# You could also use NodePort to expose the service at a randomly-generated port

# type: NodePort

ports:

- port: 80

targetPort: 3000

selector:

k8s-app: grafana

type: NodePort

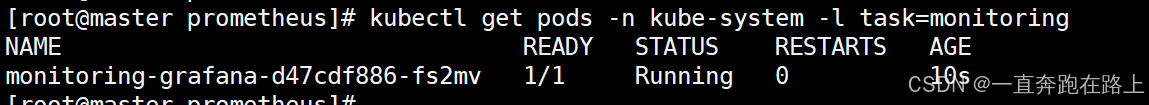

Check that Grafana was created successfully:

kubectl get pods -n kube-system -l task=monitoring

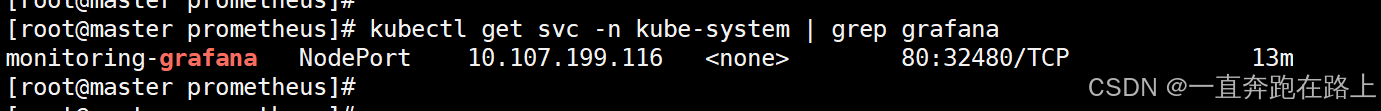

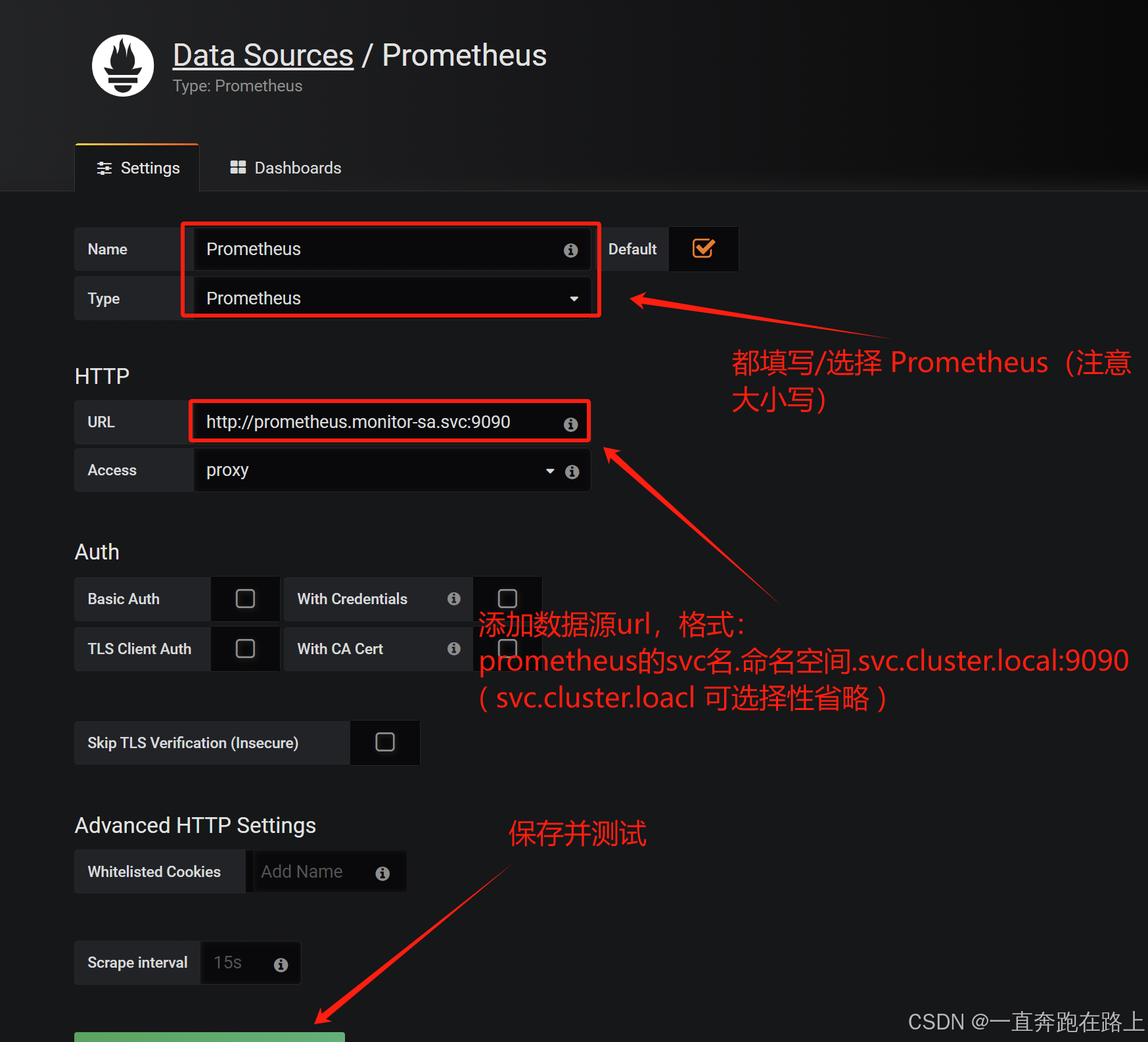

2,Grafana 如何接入 prometheus

kubectl get svc -n kube-system | grep grafana

查看对外暴露端口:

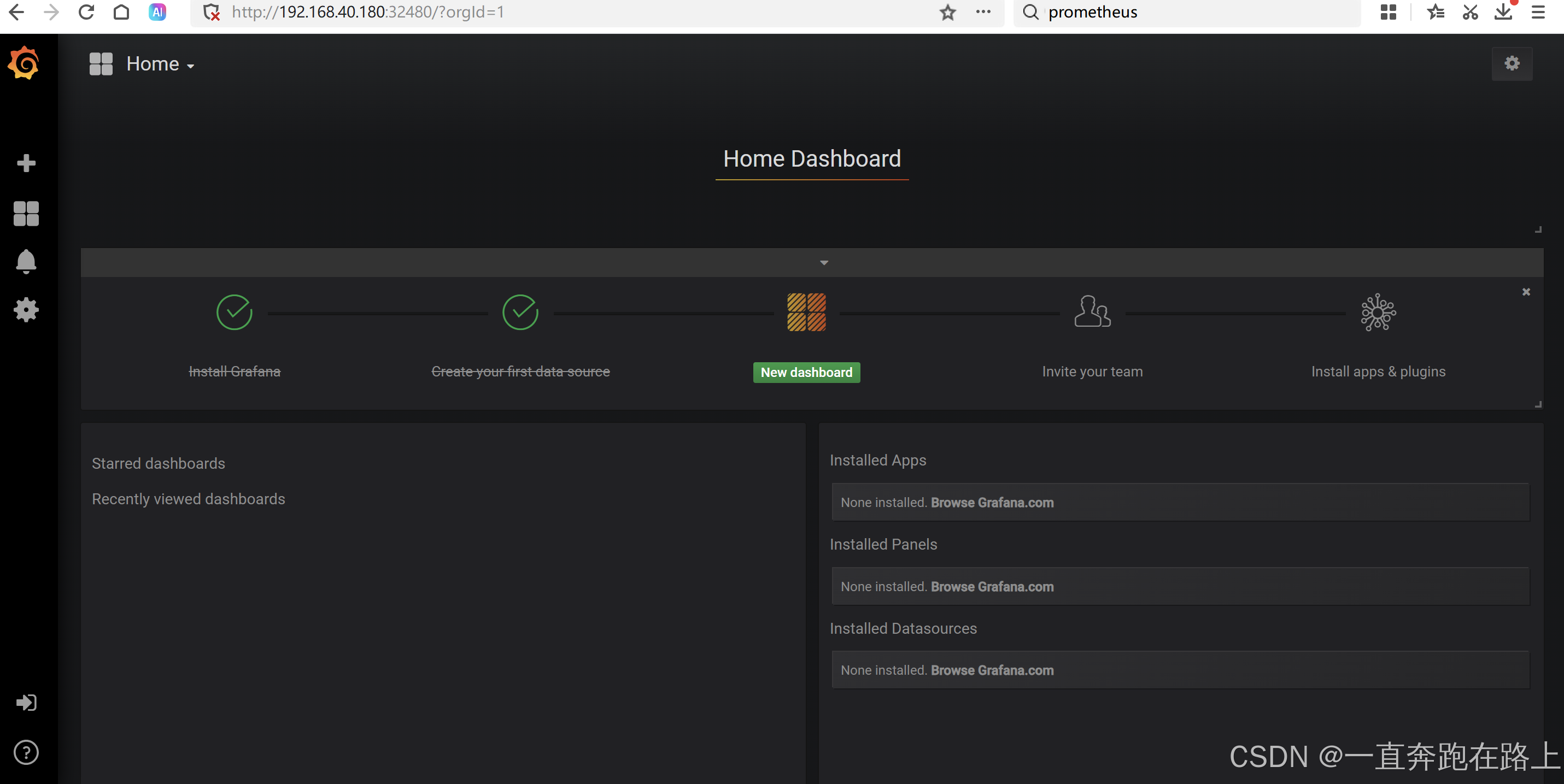

(1)在浏览器访问,登陆grafana: master的IP:32480

http://192.168.40.180:32480

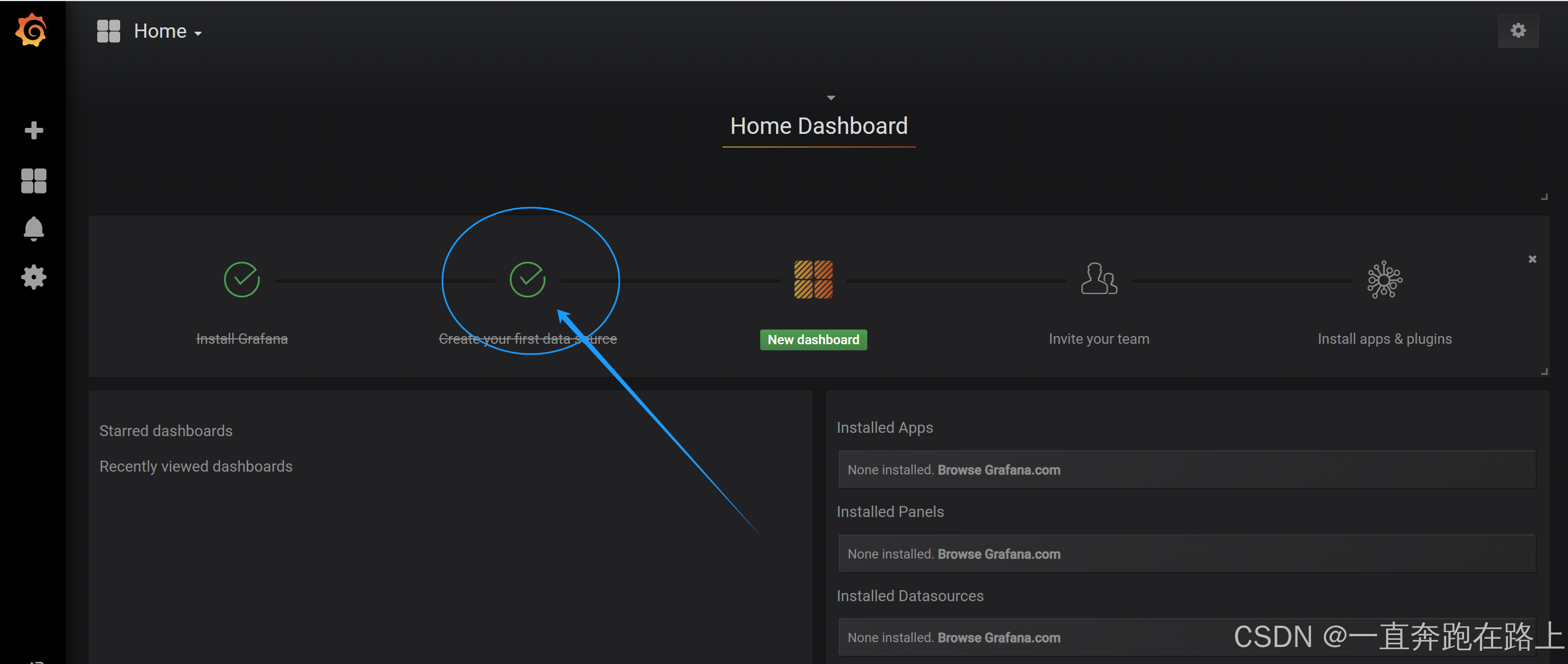

Configure the data source connection:

Click "Save & Test" at the bottom left. If "Data source is working" appears, the Prometheus data source has been connected to Grafana.

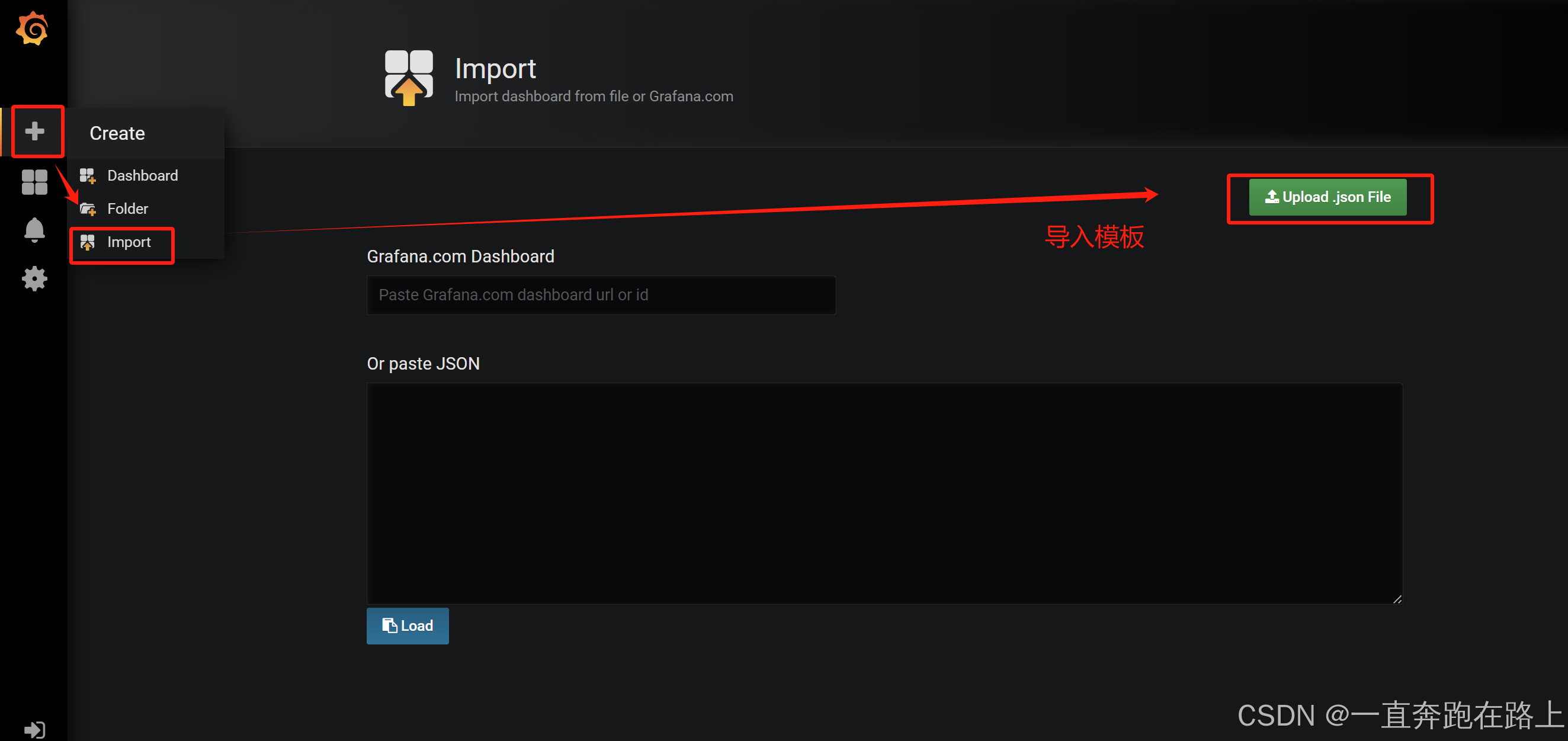

3, 导入数据展示模板

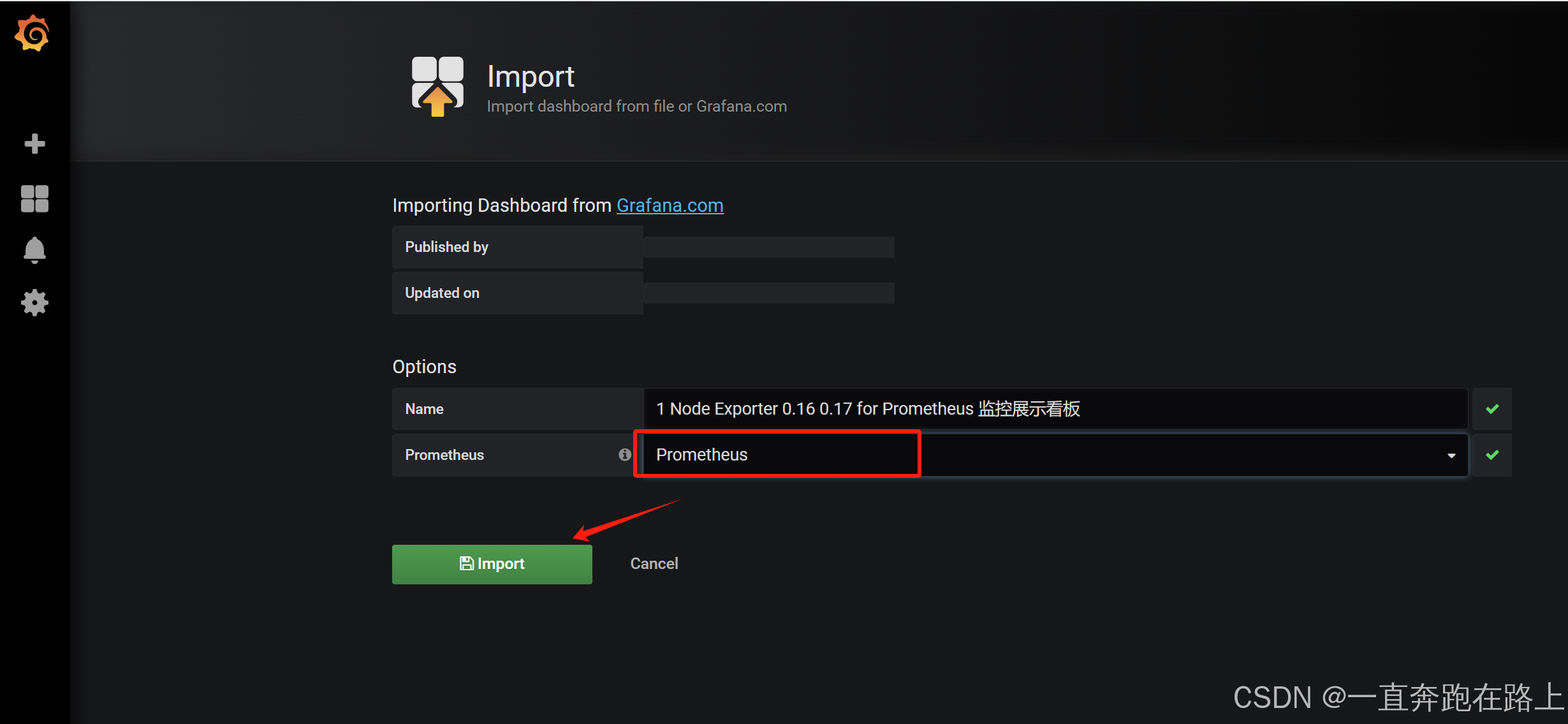

依然选择设置成Prometheus,然后再点击Import,出现下面界面:

依据上述步骤,可分别导入模板:docker_rev1.json和mysql-overview_rev5.json

至此,模板导入完成,Grafana搭建成功!!!

【扩展】:

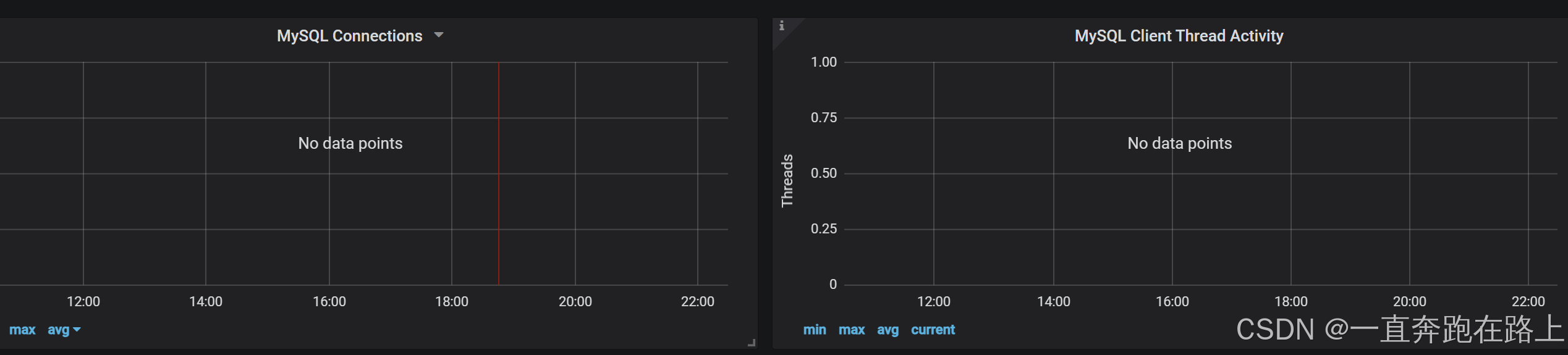

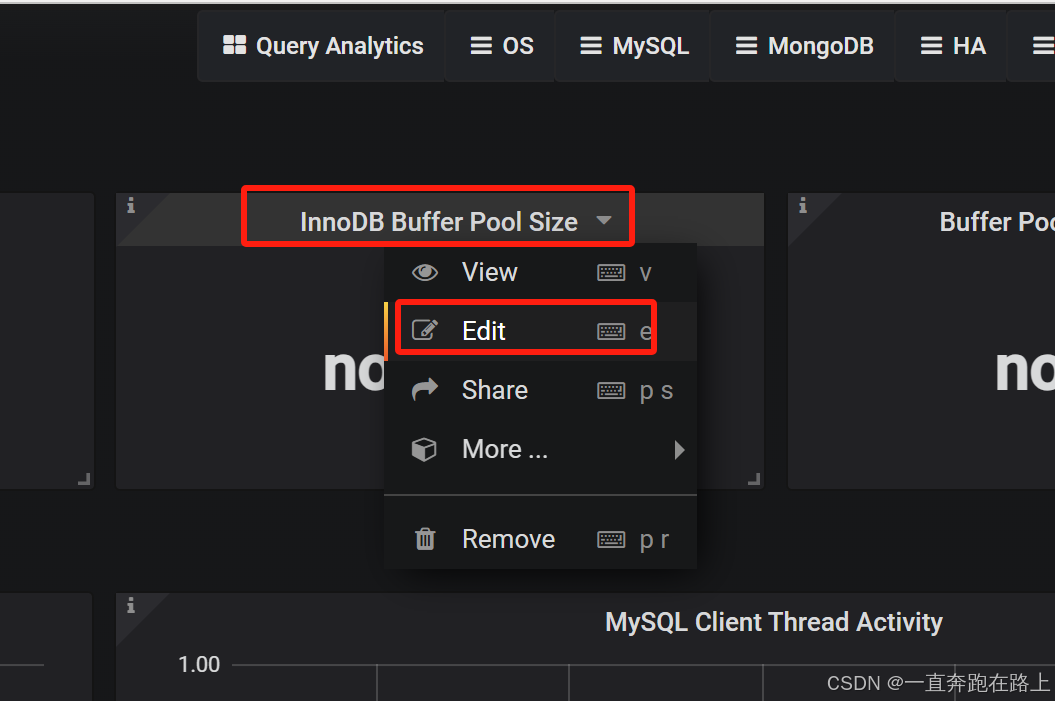

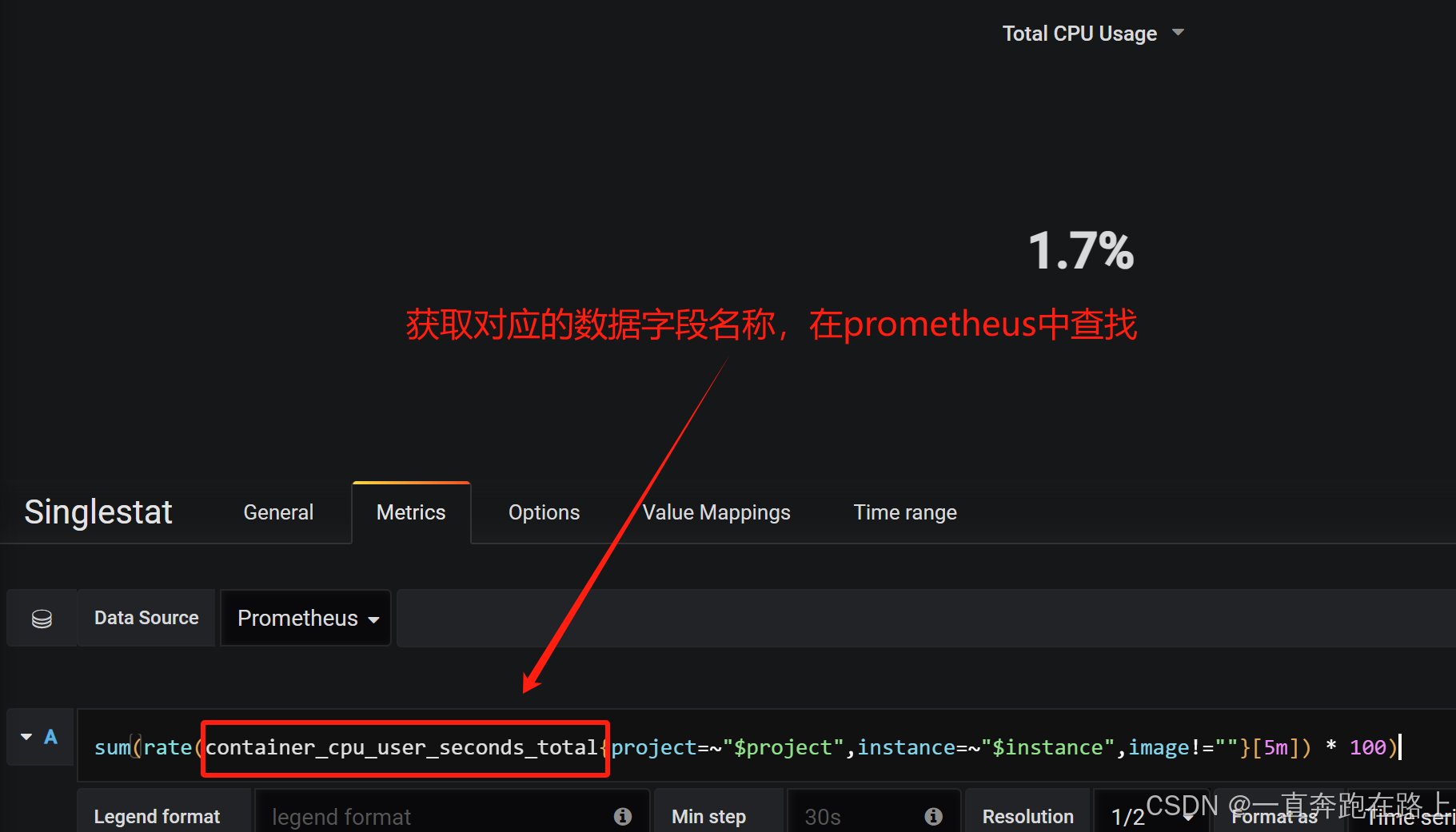

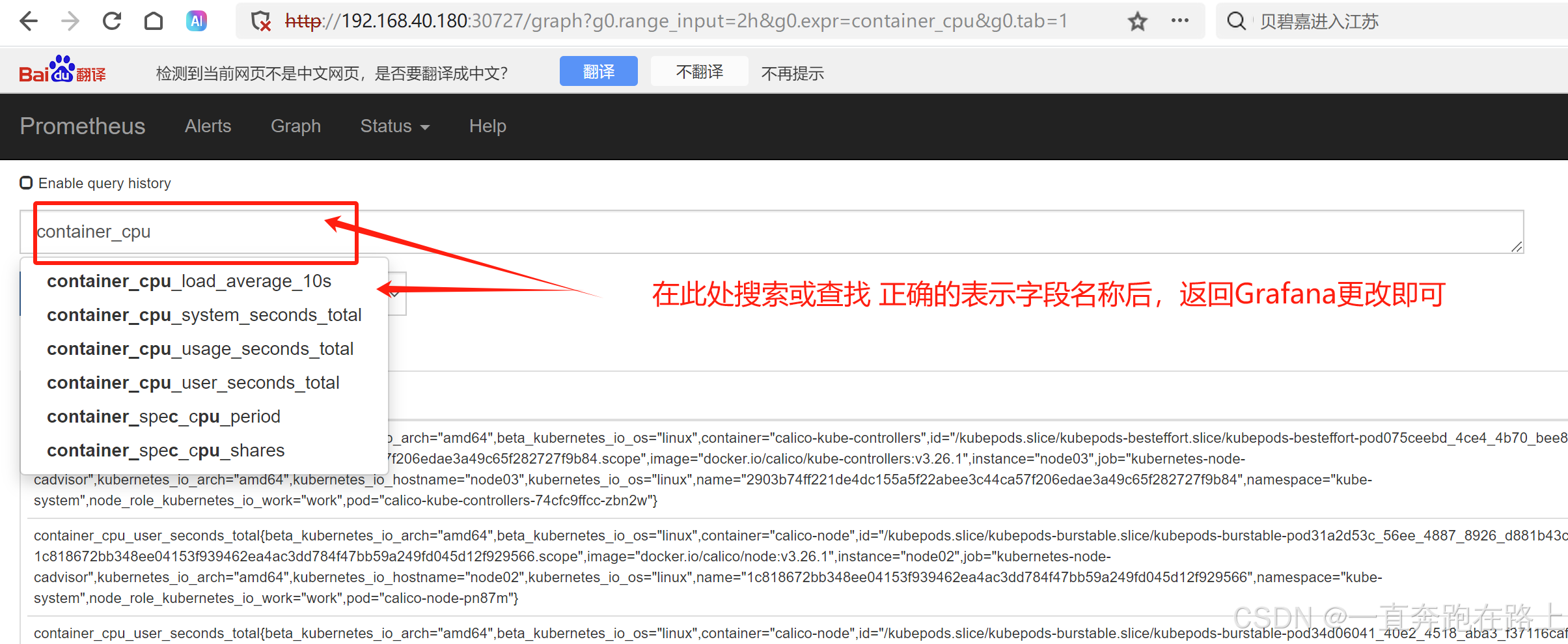

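If a dashboard shows no data after Grafana is connected to Prometheus, how do you troubleshoot it?

(1) On the panel with no data, click Edit and note the metric name it queries.

(2) Go back to Prometheus and search for that metric name to see whether it exists. In most cases the name is simply missing a trailing "s".

Enter the correct metric name in Grafana and the panel loads its data.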
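The lookup in step (2) can also be done from a terminal. As a rough sketch, the hypothetical helper below takes a list of metric names on standard input and prints those that contain the name used by the panel; the sample list is invented, although the names follow node-exporter's naming.

```shell
# suggest_metric: print known metric names containing the given text.
# stdin: one metric name per line; $1: the name the panel queries.
suggest_metric() {
  grep -F -- "$1"
}

# Invented list of names known to Prometheus.
suggest_metric node_cpu <<'EOF'
node_cpu_seconds_total
node_memory_MemFree_bytes
node_load1
EOF

# Against a live server, the names can come from the HTTP API (not executed here;
# the output is split roughly on commas, so quotes remain around each name):
#   curl -s http://192.168.40.180:30727/api/v1/label/__name__/values | tr ',' '\n' | suggest_metric node_cpu
```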

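4. Installing kube-state-metrics

kube-state-metrics watches the API Server and generates state metrics about resource objects such as Nodes and Pods.

Note that kube-state-metrics only exposes these metrics and does not store them, so Prometheus is used to scrape and store them. The focus is on workload-related metadata such as Pod replica state: how many replicas were scheduled, how many are available now, how many times a Pod has restarted, and how many Jobs are running.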
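As an illustration of the "how many times has a Pod restarted" question, the sketch below reads kube-state-metrics text output and lists the pods whose kube_pod_container_status_restarts_total is above zero. The helper name and the sample lines are invented.

```shell
# restarted_pods: print "<pod> <restarts>" for containers that have restarted.
# stdin: kube-state-metrics text exposition.
restarted_pods() {
  awk 'index($1, "kube_pod_container_status_restarts_total{") == 1 && $2 > 0 {
         if (match($1, /pod="[^"]*"/)) print substr($1, RSTART + 5, RLENGTH - 6), $2
       }'
}

# Invented sample lines.
restarted_pods <<'EOF'
kube_pod_container_status_restarts_total{namespace="default",pod="web-1",container="nginx"} 3
kube_pod_container_status_restarts_total{namespace="default",pod="web-2",container="nginx"} 0
EOF
```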

4-1 Create the service account and authorize it

Create and authorize it from a YAML file:

# vim kube-state-metrics-rbac.yaml

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: kube-state-metrics

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: kube-state-metrics

rules:

- apiGroups: [""]

resources: ["nodes", "pods", "services", "resourcequotas", "replicationcontrollers", "limitranges", "persistentvolumeclaims", "persistentvolumes", "namespaces", "endpoints"]

verbs: ["list", "watch"]

- apiGroups: ["extensions"]

resources: ["daemonsets", "deployments", "replicasets"]

verbs: ["list", "watch"]

- apiGroups: ["apps"]

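resources: ["statefulsets", "daemonsets", "deployments", "replicasets"] # on current clusters these workloads are served from the apps API group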

verbs: ["list", "watch"]

- apiGroups: ["batch"]

resources: ["cronjobs", "jobs"]

verbs: ["list", "watch"]

- apiGroups: ["autoscaling"]

resources: ["horizontalpodautoscalers"]

verbs: ["list", "watch"]

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: kube-state-metrics

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: kube-state-metrics

subjects:

- kind: ServiceAccount

name: kube-state-metrics

namespace: kube-system

4-2 Install kube-state-metrics

On the worker node, import the required image file: kube-state-metrics_1_9_0.tar.gz

ctr -n=k8s.io images import kube-state-metrics_1_9_0.tar.gz

# cat kube-state-metrics-deploy.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: kube-state-metrics

namespace: kube-system # target namespace

spec:

replicas: 1

selector:

matchLabels:

app: kube-state-metrics

template:

metadata:

labels:

app: kube-state-metrics

spec:

serviceAccountName: kube-state-metrics

containers:

- name: kube-state-metrics

image: quay.io/coreos/kube-state-metrics:v1.9.0

imagePullPolicy: IfNotPresent

ports:

- containerPort: 8080

Check that kube-state-metrics was deployed successfully:

kubectl get pods -n kube-system -l app=kube-state-metrics

4-3 Create the Service

cat kube-state-metrics-svc.yaml

apiVersion: v1

kind: Service

metadata:

annotations:

prometheus.io/scrape: 'true'

name: kube-state-metrics

namespace: kube-system

labels:

app: kube-state-metrics

spec:

ports:

- name: kube-state-metrics

port: 8080

protocol: TCP

selector:

app: kube-state-metrics

Check that the Service was created:

kubectl get svc -n kube-system | grep kube-state-metrics

Created successfully.

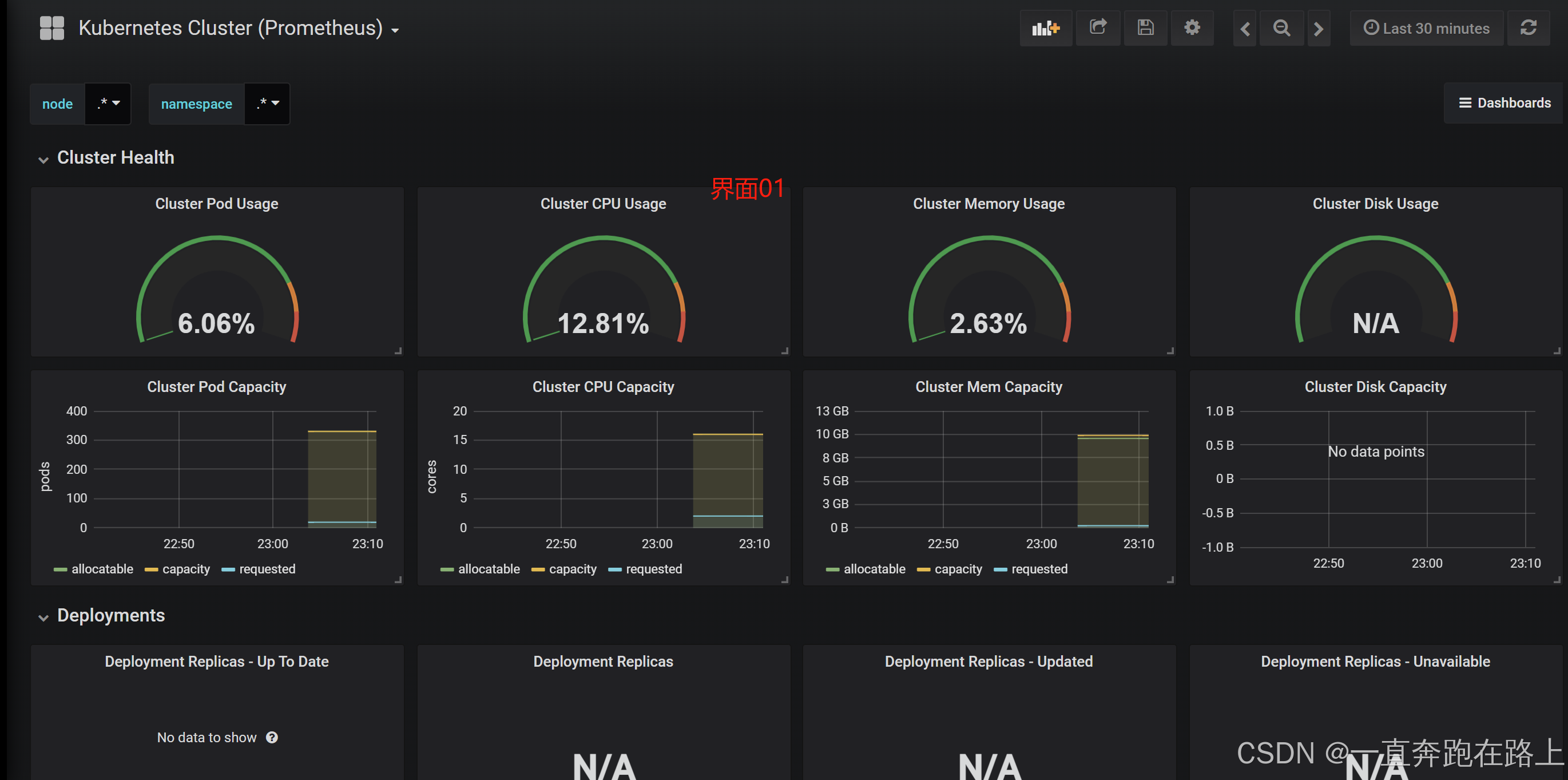

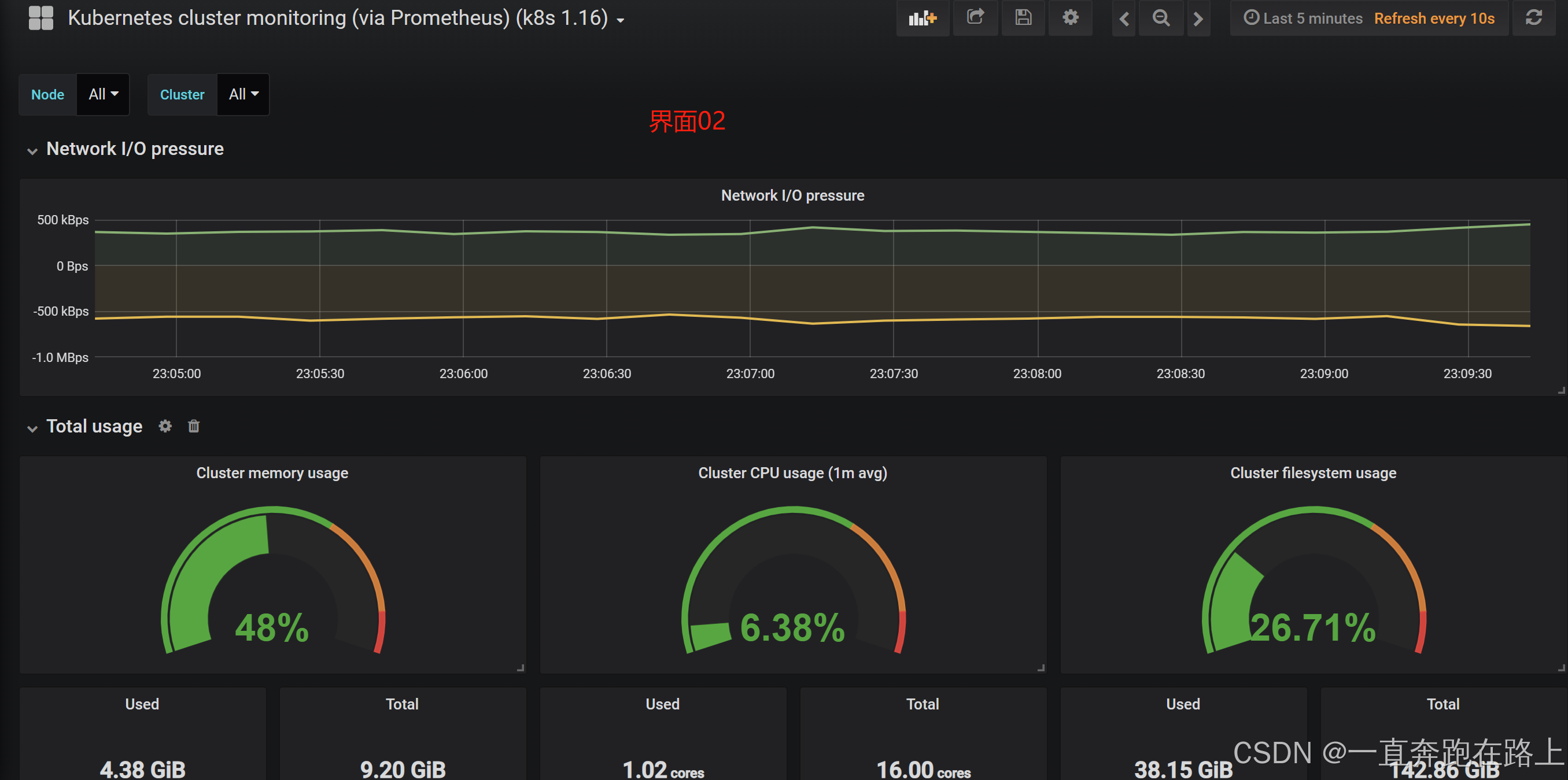

4-4 Import dashboard templates

In the Grafana web UI, import

Kubernetes Cluster (Prometheus)-1577674936972.json and

Kubernetes cluster monitoring (via Prometheus) (k8s 1.16)-1577691996738.json.

Grafana is now fully installed (download and import further templates as needed).

III. Alertmanager

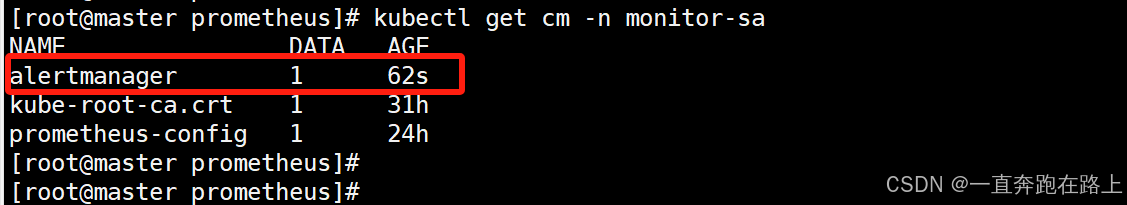

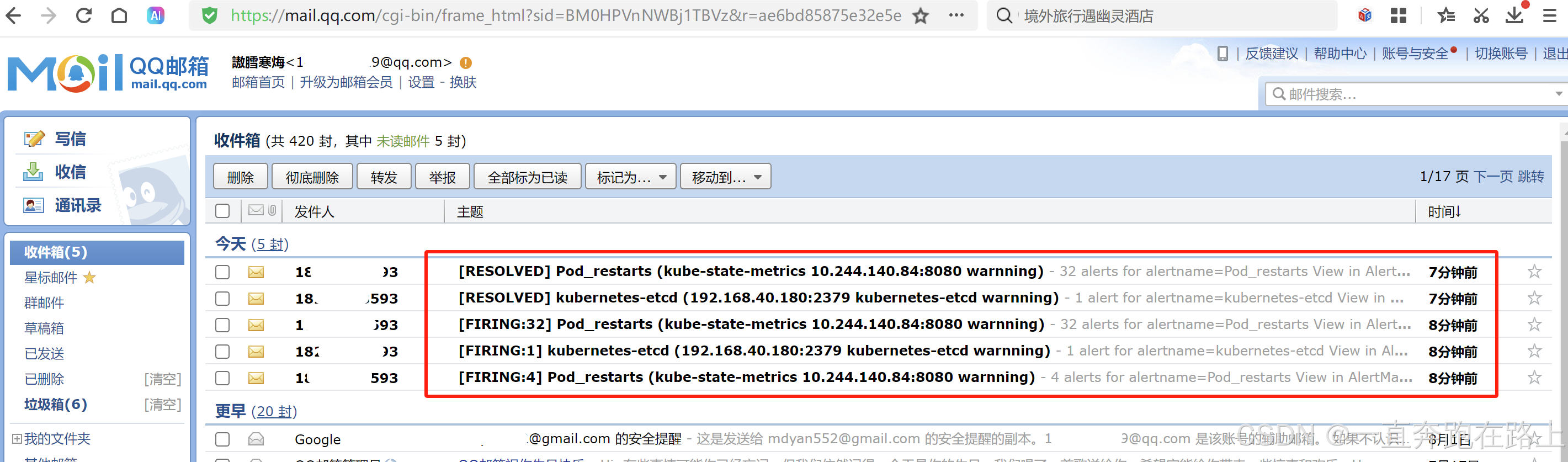

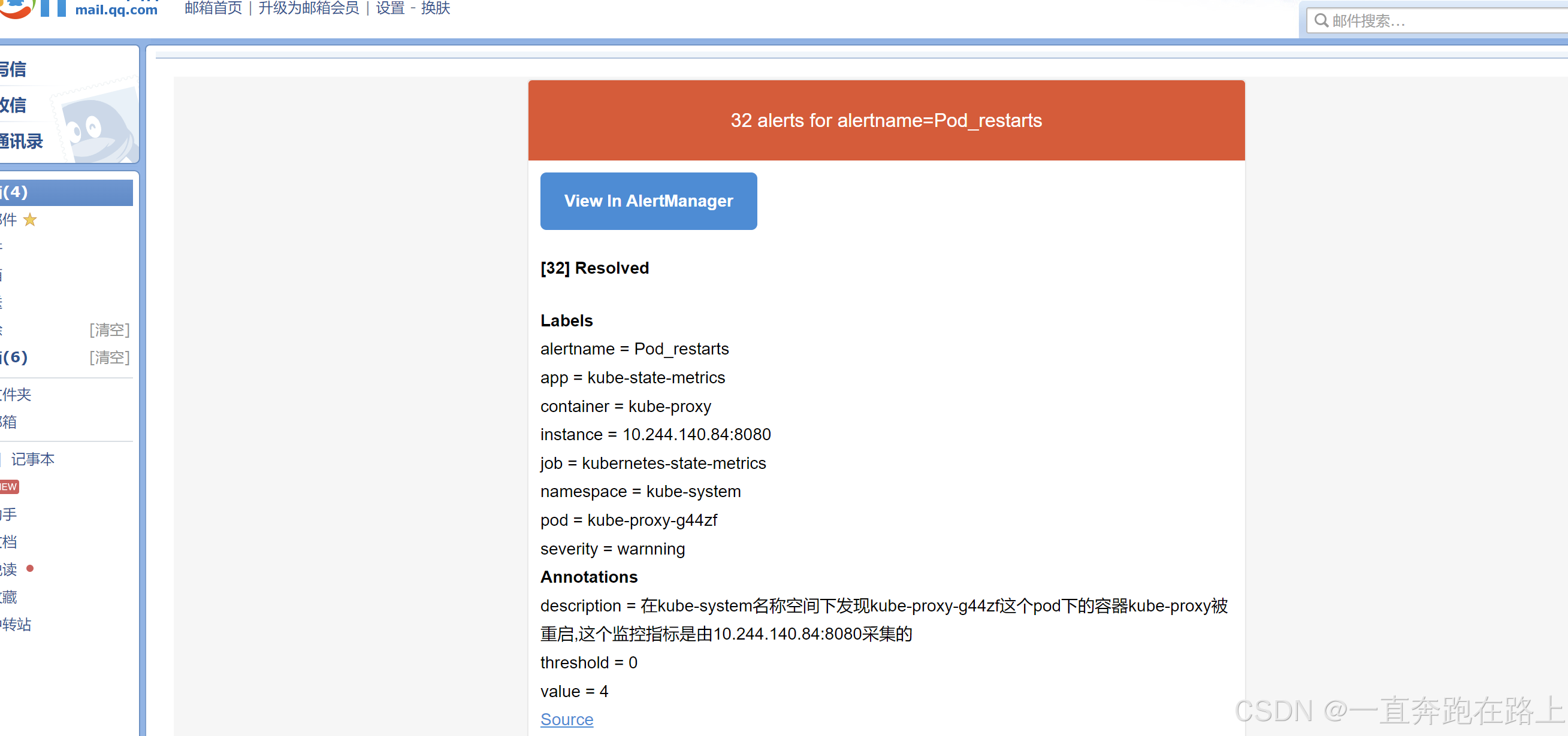

1. Create a ConfigMap holding the mail account used to send alerts by email

# vim alertmanager-cm.yaml

kind: ConfigMap

apiVersion: v1

metadata:

name: alertmanager

namespace: monitor-sa

data:

alertmanager.yml: |- # remember to remove the "-"

global:

resolve_timeout: 1m

smtp_smarthost: 'smtp.163.com:25'

smtp_from: '[email protected]' # your own 163 mailbox, or another provider

smtp_auth_username: '[email protected]' # your own 163 mailbox, or another provider

smtp_auth_password: 'xxexx8ZpccxxMc' # the authorization code for your 163 mailbox

smtp_require_tls: false

route:

group_by: [alertname]

group_wait: 10s

group_interval: 10s

repeat_interval: 10m

receiver: default-receiver # must match the name below

receivers:

- name: 'default-receiver' # must match the name above

email_configs:

- to: '[email protected]' # the mailbox where you want to receive alerts

send_resolved: true

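2. Create the Prometheus and alerting-rule configuration file

Download the resource bundle: Alertmanager.zip

# vim prometheus-alertmanager-cfg.yaml

# The configuration is long; take the file from the downloaded bundle

# Create the configuration: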

kubectl apply -f prometheus-alertmanager-cfg.yaml

3. Install Prometheus and Alertmanager

Import the image package: alertmanager.tar.gz

Create the etcd-certs Secret, which the Prometheus deployment needs:

kubectl -n monitor-sa create secret generic etcd-certs --from-file=/etc/kubernetes/pki/etcd/server.key --from-file=/etc/kubernetes/pki/etcd/server.crt --from-file=/etc/kubernetes/pki/etcd/ca.crt

ctr -n=k8s.io images import alertmanager.tar.gz

Create the pod that runs Prometheus together with Alertmanager:

# vim prometheus-alertmanager-deploy.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: prometheus-server

namespace: monitor-sa

labels:

app: prometheus

spec:

replicas: 1

selector:

matchLabels:

app: prometheus

component: server

#matchExpressions:

#- {key: app, operator: In, values: [prometheus]}

#- {key: component, operator: In, values: [server]}

template:

metadata:

labels:

app: prometheus

component: server

annotations:

prometheus.io/scrape: 'false'

spec:

nodeName: node03 # set this to the name of your own node

serviceAccountName: monitor

containers:

- name: prometheus

image: prom/prometheus:v2.2.1

imagePullPolicy: IfNotPresent

command:

- "/bin/prometheus"

args:

- "--config.file=/etc/prometheus/prometheus.yml"

- "--storage.tsdb.path=/prometheus"

- "--storage.tsdb.retention=24h"

- "--web.enable-lifecycle"

ports:

- containerPort: 9090

protocol: TCP

volumeMounts:

- mountPath: /etc/prometheus

name: prometheus-config

- mountPath: /prometheus/

name: prometheus-storage-volume

- name: k8s-certs

mountPath: /var/run/secrets/kubernetes.io/k8s-certs/etcd/

- name: alertmanager

image: prom/alertmanager:v0.14.0

imagePullPolicy: IfNotPresent

args:

- "--config.file=/etc/alertmanager/alertmanager.yml"

- "--log.level=debug"

ports:

- containerPort: 9093

protocol: TCP

name: alertmanager

volumeMounts:

- name: alertmanager-config

mountPath: /etc/alertmanager

- name: alertmanager-storage

mountPath: /alertmanager

- name: localtime

mountPath: /etc/localtime

volumes:

- name: prometheus-config

configMap:

name: prometheus-config

- name: prometheus-storage-volume

hostPath:

path: /data

type: Directory

- name: k8s-certs

secret:

secretName: etcd-certs

- name: alertmanager-config

configMap:

name: alertmanager

- name: alertmanager-storage

hostPath:

path: /data/alertmanager

type: DirectoryOrCreate

- name: localtime

hostPath:

path: /usr/share/zoneinfo/Asia/Shanghai

kubectl apply -f prometheus-alertmanager-deploy.yaml

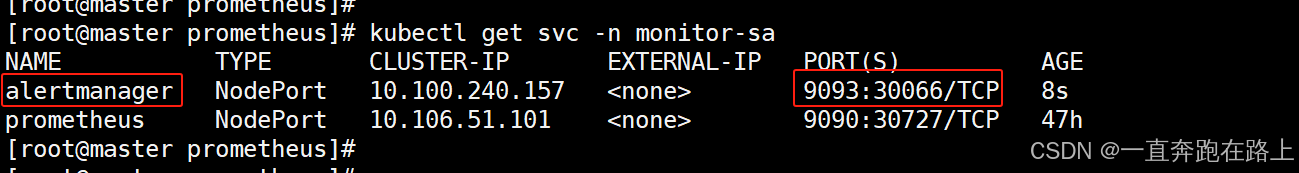

4. Deploy the Alertmanager Service and access it

# vim alertmanager-svc.yaml

apiVersion: v1

kind: Service

metadata:

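name: alertmanager

namespace: monitor-sa

labels:

app: prometheus

spec:

type: NodePort

ports:

- port: 9093 # the Alertmanager container port from the Deployment above

targetPort: 9093

nodePort: 30066 # the port used to open the Alertmanager UI in the browser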

protocol: TCP

selector:

app: prometheus

component: server

kubectl apply -f alertmanager-svc.yaml

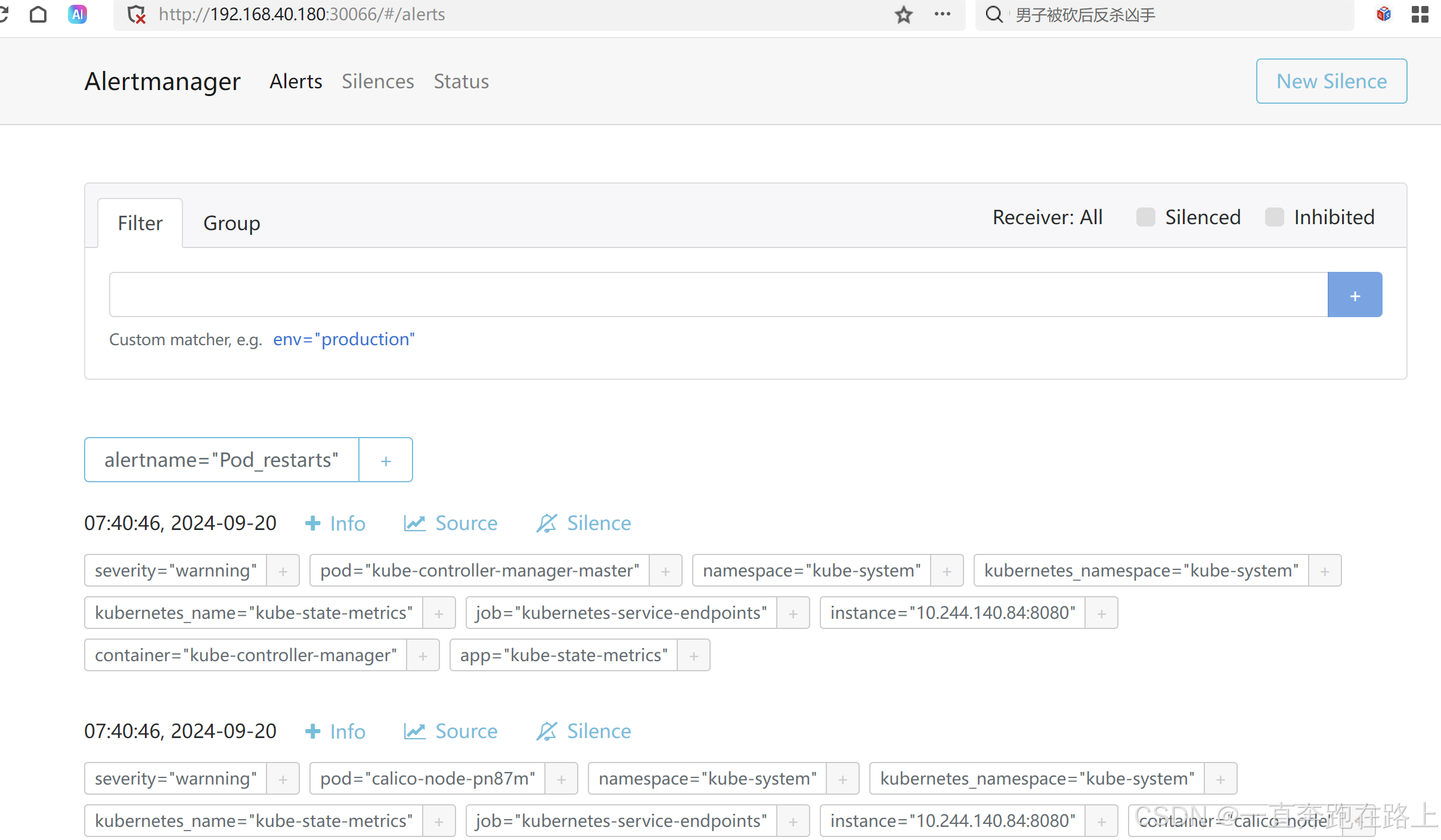

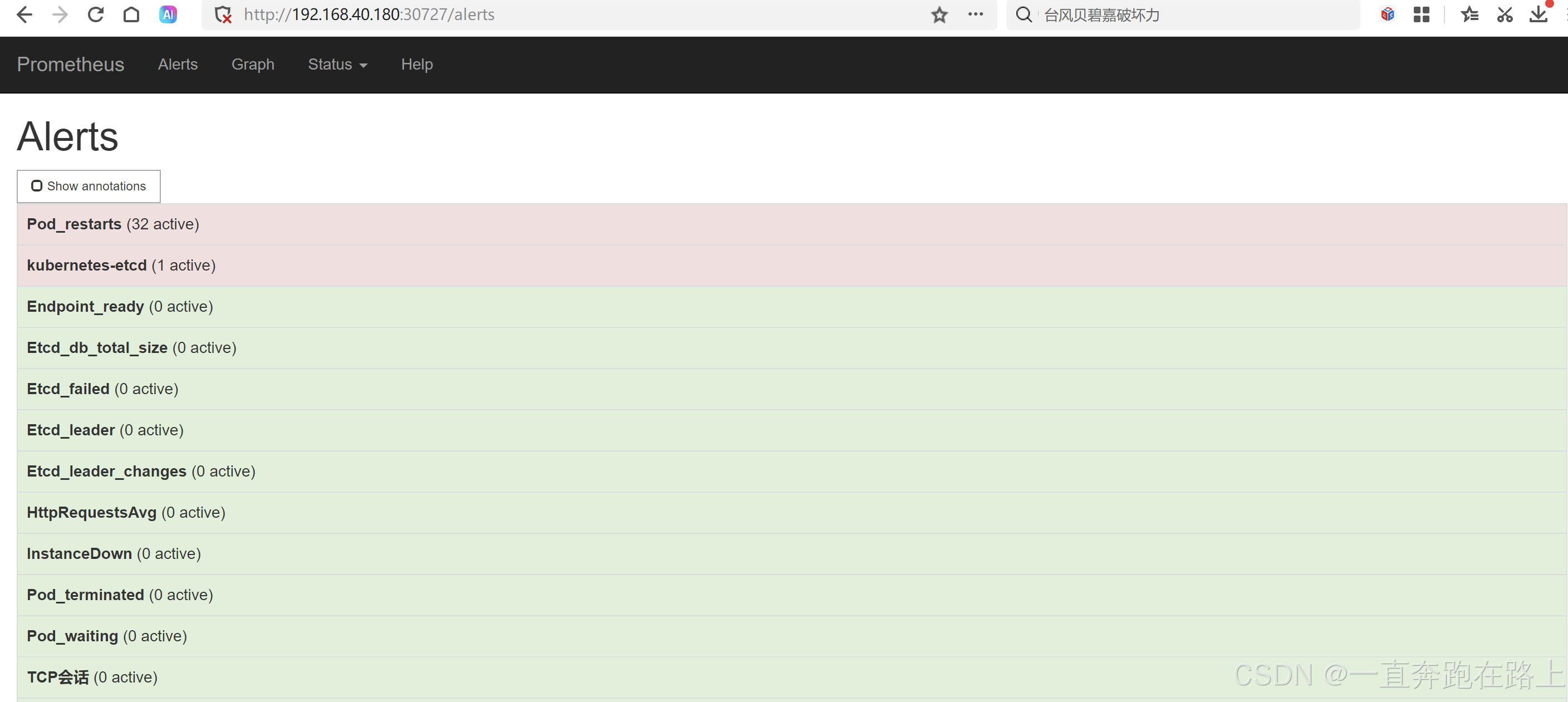

Using the port exposed by the Service, open it in a browser: 192.168.40.180:30066

5. Send alerts to DingTalk

5-1 Get the DingTalk robot's keyword and webhook

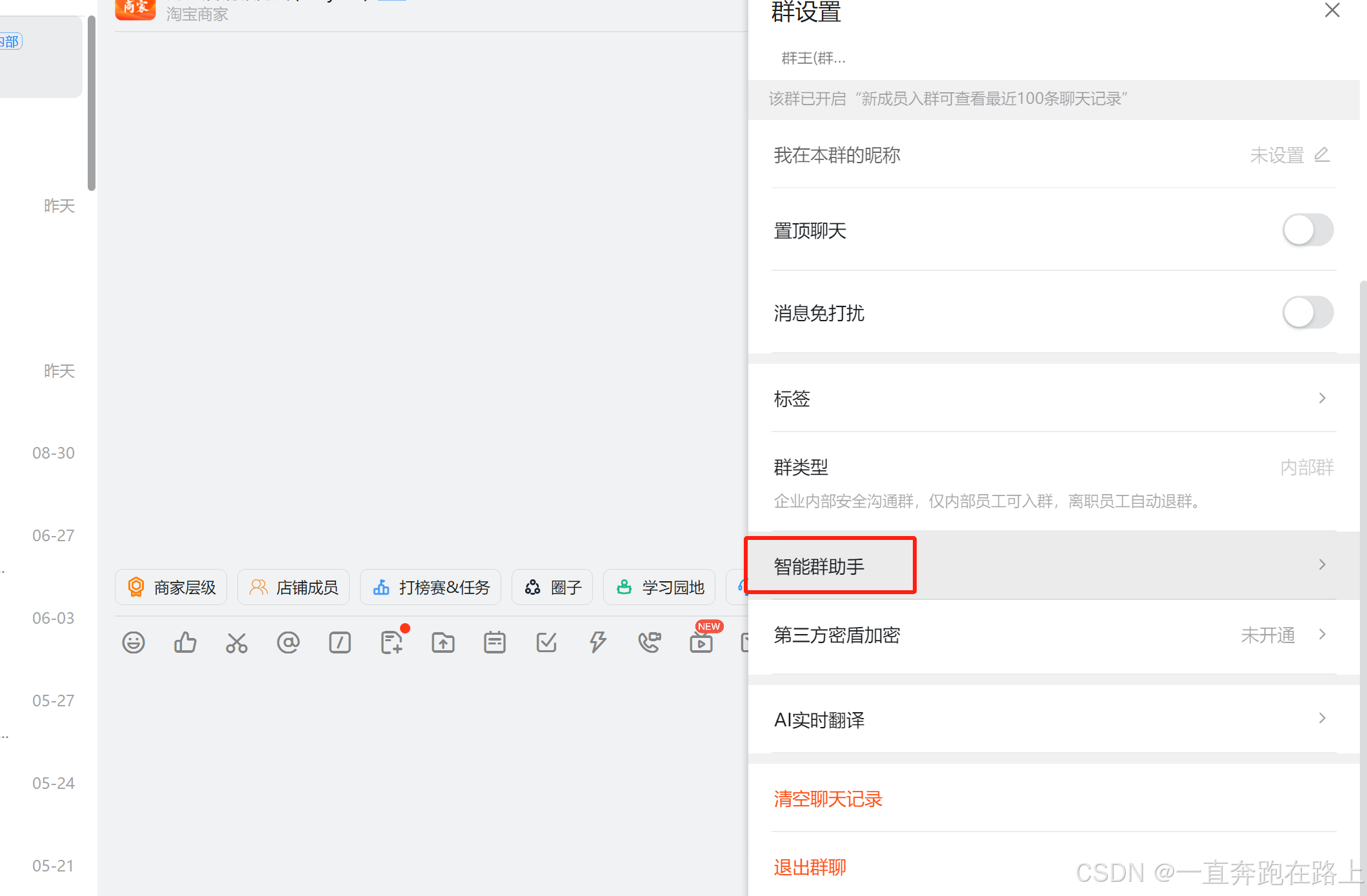

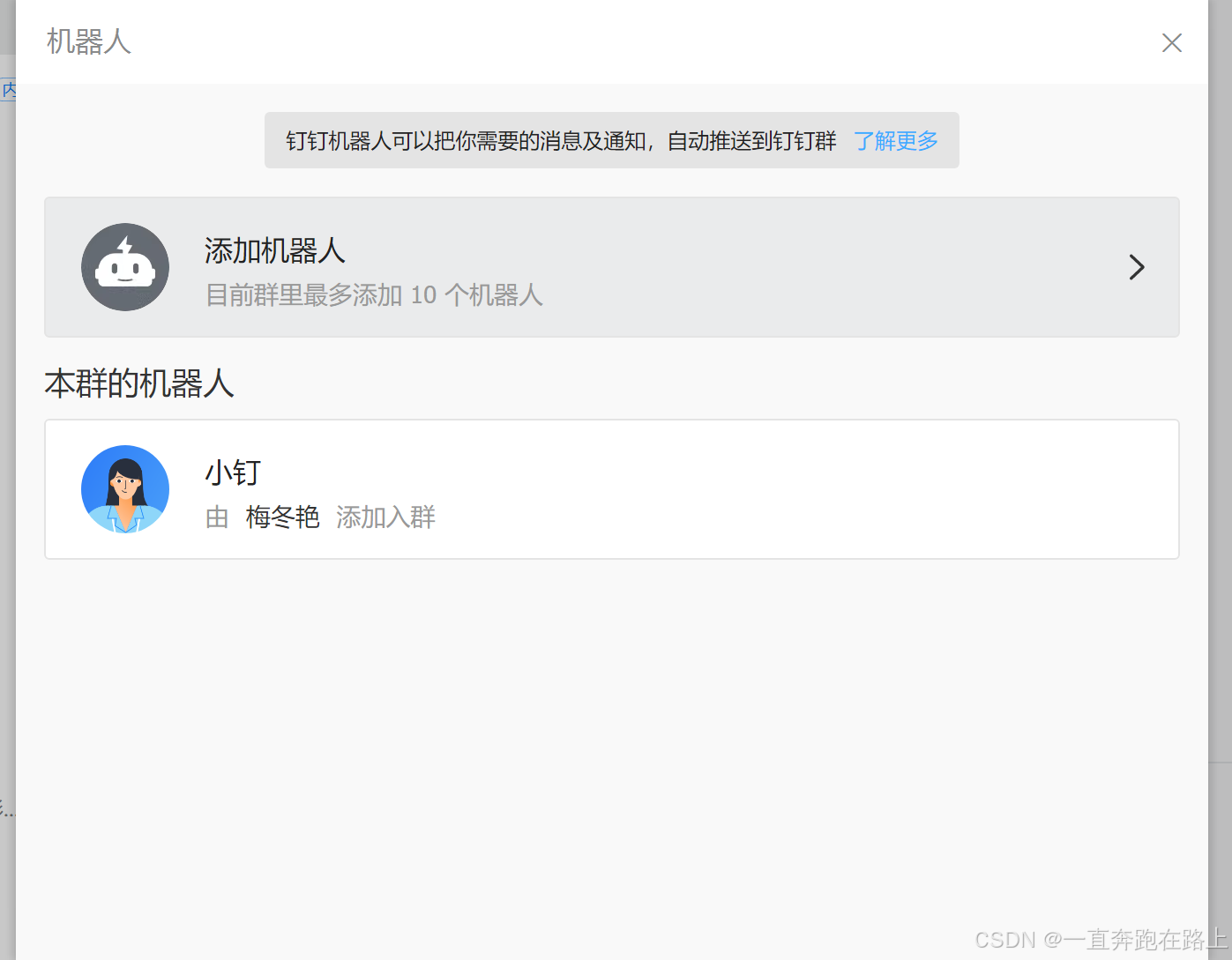

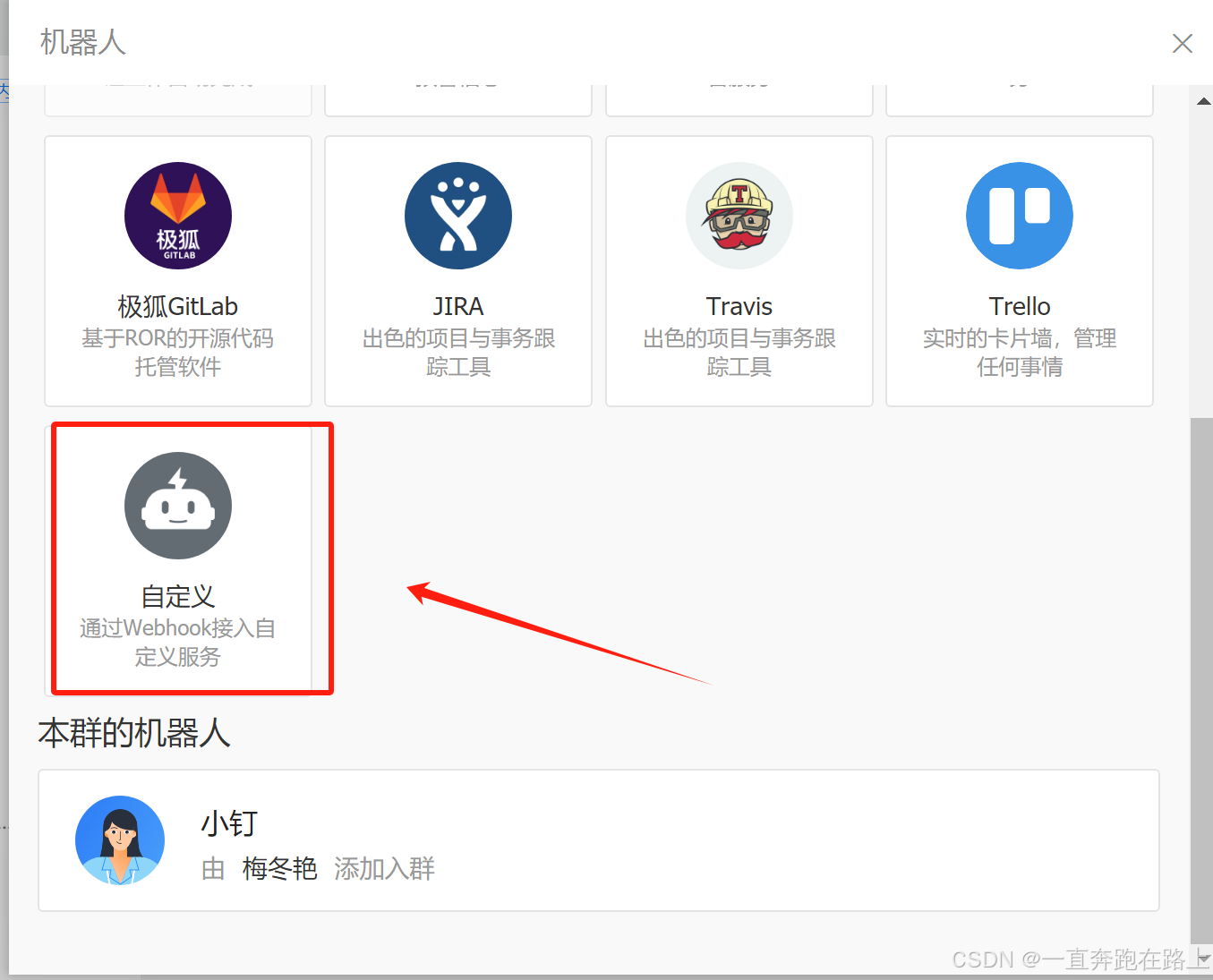

In DingTalk, create a group --> Settings --> Smart Group Assistant --> Add Robot --> Custom Robot --> (save the keyword)

[Obtained]:

Custom keyword: k8s

Webhook address: https://oapi.dingtalk.com/robot/send?access_token=0s32222sfds69e47733sffsdfd084f6a2cc0fasdfsfrwerq816f3e1a1fbrrrref

Official documentation: https://open.dingtalk.com/document/robots/custom-robot-access

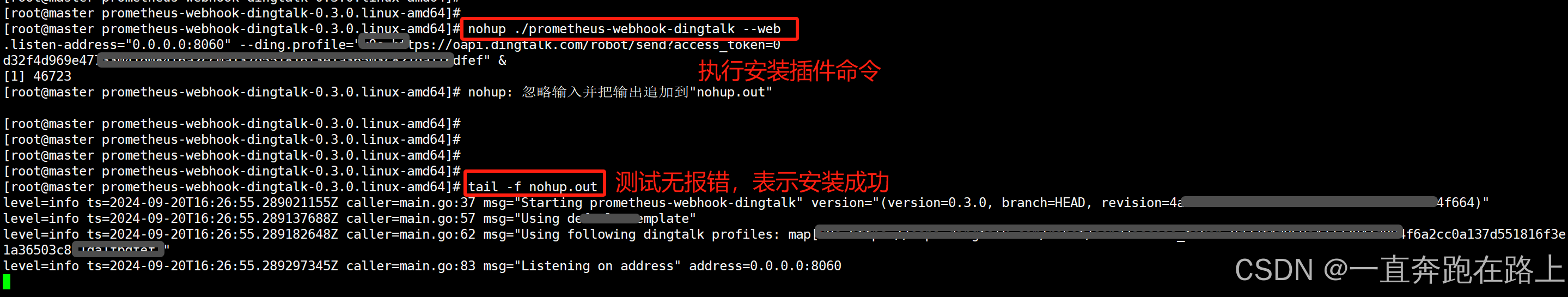

5-2 Install the DingTalk webhook plugin (run on the master node)

Import the DingTalk plugin: prometheus-webhook-dingtalk-0.3.0.linux-amd64.tar.gz

Extract it on the master node:

# Extract

tar -zxvf prometheus-webhook-dingtalk-0.3.0.linux-amd64.tar.gz

# Enter the directory

cd prometheus-webhook-dingtalk-0.3.0.linux-amd64

Start the DingTalk plugin:

nohup ./prometheus-webhook-dingtalk --web.listen-address="0.0.0.0:8060" --ding.profile="k8s=https://oapi.dingtalk.com/robot/send?access_token=0s32222sfds69e47733sffsdfd084f6a2cc0fasdfsfrwerq816f3e1a1fbrrrref" &

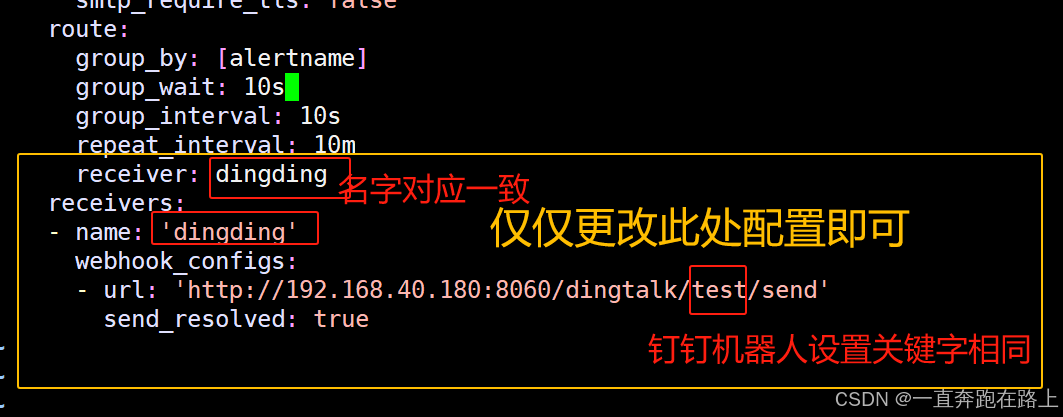

5-3 Modify the alertmanager-cm.yaml configuration file

The changed parts are marked with comments.

# vim alertmanager-cm.yaml

kind: ConfigMap

apiVersion: v1

metadata:

name: alertmanager

namespace: monitor-sa

data:

alertmanager.yml: |- # remember to remove the "-"

global:

resolve_timeout: 1m

smtp_smarthost: 'smtp.163.com:25'

smtp_from: '[email protected]' # your own 163 mailbox, or another provider

smtp_auth_username: '[email protected]' # your own 163 mailbox, or another provider

smtp_auth_password: 'xxexx8ZpccxxMc' # the authorization code for your 163 mailbox

smtp_require_tls: false

route:

group_by: [alertname]

group_wait: 10s

group_interval: 10s

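repeat_interval: 10m # interval before a still-firing alert is sent again

receiver: dingding # must match the name below (preferably set to the keyword)

receivers:

- name: 'dingding' # must match the name above (preferably set to the keyword)

webhook_configs:

- url: 'http://192.168.40.180:8060/dingtalk/k8s/send'

# http://<IP of the machine running the dingtalk plugin>:8060/dingtalk/<keyword>/send

send_resolved: true

Configuration complete.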
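The webhook URL in the receiver has to line up with how the plugin was started: the host and port come from --web.listen-address, and the path segment after /dingtalk/ is the profile name given in --ding.profile (k8s in this setup). The hypothetical helper below just assembles the URL from those parts so the two places can be compared.

```shell
# dingtalk_url: build the Alertmanager webhook URL for the DingTalk plugin.
# usage: dingtalk_url <plugin-host-ip> <profile-name>
dingtalk_url() {
  printf 'http://%s:8060/dingtalk/%s/send\n' "$1" "$2"
}

dingtalk_url 192.168.40.180 k8s

# To confirm that the plugin is listening on the master (not executed here):
#   ss -lnt | grep 8060
```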

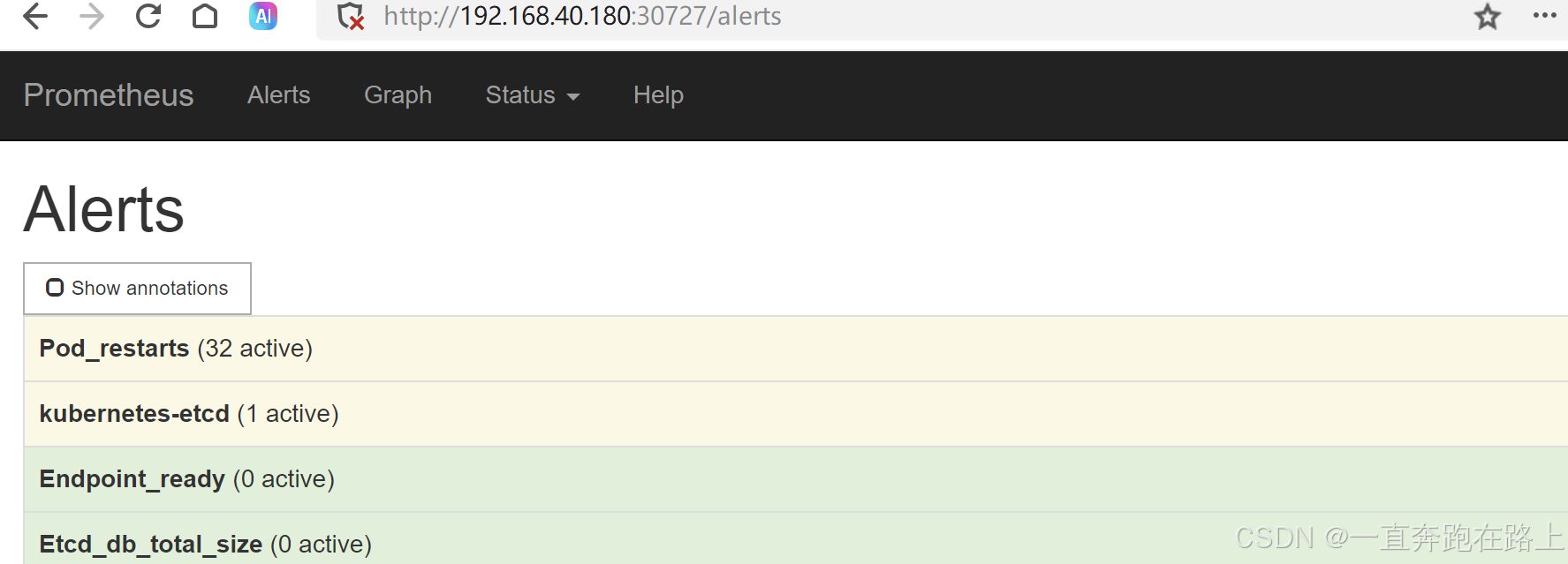

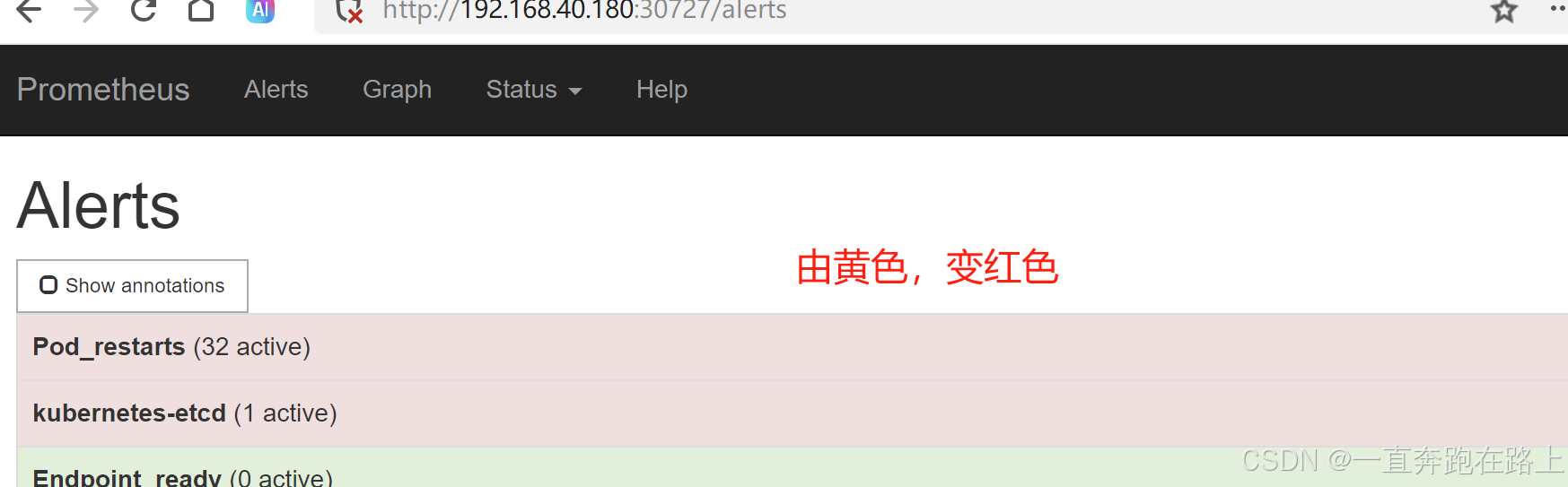

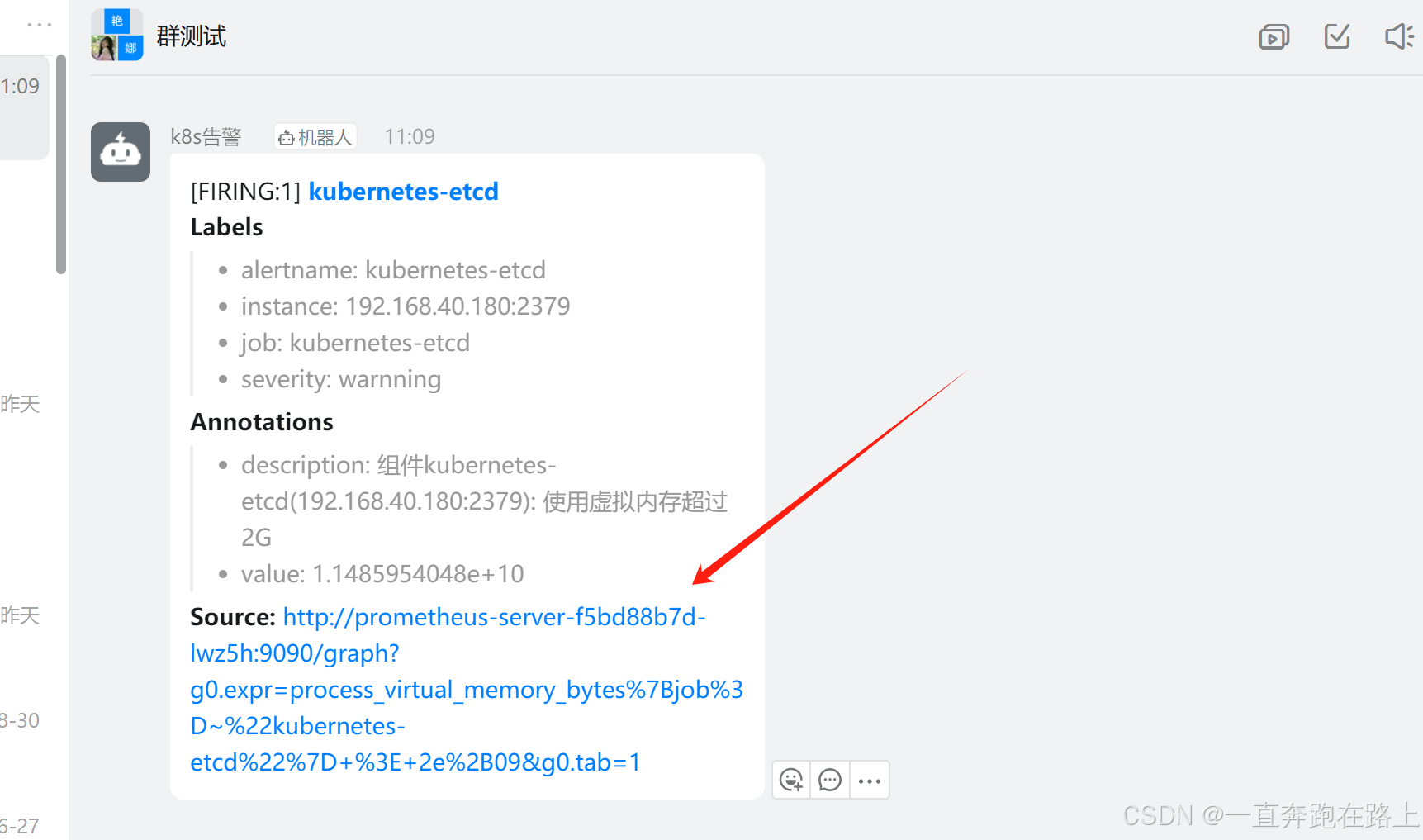

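5-4 Test

When the alert's color changes from yellow to red, the alert has been sent.

The DingTalk group assistant receives the alert:

6. Send alerts to WeChat (WeCom)

6-1 Create a WeCom account and get the AgentId and Secret

Register for WeCom and sign in at https://work.weixin.qq.com/

Go to App Management --> Create App --> name the app k8s-test

AgentId: 1000001

Secret: KkbdSWq_HkolsOj6dM3Jg98aMu1TTaDzVTCrXHcigRE

corp_id: wta82dsf90gd3a810

6-2 Modify the alertmanager-cm.yaml configuration file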

# vim alertmanager-cm.yaml

kind: ConfigMap

apiVersion: v1

metadata:

name: alertmanager

namespace: monitor-sa

data:

alertmanager.yml: |- # remember to remove the "-"

global:

resolve_timeout: 1m

smtp_smarthost: 'smtp.163.com:25'

smtp_from: '[email protected]' # your own 163 mailbox, or another provider

smtp_auth_username: '[email protected]' # your own 163 mailbox, or another provider

smtp_auth_password: 'xxexx8ZpccxxMc' # the authorization code for your 163 mailbox

smtp_require_tls: false

route:

group_by: [alertname]

group_wait: 10s

group_interval: 10s

repeat_interval: 10m # interval before a still-firing alert is sent again

receiver: weixin # must match the receiver name below

receivers:

- name: 'weixin' # must match the receiver name above

wechat_configs:

- corp_id: wta82dsf90gd3a810 # your actual corp_id

to_user: '@all' # send to everyone

agent_id: 1000001 # your actual AgentId

api_secret: KkbdSWq_HkolsOj6dM3Jg98aMu1TTaDzVTCrXHcigRE # your actual Secret

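Parameter notes:

api_secret: WeCom ("Applications" --> your custom app --> "Secret")

corp_id: company information ("My Company" --> "CorpID", at the bottom of the page)

agent_id: WeCom ("Applications" --> your custom app --> "AgentId")

weixin is the receiver name chosen here; whatever name you use, the receiver under route must match the name under receivers

to_user: '@all' sends the alert to everyone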
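A mismatch between the route's receiver and the names under receivers is an easy mistake in this file. The sketch below is a rough text-level check, not a YAML parser: it assumes one receiver per route and the simple layout used in this tutorial. `check_receiver` is a hypothetical helper, and the sample file is a cut-down stand-in for alertmanager.yml.

```shell
# check_receiver: verify that route.receiver names a defined receiver.
# $1: path to an alertmanager.yml
check_receiver() {
  want=$(sed -n -E "s/^[[:space:]]*receiver:[[:space:]]*['\"]?([^'\"#[:space:]]+).*/\1/p" "$1" | head -n 1)
  if sed -n -E "s/^[[:space:]]*-[[:space:]]*name:[[:space:]]*['\"]?([^'\"#[:space:]]+).*/\1/p" "$1" | grep -qx -- "$want"; then
    echo "receiver '$want' is defined"
  else
    echo "receiver '$want' is NOT defined"
    return 1
  fi
}

# Cut-down sample configuration.
cfg=$(mktemp)
cat > "$cfg" <<'EOF'
route:
  receiver: weixin
receivers:
- name: 'weixin'
EOF
check_receiver "$cfg"
rm -f "$cfg"
```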

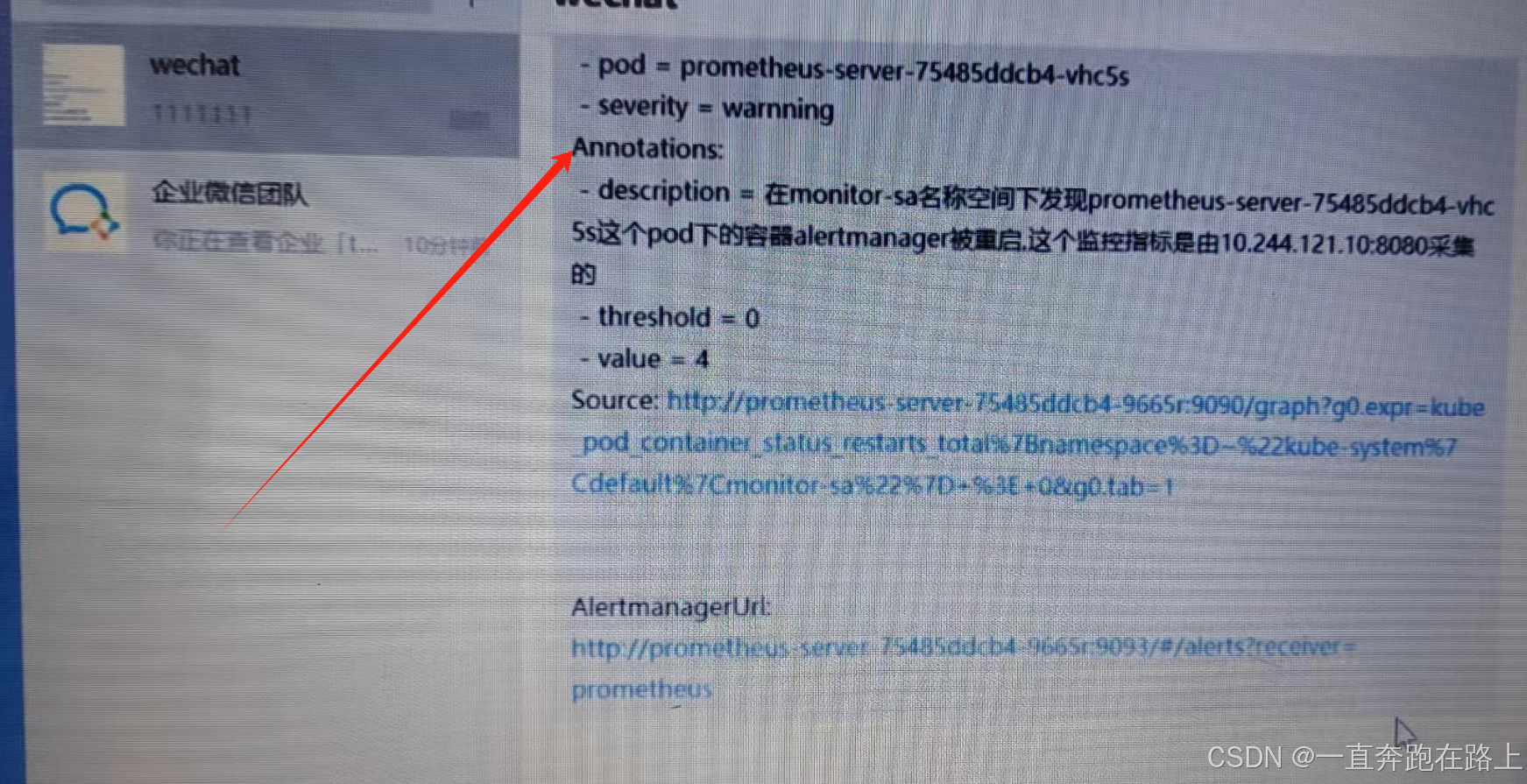

WeCom receives the alert:

WeChat alerting is now configured.