Qt + FFmpeg Audio/Video Player (Part 1)

I. Features

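1. Hardware decoding (QSV/DXVA2/D3D11VA) of MP4 files containing H.265/H.264 streams, plus CPU software decoding of video files.

2. Audio/video synchronization.

3. Previous/next track, pause, stop, and screenshot capture.

4. Volume control, mute, and fast-forward/rewind with the progress slider.

5. Runs on Windows, macOS, and Linux.

6. Windows app download link: https://pan.baidu.com/s/1NnxBr_SvhPA4ioBK0-PN1w?pwd=owgw Extraction code: owgw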

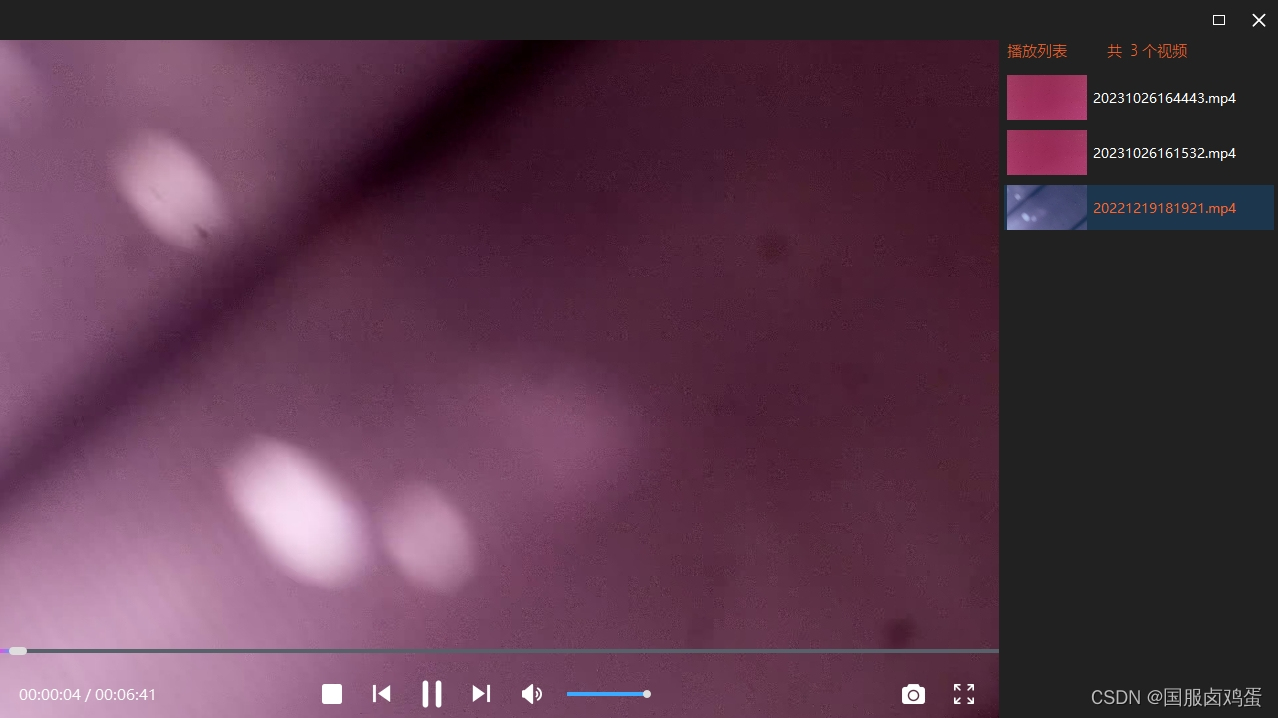

II. Results

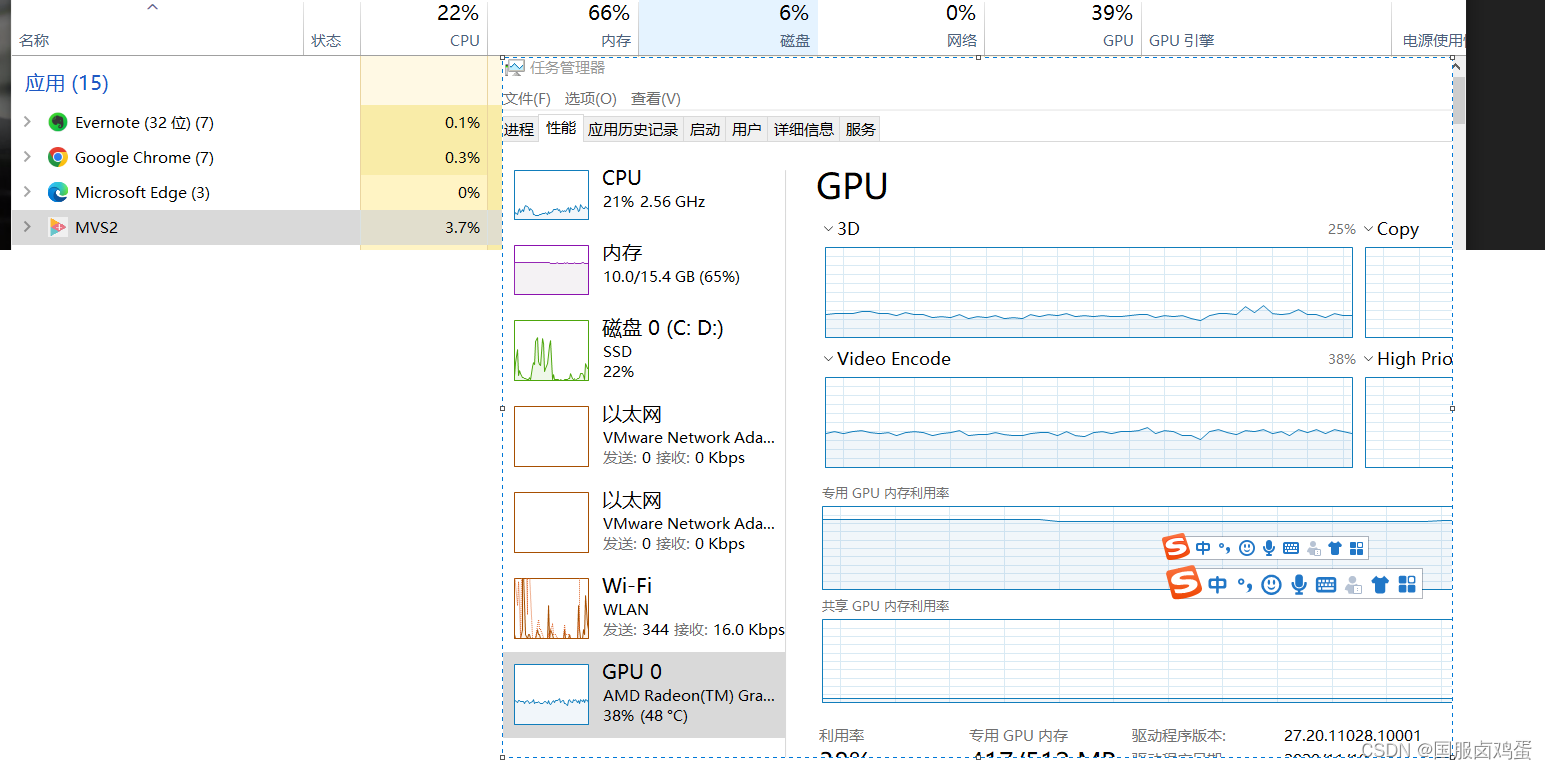

1.硬解码4k h265编码的MP4文件,cpu使用率4%,GPU40%

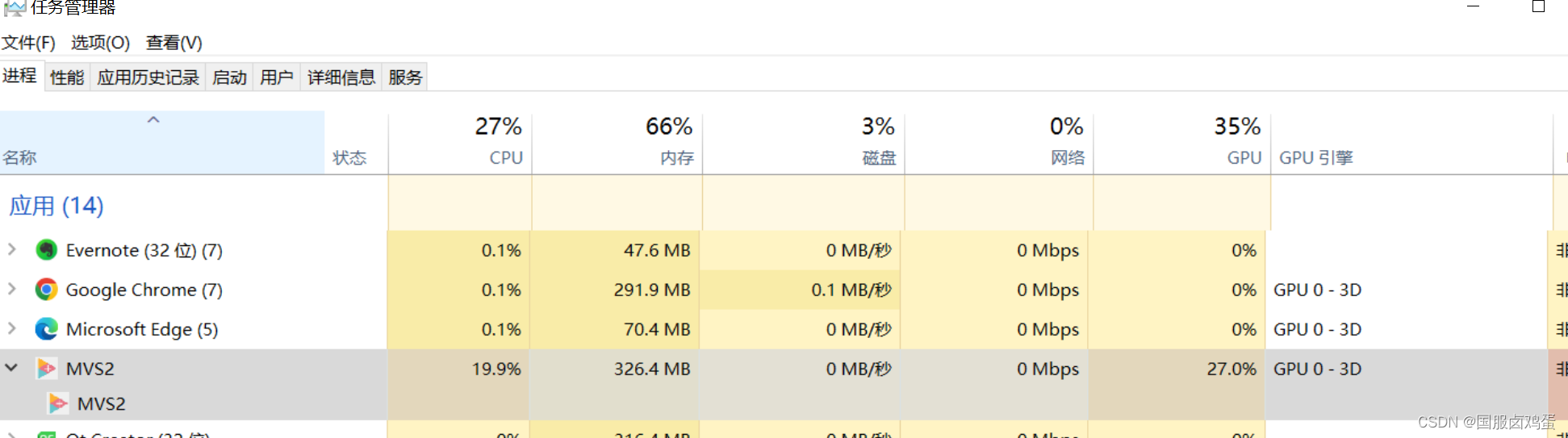

2.软解码4k cpu20% gpu28%

3.电脑配置:

III. Implementation

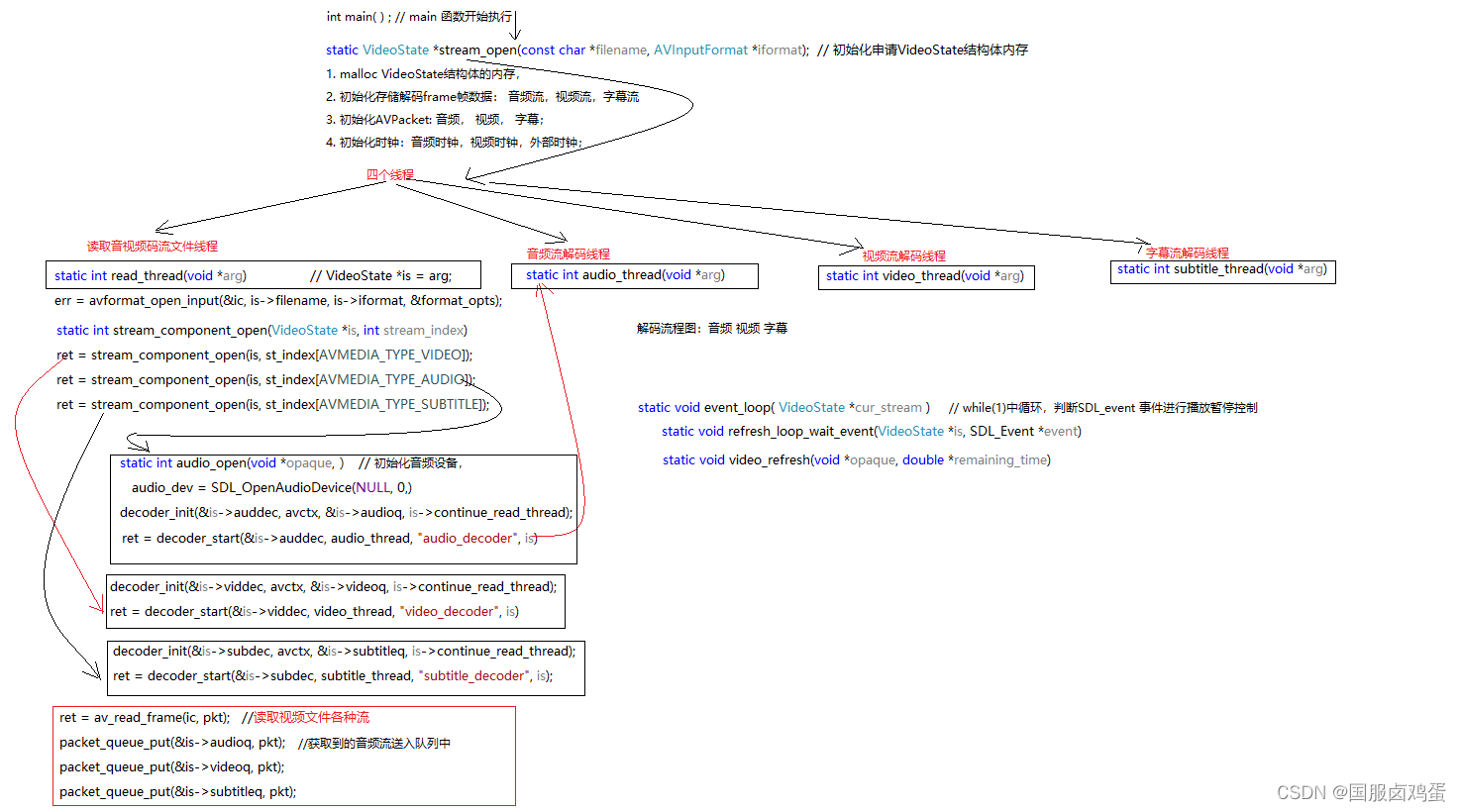

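The player uses a QOpenGLWidget control for display and the FFmpeg libraries for decoding. The design borrows ideas from the ffplay.c player: after opening an MP4 file, ffplay uses four threads, namely a main file-reading thread, a video stream thread, an audio stream thread, and a subtitle stream thread.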

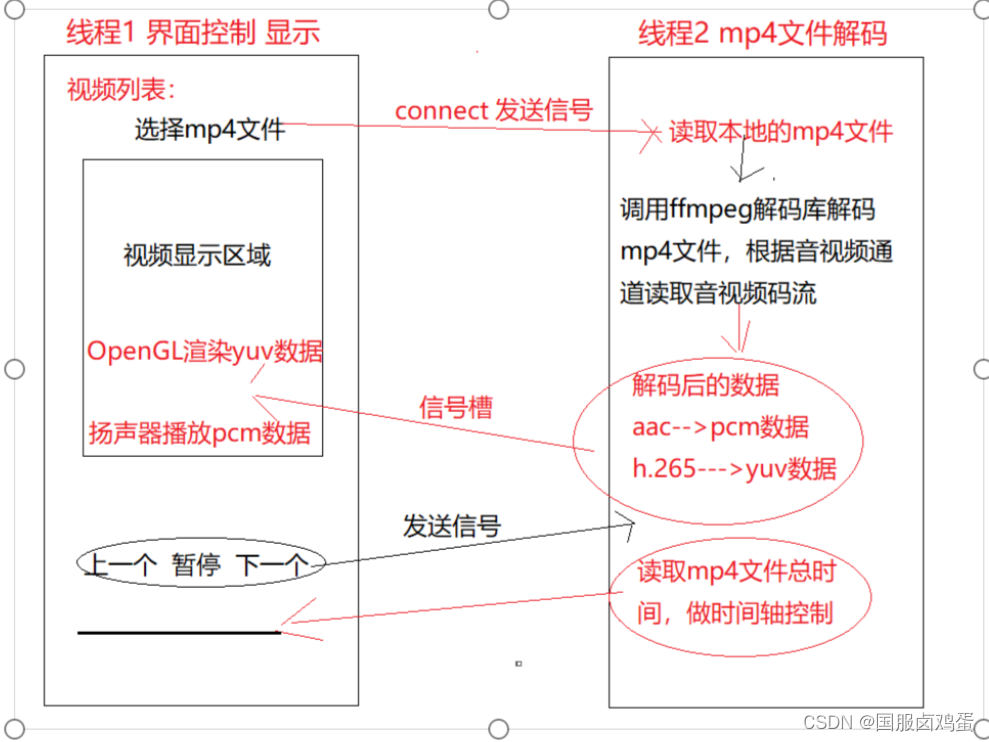

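I use only two threads. The main thread handles UI control and display refresh. The second thread decodes both the video stream and the audio stream (audio data is small and decodes quickly, so I did not start a third thread for it). FFmpeg reads the MP4 file and decodes it to yuv420p/nv12 data, which is then rendered with OpenGL and displayed in the QOpenGLWidget control.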
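The two decoder output formats differ only in how the chroma samples are stored, which decides how many planes the OpenGL side has to upload. The following is a minimal sketch, independent of the author's code, that computes the plane sizes of a tightly packed 8-bit frame; it assumes linesize equals width and that width and height are even, and `PlaneLayout`, `planeLayout` and `totalBytes` are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical helper: byte size of each plane of an 8-bit 4:2:0 frame,
// assuming tightly packed rows (linesize == width) and even width/height.
struct PlaneLayout {
    std::vector<std::size_t> planeBytes; // one entry per plane, in memory order
};

PlaneLayout planeLayout(const std::string &fmt, std::size_t width, std::size_t height)
{
    const std::size_t luma = width * height;               // Y: one byte per pixel
    const std::size_t chroma = (width / 2) * (height / 2); // one U (or V) byte per 2x2 block
    if (fmt == "yuv420p")
        return PlaneLayout{{luma, chroma, chroma}};        // Y plane, U plane, V plane
    if (fmt == "nv12")
        return PlaneLayout{{luma, 2 * chroma}};            // Y plane, interleaved UVUV... plane
    return PlaneLayout{};                                  // other formats are not covered
}

std::size_t totalBytes(const PlaneLayout &layout)
{
    std::size_t sum = 0;
    for (std::size_t bytes : layout.planeBytes)
        sum += bytes;
    return sum;
}
```

For a 3840x2160 frame both formats need 3840 * 2160 * 3 / 2 = 12,441,600 bytes. A common approach is to upload YUV420P as three single-channel textures and NV12 as one single-channel texture plus one two-channel texture, then convert to RGB in the fragment shader.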

IV. Code

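There is too much code to list in full, so here is a partial excerpt. It relies on class members and helpers that are not shown, such as decode_packet, get_format, get_hw_format, hw_decoder_init and HwDecodeVideo.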

```cpp
extern "C" {
#include <libavcodec/avcodec.h>
#include <libavformat/avformat.h>
#include <libavutil/imgutils.h>
#include <libavutil/time.h>
#include <libswscale/swscale.h>
}
#include <QDateTime>
#include <QDebug>
#include <QThread>

int VideoThread::readMp4FileDecode(const char *mp4FilePath)
{
    qint64 TimeStartDecode = 0; // when decoding of the current packet started (ms)
    qint64 TimeEndDecode = 0;   // when decoding of the current packet finished (ms)
    qint64 TimeFrame = 0;       // display time budget of one frame (ms), 0 = no pacing
    double baseFps = 0;         // native frame rate of the video stream
    double fps = 0;             // current playback rate (baseFps * speed factor)
    int ret = 0;
    AVPacket pkt;

    if (mp4FilePath == NULL)
    {
        emit emitMp4FileError(0);
        return -1;
    }

    /* open input file, and allocate format context */
    if (avformat_open_input(&mp4FmtCtx, mp4FilePath, NULL, NULL) < 0)
    {
        emit emitMp4FileError(0);
        return -1;
    }

    /* retrieve stream information; the duration is not reliable before this call */
    if (avformat_find_stream_info(mp4FmtCtx, NULL) < 0)
    {
        avformat_close_input(&mp4FmtCtx);
        emit emitMp4FileError(2);
        return -1;
    }

    // Report the total length of the MP4 file in seconds
    mp4FileIsPlay = true;
    emit emitOpenFileButton(0);
    emit emitGetMp4FileTime(mp4FmtCtx->duration / AV_TIME_BASE);
    emit emitGetPlayStatus(1);
    emit emitGetStopStatus(1);

    if (open_codec_context(&videoIndex, &video_dec_ctx, mp4FmtCtx, AVMEDIA_TYPE_VIDEO) >= 0)
    {
        video_stream = mp4FmtCtx->streams[videoIndex];

        /* allocate image where the decoded image will be put */
        mp4Width = video_dec_ctx->width;
        mp4Height = video_dec_ctx->height;
        mp4PixFmt = video_dec_ctx->pix_fmt;
        ret = av_image_alloc(video_dst_data, video_dst_linesize, mp4Width, mp4Height, mp4PixFmt, 1);
        if (ret < 0)
        {
            emit emitMp4FileError(2);
            return -1;
        }

        // Compute the frame rate in floating point: integer num/den turned 29.97 fps
        // (30000/1001) into 29 and divided by zero when the rate was unknown (0/0)
        baseFps = av_q2d(av_guess_frame_rate(mp4FmtCtx, video_stream, NULL));
        fps = baseFps;
        TimeFrame = fps > 0 ? (qint64)(1000.0 / fps) : 0;
    }

    if (open_codec_context(&audioIndex, &audio_dec_ctx, mp4FmtCtx, AVMEDIA_TYPE_AUDIO) >= 0)
    {
        audio_stream = mp4FmtCtx->streams[audioIndex];
        // Take the format from the decoder context instead of the deprecated AVStream::codec.
        // Example values: sample_rate 16000, sample_fmt 8 (enum value), frame_size 1024, channels 1
        emit emitGetAudioFormat(audio_dec_ctx->sample_rate, audio_dec_ctx->sample_fmt, audio_dec_ctx->channels);
    }

    frame = av_frame_alloc();
    yuvframe = av_frame_alloc();
    if (!frame || !yuvframe)
    {
        qDebug() << "frame yuvframe error";
        emit emitMp4FileError(2);
        return -1;
    }

    // Buffer for the converted picture (av_image_* replaces the removed avpicture_* API)
    uint8_t *out_buffer = (uint8_t *)av_malloc(av_image_get_buffer_size(yuv_pixfmt, mp4Width, mp4Height, 1));
    av_image_fill_arrays(yuvframe->data, yuvframe->linesize, out_buffer, yuv_pixfmt, mp4Width, mp4Height, 1);
    SwsContext *swsContext = sws_getContext(mp4Width, mp4Height, mp4PixFmt,
                                            mp4Width, mp4Height, yuv_pixfmt, SWS_BILINEAR, NULL, NULL, NULL);

    /* initialize packet, set data to NULL, let the demuxer fill it */
    av_init_packet(&pkt);
    pkt.data = NULL;
    pkt.size = 0;

    /* read frames from the file */
    while (1)
    {
        // Despite its name, mp4FileIsPause is true while playing (it is reset to true on exit)
        if (mp4FileIsPause)
        {
            TimeStartDecode = QDateTime::currentMSecsSinceEpoch();
            ret = av_read_frame(mp4FmtCtx, &pkt);
            if (ret < 0) // end of file or read error: stop before decoding a stale packet
            {
                emit emitOpenFileButton(1);
                break;
            }

            /*
             * 1. Seek when the playback progress slider is moved
             * 2. int av_seek_frame(AVFormatContext *s, int stream_index, int64_t timestamp, int flags);
             * 3. 2021/01/19
             */
            if (mp4FileSeek)
            {
                mp4FileSeek = false;
                av_packet_unref(&pkt);
                // posSeek (seconds) -> video stream time_base units. AVSEEK_FLAG_FRAME is
                // dropped because it would make the timestamp a frame number.
                int64_t videoStamp = posSeek / av_q2d(video_stream->time_base);
                // One seek repositions the whole demuxer, so the audio stream follows
                ret = av_seek_frame(mp4FmtCtx, videoIndex, videoStamp, AVSEEK_FLAG_BACKWARD);
                if (ret < 0)
                    break; // clean up below instead of leaking by returning
                // Discard frames still buffered in the decoders from before the seek
                if (video_dec_ctx)
                    avcodec_flush_buffers(video_dec_ctx);
                if (audio_dec_ctx)
                    avcodec_flush_buffers(audio_dec_ctx);
                continue;
            }

            /* Variable-speed playback: rescale the frame budget, not only fps */
            if (mp4FileDouSpeek)
            {
                mp4FileDouSpeek = false;
                fps = baseFps * douSpeek;
                TimeFrame = fps > 0 ? (qint64)(1000.0 / fps) : 0;
            }

            if (pkt.stream_index == videoIndex)
            {
                decode_packet(video_dec_ctx, &pkt, yuvframe, swsContext);
                TimeEndDecode = QDateTime::currentMSecsSinceEpoch();
                // Sleep for the rest of this frame's time budget
                if ((TimeEndDecode - TimeStartDecode) < TimeFrame)
                    av_usleep(1000 * (TimeFrame - (TimeEndDecode - TimeStartDecode)));
            }
            else if (pkt.stream_index == audioIndex)
            {
                decode_packet(audio_dec_ctx, &pkt, frame);
            }
            av_packet_unref(&pkt);
        }
        else
        {
            QThread::msleep(10); // paused: avoid busy-waiting at 100% CPU
        }

        // Previous track
        if (mp4FilePreviousPlay)
            break;
        // Next track
        if (mp4FileNextPlay)
            break;
        // Stop
        if (mp4FileIsStop)
        {
            emit emitOpenFileButton(1);
            break;
        }
    }

    emit emitMP4Yuv420pData(nullptr, nullptr, nullptr, 0, 0, 2);
    emit emitStopPlayTimes();
    emit emitGetPlayStatus(0);
    emit emitGetStopStatus(0);
    QThread::msleep(600);

    // Reset the playback state
    mp4FileIsPlay = false;
    mp4FileIsPause = true;
    mp4FilePreviousPlay = false;
    mp4FileNextPlay = false;
    mp4FileIsStop = false;

    // Release FFmpeg resources
    avcodec_free_context(&video_dec_ctx);
    avcodec_free_context(&audio_dec_ctx);
    avformat_close_input(&mp4FmtCtx);
    if (swsContext)
    {
        sws_freeContext(swsContext);
        swsContext = NULL;
    }
    av_frame_free(&frame);
    av_frame_free(&yuvframe);
    if (swFrame)
        av_frame_free(&swFrame);
    av_free(out_buffer);          // backing store of yuvframe, previously leaked
    av_freep(&video_dst_data[0]);
    return ret < 0;
}
```
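The decode loop paces playback by sleeping for whatever remains of each frame's time budget, and it converts the slider position from seconds into stream time_base units before calling av_seek_frame. The standalone sketch below shows both calculations. It uses a hypothetical `Rational` struct in place of FFmpeg's AVRational so it builds without FFmpeg, and the function names are illustrative only. It also shows why the frame rate should be computed in floating point: integer division turns 30000/1001 (29.97 fps) into 29.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Hypothetical stand-in for FFmpeg's AVRational so the sketch builds without FFmpeg.
struct Rational { int num; int den; };

// Like av_q2d, except that an unknown 0/0 rate yields 0 instead of NaN or infinity.
double toDouble(Rational r) { return r.den != 0 ? double(r.num) / r.den : 0.0; }

// Display time budget of one frame in ms at the given playback speed (0 = no pacing).
double frameIntervalMs(Rational frameRate, double speed)
{
    const double fps = toDouble(frameRate) * speed;
    return fps > 0 ? 1000.0 / fps : 0.0;
}

// How long to sleep after a frame whose decoding took decodeMs.
double sleepMs(double intervalMs, double decodeMs)
{
    return decodeMs < intervalMs ? intervalMs - decodeMs : 0.0;
}

// Seconds -> timestamp in a stream time_base (e.g. 1/12800), rounded to nearest.
int64_t secondsToStreamTs(double seconds, Rational timeBase)
{
    return static_cast<int64_t>(std::llround(seconds * timeBase.den / timeBase.num));
}
```

In the loop, TimeFrame plays the role of frameIntervalMs and the av_usleep argument corresponds to sleepMs converted to microseconds. Within FFmpeg code, av_rescale_q performs the same kind of time-base conversion in integer arithmetic.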

```cpp
/*
 * function: open a decoder
 * @param stream_idx  [out] index of the selected audio/video stream
 * @param dec_ctx     [out] decoder context
 * @param fmt_ctx     format context of the MP4 file
 * @param type        AVMEDIA_TYPE_AUDIO or AVMEDIA_TYPE_VIDEO
 *
 * @return: 0 on success, a negative value on failure
 */
int VideoThread::open_codec_context(int *stream_idx, AVCodecContext **dec_ctx, AVFormatContext *fmt_ctx, enum AVMediaType type)
{
    int ret, stream_index;
    AVStream *st;
    const AVCodec *dec = NULL;
    AVDictionary *opts = NULL;

    ret = av_find_best_stream(fmt_ctx, type, -1, -1, NULL, 0);
    if (ret < 0)
        return ret;

    stream_index = ret;
    st = fmt_ctx->streams[stream_index];

    // Hardware decoding is for the video stream only. Testing !stream_index
    // assumed the video stream is always stream 0.
    if (hwDecodeFlag && type == AVMEDIA_TYPE_VIDEO)
    {
        HwDecodeVideo hwDecode;
        hwDecode.getHWdecode(hwDecode.hwList);
        for (int i = 0; i < hwDecode.hwList.count(); i++)
        {
            if (hwDecode.hwList.at(i) == "qsv")
            {
                // Windows: Intel Quick Sync Video decoders
                if (av_hwdevice_ctx_create(&videoHwDevice.hw_device_ref, AV_HWDEVICE_TYPE_QSV, "auto", NULL, 0) < 0)
                    return -1;
                if (st->codecpar->codec_id == AV_CODEC_ID_HEVC)
                    dec = avcodec_find_decoder_by_name("hevc_qsv");
                else if (st->codecpar->codec_id == AV_CODEC_ID_H264)
                    dec = avcodec_find_decoder_by_name("h264_qsv");
                if (!dec)
                    return -1; // no QSV decoder for this codec
                *dec_ctx = avcodec_alloc_context3(dec);
                if (!*dec_ctx)
                    return AVERROR(ENOMEM);
                avcodec_parameters_to_context(*dec_ctx, st->codecpar);
                (*dec_ctx)->opaque = &videoHwDevice;
                (*dec_ctx)->get_format = get_format;
                (*dec_ctx)->thread_count = 4;
                (*dec_ctx)->thread_safe_callbacks = 1;
                break;
            }
            else if (hwDecode.hwList.at(i) == "dxva2" || hwDecode.hwList.at(i) == "d3d11va")
            {
                // Named hwType so it no longer shadows the AVMediaType parameter `type`
                enum AVHWDeviceType hwType =
                    av_hwdevice_find_type_by_name(hwDecode.hwList.at(i).toStdString().c_str());
                if (hwType == AV_HWDEVICE_TYPE_NONE)
                {
                    hwDecodeFlag = false;
                    return -1;
                }
                dec = avcodec_find_decoder(st->codecpar->codec_id);
                if (!dec)
                    return AVERROR(EINVAL);
                // Find the hardware pixel format this decoder uses with the device type
                for (int j = 0;; j++)
                {
                    const AVCodecHWConfig *config = avcodec_get_hw_config(dec, j);
                    if (!config)
                    {
                        fprintf(stderr, "Decoder %s does not support device type %s.\n",
                                dec->name, av_hwdevice_get_type_name(hwType));
                        return -1;
                    }
                    if (config->methods & AV_CODEC_HW_CONFIG_METHOD_HW_DEVICE_CTX &&
                        config->device_type == hwType)
                    {
                        hw_pix_fmt = config->pix_fmt;
                        break;
                    }
                }
                *dec_ctx = avcodec_alloc_context3(dec);
                if (!*dec_ctx)
                    return AVERROR(ENOMEM);
                avcodec_parameters_to_context(*dec_ctx, st->codecpar);
                // Callback that selects the hardware pixel format
                (*dec_ctx)->get_format = get_hw_format;
                if (hw_decoder_init(*dec_ctx, hwType) < 0)
                    return -1;
                break;
            }
        }
        if (!dec)
            return -1; // hwList contained no supported hardware decoder

        yuv_pixfmt = AV_PIX_FMT_NV12; // hardware-decoded frames are rendered as NV12
        if (avcodec_open2(*dec_ctx, dec, NULL) < 0)
            return -1;
        swFrame = av_frame_alloc();   // software frame for hardware-decoded output
    }
    else
    {
        if (type == AVMEDIA_TYPE_VIDEO)
            yuv_pixfmt = AV_PIX_FMT_YUV420P;

        /* find decoder for the stream */
        dec = avcodec_find_decoder(st->codecpar->codec_id);
        if (!dec)
        {
            fprintf(stderr, "Failed to find %s codec\n", av_get_media_type_string(type));
            return AVERROR(EINVAL);
        }

        /* Allocate a codec context for the decoder */
        *dec_ctx = avcodec_alloc_context3(dec);
        if (!*dec_ctx)
        {
            fprintf(stderr, "Failed to allocate the %s codec context\n", av_get_media_type_string(type));
            return AVERROR(ENOMEM);
        }

        // Decode with four threads
        (*dec_ctx)->thread_count = 4;
        (*dec_ctx)->thread_safe_callbacks = 1;

        /* Copy codec parameters from input stream to output codec context */
        if ((ret = avcodec_parameters_to_context(*dec_ctx, st->codecpar)) < 0)
        {
            fprintf(stderr, "Failed to copy %s codec parameters to decoder context\n",
                    av_get_media_type_string(type));
            return ret;
        }

        /* Init the decoders */
        if ((ret = avcodec_open2(*dec_ctx, dec, &opts)) < 0)
        {
            fprintf(stderr, "Failed to open %s codec\n", av_get_media_type_string(type));
            return ret;
        }
    }

    *stream_idx = stream_index;
    return 0;
}
```
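For DXVA2/D3D11VA, open_codec_context installs get_hw_format as the decoder's get_format callback, but that function is not part of the excerpt. In FFmpeg's hardware-decoding model the decoder passes this callback a list of candidate pixel formats terminated by AV_PIX_FMT_NONE, and FFmpeg's own hw_decode.c example returns the hardware format found through avcodec_get_hw_config (hw_pix_fmt above), or AV_PIX_FMT_NONE when it is not offered. The sketch below models only that selection step. `PixFmt` and `pickHwFormat` are hypothetical stand-ins for AVPixelFormat and the real callback, so it runs without FFmpeg; it is not the author's implementation.

```cpp
#include <cassert>

// Hypothetical stand-in for AVPixelFormat; PIX_NONE (-1) terminates candidate
// lists, as AV_PIX_FMT_NONE does in FFmpeg.
enum PixFmt { PIX_NONE = -1, PIX_YUV420P, PIX_NV12, PIX_DXVA2_VLD, PIX_D3D11 };

// Model of a get_format callback: pick `wanted` (the hardware format found via
// the codec's hw config) from the decoder's candidate list, else PIX_NONE.
PixFmt pickHwFormat(const PixFmt *candidates, PixFmt wanted)
{
    for (const PixFmt *p = candidates; *p != PIX_NONE; ++p)
        if (*p == wanted)
            return *p;
    return PIX_NONE; // hardware format not offered for this stream
}
```

Returning AV_PIX_FMT_NONE makes decoding fail. A player that prefers to degrade gracefully could instead return a software format from the same list, which lets the decoder continue on the CPU.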

V. Capturing a Snapshot from a Video File

```cpp
/*
 * function: take a snapshot. Seek to the nearest I-frame at or before the given
 *           playback second and save it as a JPG image. time=2020/10/20
 * @param: filePath  path of the video file
 * @param: index     playback position in seconds
 */
int VideoWidget::playVideoPhoto(const char *filePath, int index)
{
    AVFormatContext *pFormatCtx = nullptr;
    AVCodecContext *pCodecCtx = nullptr;
    const AVCodec *pCodec = nullptr;
    AVCodecParameters *par = nullptr;
    AVFrame *frameyuv = nullptr;
    AVFrame *frame = nullptr;
    AVPacket *pkt = nullptr;
    struct SwsContext *swsContext = nullptr;
    uint8_t *out_buffer = nullptr;
    int videoStream = -1;
    bool done = false;

    // avformat_open_input allocates the context itself when it is NULL
    if (avformat_open_input(&pFormatCtx, filePath, nullptr, nullptr) < 0)
        goto end;
    avformat_find_stream_info(pFormatCtx, nullptr);

    // Find the first video stream (codecpar replaces the removed AVStream::codec)
    for (unsigned i = 0; i < pFormatCtx->nb_streams; i++)
    {
        if (pFormatCtx->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_VIDEO)
        {
            videoStream = (int)i;
            break;
        }
    }
    if (videoStream == -1)
        goto end;

    // Find and open the decoder in a context owned by this function
    par = pFormatCtx->streams[videoStream]->codecpar;
    pCodec = avcodec_find_decoder(par->codec_id);
    if (pCodec == nullptr)
        goto end;
    pCodecCtx = avcodec_alloc_context3(pCodec);
    if (!pCodecCtx || avcodec_parameters_to_context(pCodecCtx, par) < 0 ||
        avcodec_open2(pCodecCtx, pCodec, nullptr) < 0)
        goto end;

    // Seek to the key frame at or before `index` seconds (stream_index -1: AV_TIME_BASE units)
    if (av_seek_frame(pFormatCtx, -1, (int64_t)index * AV_TIME_BASE, AVSEEK_FLAG_BACKWARD) < 0)
        goto end;

    frame = av_frame_alloc();
    frameyuv = av_frame_alloc();
    pkt = av_packet_alloc();
    out_buffer = (uint8_t *)av_malloc(av_image_get_buffer_size(AV_PIX_FMT_YUVJ420P, pCodecCtx->width, pCodecCtx->height, 1));
    // Convert straight to full-range YUVJ420P, the format the JPEG encoder is set up for
    swsContext = sws_getContext(pCodecCtx->width, pCodecCtx->height, pCodecCtx->pix_fmt,
                                pCodecCtx->width, pCodecCtx->height, AV_PIX_FMT_YUVJ420P,
                                SWS_BICUBIC, nullptr, nullptr, nullptr);
    if (!frame || !frameyuv || !pkt || !out_buffer || !swsContext)
        goto end;
    av_image_fill_arrays(frameyuv->data, frameyuv->linesize, out_buffer,
                         AV_PIX_FMT_YUVJ420P, pCodecCtx->width, pCodecCtx->height, 1);

    // Read compressed packets until the first video frame has been decoded
    while (!done && av_read_frame(pFormatCtx, pkt) >= 0)
    {
        // The decoder may need several packets before it returns a frame
        if (pkt->stream_index == videoStream && avcodec_send_packet(pCodecCtx, pkt) >= 0 &&
            avcodec_receive_frame(pCodecCtx, frame) == 0)
        {
            sws_scale(swsContext, (const uint8_t *const *)frame->data, frame->linesize, 0,
                      pCodecCtx->height, frameyuv->data, frameyuv->linesize);
            playVideoSaveJpgImage(frameyuv, pCodecCtx->width, pCodecCtx->height, index);
            done = true;
        }
        av_packet_unref(pkt); // release every packet, not only the last one
    }

end:
    // Release resources (each of these calls accepts NULL)
    av_packet_free(&pkt);
    sws_freeContext(swsContext);
    av_frame_free(&frameyuv);
    av_frame_free(&frame);
    av_free(out_buffer);
    avcodec_free_context(&pCodecCtx);
    avformat_close_input(&pFormatCtx);
    if (!done)
        emit emitGetVideoImage(0);
    return done ? 0 : -1;
}
```
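Because playVideoPhoto seeks with AVSEEK_FLAG_BACKWARD and then saves the first frame it decodes, the snapshot normally shows the key frame at or before the requested second rather than the exact frame at that second. The sketch below models that choice over a hypothetical sorted list of key-frame times in seconds. The real demuxer works on its own index, so this illustrates the behavior rather than FFmpeg's implementation; `backwardSeekTarget` is a hypothetical name, and clamping to the first key frame is this sketch's own choice.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Returns the time of the last key frame at or before `target` seconds.
// If `target` precedes every key frame, this sketch clamps to the first one.
// `keyTimes` must be sorted ascending and non-empty.
double backwardSeekTarget(const std::vector<double> &keyTimes, double target)
{
    // upper_bound finds the first key frame strictly after `target`
    auto it = std::upper_bound(keyTimes.begin(), keyTimes.end(), target);
    if (it == keyTimes.begin())
        return keyTimes.front();
    return *(it - 1);
}
```

With key frames every 2 seconds, a snapshot requested at 5 s would therefore show the frame at 4 s. Getting the exact frame would mean decoding and discarding frames until a frame's timestamp reaches the target.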

```cpp
/*
 * function: save a frame as a JPG image
 * @param: frame   one frame of video data (planar 4:2:0)
 * @param: width   image width
 * @param: height  image height
 * @param: index   playback position in seconds (the file name uses the current time)
 */
int VideoWidget::playVideoSaveJpgImage(AVFrame *frame, int width, int height, int index)
{
    QDir dir;
    QString pathStr = sysPhotoPath; // default save directory
    if (!dir.exists(pathStr))
        dir.mkpath(pathStr);

    QString strTime;
    getCurrentTime(strTime);
    QByteArray fileName = (pathStr + "/" + strTime + ".jpg").toUtf8();

    AVFormatContext *pFormatCtx = nullptr;
    AVCodecContext *pCodecCtx = nullptr;
    AVStream *pAVStream = nullptr;
    const AVCodec *pCodec = nullptr;
    AVPacket *pkt = nullptr;
    int ret = -1;

    // Output context for the raw "mjpeg" muxer: one encoded frame is one JPEG file
    if (avformat_alloc_output_context2(&pFormatCtx, nullptr, "mjpeg", fileName.constData()) < 0)
        goto end;
    if (avio_open(&pFormatCtx->pb, fileName.constData(), AVIO_FLAG_WRITE) < 0)
        goto end;
    pAVStream = avformat_new_stream(pFormatCtx, nullptr);
    if (pAVStream == nullptr)
        goto end;

    // Find and configure the MJPEG encoder (owned context, not the removed AVStream::codec)
    pCodec = avcodec_find_encoder(AV_CODEC_ID_MJPEG);
    if (!pCodec)
        goto end;
    pCodecCtx = avcodec_alloc_context3(pCodec);
    if (!pCodecCtx)
        goto end;
    pCodecCtx->pix_fmt = AV_PIX_FMT_YUVJ420P;
    pCodecCtx->width = width;
    pCodecCtx->height = height;
    pCodecCtx->time_base = AVRational{1, 30};
    if (avcodec_open2(pCodecCtx, pCodec, nullptr) < 0)
        goto end;
    avcodec_parameters_from_context(pAVStream->codecpar, pCodecCtx);
    pAVStream->time_base = pCodecCtx->time_base;

    // Write the header
    if (avformat_write_header(pFormatCtx, nullptr) < 0)
        goto end;

    // avcodec_send_frame needs the frame's format and size to be set
    frame->format = pCodecCtx->pix_fmt;
    frame->width = width;
    frame->height = height;

    pkt = av_packet_alloc();
    if (pkt && avcodec_send_frame(pCodecCtx, frame) >= 0 &&
        avcodec_receive_packet(pCodecCtx, pkt) >= 0)
    {
        pkt->stream_index = pAVStream->index;
        ret = av_write_frame(pFormatCtx, pkt);
    }

    // Write the trailer
    av_write_trailer(pFormatCtx);

end:
    av_packet_free(&pkt);
    avcodec_free_context(&pCodecCtx);
    if (pFormatCtx)
    {
        avio_closep(&pFormatCtx->pb);
        avformat_free_context(pFormatCtx);
    }
    if (ret < 0)
        emit emitGetVideoImage(0);
    return ret < 0 ? -1 : 0;
}
```
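The JPEG encoder is configured with AV_PIX_FMT_YUVJ420P, the full-range ("J" for JPEG) variant of YUV420P: 8-bit luma spans 0-255 instead of the 16-235 range normally used for video. If limited-range samples reach it unconverted, blacks come out dark grey and whites light grey. The sketch below shows the standard 8-bit range expansion, Y' = (Y - 16) * 255 / 219 for luma and C' = (C - 128) * 255 / 224 + 128 for chroma, each rounded and clamped. Requesting AV_PIX_FMT_YUVJ420P as the sws_getContext target should make swscale do this conversion, but that is worth verifying against the FFmpeg version in use. The function names are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>

// Round and clamp to the 0..255 byte range.
static uint8_t toByte(double v)
{
    return static_cast<uint8_t>(std::lround(std::min(std::max(v, 0.0), 255.0)));
}

// 8-bit limited-range luma (16..235) -> full range (0..255).
uint8_t lumaLimitedToFull(uint8_t y)
{
    return toByte((y - 16) * 255.0 / 219.0);
}

// 8-bit limited-range chroma (16..240, centred on 128) -> full range.
uint8_t chromaLimitedToFull(uint8_t c)
{
    return toByte((c - 128) * 255.0 / 224.0 + 128.0);
}
```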