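A log server we had deployed for a client was flagged by a Classified Protection (等保) security scan: its ES (Elasticsearch) 7.17.8 has known security vulnerabilities, so it had to be upgraded to the latest version, 8.15.3.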

ES 8.x enables security by default: `xpack.security.enabled` in elasticsearch.yml defaults to true. As a result, once ES is running, any access to port 9200 prompts for a username and password.

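Below I walk through, step by step, how to set the password of the `elastic` superuser manually. I also show how to make Logstash and Kibana talk to ES over HTTPS. Logstash writes data to ES (logstash output). Kibana reads data from ES so it can visualize the contents of the different indices.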

ELK deployment

OS: Ubuntu 22.04 LTS

Deployment method: docker-compose (Logstash, ES, and Kibana all run as containers on the same Docker network)

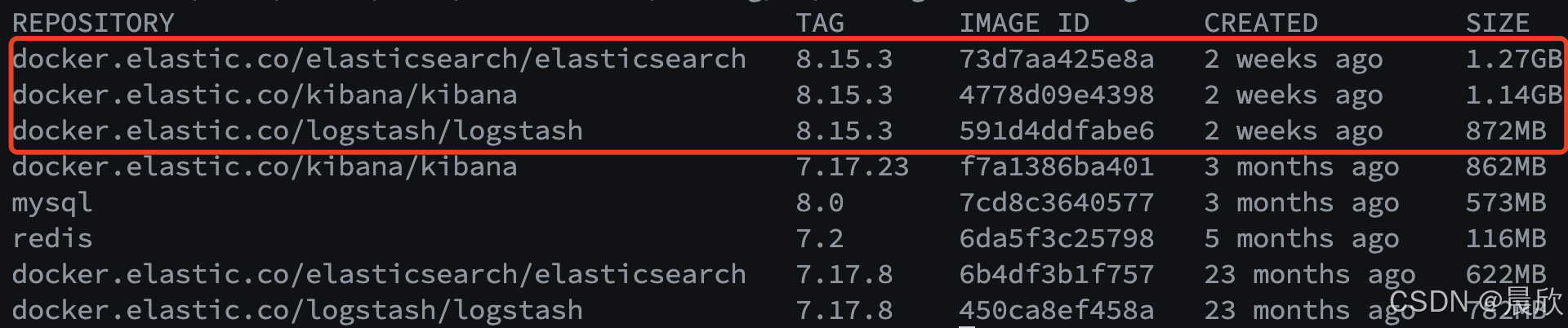

Pulling the Docker images

# Pull ES
docker pull docker.elastic.co/elasticsearch/elasticsearch:8.15.3
# Pull Logstash
docker pull docker.elastic.co/logstash/logstash:8.15.3
# Pull Kibana
docker pull docker.elastic.co/kibana/kibana:8.15.3

Once the pulls finish, you can list the images with `docker images`.

Switching images

Stop all containers that are running the 7.x images:

docker-compose down

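Edit docker-compose.yaml and change the image tags to 8.15.3.

Next, edit elasticsearch.yml on the host (the file mapped into the ES container). We will set the elastic superuser's password first and deal with the certificates needed for HTTPS later. Because those certificates don't exist yet, explicitly set `xpack.security.transport.ssl.enabled` to false for now.

Add the following settings: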

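# Enables or disables Elasticsearch security features
xpack.security.enabled: true
# Enables or disables TLS (SSL) on the transport layer, i.e. node-to-node traffic on port 9300
# (HTTPS on the HTTP API is controlled separately by xpack.security.http.ssl)
xpack.security.transport.ssl.enabled: false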

Then start docker-compose:

docker-compose up -d

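Setting the password of the elastic superuser

Once the elasticsearch container (that is its container name) is running normally, try visiting http://ip:9200. A dialog now pops up asking for a username and password, because `xpack.security.enabled: true` is set.

Now enter the elasticsearch container: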

docker exec -it elasticsearch bash

elasticsearch@elasticsearch:~$ pwd

# Current directory inside the container

/usr/share/elasticsearch

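There are two ways to set the password: manually, or as an automatically generated random one.

Setting the password manually

-i: interactive mode; the tool prompts you for the new password

./bin/elasticsearch-reset-password -u elastic -i

Enter the new password for the elastic user when prompted.

Generating a random password

Without -i, Elasticsearch generates a random password and prints it

./bin/elasticsearch-reset-password -u elastic

Suppose the password you end up with is p0s9Lb3uThEJfN5T0v6x.

Now visit http://ip:9200 again and enter elastic/p0s9Lb3uThEJfN5T0v6x; the page loads normally.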
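The same check can be scripted. Below is a minimal sketch in Python (standard library only) of what the browser dialog, or `curl -u`, does: it sends HTTP Basic credentials to the root endpoint and reads back the JSON. `build_request` and `es_info` are hypothetical helper names chosen for illustration. At this stage the URL is still plain `http://`.

```python
import base64
import json
import ssl
import urllib.error
import urllib.request
from typing import Optional


def build_request(base_url: str, user: str, password: str) -> urllib.request.Request:
    """Hypothetical helper: GET / with an HTTP Basic Authorization header (what curl -u sends)."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        base_url.rstrip("/") + "/",
        headers={"Authorization": f"Basic {token}"},
    )


def es_info(base_url: str, user: str, password: str,
            context: Optional[ssl.SSLContext] = None, timeout: float = 10.0) -> dict:
    """Hypothetical helper: return the cluster info JSON, raising PermissionError on HTTP 401."""
    req = build_request(base_url, user, password)
    try:
        with urllib.request.urlopen(req, context=context, timeout=timeout) as resp:
            return json.load(resp)
    except urllib.error.HTTPError as err:
        if err.code == 401:  # missing or wrong credentials
            raise PermissionError("Elasticsearch rejected the credentials") from err
        raise
```

For example, `es_info("http://ip:9200", "elastic", "p0s9Lb3uThEJfN5T0v6x")["version"]["number"]` should return the ES version string. Once HTTPS is enabled later, you would pass an `ssl.SSLContext` that trusts the ES CA as `context`.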

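Logstash connecting to ES over HTTP with the elastic account

The end goal is for Logstash and Kibana to connect to ES over HTTPS (HTTP + SSL). Before setting up the certificates, though, we can first let Logstash connect to ES over plain HTTP with just the username and password. Kibana can't be tried this way: in my setup, with `xpack.security.enabled: true`, it had to connect over HTTPS.

1. Write the elastic superuser password into docker-compose.yml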

services:
  elasticsearch:
    restart: always
    image: docker.elastic.co/elasticsearch/elasticsearch:8.15.3
    container_name: elasticsearch
    hostname: elasticsearch
    privileged: true
    environment:
      - "ES_JAVA_OPTS=-Xms8192m -Xmx8192m"
      - "http.host=0.0.0.0"
      - "node.name=node-01"
      - "cluster.name=cluster-01"
      - "discovery.type=single-node"
      # Pass the password in as an environment variable
      - "ELASTIC_PASSWORD=p0s9Lb3uThEJfN5T0v6x"

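2. Update logstash.yml on the host, along with the pipeline config files that output to ES

Add the following to logstash.yml:

# Enable X-Pack monitoring
# =================== ES upgrade: authentication credentials are now required ==============
xpack.monitoring.elasticsearch.username: "elastic"
xpack.monitoring.elasticsearch.password: "p0s9Lb3uThEJfN5T0v6x"

The pipeline config that sends the processed logs to ES also needs the username, password, and related settings.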

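Below is an example generated by ChatGPT. Note:

- `hosts` must include the `http://` prefix;
- if the pipeline has conditional branches, every branch that outputs to ES needs the `user`/`password` settings.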

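input {
  # Read events from stdin (for testing)
  stdin {}
}

filter {
  # Custom processing with Ruby
  ruby {
    code => "
      event.set('new_field', event.get('message').upcase) # upper-case 'message' and store it in 'new_field'
      event.set('timestamp', Time.now) # add the current time to the 'timestamp' field
    "
  }
  # Compute [@metadata][fingerprint], which document_id below relies on
  # (without it the id would be the literal string and every event would overwrite one document)
  fingerprint {
    source => ["message"]
    target => "[@metadata][fingerprint]"
    method => "SHA256"
  }
}

output {
  # Output to Elasticsearch
  elasticsearch {
    # Note the http:// prefix; it was not needed while security was disabled
    hosts => ["http://elasticsearch:9200"]
    index => "logstash-ruby-example"
    # Username
    user => "elastic"
    # Password
    password => "p0s9Lb3uThEJfN5T0v6x"
    document_id => "%{[@metadata][fingerprint]}" # custom document ID (optional)
  }
}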

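3. Restart the elasticsearch and logstash containers

Commands are below. `restart` does not pick up edits to docker-compose.yml itself, such as the new ELASTIC_PASSWORD variable, so elasticsearch is recreated with `up -d`. Logstash only needs a restart to reload its mounted config files.

docker-compose up -d elasticsearch
docker-compose restart logstash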

After restarting, check the logstash and elasticsearch container logs for errors:

docker logs logstash

docker logs elasticsearch

# To see only the latest N lines, add --tail with the number of lines

docker logs --tail N <logstash-container-name>

If there are no errors, enter the logstash container and run a check command to confirm it can reach ES:

# Enter the logstash container

docker exec -it logstash /bin/bash

logstash@d996504f0329:~$ pwd

/usr/share/logstash

# Run the following check; if the connection succeeds it prints ES information

curl -u elastic:p0s9Lb3uThEJfN5T0v6x http://elasticsearch:9200

Example output of a successful connection to Elasticsearch (generated by ChatGPT, so the field values are illustrative only):

{
  "name" : "your-node-name",
  "cluster_name" : "your-cluster-name",
  "cluster_uuid" : "uuid-string",
  "version" : {
    "number" : "8.15.3",
    "build_flavor" : "default",
    "build_type" : "docker",
    "build_hash" : "abc12345",
    "build_date" : "2024-01-01T00:00:00Z",
    "build_snapshot" : false,
    "lucene_version" : "9.7.0",
    "minimum_wire_compatibility_version" : "7.10.0",
    "minimum_index_compatibility_version" : "7.0.0"
  },
  "tagline" : "You Know, for Search"
}

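You can also have Logstash actually receive and process some data, then check that new documents appear in ES. This confirms that Logstash can write its processed data into ES.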
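For that check, Elasticsearch's `_count` API (`GET /<index>/_count`) returns the number of documents in an index as `{"count": N, ...}`. Below is a minimal stdlib sketch. `parse_count` and `count_documents` are hypothetical helper names, and the index is the `logstash-ruby-example` index from the pipeline example above.

```python
import base64
import json
import urllib.parse
import urllib.request


def parse_count(body: str) -> int:
    """Hypothetical helper: extract the document count from a _count response body."""
    return int(json.loads(body)["count"])


def count_documents(base_url: str, index: str, user: str, password: str,
                    timeout: float = 10.0) -> int:
    """Hypothetical helper: call GET /<index>/_count with HTTP Basic auth."""
    url = f"{base_url.rstrip('/')}/{urllib.parse.quote(index)}/_count"
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    req = urllib.request.Request(url, headers={"Authorization": f"Basic {token}"})
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return parse_count(resp.read().decode("utf-8"))
```

Call it before and after typing a test line into Logstash's stdin; the count should grow. Keep in mind that ES refreshes indices about once per second by default, so the new document may take a moment to show up. If `document_id` is derived from a fingerprint of the message, resending an identical line overwrites the existing document rather than adding a new one.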

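Generating the CA and certificates in ES

Why is this needed? (The answer below was generated by ChatGPT.)

Elasticsearch uses a CA (Certificate Authority) and certificates to enable secure communication. SSL/TLS encryption then protects both the traffic between Elasticsearch nodes and the traffic between clients and the server.

The steps are as follows (copied from reference [1]):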

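# Enter the ES instance in the Docker container
docker exec -it elasticsearch bash
# Generate the CA certificate; press Enter at each prompt (twice in total)
bin/elasticsearch-certutil ca
# Generate the node certificate; press Enter at each prompt (three times in total)
bin/elasticsearch-certutil cert --ca elastic-stack-ca.p12
# Switch to the config directory
cd config
# Create the certs folder
mkdir certs
# Go back to the original directory
cd ..
# Move the CA and node certificates into certs
mv elastic-certificates.p12 elastic-stack-ca.p12 config/certs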

Once the two certificate files are in /usr/share/elasticsearch/config/certs inside the container, run `exit` to leave it for now.

Now edit elasticsearch.yml on the host and add the following:

# Comment out the earlier line that set xpack.security.transport.ssl.enabled to false
# xpack.security.transport.ssl.enabled: false
# Add the following
xpack.security.enrollment.enabled: true
# TLS on the HTTP layer (HTTPS for the REST API on port 9200)
xpack.security.http.ssl:
  enabled: true
  keystore.path: /usr/share/elasticsearch/config/certs/elastic-certificates.p12
  truststore.path: /usr/share/elasticsearch/config/certs/elastic-certificates.p12
# TLS on the transport layer (node-to-node traffic on port 9300)
xpack.security.transport.ssl:
  enabled: true
  verification_mode: certificate
  keystore.path: /usr/share/elasticsearch/config/certs/elastic-certificates.p12
  truststore.path: /usr/share/elasticsearch/config/certs/elastic-certificates.p12

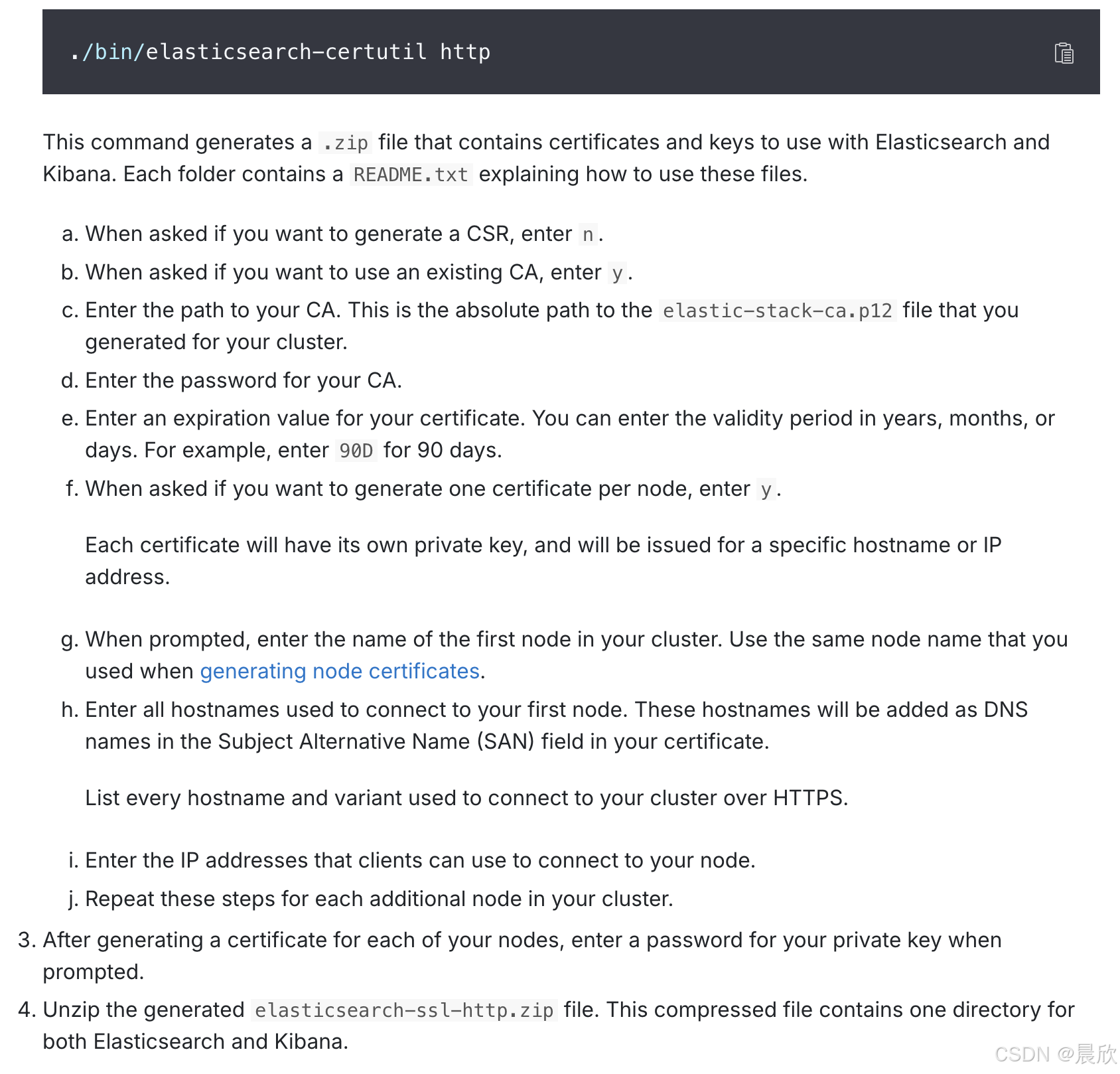

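Generating the HTTP certificate

Why is this needed? (The answer below was generated by ChatGPT.)

Elasticsearch uses an HTTP certificate to protect HTTP-layer traffic with HTTPS (SSL/TLS encryption). The main purpose is to secure the REST API traffic between clients (such as browsers, Kibana, or Logstash) and Elasticsearch. That traffic is then encrypted, and authentication and data integrity are provided.

The following steps are based on reference [2].

Enter the elasticsearch container again and generate the HTTP certificate.

# Enter the ES instance in the Docker container
docker exec -it elasticsearch bash
# Generate the HTTP certificate
./bin/elasticsearch-certutil http

The tool asks a lot of questions; see the official documentation for details.

The full session is reproduced below for reference: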

elasticsearch@elasticsearch:~$ ./bin/elasticsearch-certutil http

## Elasticsearch HTTP Certificate Utility

The 'http' command guides you through the process of generating certificates

for use on the HTTP (Rest) interface for Elasticsearch.

This tool will ask you a number of questions in order to generate the right

set of files for your needs.

## Do you wish to generate a Certificate Signing Request (CSR)?

A CSR is used when you want your certificate to be created by an existing

Certificate Authority (CA) that you do not control (that is, you don't have

access to the keys for that CA).

If you are in a corporate environment with a central security team, then you

may have an existing Corporate CA that can generate your certificate for you.

Infrastructure within your organisation may already be configured to trust this

CA, so it may be easier for clients to connect to Elasticsearch if you use a

CSR and send that request to the team that controls your CA.

If you choose not to generate a CSR, this tool will generate a new certificate

for you. That certificate will be signed by a CA under your control. This is a

quick and easy way to secure your cluster with TLS, but you will need to

configure all your clients to trust that custom CA.

Generate a CSR? [y/N]N

## Do you have an existing Certificate Authority (CA) key-pair that you wish to use to sign your certificate?

If you have an existing CA certificate and key, then you can use that CA to

sign your new http certificate. This allows you to use the same CA across

multiple Elasticsearch clusters which can make it easier to configure clients,

and may be easier for you to manage.

If you do not have an existing CA, one will be generated for you.

Use an existing CA? [y/N]y

## What is the path to your CA?

Please enter the full pathname to the Certificate Authority that you wish to

use for signing your new http certificate. This can be in PKCS#12 (.p12), JKS

(.jks) or PEM (.crt, .key, .pem) format.

CA Path: /usr/share/elasticsearch/config/certs/elastic-stack-ca.p12

Reading a PKCS12 keystore requires a password.

It is possible for the keystore's password to be blank,

in which case you can simply press <ENTER> at the prompt

Password for elastic-stack-ca.p12:

## How long should your certificates be valid?

Every certificate has an expiry date. When the expiry date is reached clients

will stop trusting your certificate and TLS connections will fail.

Best practice suggests that you should either:

(a) set this to a short duration (90 - 120 days) and have automatic processes

to generate a new certificate before the old one expires, or

(b) set it to a longer duration (3 - 5 years) and then perform a manual update

a few months before it expires.

You may enter the validity period in years (e.g. 3Y), months (e.g. 18M), or days (e.g. 90D)

For how long should your certificate be valid? [5y]

## Do you wish to generate one certificate per node?

If you have multiple nodes in your cluster, then you may choose to generate a

separate certificate for each of these nodes. Each certificate will have its

own private key, and will be issued for a specific hostname or IP address.

Alternatively, you may wish to generate a single certificate that is valid

across all the hostnames or addresses in your cluster.

If all of your nodes will be accessed through a single domain

(e.g. node01.es.example.com, node02.es.example.com, etc) then you may find it

simpler to generate one certificate with a wildcard hostname (*.es.example.com)

and use that across all of your nodes.

However, if you do not have a common domain name, and you expect to add

additional nodes to your cluster in the future, then you should generate a

certificate per node so that you can more easily generate new certificates when

you provision new nodes.

Generate a certificate per node? [y/N]y

## What is the name of node #1?

This name will be used as part of the certificate file name, and as a

descriptive name within the certificate.

You can use any descriptive name that you like, but we recommend using the name

of the Elasticsearch node.

node #1 name: node-01

## Which hostnames will be used to connect to node-01?

These hostnames will be added as "DNS" names in the "Subject Alternative Name"

(SAN) field in your certificate.

You should list every hostname and variant that people will use to connect to

your cluster over http.

Do not list IP addresses here, you will be asked to enter them later.

If you wish to use a wildcard certificate (for example *.es.example.com) you

can enter that here.

Enter all the hostnames that you need, one per line.

When you are done, press <ENTER> once more to move on to the next step.

elasticsearch

You entered the following hostnames.

- elasticsearch

Is this correct [Y/n]y

## Which IP addresses will be used to connect to node-01?

If your clients will ever connect to your nodes by numeric IP address, then you

can list these as valid IP "Subject Alternative Name" (SAN) fields in your

certificate.

If you do not have fixed IP addresses, or not wish to support direct IP access

to your cluster then you can just press <ENTER> to skip this step.

Enter all the IP addresses that you need, one per line.

When you are done, press <ENTER> once more to move on to the next step.

You did not enter any IP addresses.

Is this correct [Y/n]y

## Other certificate options

The generated certificate will have the following additional configuration

values. These values have been selected based on a combination of the

information you have provided above and secure defaults. You should not need to

change these values unless you have specific requirements.

Key Name: node-01

Subject DN: CN=node-01

Key Size: 2048

Do you wish to change any of these options? [y/N]n

Generate additional certificates? [Y/n]n

## What password do you want for your private key(s)?

Your private key(s) will be stored in a PKCS#12 keystore file named "http.p12".

This type of keystore is always password protected, but it is possible to use a

blank password.

If you wish to use a blank password, simply press <enter> at the prompt below.

Provide a password for the "http.p12" file: [<ENTER> for none]

## Where should we save the generated files?

A number of files will be generated including your private key(s),

public certificate(s), and sample configuration options for Elastic Stack products.

These files will be included in a single zip archive.

What filename should be used for the output zip file? [/usr/share/elasticsearch/elasticsearch-ssl-http.zip]

A few of the prompts deserve attention:

- What is the name of node #1?

The node name you enter should match the `node.name` configured for Elasticsearch in docker-compose.yml.

## What is the name of node #1?

This name will be used as part of the certificate file name, and as a

descriptive name within the certificate.

You can use any descriptive name that you like, but we recommend using the name

of the Elasticsearch node.

node #1 name: node-01

- Which hostnames will be used to connect to node-01?

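ES runs in Docker, and the whole ELK stack shares one Docker network. Logstash and Kibana can therefore reach ES directly by its container name, `elasticsearch`, and ES does not need to be reachable from outside. Setting the hostname here to the container name `elasticsearch` is enough.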

## Which hostnames will be used to connect to node-01?

These hostnames will be added as "DNS" names in the "Subject Alternative Name"

(SAN) field in your certificate.

You should list every hostname and variant that people will use to connect to

your cluster over http.

Do not list IP addresses here, you will be asked to enter them later.

If you wish to use a wildcard certificate (for example *.es.example.com) you

can enter that here.

Enter all the hostnames that you need, one per line.

When you are done, press <ENTER> once more to move on to the next step.

elasticsearch

You entered the following hostnames.

- elasticsearch

Is this correct [Y/n]y
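Why the hostname answer matters: a client that verifies the server certificate checks that the host in its URL appears in the certificate's Subject Alternative Name (SAN) list. The sketch below is a deliberately simplified version of that rule: an exact DNS match, or a wildcard covering exactly one leftmost label. It only illustrates why `https://elasticsearch:9200` will verify against this certificate while a URL with a bare IP will not, since no IP SANs were entered. Real TLS libraries implement the full RFC 6125 logic. `san_matches` is a hypothetical name, and the IP in the usage check is a made-up example.

```python
from typing import Iterable


def san_matches(host: str, dns_sans: Iterable[str]) -> bool:
    """Hypothetical, simplified hostname check against DNS SAN entries (case-insensitive)."""
    host = host.lower().rstrip(".")
    for san in dns_sans:
        san = san.lower().rstrip(".")
        if san == host:
            return True
        # "*.es.example.com" matches "node01.es.example.com" but not "a.b.es.example.com"
        if san.startswith("*."):
            suffix = san[1:]  # e.g. ".es.example.com"
            label, dot, rest = host.partition(".")
            if dot and label and "." + rest == suffix:
                return True
    return False
```

With the answers above, `san_matches("elasticsearch", ["elasticsearch"])` is true, while `san_matches("10.0.0.5", ["elasticsearch"])` is false.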

The generated elasticsearch-ssl-http.zip file is now in the directory:

elasticsearch@elasticsearch:~$ ls

LICENSE.txt NOTICE.txt README.asciidoc bin config data elasticsearch-ssl-http.zip jdk lib logs modules plugins

# Unzip elasticsearch-ssl-http.zip

elasticsearch@elasticsearch:~$ unzip elasticsearch-ssl-http.zip

Archive: elasticsearch-ssl-http.zip

creating: elasticsearch/

inflating: elasticsearch/README.txt

inflating: elasticsearch/http.p12

inflating: elasticsearch/sample-elasticsearch.yml

creating: kibana/

inflating: kibana/README.txt

inflating: kibana/elasticsearch-ca.pem

inflating: kibana/sample-kibana.yml

Unzipping elasticsearch-ssl-http.zip produces two folders, elasticsearch/ and kibana/.

# The three files under kibana/

elasticsearch@elasticsearch:~/kibana$ ls

README.txt elasticsearch-ca.pem sample-kibana.yml

# The three files under elasticsearch/

elasticsearch@elasticsearch:~/elasticsearch$ ls

README.txt http.p12 sample-elasticsearch.yml

Now move /usr/share/elasticsearch/elasticsearch/http.p12 into /usr/share/elasticsearch/config/certs:

mv elasticsearch/http.p12 /usr/share/elasticsearch/config/certs

certs/ now contains three files:

elasticsearch@elasticsearch:~/config/certs$ ls

elastic-certificates.p12 elastic-stack-ca.p12 http.p12

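Generating Kibana's certificate files in the elasticsearch container

# Generate a certificate signing request and key for Kibana (the value after -dns is the Kibana container name)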

./bin/elasticsearch-certutil csr -name kibana -dns kibana

# Unzip the archive

elasticsearch@elasticsearch:~$ unzip csr-bundle.zip

Archive: csr-bundle.zip

inflating: kibana/kibana.csr

inflating: kibana/kibana.key

Copy the generated files from inside the ES container to Kibana's config directory on the host:

# Exit the container
exit
# Go to Kibana's config directory on the host
cd /usr/local/config/kibana/config/
# Create the certs folder
mkdir certs
# Copy the files elasticsearch generated inside its container into certs
docker cp elasticsearch:/usr/share/elasticsearch/kibana/kibana.csr /usr/local/config/kibana/config/certs
docker cp elasticsearch:/usr/share/elasticsearch/kibana/kibana.key /usr/local/config/kibana/config/certs
# Also copy the elasticsearch-ca.pem generated in the previous step to Kibana
docker cp elasticsearch:/usr/share/elasticsearch/kibana/elasticsearch-ca.pem /usr/local/config/kibana/config/certs
# Enter the certs folder
cd certs
# Self-sign the CSR with kibana.key to produce the crt file
# (openssl x509 defaults to 30 days of validity, so -days sets a longer period)
openssl x509 -req -in kibana.csr -signkey kibana.key -out kibana.crt -days 1825
# The four files now in certs/
elasticsearch-ca.pem
kibana.crt
kibana.csr
kibana.key
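A certificate self-signed with `openssl x509 -req` is valid for only 30 days unless `-days` is given, so it is worth checking when kibana.crt expires. `openssl x509 -in kibana.crt -noout -enddate` prints a line of the form `notAfter=<date> GMT`. The Python sketch below turns that line into the number of days remaining, using the standard library's `ssl.cert_time_to_seconds`. `days_until_expiry` is a hypothetical helper name.

```python
import ssl
import time
from typing import Optional


def days_until_expiry(enddate_line: str, now: Optional[float] = None) -> int:
    """Hypothetical helper: whole days left before a certificate's notAfter date.

    Accepts the raw openssl output ("notAfter=Jan  1 00:00:00 2030 GMT") or just the date.
    """
    value = enddate_line.strip()
    if value.startswith("notAfter="):
        value = value[len("notAfter="):]
    expires_at = ssl.cert_time_to_seconds(value)  # seconds since the epoch (UTC)
    current = time.time() if now is None else now
    return int((expires_at - current) // 86400)
```

A result of 30 or less right after signing means `-days` was not applied.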

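Copy elasticsearch-ca.pem to Logstash's config directory on the host

Why is this needed?

When configuring the HTTPS connection from Logstash to Elasticsearch, the config file has to name elasticsearch-ca.pem as the trusted CA certificate.

# Go to Logstash's config directory on the host
cd /usr/local/config/logstash/config/
# Create the certs folder
mkdir certs
# Copy elasticsearch-ca.pem out of the elasticsearch container
docker cp elasticsearch:/usr/share/elasticsearch/kibana/elasticsearch-ca.pem /usr/local/config/logstash/config/certs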
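Trusting the CA file is what lets a client accept a certificate that no public CA signed. The sketch below connects with a context that trusts only elasticsearch-ca.pem, which plays the same role as Logstash's `cacert`. It returns the DNS names that the certificate on port 9200 presents, and these should include `elasticsearch`. It assumes the script runs somewhere that can resolve the `elasticsearch` container name (for example, inside the same Docker network) and that `cafile` points at your copy of the CA. `dns_names` and `presented_dns_names` are hypothetical helpers.

```python
import socket
import ssl
from typing import List


def dns_names(peer_cert: dict) -> List[str]:
    """Hypothetical helper: DNS entries from the dict returned by SSLSocket.getpeercert()."""
    return [value for kind, value in peer_cert.get("subjectAltName", ()) if kind == "DNS"]


def presented_dns_names(host: str, port: int, cafile: str, timeout: float = 10.0) -> List[str]:
    """Hypothetical helper: TLS handshake trusting only `cafile`, with hostname checking on."""
    # With cafile given, create_default_context loads that CA instead of the system store
    context = ssl.create_default_context(cafile=cafile)
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return dns_names(tls.getpeercert())
```

For example, call `presented_dns_names("elasticsearch", 9200, "/usr/share/logstash/config/certs/elasticsearch-ca.pem")`. A certificate-verify error here means the certificate served on port 9200 either is not signed by that CA or does not list `elasticsearch` as a SAN.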

Persist the files under certs in the elasticsearch container to the host

# Switch to elasticsearch's config directory on the host
cd /usr/local/config/elasticsearch/config
# Copy certs from the container to the Linux host
docker cp elasticsearch:/usr/share/elasticsearch/config/certs /usr/local/config/elasticsearch/config

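Deploying Kibana's certificate files

Now sync all the files under /usr/local/config/kibana/config/certs on the host into the container.

# Upload Kibana's certs from the server into the Docker container
docker cp /usr/local/config/kibana/config/certs kibana:/usr/share/kibana/config
# Enter the kibana container as root
docker exec -it -u root kibana bash
# Enter the config folder
cd config
# Make the kibana user the owner of certs
chown -R kibana certs

Check the permissions of the certificate files inside the container: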

root@286e3f490b30:/usr/share/kibana/config/certs# ll -l

total 24

drwxr-xr-x 2 kibana root 4096 Oct 28 12:42 ./

drwxrwxrwx 3 root root 4096 Oct 28 13:18 ../

-rw-r--r-- 1 kibana root 1200 Oct 28 08:18 elasticsearch-ca.pem

-rw-r--r-- 1 kibana root 985 Oct 28 12:42 kibana.crt

-rw-r--r-- 1 kibana root 936 Oct 28 12:36 kibana.csr

-rw-r--r-- 1 kibana root 1675 Oct 28 12:36 kibana.key

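Deploying Logstash's certificate files

The steps are similar to the Kibana ones.

Sync all the files under /usr/local/config/logstash/config/certs on the host into the container.

# Upload Logstash's certs from the server into the Docker container
docker cp /usr/local/config/logstash/config/certs logstash:/usr/share/logstash/config
# Enter the logstash container as root
docker exec -it -u root logstash bash
# Enter the config folder
cd config
# Make the logstash user the owner of certs
chown -R logstash certs

Check the permissions of elasticsearch-ca.pem inside the container: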

root@d996504f0329:/usr/share/logstash/config/certs# ll -l

total 24

drwxr-xr-x 2 logstash root 4096 Oct 29 03:11 ./

drwxrwxrwx 3 root root 4096 Oct 30 03:26 ../

-rw-r--r-- 1 logstash root 1200 Oct 28 15:11 elasticsearch-ca.pem

Adjusting the configuration files

1. logstash.yml: http -> https

xpack.monitoring.elasticsearch.username: "elastic"

xpack.monitoring.elasticsearch.password: "p0s9Lb3uThEJfN5T0v6x"

xpack.monitoring.enabled: true

# Changed from http to https

xpack.monitoring.elasticsearch.hosts: ["https://elasticsearch:9200"]

xpack.monitoring.collection.interval: 10s

log.level: debug

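2. Logstash pipeline config that outputs to ES: enable SSL and specify the CA certificate (cacert)

input {
  # Read events from stdin (for testing)
  stdin {}
}

filter {
  # Custom processing with Ruby
  ruby {
    code => "
      event.set('new_field', event.get('message').upcase) # upper-case 'message' and store it in 'new_field'
      event.set('timestamp', Time.now) # add the current time to the 'timestamp' field
    "
  }
  # Compute [@metadata][fingerprint], which document_id below relies on
  fingerprint {
    source => ["message"]
    target => "[@metadata][fingerprint]"
    method => "SHA256"
  }
}

output {
  # Output to Elasticsearch
  elasticsearch {
    # Changed from http to https
    hosts => ["https://elasticsearch:9200"]
    # Enable SSL (newer versions of the plugin name this option ssl_enabled)
    ssl => true
    # Trusted CA certificate (newer versions of the plugin name this option ssl_certificate_authorities)
    cacert => "/usr/share/logstash/config/certs/elasticsearch-ca.pem"
    index => "logstash-ruby-example"
    # Username
    user => "elastic"
    # Password
    password => "p0s9Lb3uThEJfN5T0v6x"
    document_id => "%{[@metadata][fingerprint]}" # custom document ID (optional)
  }
}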

3. Adjust kibana.yml

The connection protocol in `elasticsearch.hosts` is https.

For `elasticsearch.password`, any placeholder will do for now; we reset it later from inside the ES container.

i18n.locale: zh-CN

server.host: "0.0.0.0"

server.shutdownTimeout: "5s"

elasticsearch.hosts: [ "https://elasticsearch:9200" ]

elasticsearch.username: "kibana_system"

elasticsearch.password: "12345678"

# =============================== SSL settings start ======================================
server.ssl.enabled: true
server.ssl.certificate: /usr/share/kibana/config/certs/kibana.crt
server.ssl.key: /usr/share/kibana/config/certs/kibana.key
# none skips verification of the ES certificate entirely; certificate or full would actually check it against the CA below
elasticsearch.ssl.verificationMode: none
elasticsearch.ssl.certificateAuthorities: [ "/usr/share/kibana/config/certs/elasticsearch-ca.pem" ]
# =============================== SSL settings end ======================================

monitoring.ui.container.elasticsearch.enabled: true

xpack.monitoring.enabled: true

xpack.monitoring.elasticsearch.hosts: ["https://elasticsearch:9200"]

xpack.monitoring.kibana.collection.enabled: true

xpack.monitoring.kibana.collection.interval: 10000
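`elasticsearch.ssl.verificationMode` accepts three values. `full` verifies the certificate chain and the hostname, `certificate` verifies only the chain, and `none` performs no verification, so with `none` the `certificateAuthorities` entry is not used to check anything. As a rough analogy only, the sketch below expresses the three modes with Python's `ssl.SSLContext`. Kibana itself runs on Node.js and does not work this way internally. `context_for_mode` is a hypothetical name.

```python
import ssl
from typing import Optional


def context_for_mode(mode: str, cafile: Optional[str] = None) -> ssl.SSLContext:
    """Hypothetical helper: a Python analogue of Kibana's verificationMode values."""
    # PROTOCOL_TLS_CLIENT starts with verify_mode=CERT_REQUIRED and check_hostname=True
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    if cafile is not None:
        ctx.load_verify_locations(cafile=cafile)  # trust the ES CA (elasticsearch-ca.pem)
    if mode == "full":
        pass  # verify the certificate chain and the hostname
    elif mode == "certificate":
        ctx.check_hostname = False  # verify the chain, skip the hostname
    elif mode == "none":
        ctx.check_hostname = False  # must be turned off before CERT_NONE is allowed
        ctx.verify_mode = ssl.CERT_NONE  # accept any certificate
    else:
        raise ValueError(f"unknown verification mode: {mode!r}")
    return ctx
```

Because the HTTP certificate was issued for the hostname `elasticsearch`, `certificate` or `full` should also work here once the CA path is correct. `none` is the most permissive choice.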

Enter the elasticsearch container and reset the kibana_system password

# Enter the elasticsearch container
docker exec -it elasticsearch /bin/bash

# Reset the kibana_system password

elasticsearch@elasticsearch:~$ ./bin/elasticsearch-reset-password -u kibana_system -i --url https://elasticsearch:9200

WARNING: Owner of file [/usr/share/elasticsearch/config/users] used to be [root], but now is [elasticsearch]

WARNING: Owner of file [/usr/share/elasticsearch/config/users_roles] used to be [root], but now is [elasticsearch]

This tool will reset the password of the [kibana_system] user.

You will be prompted to enter the password.

Please confirm that you would like to continue [y/N]

Then update kibana.yml with the new password:

elasticsearch.username: "kibana_system"

elasticsearch.password: "password_reset"
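To confirm the new kibana_system password before restarting Kibana, use the `GET /_security/_authenticate` API, which returns the user that the supplied credentials resolve to. Below is a minimal stdlib sketch with hypothetical helper names (`authenticated_username`, `whoami`). It assumes the host running it can resolve `elasticsearch` and that `cafile` is your copy of elasticsearch-ca.pem. `password_reset` stands in for whatever password you set.

```python
import base64
import json
import ssl
import urllib.request


def authenticated_username(body: str) -> str:
    """Hypothetical helper: read `username` from a _security/_authenticate response."""
    return json.loads(body)["username"]


def whoami(base_url: str, user: str, password: str, cafile: str, timeout: float = 10.0) -> str:
    """Hypothetical helper: ask ES which user these credentials authenticate as (HTTPS)."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    req = urllib.request.Request(
        base_url.rstrip("/") + "/_security/_authenticate",
        headers={"Authorization": f"Basic {token}"},
    )
    context = ssl.create_default_context(cafile=cafile)  # trust only the ES CA
    with urllib.request.urlopen(req, context=context, timeout=timeout) as resp:
        return authenticated_username(resp.read().decode("utf-8"))
```

`whoami("https://elasticsearch:9200", "kibana_system", "password_reset", "/path/to/elasticsearch-ca.pem")` should return `"kibana_system"`. A wrong password surfaces as `urllib.error.HTTPError` with code 401.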

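Finally, update the directory mappings in docker-compose.yml

This step keeps the files under config/certs on the host in sync with those inside the ELK containers. Check the volumes settings of each ELK service for anything that needs adjusting.

In my case, elasticsearch needed a new mapping.

services:
  elasticsearch:
    restart: always
    image: docker.elastic.co/elasticsearch/elasticsearch:8.15.3
    container_name: elasticsearch
    hostname: elasticsearch
    privileged: true
    volumes:
      # New directory mapping (absolute host path, and a space after the "-")
      - "/usr/local/config/elasticsearch/config/certs:/usr/share/elasticsearch/config/certs"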
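A volume entry is easy to get subtly wrong. As far as I know, Compose reads the host side of a short-syntax entry as a host path only when it is absolute or starts with `.` or `~`. Otherwise it treats it as a named-volume name, so a host part without the leading `/` (such as `usr/local/config/...`) does not mount the intended directory. Below is a small hypothetical checker, `check_bind_mount`, for `HOST:CONTAINER[:MODE]` entries. It is deliberately stricter than Compose and requires both paths to be absolute.

```python
from typing import Tuple


def check_bind_mount(spec: str) -> Tuple[str, str]:
    """Hypothetical helper: split "HOST:CONTAINER[:MODE]" and require absolute paths.

    Stricter than Compose itself (which also accepts ./ and ~ host paths); intended for
    entries like the certs mapping, which should be absolute.
    """
    parts = spec.split(":")
    if len(parts) not in (2, 3):
        raise ValueError(f"expected HOST:CONTAINER[:MODE], got {spec!r}")
    host, container = parts[0], parts[1]
    if not host.startswith("/"):
        raise ValueError(f"host path is not absolute: {host!r}")
    if not container.startswith("/"):
        raise ValueError(f"container path is not absolute: {container!r}")
    return host, container
```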

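Once the configuration changes are done, restart all the containers. `docker-compose restart` does not apply changes made to docker-compose.yml itself (such as the new volume mapping), so bring the stack down and up again:

docker-compose down
docker-compose up -d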

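Note that you do not log in to Kibana with kibana_system/password_reset from the config file. Log in with the elastic superuser and its password instead, i.e. elastic/p0s9Lb3uThEJfN5T0v6x.

Overview of the certificate directories

Files under /usr/local/config/elasticsearch/config/certs on the host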

.

├── elastic-certificates.p12

├── elastic-stack-ca.p12

└── http.p12

0 directories, 3 files

Files under /usr/local/config/logstash/config/certs on the host

elasticsearch-ca.pem

Files under /usr/local/config/kibana/config/certs on the host

.

├── elasticsearch-ca.pem

├── kibana.crt

├── kibana.csr

└── kibana.key

0 directories, 4 files
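Before restarting, you can quickly confirm that each host directory matches this overview. Below is a minimal sketch. The paths and file names come from the listings above, and `EXPECTED`, `missing_files`, and `report` are hypothetical names.

```python
from pathlib import Path
from typing import Dict, List, Sequence

# Hypothetical table: expected contents of each host certs directory, per the overview above
EXPECTED: Dict[str, Sequence[str]] = {
    "/usr/local/config/elasticsearch/config/certs": ["elastic-certificates.p12", "elastic-stack-ca.p12", "http.p12"],
    "/usr/local/config/logstash/config/certs": ["elasticsearch-ca.pem"],
    "/usr/local/config/kibana/config/certs": ["elasticsearch-ca.pem", "kibana.crt", "kibana.csr", "kibana.key"],
}


def missing_files(directory: str, expected: Sequence[str]) -> List[str]:
    """Hypothetical helper: expected names that are not regular files in `directory`."""
    base = Path(directory)
    return [name for name in expected if not (base / name).is_file()]


def report(expected_map: Dict[str, Sequence[str]] = EXPECTED) -> Dict[str, List[str]]:
    """Hypothetical helper: map each directory to its missing files (empty list = complete)."""
    return {d: missing_files(d, names) for d, names in expected_map.items()}
```

Run `report()` on the host; every value should be an empty list before you restart the stack.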

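Finally, many thanks to the following blog posts, which helped a lot. I hope this post helps anyone who needs it too!

[1] docker开启es集群安全认证 (Enabling security authentication for an ES cluster in Docker)

[2] Elasticsearch8.X+ Kibana 8.X安全配置 (Elasticsearch 8.X + Kibana 8.X security configuration)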