主代码:

# -*- coding: utf-8 -*-

import scrapy

from scrapy.linkextractors import LinkExtractor

from scrapy.spiders import CrawlSpider, Rule

from wxapp.items import WxappItem

class WxappSpiderSpider(CrawlSpider):

name = 'wxapp_spider'

allowed_domains = ['wxapp-union.com']

start_urls = ['http://www.wxapp-union.com/portal.php?mod=list&catid=2&page=1']

rules = (

Rule(LinkExtractor(allow=r'.+mod=list&catid=2&page=\d'), follow=True),

Rule(LinkExtractor(allow=r'.+article-.+\.html'), callback="parse_detail", follow=False),

)

def parse_detail(self, response):

title = response.xpath("//h1[@class='ph']/text()").get()

author_p = response.xpath("//p[@class='authors']")

author = author_p.xpath("./a/text()").get()

pub_time = author_p.xpath("./span[@class='time']/text()").get()

content = response.xpath("//td[@id='article_content']//text()").getall()

content = "".join(content).strip()

print("*"*50)

item = WxappItem(title=title, author=author, pub_time=pub_time, content=content)

yield item

pipelines的代码:

from scrapy.exporters import JsonItemExporter

class WxappPipeline(object):

def __init__(self):

self.fp = open("wxjc.json", "wb")

self.exporter = JsonItemExporter(self.fp, ensure_ascii=False, encoding="utf-8")

def process_item(self, item, spider):

self.exporter.export_item(item)

return item

def close_spider(self):

self.fp.close()

setting设置:

BOT_NAME = 'wxapp'

SPIDER_MODULES = ['wxapp.spiders']

NEWSPIDER_MODULE = 'wxapp.spiders'

LOG_LEVEL = "WARNING"

# Crawl responsibly by identifying yourself (and your website) on the user-agent

#USER_AGENT = 'demo (+http://www.yourdomain.com)'

# Obey robots.txt rules

ROBOTSTXT_OBEY = False

# Configure maximum concurrent requests performed by Scrapy (default: 16)

CONCURRENT_REQUESTS = 32

# Configure a delay for requests for the same website (default: 0)

# See https://docs.scrapy.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

DOWNLOAD_DELAY = 0.5

# The download delay setting will honor only one of:

CONCURRENT_REQUESTS_PER_DOMAIN = 16

CONCURRENT_REQUESTS_PER_IP = 16

ITEM_PIPELINES = {

'wxapp.pipelines.WxappPipeline': 300,

}

DEFAULT_REQUEST_HEADERS = {

'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',

'Accept-Language': 'en',

'User-Agent': "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/77.0.3865.75 Safari/537.36"

}

items代码:

import scrapy

class WxappItem(scrapy.Item):

title = scrapy.Field()

author = scrapy.Field()

pub_time = scrapy.Field()

content = scrapy.Field()

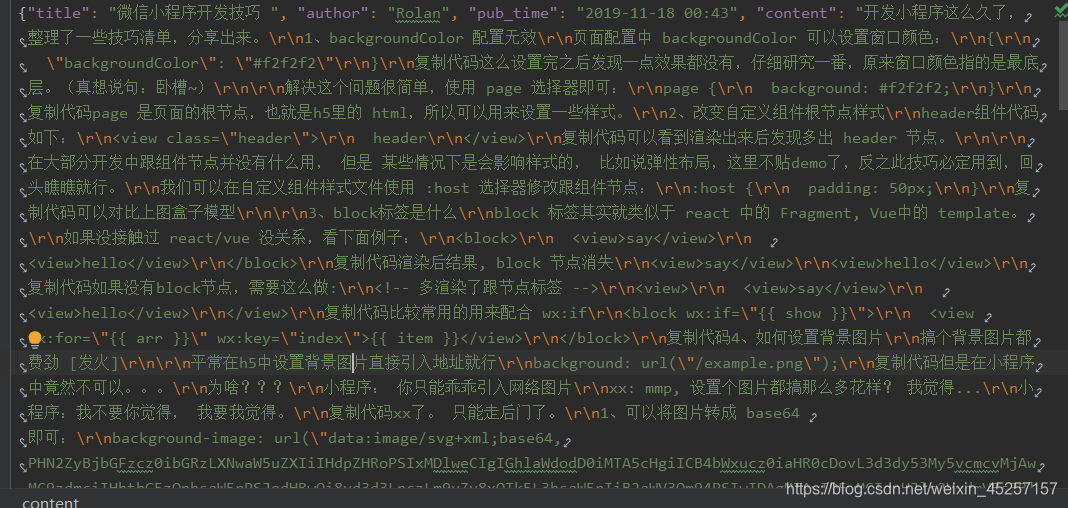

运行结果:

这边因为数据比较多,所以只截取了第一个程序爬取的结果。爬取下来的每一个内容会以json格式来保存下来。