I. Prerequisite: prepare a local AI model

1. First, download Ollama from its official website so that you can run open-source AI models locally.

Website: Ollama (https://ollama.com)

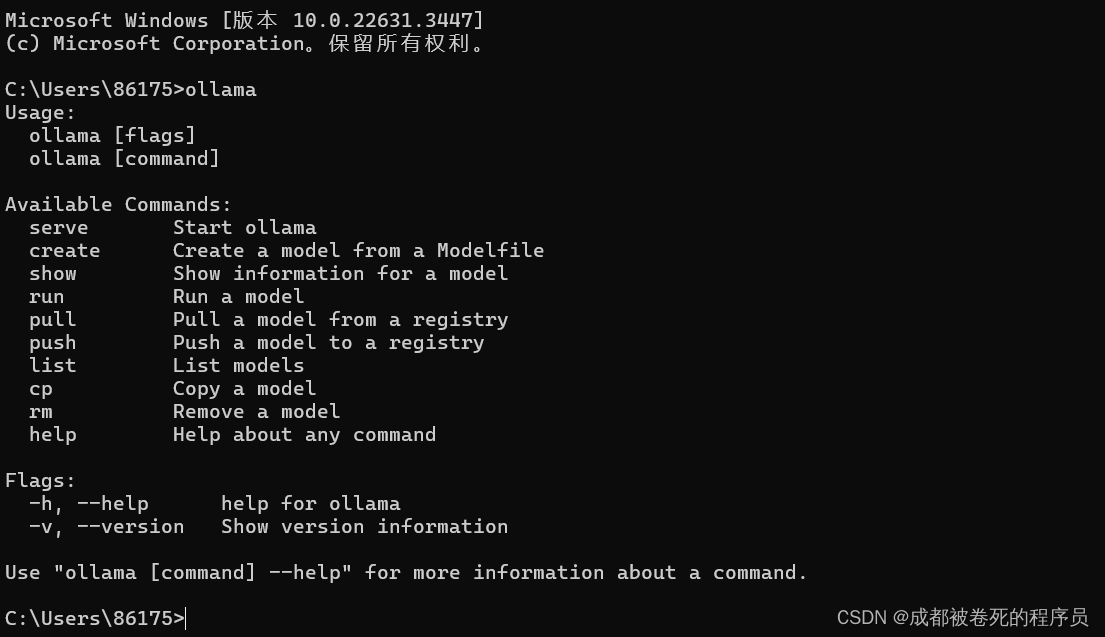

Download and install it, then start Ollama.

Once it has started, run `ollama` in a command prompt (cmd) to see the list of available commands:

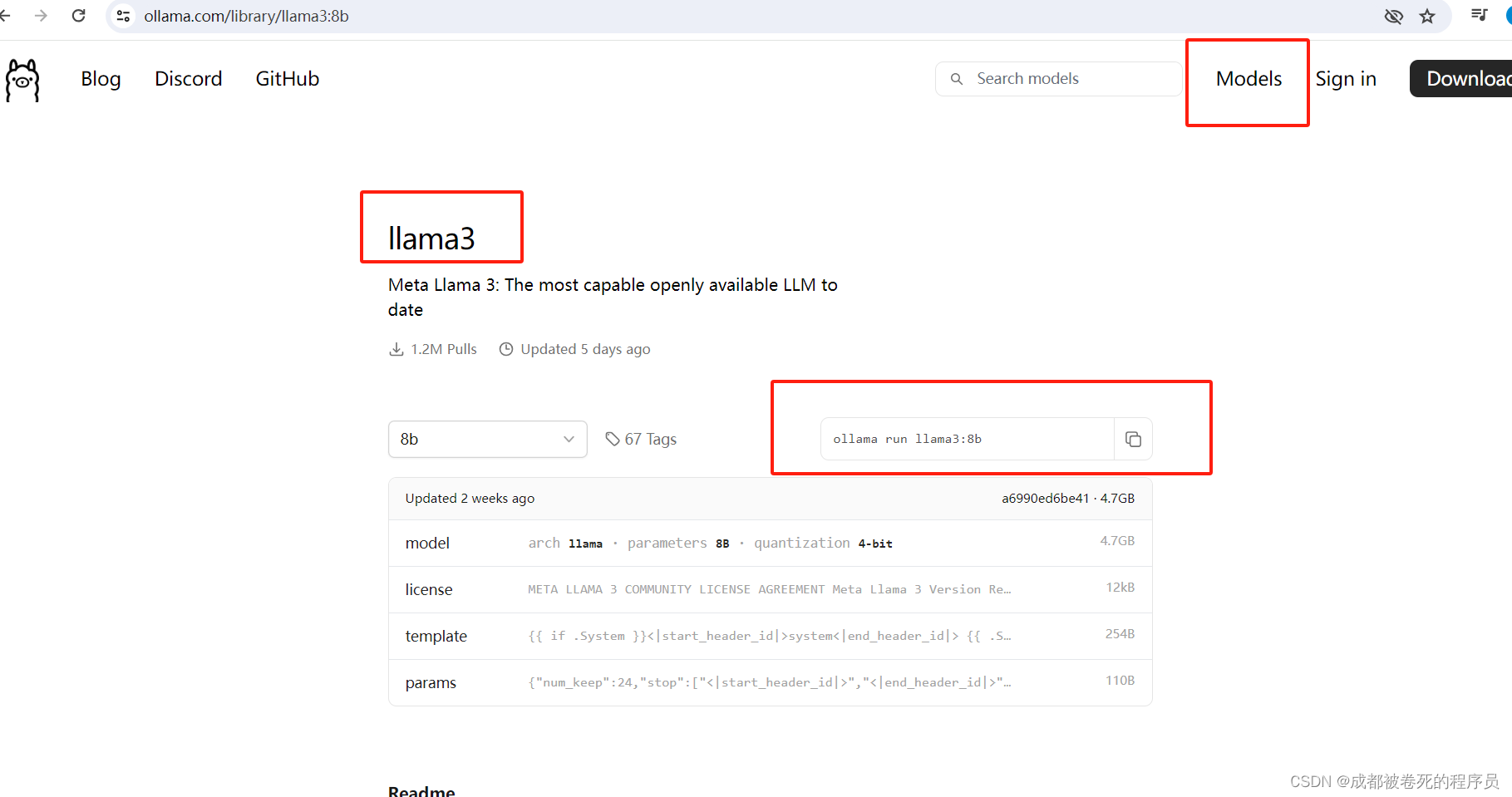

2. Download an AI model

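Next, use Ollama to download an existing model for our own use.

A single command does this. For example, `ollama pull llama3` downloads Llama 3, which is the model the code in this article uses.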
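You can check from Java that Ollama is running and that the model has been downloaded by querying Ollama's `/api/tags` endpoint, which lists local models. The CLI equivalent is `ollama list`. This sketch assumes Ollama's default address `http://localhost:11434`. The names `OllamaModelCheck`, `tagsRequest` and `hasModel` are hypothetical, and the JSON check is a deliberately naive substring search.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Hypothetical helper: checks that a local Ollama server has a given model
class OllamaModelCheck {

    // Builds a GET request for Ollama's model-list endpoint (/api/tags)
    static HttpRequest tagsRequest(String baseUrl) {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/api/tags")).GET().build();
    }

    // Naive check: looks for "name":"<model> in the raw JSON, so "llama3"
    // also matches "llama3:latest". A real project would parse the JSON.
    static boolean hasModel(String tagsJson, String model) {
        return tagsJson.replace(" ", "").contains("\"name\":\"" + model);
    }

    public static void main(String[] args) throws Exception {
        String baseUrl = "http://localhost:11434"; // assumed: Ollama's default address
        HttpResponse<String> resp = HttpClient.newHttpClient()
                .send(tagsRequest(baseUrl), HttpResponse.BodyHandlers.ofString());
        System.out.println("HTTP " + resp.statusCode());
        System.out.println("llama3 installed: " + hasModel(resp.body(), "llama3"));
    }
}
```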

II. Prepare the local code

1. First, add the required dependencies to the pom file:

```xml
<dependencies>
    <dependency>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-web</artifactId>
        <version>3.2.5</version>
    </dependency>
    <dependency>
        <groupId>io.springboot.ai</groupId>
        <artifactId>spring-ai-ollama</artifactId>
        <version>1.0.3</version>
    </dependency>
</dependencies>
```

2. Set up a simple Spring Boot application

Main class:

```java
import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;

@SpringBootApplication
public class Application {

    public static void main(String[] args) {
        SpringApplication.run(Application.class, args);
    }
}
```

YAML configuration:

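```yaml
spring:
  ai:
    ollama:
      base-url: http://localhost:11434
```

Note the `http://` prefix, which the URL needs. Also, `OllamaImpl` below builds its `OllamaApi` by hand, and the no-argument constructor already points at `http://localhost:11434`. This property therefore only takes effect if Spring AI's Ollama Spring Boot auto-configuration is on the classpath.

Implementation: the controller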

```java
import com.rojer.delegete.OllamaDelegete;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.RequestParam;
import org.springframework.web.bind.annotation.RestController;

@RestController
public class OllamaController {

    // Service that talks to the local Ollama model (implemented by OllamaImpl)
    @Autowired
    private OllamaDelegete ollamaDelegete;

    // Plain call: GET /ai/getMsg?msg=... returns the complete answer
    @GetMapping("/ai/getMsg")
    public Object getOllame(@RequestParam(value = "msg") String msg) {
        return ollamaDelegete.getOllame(msg);
    }

    // Streaming call: GET /ai/stream/getMsg?msg=... returns the answer fragments
    @GetMapping("/ai/stream/getMsg")
    public Object getOllameByStream(@RequestParam(value = "msg") String msg) {
        return ollamaDelegete.getOllameByStream(msg);
    }
}
```

Implementation: the service class (impl)
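Both the controller and `OllamaImpl` depend on an `OllamaDelegete` interface that the article never shows. Based only on how the two classes use it, a minimal version might look like the following. This is a reconstruction, not the author's file. The article's imports put it in package `com.rojer.delegete`. It is shown here without `public` or a package line only so that it compiles as a standalone snippet.

```java
// Hypothetical reconstruction of OllamaDelegete, inferred from how
// OllamaController calls it and how OllamaImpl overrides it.
// In the project, declare it public in com/rojer/delegete/OllamaDelegete.java.
interface OllamaDelegete {

    // Plain (blocking) call: returns the model's complete answer
    Object getOllame(String msg);

    // Streaming call: returns the answer as the fragments the model emitted
    Object getOllameByStream(String msg);
}
```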

import com.rojer.delegete.OllamaDelegete;

import org.springframework.ai.chat.ChatResponse;

import org.springframework.ai.chat.prompt.Prompt;

import org.springframework.ai.ollama.OllamaChatClient;

import org.springframework.ai.ollama.api.OllamaApi;

import org.springframework.ai.ollama.api.OllamaOptions;

import org.springframework.stereotype.Service;

import reactor.core.publisher.Flux;

import java.util.List;

import java.util.stream.Collectors;

@Service

public class OllamaImpl implements OllamaDelegete {

OllamaApi ollamaApi;

OllamaChatClient chatClient;

{

// 实例化ollama

ollamaApi = new OllamaApi();

OllamaOptions options = new OllamaOptions();

options.setModel("llama3");

chatClient = new OllamaChatClient(ollamaApi).withDefaultOptions(options);

}

/**

* 普通文本调用

*

* @param msg

* @return

*/

@Override

public Object getOllame(String msg) {

Prompt prompt = new Prompt(msg);

ChatResponse call = chatClient.call(prompt);

return call.getResult().getOutput().getContent();

}

/**

* 流式调用

*

* @param msg

* @return

*/

@Override

public Object getOllameByStream(String msg) {

Prompt prompt = new Prompt(msg);

Flux<ChatResponse> stream = chatClient.stream(prompt);

List<String> result = stream.toStream().map(a -> {

return a.getResult().getOutput().getContent();

}).collect(Collectors.toList());

return result;

}

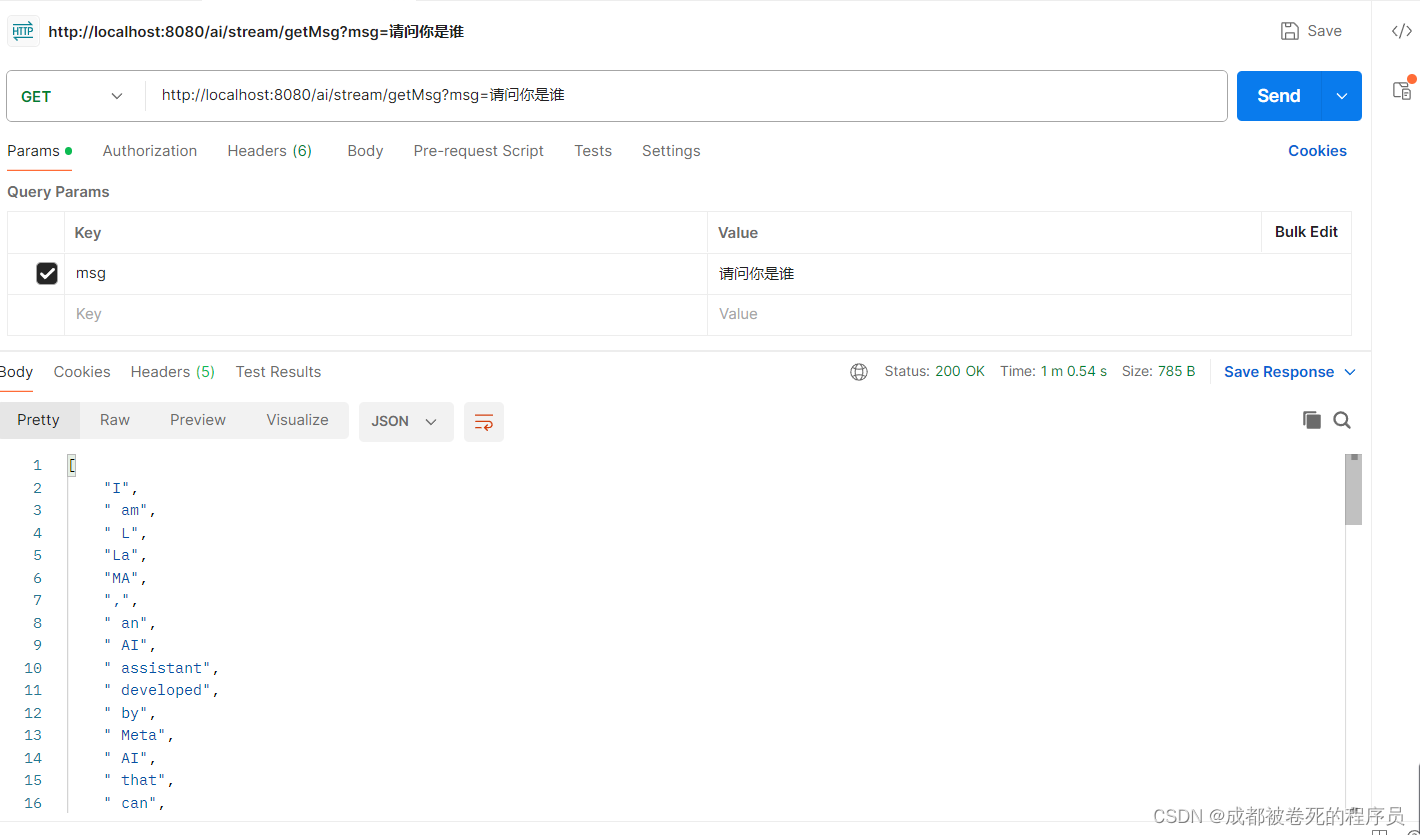

}三,调用展示
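You can open the two endpoints directly in a browser, for example `http://localhost:8080/ai/getMsg?msg=hello`. To call them from Java instead, the JDK-only sketch below would work. It assumes the application runs on Spring Boot's default port 8080, and the class and method names are hypothetical. It URL-encodes the message so that spaces and Chinese text survive the query string.

```java
import java.net.URI;
import java.net.URLEncoder;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.charset.StandardCharsets;

// Hypothetical client for the two endpoints defined in OllamaController
class OllamaEndpointClient {

    // Assumption: the Spring Boot app listens on its default port 8080
    static final String BASE = "http://localhost:8080";

    // Builds e.g. http://localhost:8080/ai/getMsg?msg=... with msg URL-encoded
    static URI endpoint(String path, String msg) {
        return URI.create(BASE + path + "?msg=" + URLEncoder.encode(msg, StandardCharsets.UTF_8));
    }

    // Sends a GET request and returns the response body as a string
    static String get(URI uri) throws Exception {
        HttpRequest req = HttpRequest.newBuilder(uri).GET().build();
        return HttpClient.newHttpClient().send(req, HttpResponse.BodyHandlers.ofString()).body();
    }

    public static void main(String[] args) throws Exception {
        String msg = "Hello, please introduce yourself";
        System.out.println(get(endpoint("/ai/getMsg", msg)));        // full answer as text
        System.out.println(get(endpoint("/ai/stream/getMsg", msg))); // JSON array of fragments
    }
}
```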

First, the result of a plain (non-streaming) call.

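Next, the streaming call. When we chat with an AI, the answer does not appear all at once but piece by piece, and the fragments returned here are those pieces. Because `getOllameByStream` collects the fragments into a list, this endpoint returns all of them together as one JSON array.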

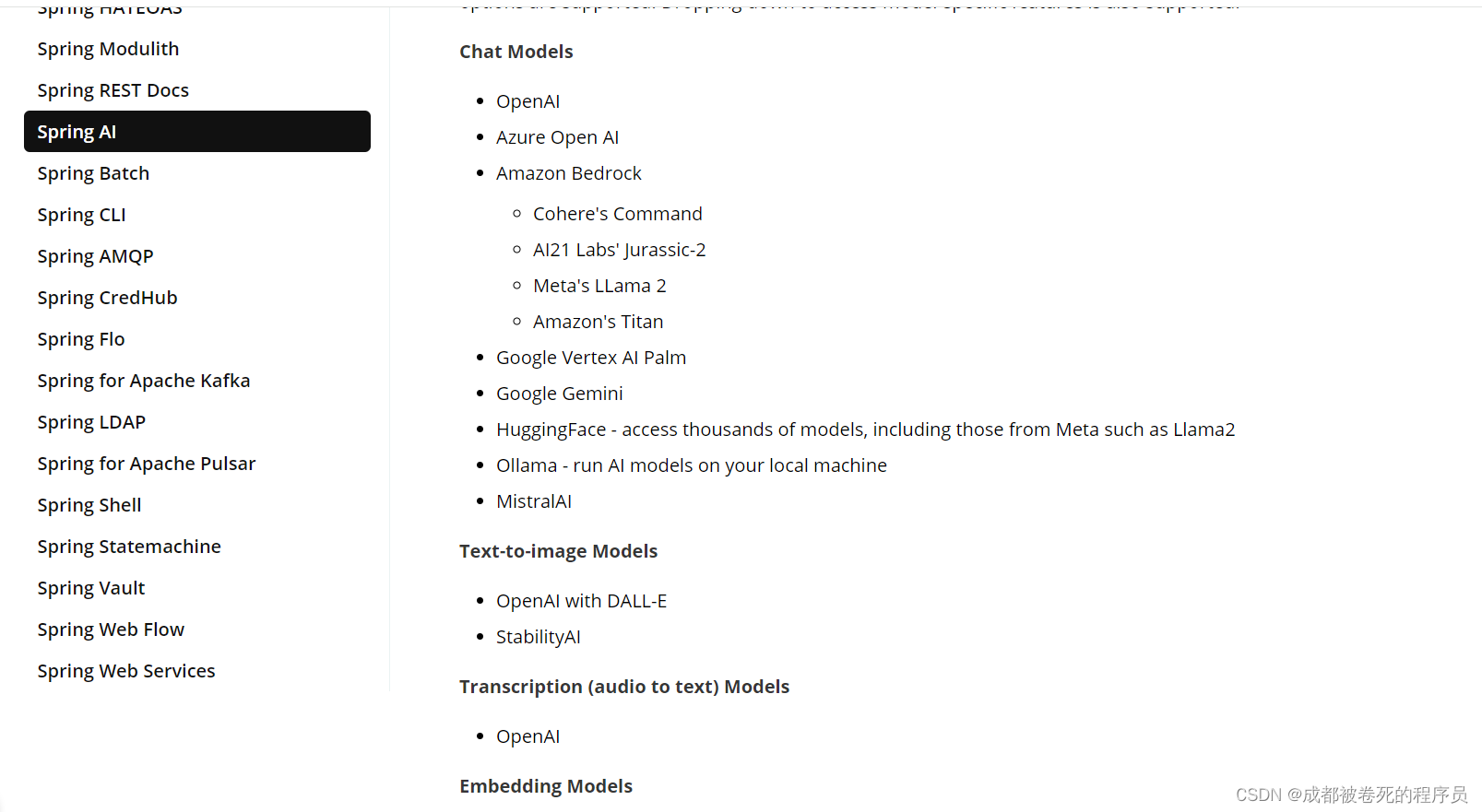
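Under the hood, Ollama itself streams an answer as newline-delimited JSON. Each line of the `/api/generate` response carries the next fragment in its `response` field, and the last line has `"done":true`. To watch the fragments arrive one by one without Spring, a dependency-free sketch can read that stream line by line. It assumes Ollama's default address and the `llama3` model. The class name and the hand-rolled `extractResponse` parser are illustrative; a real project would use a JSON library such as Jackson.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Hypothetical example: read Ollama's native streaming output directly
class OllamaRawStream {

    // Pulls the string value of "response" out of one NDJSON line.
    // Minimal hand-rolled parsing to stay dependency-free.
    static String extractResponse(String line) {
        String key = "\"response\":\"";
        int i = line.indexOf(key);
        if (i < 0) return "";
        StringBuilder sb = new StringBuilder();
        for (i += key.length(); i < line.length(); i++) {
            char c = line.charAt(i);
            if (c == '"') break;                 // unescaped quote ends the value
            if (c != '\\') { sb.append(c); continue; }
            char e = line.charAt(++i);           // character after the backslash
            switch (e) {
                case 'n' -> sb.append('\n');
                case 't' -> sb.append('\t');
                case 'r' -> sb.append('\r');
                case 'u' -> { sb.append((char) Integer.parseInt(line.substring(i + 1, i + 5), 16)); i += 4; }
                default -> sb.append(e);         // escaped quote, backslash or slash
            }
        }
        return sb.toString();
    }

    public static void main(String[] args) throws Exception {
        // Assumption: Ollama on its default port; streaming is on by default
        String body = "{\"model\":\"llama3\",\"prompt\":\"Why is the sky blue?\"}";
        HttpRequest req = HttpRequest.newBuilder(URI.create("http://localhost:11434/api/generate"))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(body))
                .build();
        // ofLines() exposes each line as it arrives, so the answer prints piece by piece
        HttpClient.newHttpClient()
                .send(req, HttpResponse.BodyHandlers.ofLines())
                .body()
                .forEach(line -> { System.out.print(extractResponse(line)); System.out.flush(); });
        System.out.println();
    }
}
```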

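This article does not dig deeply into the code; it only covers basic usage. If I get the chance, I will write about training your own model later. You can also check the Spring website to see which AI models are currently supported: Spring AI. If you found this helpful, please give it a like and a follow. Thank you!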