TensorFlow-0-Installing and Verifying TensorFlow with GPU Support

0. Review and Overview

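This article walks through installing TensorFlow and verifying that the installation succeeded. It covers both the GPU-enabled and the CPU-only installation and verification on Ubuntu 16.04.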

System overview:

- Ubuntu 16.04 64-bit

- NVIDIA GTX 770M

Contents:

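- Install the CUDA Toolkit following NVIDIA's official documentation

- Verify the CUDA installation

- Install TensorFlow (with GPU support) following the official TensorFlow documentation

- Verify the TensorFlow installation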

The official documentation is long, so this article condenses it for Ubuntu users.

1. Recommended TensorFlow Learning Resources

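My own first introduction to TensorFlow, which I recommend, was 周莫烦 (Morvan)'s basic TensorFlow tutorial series on YouTube. You will need to get around the firewall; click here to watch it!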

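It has lots of positive comments and is very beginner-friendly. There are currently about 20 episodes; consider subscribing, and hopefully he keeps updating it.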

2. Installing TensorFlow

First, get around the firewall (use a VPN or proxy);

Open the Linux installation page on the TensorFlow website. The page offers two options: TensorFlow (hereafter TF) without GPU support, and TF with GPU support. Here we install TF with GPU support;

Check that the system's hardware and software meet NVIDIA's requirements;

The complete pre-installation checks are as follows. Check that the system's GPU is on the list of CUDA-enabled GPUs:

Run `lspci | grep -i nvidia` to see the GPU model. If there is no output, run `update-pciids` first and then rerun the previous command;

Then check the list of CUDA-enabled GPUs to confirm that your GPU is supported;

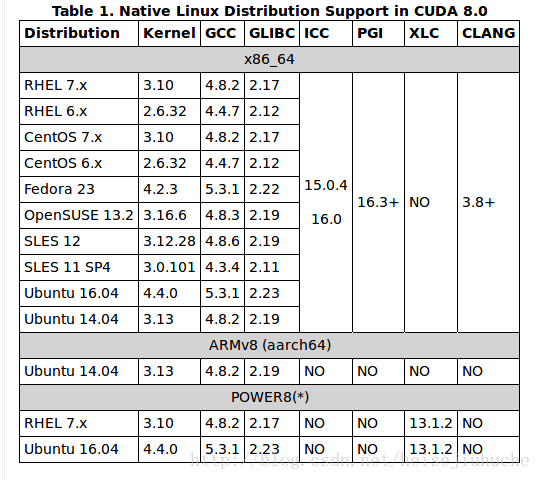
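The `lspci | grep -i nvidia` check can also be scripted. Below is a minimal Python 3 sketch (the function names are hypothetical, not part of any NVIDIA tool) that runs `lspci` and keeps the lines mentioning NVIDIA case-insensitively, which is what `grep -i nvidia` does. It returns an empty list if `lspci` cannot be run. Comparing the model against NVIDIA's GPU list is still a manual step.

```python
from __future__ import annotations

import subprocess


def nvidia_devices(lspci_output: str) -> list[str]:
    """Keep the lspci lines that mention NVIDIA, like `grep -i nvidia`."""
    return [line for line in lspci_output.splitlines() if "nvidia" in line.lower()]


def list_nvidia_devices() -> list[str]:
    """Run lspci and filter its output; returns [] if lspci cannot be run."""
    try:
        result = subprocess.run(
            ["lspci"], capture_output=True, text=True, check=True
        )
    except (OSError, subprocess.CalledProcessError):
        return []
    return nvidia_devices(result.stdout)


if __name__ == "__main__":
    devices = list_nvidia_devices()
    # An empty result may mean an outdated PCI ID database: try update-pciids.
    print("\n".join(devices) if devices else "No NVIDIA device found")
```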

Check that the Linux version is on the CUDA-supported list

Run `uname -m && cat /etc/*release` to see the architecture and Linux version;

Linux distributions supported by CUDA 8
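A sketch of the Linux-version check in Python 3, assuming the distribution ships `/etc/os-release` (Ubuntu 16.04 does) instead of reading every `/etc/*release` file. `platform.machine()` returns the same value as `uname -m`. The `SUPPORTED` set is an assumption that only contains the Ubuntu 16.04 setup used in this article; fill it in from NVIDIA's CUDA 8 table for other systems.

```python
from __future__ import annotations

import platform

# Assumption: only the setup from this article; extend from NVIDIA's CUDA 8 table.
SUPPORTED = {("ubuntu", "16.04")}


def parse_os_release(text: str) -> dict[str, str]:
    """Parse KEY=value lines as found in /etc/os-release (quotes stripped)."""
    info = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        info[key] = value.strip().strip('"').strip("'")
    return info


def is_supported(os_release_text: str, machine: str | None = None) -> bool:
    """True if the machine is x86_64 and (ID, VERSION_ID) is in SUPPORTED."""
    machine = machine or platform.machine()  # same value as `uname -m`
    info = parse_os_release(os_release_text)
    key = (info.get("ID", "").lower(), info.get("VERSION_ID", ""))
    return machine == "x86_64" and key in SUPPORTED


if __name__ == "__main__":
    try:
        with open("/etc/os-release") as f:
            print("supported:", is_supported(f.read()))
    except FileNotFoundError:
        print("/etc/os-release not found")
```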

Check that gcc is installed

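Run `gcc --version` to check whether gcc is installed. If it reports an error, install the corresponding development tools package (on Ubuntu, `build-essential`);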
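If you want the gcc check in a script, a minimal sketch follows. The helper names are hypothetical, and it assumes the version number is the last dotted number on the first line of `gcc --version`, which holds for the usual Ubuntu format such as `gcc (Ubuntu 5.4.0-6ubuntu1~16.04.4) 5.4.0 20160609`.

```python
from __future__ import annotations

import re
import shutil
import subprocess


def parse_gcc_version(first_line: str) -> str | None:
    """Return the last X.Y or X.Y.Z number on the first line of `gcc --version`."""
    matches = re.findall(r"\b(\d+\.\d+(?:\.\d+)?)\b", first_line)
    return matches[-1] if matches else None


def gcc_version() -> str | None:
    """Installed gcc version, or None if gcc is not on PATH."""
    if shutil.which("gcc") is None:
        return None
    out = subprocess.run(["gcc", "--version"], capture_output=True, text=True).stdout
    lines = out.splitlines()
    return parse_gcc_version(lines[0]) if lines else None


if __name__ == "__main__":
    version = gcc_version()
    print(f"gcc {version}" if version else "gcc not found")
```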

Check that the kernel headers and the necessary development packages are installed;

Install them with `sudo apt-get install linux-headers-$(uname -r)`;

For other Linux distributions, see Section 2.4 of the CUDA Installation Guide;
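The kernel-header check can be sketched the same way. On Ubuntu, the `linux-headers-$(uname -r)` package installs a `/usr/src/linux-headers-<release>` directory, so this hypothetical helper only tests for that directory; `platform.release()` gives the same string as `uname -r`. Other distributions lay their headers out differently, so treat it as Ubuntu-specific.

```python
from __future__ import annotations

import os
import platform


def kernel_headers_installed(release: str | None = None, src_root: str = "/usr/src") -> bool:
    """True if <src_root>/linux-headers-<release> exists (Ubuntu's layout)."""
    release = release or platform.release()  # same string as `uname -r`
    return os.path.isdir(os.path.join(src_root, f"linux-headers-{release}"))


if __name__ == "__main__":
    if kernel_headers_installed():
        print("kernel headers found")
    else:
        print("missing: sudo apt-get install linux-headers-" + platform.release())
```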

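If all the conditions above are met, continue with the CUDA Toolkit installation below. Otherwise, skip ahead to the virtualenv installation and install

the CPU-only TensorFlow;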

GPU support requires the CUDA Toolkit. NVIDIA's official documentation covers the installation in detail;

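Download the latest CUDA Toolkit from NVIDIA. At the bottom of the download page, click, in order,

Linux -> x86_64 -> Ubuntu -> 16.04 -> deb (local), then click Download. When the download finishes, install CUDA by running the following commands in a terminal: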

    sudo dpkg -i cuda-repo-ubuntu1604-8-0-local-ga2_8.0.61-1_amd64.deb
    sudo apt-get update
    sudo apt-get install cuda

Install the CUDA dependency library:

    sudo apt-get install libcupti-dev

Install TF with the officially recommended virtualenv method. The complete steps are as follows. Install pip, virtualenv, and the Python development package:

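    sudo apt-get install python-pip python-dev python-virtualenv    # for Python 2.7
    sudo apt-get install python3-pip python3-dev python-virtualenv  # for Python 3.n

Create the virtualenv environment: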

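    virtualenv --system-site-packages <tensorflow>

Here `<tensorflow>` is the target directory of the environment; the commands below assume `~/tensorflow`. This command creates a virtual environment for the system's default Python version. To use a specific Python version, add the `--python` option:

    virtualenv --system-site-packages --python=<path-to-python-executable> <tensorflow>

Activate the virtual environment: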

    $ source ~/tensorflow/bin/activate      # bash, sh, ksh, or zsh
    $ source ~/tensorflow/bin/activate.csh  # csh or tcsh

Install TF with the command that matches your setup:

    (tensorflow)$ pip install --upgrade tensorflow       # for Python 2.7
    (tensorflow)$ pip3 install --upgrade tensorflow      # for Python 3.n
    (tensorflow)$ pip install --upgrade tensorflow-gpu   # for Python 2.7 and GPU
    (tensorflow)$ pip3 install --upgrade tensorflow-gpu  # for Python 3.n and GPU

If this step fails, your pip version may be older than 8.1. Upgrade pip with:

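    pip install --upgrade pip          # upgrade pip, then retry the previous step

If you get a permission error, use:

    sudo -H pip install --upgrade pip  # upgrade pip, then retry the previous step

At this point, both CUDA and TF are installed.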
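The choice among the four `pip` commands and the pip 8.1 requirement can be encoded in a small helper, sketched below with hypothetical names. It only builds the command string and compares version numbers numerically (so `9.0.1` counts as newer than `8.1`); it does not install anything.

```python
from __future__ import annotations

import re
import sys


def tf_install_command(python_major: int, gpu: bool) -> str:
    """Return the matching pip command from the list above."""
    pip = "pip3" if python_major == 3 else "pip"
    package = "tensorflow-gpu" if gpu else "tensorflow"
    return f"{pip} install --upgrade {package}"


def pip_needs_upgrade(pip_version: str, minimum: tuple[int, ...] = (8, 1)) -> bool:
    """True if pip_version is older than `minimum` (8.1 here), compared numerically."""
    parts = []
    for piece in pip_version.split("."):
        match = re.match(r"\d+", piece)  # keep the numeric prefix, e.g. "0b1" -> 0
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts) < minimum


if __name__ == "__main__":
    print(tf_install_command(sys.version_info.major, gpu=True))
```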

3. Verifying the CUDA and TF Installations

Verifying the CUDA installation

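1) Reboot the system so that the NVIDIA GPU loads the newly installed driver. After rebooting, run `cat /proc/driver/nvidia/version`. Output like the following means the GPU driver loaded successfully:

    NVRM version: NVIDIA UNIX x86_64 Kernel Module 375.26 Thu Dec 8 18:36:43 PST 2016
    GCC version: gcc version 5.4.0 20160609 (Ubuntu 5.4.0-6ubuntu1~16.04.4)

2) Configure the environment variables: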

    # cuda env
    export PATH=/usr/local/cuda-8.0/bin${PATH:+:${PATH}}
    export LD_LIBRARY_PATH=/usr/local/cuda-8.0/lib64/${LD_LIBRARY_PATH:+:${LD_LIBRARY_PATH}}
    export LPATH=/usr/lib/nvidia-375:$LPATH
    export LIBRARY_PATH=/usr/lib/nvidia-375:$LIBRARY_PATH
    export CUDA_HOME=/usr/local/cuda-8.0

You must set these environment variables (adding them to `~/.bashrc` makes them persist); otherwise, building the samples in the next steps fails with an error like:

    Makefile:346: recipe for target 'cudaDecodeGL' failed

3) Install the CUDA sample programs:

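    cuda-install-samples-8.0.sh <dir>

With the `PATH` from step 2) set, this script can be run directly; `<dir>` is a directory of your choice. If it succeeds, it creates a `NVIDIA_CUDA-8.0_Samples` directory inside `<dir>`.

4) Build the sample programs to verify the CUDA installation: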

Before building, make sure the environment variables from step 2) are set correctly and that step 1) shows the GPU driver version. Go into the `NVIDIA_CUDA-8.0_Samples` directory and run `make`. A successful build ends with output like:

    /usr/local/cuda-8.0/bin/nvcc -ccbin g++ -I../../common/inc -I../common/UtilNPP -I../common/FreeImage/include -m64 -gencode arch=compute_20,code=compute_20 -o jpegNPP.o -c jpegNPP.cpp
    nvcc warning : The 'compute_20', 'sm_20', and 'sm_21' architectures are deprecated, and may be removed in a future release (Use -Wno-deprecated-gpu-targets to suppress warning).
    /usr/local/cuda-8.0/bin/nvcc -ccbin g++ -m64 -gencode arch=compute_20,code=compute_20 -o jpegNPP jpegNPP.o -L../common/FreeImage/lib -L../common/FreeImage/lib/linux -L../common/FreeImage/lib/linux/x86_64 -lnppi -lnppc -lfreeimage
    nvcc warning : The 'compute_20', 'sm_20', and 'sm_21' architectures are deprecated, and may be removed in a future release (Use -Wno-deprecated-gpu-targets to suppress warning).
    mkdir -p ../../bin/x86_64/linux/release
    cp jpegNPP ../../bin/x86_64/linux/release
    make[1]: Leaving directory '/home/yuanzimiao/Downloads/NVIDIA_CUDA-8.0_Samples/7_CUDALibraries/jpegNPP'
    Finished building CUDA samples

Note: you can check the CUDA version with `nvcc -V`.

5) Run the sample programs:

Go into the `bin` directory (`bin/x86_64/linux/release`, where the build above copies the binaries) and run `./deviceQuery`. If CUDA is installed and configured correctly, the output looks like this:

    ./deviceQuery Starting...
    CUDA Device Query (Runtime API) version (CUDART static linking)
    Detected 1 CUDA Capable device(s)
    Device 0: "GeForce GTX 770M"
      CUDA Driver Version / Runtime Version          8.0 / 8.0
      CUDA Capability Major/Minor version number:    3.0
      Total amount of global memory:                 3017 MBytes (3163357184 bytes)
      ( 5) Multiprocessors, (192) CUDA Cores/MP:     960 CUDA Cores
      GPU Max Clock rate:                            797 MHz (0.80 GHz)
      Memory Clock rate:                             2004 Mhz
      Memory Bus Width:                              192-bit
      L2 Cache Size:                                 393216 bytes
      Maximum Texture Dimension Size (x,y,z)         1D=(65536), 2D=(65536, 65536), 3D=(4096, 4096, 4096)
      Maximum Layered 1D Texture Size, (num) layers  1D=(16384), 2048 layers
      Maximum Layered 2D Texture Size, (num) layers  2D=(16384, 16384), 2048 layers
      Total amount of constant memory:               65536 bytes
      Total amount of shared memory per block:       49152 bytes
      Total number of registers available per block: 65536
      Warp size:                                     32
      Maximum number of threads per multiprocessor:  2048
      Maximum number of threads per block:           1024
      Max dimension size of a thread block (x,y,z): (1024, 1024, 64)
      Max dimension size of a grid size (x,y,z):    (2147483647, 65535, 65535)
      Maximum memory pitch:                          2147483647 bytes
      Texture alignment:                             512 bytes
      Concurrent copy and kernel execution:          Yes with 1 copy engine(s)
      Run time limit on kernels:                     Yes
      Integrated GPU sharing Host Memory:            No
      Support host page-locked memory mapping:       Yes
      Alignment requirement for Surfaces:            Yes
      Device has ECC support:                        Disabled
      Device supports Unified Addressing (UVA):      Yes
      Device PCI Domain ID / Bus ID / location ID:   0 / 1 / 0
      Compute Mode:
         < Default (multiple host threads can use ::cudaSetDevice() with device simultaneously) >
    deviceQuery, CUDA Driver = CUDART, CUDA Driver Version = 8.0, CUDA Runtime Version = 8.0, NumDevs = 1, Device0 = GeForce GTX 770M
    Result = PASS

`Result = PASS` means the check passed. Next, run `./bandwidthTest` to confirm that the system and the CUDA components communicate correctly. Normal output looks like this:

    [CUDA Bandwidth Test] - Starting...
    Running on...
    Device 0: GeForce GTX 770M
    Quick Mode
    Host to Device Bandwidth, 1 Device(s)
    PINNED Memory Transfers
      Transfer Size (Bytes)    Bandwidth(MB/s)
      33554432                 11534.4
    Device to Host Bandwidth, 1 Device(s)
    PINNED Memory Transfers
      Transfer Size (Bytes)    Bandwidth(MB/s)
      33554432                 11768.0
    Device to Device Bandwidth, 1 Device(s)
    PINNED Memory Transfers
      Transfer Size (Bytes)    Bandwidth(MB/s)
      33554432                 72735.8
    Result = PASS
    NOTE: The CUDA Samples are not meant for performance measurements. Results may vary when GPU Boost is enabled.

Again, `Result = PASS` means the check passed.

Note: if you hit errors while running the samples, SELinux may be enabled on the system, or required NVIDIA files may be missing; see Section 6.2.2.3 of the official documentation.

This completes the CUDA verification.
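The checks in this section can be collected into one script. The sketch below is a hypothetical helper, not an NVIDIA tool: it extracts the driver version from `/proc/driver/nvidia/version`, looks for the `Result = PASS` line that `deviceQuery` and `bandwidthTest` print, and reports which of the step 2) variables (`PATH`, `LD_LIBRARY_PATH`, `CUDA_HOME`) lack the CUDA 8.0 paths. `CUDA_ROOT` is an assumption hard-coded to the `/usr/local/cuda-8.0` prefix used above.

```python
from __future__ import annotations

import os
import re

# Assumption: the CUDA 8.0 prefix used in step 2); change it for other versions.
CUDA_ROOT = "/usr/local/cuda-8.0"


def driver_version(proc_text: str) -> str | None:
    """Extract the kernel-module version (e.g. 375.26) from /proc/driver/nvidia/version."""
    match = re.search(r"Kernel Module\s+(\d+(?:\.\d+)+)", proc_text)
    return match.group(1) if match else None


def sample_passed(output: str) -> bool:
    """True if a CUDA sample's output contains a 'Result = PASS' line."""
    return re.search(r"^Result = PASS\s*$", output, re.MULTILINE) is not None


def missing_cuda_env(environ: dict[str, str] | None = None) -> list[str]:
    """Names of step-2) variables that do not contain the expected CUDA paths."""
    env = os.environ if environ is None else environ
    problems = []
    # Colon-separated, as on Linux; trailing slashes are ignored.
    path_dirs = [p.rstrip("/") for p in env.get("PATH", "").split(":")]
    if f"{CUDA_ROOT}/bin" not in path_dirs:
        problems.append("PATH")
    lib_dirs = [p.rstrip("/") for p in env.get("LD_LIBRARY_PATH", "").split(":")]
    if f"{CUDA_ROOT}/lib64" not in lib_dirs:
        problems.append("LD_LIBRARY_PATH")
    if env.get("CUDA_HOME", "").rstrip("/") != CUDA_ROOT:
        problems.append("CUDA_HOME")
    return problems


if __name__ == "__main__":
    try:
        with open("/proc/driver/nvidia/version") as f:
            print("driver version:", driver_version(f.read()))
    except FileNotFoundError:
        print("NVIDIA kernel module not loaded")
    print("variables to fix:", missing_cuda_env() or "none")
```

To check a sample, capture its output (for example with `subprocess.run(["./deviceQuery"], capture_output=True, text=True).stdout`) and pass it to `sample_passed`.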

Verifying the TensorFlow installation

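1) Activate the virtual environment, then run:

    $ python
    >>> import tensorflow as tf
    >>> hello = tf.constant('Hello, TensorFlow!')
    >>> sess = tf.Session()
    >>> print(sess.run(hello))

If it prints `Hello, TensorFlow!`, TF is installed correctly (under Python 3 it prints `b'Hello, TensorFlow!'`, because string tensors come back as bytes).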
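Before running the snippet above, it can help to confirm that you are inside the activated virtualenv and that a `tensorflow` package is visible. A minimal Python 3 sketch with hypothetical helper names: `in_virtualenv` covers both legacy virtualenv, which sets `sys.real_prefix`, and newer virtualenv/venv, which make `sys.prefix` differ from `sys.base_prefix`. `tensorflow_installed` uses `importlib.util.find_spec`, so it does not pay the cost of importing TF, and `as_text` decodes the bytes that `sess.run` returns for a string tensor.

```python
from __future__ import annotations

import importlib.util
import sys


def in_virtualenv() -> bool:
    """True when running inside a virtualenv or venv."""
    # Legacy virtualenv (< 20) sets sys.real_prefix; venv-style envs change sys.prefix.
    return hasattr(sys, "real_prefix") or sys.prefix != getattr(sys, "base_prefix", sys.prefix)


def tensorflow_installed() -> bool:
    """True if a `tensorflow` package is importable, without importing it."""
    return importlib.util.find_spec("tensorflow") is not None


def as_text(value: bytes | str) -> str:
    """Decode the bytes returned for a string tensor; pass str through unchanged."""
    return value.decode("utf-8") if isinstance(value, bytes) else value


if __name__ == "__main__":
    print("inside virtualenv:", in_virtualenv())
    print("tensorflow importable:", tensorflow_installed())
```

With it, `print(as_text(sess.run(hello)))` prints the plain string on Python 3 as well.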

Edit 1

With GPU-enabled TF, `import tensorflow` printed the following error log:

    >>> import tensorflow as tf
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcublas.so.8.0 locally
    I tensorflow/stream_executor/dso_loader.cc:126] Couldn't open CUDA library libcudnn.so.5. LD_LIBRARY_PATH: /usr/local/cuda-8.0/lib64/
    I tensorflow/stream_executor/cuda/cuda_dnn.cc:3517] Unable to load cuDNN DSO
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcufft.so.8.0 locally
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcuda.so.1 locally
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcurand.so.8.0 locally

The key line is `Couldn't open CUDA library libcudnn.so.5. LD_LIBRARY_PATH: /usr/local/cuda-8.0/lib64/`. A Google search showed that this happens because the cuDNN package is not installed;
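To spot this kind of failure without reading the whole log, the sketch below (hypothetical helpers, assuming the `Couldn't open CUDA library <name>. LD_LIBRARY_PATH: ...` message format shown above) pulls the missing library names out of the log and searches each `LD_LIBRARY_PATH` directory for a file of that name.

```python
from __future__ import annotations

import os
import re

# Assumed message format, copied from the log above:
#   Couldn't open CUDA library libcudnn.so.5. LD_LIBRARY_PATH: /usr/local/cuda-8.0/lib64/
MISSING_LIB = re.compile(r"Couldn't open CUDA library (\S+)\. LD_LIBRARY_PATH:")


def missing_cuda_libraries(log: str) -> list[str]:
    """Return the library names TensorFlow reported it could not open."""
    return [m.group(1) for m in MISSING_LIB.finditer(log)]


def find_library(name: str, ld_library_path: str) -> str | None:
    """Return the first directory in a colon-separated path list that contains `name`."""
    for directory in ld_library_path.split(":"):
        # isfile follows symlinks, e.g. when libcudnn.so.5 points to a fully versioned file.
        if directory and os.path.isfile(os.path.join(directory, name)):
            return directory
    return None


if __name__ == "__main__":
    search_path = os.environ.get("LD_LIBRARY_PATH", "")
    print("libcudnn.so.5 found in:", find_library("libcudnn.so.5", search_path))
```

After installing cuDNN, `find_library("libcudnn.so.5", os.environ.get("LD_LIBRARY_PATH", ""))` should return the CUDA `lib64` directory; `None` means the library is still not on the search path.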

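Click here to go to the download page;

Downloading cuDNN requires an NVIDIA account. Registration asks a few survey questions; any answers will do;

Once registered, you can download it;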

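After the download finishes, run:

    sudo tar -xvf cudnn-8.0-linux-x64-v5.1-rc.tgz -C /usr/local

This assumes CUDA is installed under `/usr/local/cuda`: the archive unpacks into a top-level `cuda/` directory, so its headers and libraries land in `/usr/local/cuda/include` and `/usr/local/cuda/lib64`. Then import again: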

    >>> import tensorflow as tf
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcublas.so.8.0 locally
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcudnn.so.5 locally
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcufft.so.8.0 locally
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcuda.so.1 locally
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcurand.so.8.0 locally
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE3 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.1 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.2 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX2 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use FMA instructions, but these are available on your machine and could speed up CPU computations.
    I tensorflow/stream_executor/cuda/cuda_gpu_executor.cc:910] successful NUMA node read from SysFS had negative value (-1), but there must be at least one NUMA node, so returning NUMA node zero
    I tensorflow/core/common_runtime/gpu/gpu_device.cc:885] Found device 0 with properties:
    name: GeForce GTX 770M
    major: 3 minor: 0 memoryClockRate (GHz) 0.797
    pciBusID 0000:01:00.0
    Total memory: 2.95GiB
    Free memory: 2.52GiB
    I tensorflow/core/common_runtime/gpu/gpu_device.cc:906] DMA: 0
    I tensorflow/core/common_runtime/gpu/gpu_device.cc:916] 0: Y
    I tensorflow/core/common_runtime/gpu/gpu_device.cc:975] Creating TensorFlow device (/gpu:0) -> (device: 0, name: GeForce GTX 770M, pci bus id: 0000:01:00.0)

> Those are simply warnings. They are just informing you if you build TensorFlow from source it can be faster on your machine. Those instructions are not enabled by default on the builds available I think to be compatible with more CPUs as possible.

All the libraries now load successfully. As the explanation quoted above says, the `W` warnings appear because this TF was not compiled from source: the prebuilt binary does not use some instruction-set extensions (SSE3, SSE4.1, SSE4.2, AVX, AVX2, FMA) that this CPU actually supports. This only affects CPU computation speed, not GPU computation.
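Since only `W` lines remain, the import log can be checked mechanically. This sketch assumes the TF 1.0-era log format shown above (a severity letter `I`/`W`/`E`/`F` followed by `tensorflow/...`). It treats the GPU as ready when a `Creating TensorFlow device (/gpu:N)` line appears and nothing was logged at `E` or `F` level; `W` lines such as the SSE/AVX notices are deliberately ignored. The helper names are hypothetical.

```python
from __future__ import annotations

import re
import sys
from collections import Counter

# Assumed TF 1.0-era format: "<I|W|E|F> tensorflow/<file>:<line>] <message>"
LOG_LINE = re.compile(r"^([IWEF]) tensorflow/")
GPU_DEVICE = re.compile(
    r"Creating TensorFlow device \((/gpu:\d+)\) -> \(device: \d+, name: ([^,]+),"
)


def severity_counts(log: str) -> Counter:
    """Count log lines per severity letter (I, W, E, F)."""
    counts: Counter = Counter()
    for line in log.splitlines():
        match = LOG_LINE.match(line)
        if match:
            counts[match.group(1)] += 1
    return counts


def gpu_devices(log: str) -> list[tuple[str, str]]:
    """(device, GPU name) pairs from 'Creating TensorFlow device' lines."""
    return [(m.group(1), m.group(2)) for m in GPU_DEVICE.finditer(log)]


def gpu_ready(log: str) -> bool:
    """A GPU device was created and nothing was logged at E or F level."""
    counts = severity_counts(log)
    return bool(gpu_devices(log)) and counts["E"] == 0 and counts["F"] == 0


if __name__ == "__main__":
    # Usage: python check_tf_log.py import.log
    if len(sys.argv) > 1:
        with open(sys.argv[1]) as f:
            text = f.read()
        print(dict(severity_counts(text)), gpu_devices(text), gpu_ready(text))
```

TF writes these messages to stderr, so a log file can be captured with `python -c "import tensorflow" 2> import.log`; note that the `Creating TensorFlow device` line only appears once a session is created.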