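I. Case Study: Syncing MySQL Data to HDFS

Requirement: sync the base_province table in the gmall database to the /base_province directory on HDFS.

Analysis: this task uses MySQLReader and HDFSWriter. MySQLReader has two modes, TableMode and QuerySQLMode. TableMode declares the data to sync through the table, column, and where properties; QuerySQLMode declares it with a single SQL query.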
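To make the difference between the two modes concrete, the following minimal Python sketch builds the reader block for each one. The helper names table_mode_reader and query_sql_mode_reader are hypothetical; only the JSON keys come from the configurations in this article, and the JDBC URL is shortened for readability.

```python
import json

# Shortened JDBC URL, for illustration only.
JDBC_URL = "jdbc:mysql://hadoop102:3306/gmall"


def table_mode_reader(table, columns, where=""):
    """Reader block for TableMode: data is declared via table, column, where."""
    return {
        "name": "mysqlreader",
        "parameter": {
            "username": "root",
            "password": "000000",
            "column": columns,
            "where": where,
            "connection": [{"jdbcUrl": [JDBC_URL], "table": [table]}],
        },
    }


def query_sql_mode_reader(sql):
    """Reader block for QuerySQLMode: data is declared via one SQL query."""
    return {
        "name": "mysqlreader",
        "parameter": {
            "username": "root",
            "password": "000000",
            "connection": [{"jdbcUrl": [JDBC_URL], "querySql": [sql]}],
        },
    }


table_reader = table_mode_reader("base_province", ["id", "name"], "id>=3")
query_reader = query_sql_mode_reader(
    "select id,name from base_province where id>=3"
)
print(json.dumps(table_reader, indent=2))
print(json.dumps(query_reader, indent=2))
```

Note that the QuerySQLMode block carries no column or where keys: the SQL statement itself plays both roles.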

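Because the job depends on both MySQL and HDFS, make sure the MySQL service and the HDFS processes are running before you start.

1. MySQLReader in TableMode

1.1 Write the configuration file

(1) Create the configuration file base_province.json

vim /opt/module/datax/job/base_province.json

(2) The configuration file contents are as follows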

{

"job": {

"content": [

{

"reader": {

"name": "mysqlreader",

"parameter": {

"column": [

"id",

"name",

"region_id",

"area_code",

"iso_code",

"iso_3166_2",

"create_time",

"operate_time"

],

"where": "id>=3",

"connection": [

{

"jdbcUrl": [

"jdbc:mysql://hadoop102:3306/gmall?useUnicode=true&allowPublicKeyRetrieval=true&characterEncoding=utf-8"

],

"table": [

"base_province"

]

}

],

"password": "000000",

"splitPk": "",

"username": "root"

}

},

"writer": {

"name": "hdfswriter",

"parameter": {

"column": [

{

"name": "id",

"type": "bigint"

},

{

"name": "name",

"type": "string"

},

{

"name": "region_id",

"type": "string"

},

{

"name": "area_code",

"type": "string"

},

{

"name": "iso_code",

"type": "string"

},

{

"name": "iso_3166_2",

"type": "string"

},

{

"name": "create_time",

"type": "string"

},

{

"name": "operate_time",

"type": "string"

}

],

"compress": "gzip",

"defaultFS": "hdfs://hadoop102:8020",

"fieldDelimiter": "\t",

"fileName": "base_province",

"fileType": "text",

"path": "/base_province",

"writeMode": "append"

}

}

}

],

"setting": {

"speed": {

"channel": 1

}

}

}

}

Configuration notes

(1) Reader parameters

"reader": {

"name": "mysqlreader", # use the MySQL reader

"parameter": {

"column": [ "id","name", "region_id", "area_code", "iso_code","iso_3166_2",

"create_time","operate_time" ],

"where": "id>=3", # where filter: sync only rows with id>=3

"connection": [

{

"jdbcUrl": [ # database JDBC URL

"jdbc:mysql://hadoop102:3306/gmall?useUnicode=true&allowPublicKeyRetrieval=true&characterEncoding=utf-8"

],

"table": [

"base_province" # name of the table to sync

]

}

],

"password": "******", # database password

"splitPk": "", # split key: if set, DataX starts multiple Tasks to sync the data; if unset, only a single Task runs. This parameter takes effect only in TableMode; QuerySQLMode always runs a single Task.

"username": "root" # database username

}

}
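As a rough illustration of what splitting on splitPk means, the sketch below cuts a primary-key range into contiguous chunks and turns each chunk into a where condition that one Task could read. This is a conceptual model under stated assumptions, not DataX's actual splitting code; the function names and the example id range of 3 to 34 are made up for illustration.

```python
def split_ranges(min_pk, max_pk, parts):
    """Split the inclusive range [min_pk, max_pk] into up to `parts` chunks."""
    total = max_pk - min_pk + 1
    parts = max(1, min(parts, total))  # never more chunks than keys
    base, extra = divmod(total, parts)
    ranges = []
    start = min_pk
    for i in range(parts):
        size = base + (1 if i < extra else 0)  # spread the remainder
        ranges.append((start, start + size - 1))
        start += size
    return ranges


def where_clauses(pk, ranges):
    """One where condition per chunk, each readable by a separate task."""
    return [f"{pk} >= {lo} AND {pk} <= {hi}" for lo, hi in ranges]


ranges = split_ranges(3, 34, 4)
clauses = where_clauses("id", ranges)
for clause in clauses:
    print(clause)
```

With an empty splitPk, as in the configuration above, there is nothing to split on, which matches the single-Task behaviour described in the comment.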

(2) Writer parameters

"writer": {

"name": "hdfswriter",

"parameter": {

"column": [ # column definitions

{

"name": "id",

"type": "bigint"

},

{

"name": "name",

"type": "string"

},

{

"name": "region_id",

"type": "string"

},

{

"name": "area_code",

"type": "string"

},

{

"name": "iso_code",

"type": "string"

},

{

"name": "iso_3166_2",

"type": "string"

},

{

"name": "create_time",

"type": "string"

},

{

"name": "operate_time",

"type": "string"

}

],

"compress": "gzip", # HDFS compression type

"defaultFS": "hdfs://hadoop102:8020", # address of the HDFS NameNode

"fieldDelimiter": "\t", # field delimiter of the HDFS file

"fileName": "base_province", # file name prefix on HDFS

"fileType": "text", # HDFS file type; "text" or "orc"

"path": "/base_province", # target path on HDFS

"writeMode": "append" # write mode

}

}

(3) Setting parameters

"setting": {

"speed": { # transfer speed settings

"channel": 1 # number of concurrent channels

}

}

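HDFS Writer does not provide a nullFormat parameter, so users cannot customize how null values are stored in the HDFS file. By default, HDFS Writer stores null as an empty string (''), whereas Hive's default null representation is \N. As a result, null values are misread when files synced by DataX are later loaded into a Hive table.

There are two ways to solve this:

The first is to modify the DataX HDFS Writer source code and add logic for a custom null storage format; see the CSDN blog post "记Datax3.0解决MySQL抽数到HDFSNULL变为空字符的问题".

The second is to declare the empty string ('') as the null storage format when creating the table in Hive, for example: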

CREATE EXTERNAL TABLE base_province

(

`id` STRING COMMENT 'ID',

`name` STRING COMMENT 'province name',

`region_id` STRING COMMENT 'region ID',

`area_code` STRING COMMENT 'area code',

`iso_code` STRING COMMENT 'legacy ISO-3166-2 code, used for visualization',

`iso_3166_2` STRING COMMENT 'new ISO-3166-2 code, used for visualization'

) COMMENT 'province table'

ROW FORMAT DELIMITED FIELDS TERMINATED BY '\t'

NULL DEFINED AS ''

LOCATION '/base_province/';
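The mismatch can be modelled in a few lines of Python. The sketch below parses one tab-delimited row twice, once treating \N as the null marker (Hive's default) and once treating the empty string as the null marker (the NULL DEFINED AS '' setting). The parse_row helper and the sample row are hypothetical and only illustrate the idea; Hive's own parsing is not reproduced here.

```python
HIVE_DEFAULT_NULL = "\\N"  # the two characters backslash and N


def parse_row(line, null_marker, delimiter="\t"):
    """Split a delimited text row; fields equal to null_marker become None."""
    fields = line.rstrip("\n").split(delimiter)
    return [None if field == null_marker else field for field in fields]


# Hypothetical row as HDFS Writer would store it: the last two
# columns were NULL in MySQL and are written as empty strings.
row = "3\tShanxi\t1\t140000\t\t\n"

with_default = parse_row(row, HIVE_DEFAULT_NULL)
with_empty = parse_row(row, "")

print(with_default)  # empty strings survive as values
print(with_empty)    # empty strings are read back as nulls
```

With the default marker the two trailing fields come back as empty strings rather than nulls, which is the problem described above; declaring '' as the null marker restores them.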

1.2 Submit the job

1) Create the base_province directory on HDFS

When syncing data to HDFS with DataX, the target path must already exist.

hadoop fs -mkdir /base_province

2) Enter the DataX root directory

cd /opt/module/datax

3) Start the sync

python bin/datax.py job/base_province.json

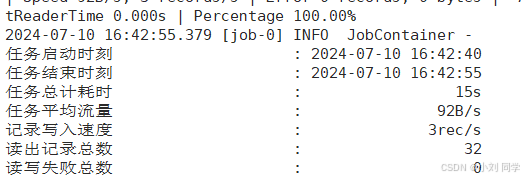

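4) Check the result after the sync completes

View the HDFS files (they are gzip-compressed, so the output is piped through zcat)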

hadoop fs -cat /base_province/* | zcat
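Step 1) requires the target directory to exist before the job runs. A small Python helper can make that step repeatable. The sketch below assumes only the standard hadoop fs -mkdir -p command, which creates the directory if it is missing and succeeds if it already exists; the function names are hypothetical.

```python
import subprocess


def mkdir_command(path):
    """Command that creates `path` on HDFS, including missing parents."""
    return ["hadoop", "fs", "-mkdir", "-p", path]


def ensure_hdfs_dir(path, runner=subprocess.run):
    """Run the mkdir command; `runner` is injectable so it can be tested."""
    return runner(mkdir_command(path), check=True)


print(" ".join(mkdir_command("/base_province")))
```

Calling ensure_hdfs_dir("/base_province") on a machine with the Hadoop client installed would run the command; with check=True a non-zero exit status raises subprocess.CalledProcessError.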


2. MySQLReader in QuerySQLMode

2.1 Write the configuration file

(1) Create and edit the configuration file base_province_sql.json

vim /opt/module/datax/job/base_province_sql.json

(2) The configuration file contents are as follows

{

"job": {

"content": [

{

"reader": {

"name": "mysqlreader",

"parameter": {

"connection": [

{

"jdbcUrl": [

"jdbc:mysql://hadoop102:3306/gmall?useUnicode=true&allowPublicKeyRetrieval=true&characterEncoding=utf-8"

],

"querySql": [

"select id,name,region_id,area_code,iso_code,iso_3166_2,create_time,operate_time from base_province where id>=3"

]

}

],

"password": "000000",

"username": "root"

}

},

"writer": {

"name": "hdfswriter",

"parameter": {

"column": [

{

"name": "id",

"type": "bigint"

},

{

"name": "name",

"type": "string"

},

{

"name": "region_id",

"type": "string"

},

{

"name": "area_code",

"type": "string"

},

{

"name": "iso_code",

"type": "string"

},

{

"name": "iso_3166_2",

"type": "string"

},

{

"name": "create_time",

"type": "string"

},

{

"name": "operate_time",

"type": "string"

}

],

"compress": "gzip",

"defaultFS": "hdfs://hadoop102:8020",

"fieldDelimiter": "\t",

"fileName": "base_province",

"fileType": "text",

"path": "/base_province",

"writeMode": "append"

}

}

}

],

"setting": {

"speed": {

"channel": 1

}

}

}

}

Configuration notes

(1) Reader parameters

"reader": {

"name": "mysqlreader",

"parameter": {

"connection": [

{

"jdbcUrl": [ # database JDBC URL

"jdbc:mysql://hadoop102:3306/gmall?useUnicode=true&allowPublicKeyRetrieval=true&characterEncoding=utf-8"

],

"querySql": [ # SQL query: select the rows of base_province with id>=3

"select id,name,region_id,area_code,iso_code,iso_3166_2,create_time,operate_time from base_province where id>=3"

]

}

],

"password": "000000", # database password

"username": "root" # database username

}

}

2.2 Submit the job

1) Clear the previous data

hadoop fs -rm -r -f /base_province/*

2) Enter the DataX root directory

cd /opt/module/datax

3) Start the sync

python bin/datax.py job/base_province_sql.json

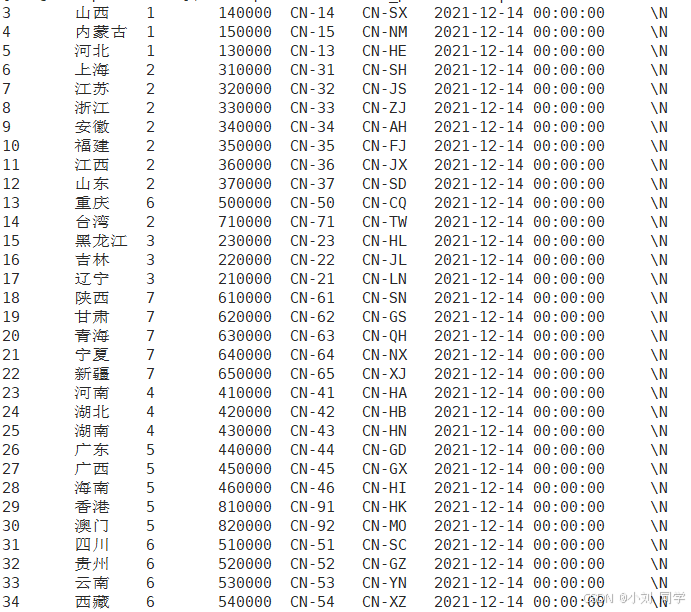

4) Check the result

View the HDFS files

hadoop fs -cat /base_province/* | zcat

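3. Passing Parameters to DataX

Offline sync jobs usually run on a daily schedule, so the target path on HDFS typically includes a date level to keep each day's data separate. The target path therefore changes from day to day, and the path parameter of HDFS Writer in the DataX configuration file needs to be dynamic. DataX parameter passing makes this possible.

Usage: reference a parameter in the JSON configuration file with ${param}, and pass its value when submitting the job with -p"-Dparam=value".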
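The effect of the placeholder can be pictured with a short Python sketch. DataX performs the substitution itself; the code below only mimics it on a string and builds the matching command line. Both function names are hypothetical, and leaving unknown placeholders untouched is a choice made for this sketch, not documented DataX behaviour.

```python
import re


def substitute_params(text, params):
    """Replace each ${name} in text with params[name]; leave unknown names."""
    def repl(match):
        return params.get(match.group(1), match.group(0))
    return re.sub(r"\$\{(\w+)\}", repl, text)


def datax_command(job_file, params):
    """Argument list equivalent to: python bin/datax.py -p"-Dk=v" job_file."""
    defines = " ".join(f"-D{key}={value}" for key, value in params.items())
    # The shell joins -p and the quoted string into a single argument.
    return ["python", "bin/datax.py", f"-p{defines}", job_file]


path = substitute_params("/base_province/${dt}", {"dt": "2022-06-08"})
command = datax_command("job/base_province.json", {"dt": "2022-06-08"})
print(path)
print(command)
```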

3.1 Modify the configuration file

1) Edit the configuration file base_province.json

vim /opt/module/datax/job/base_province.json

The configuration file contents are as follows

{

"job": {

"content": [

{

"reader": {

"name": "mysqlreader",

"parameter": {

"connection": [

{

"jdbcUrl": [

"jdbc:mysql://hadoop102:3306/gmall?useUnicode=true&allowPublicKeyRetrieval=true&characterEncoding=utf-8"

],

"querySql": [

"select id,name,region_id,area_code,iso_code,iso_3166_2,create_time,operate_time from base_province where id>=3"

]

}

],

"password": "000000",

"username": "root"

}

},

"writer": {

"name": "hdfswriter",

"parameter": {

"column": [

{

"name": "id",

"type": "bigint"

},

{

"name": "name",

"type": "string"

},

{

"name": "region_id",

"type": "string"

},

{

"name": "area_code",

"type": "string"

},

{

"name": "iso_code",

"type": "string"

},

{

"name": "iso_3166_2",

"type": "string"

},

{

"name": "create_time",

"type": "string"

},

{

"name": "operate_time",

"type": "string"

}

],

"compress": "gzip",

"defaultFS": "hdfs://hadoop102:8020",

"fieldDelimiter": "\t",

"fileName": "base_province",

"fileType": "text",

"path": "/base_province/${dt}",

"writeMode": "append"

}

}

}

],

"setting": {

"speed": {

"channel": 1

}

}

}

}

3.2 Submit the job

1) Create the target path

hadoop fs -mkdir /base_province/2022-06-08

2) Enter the DataX root directory

cd /opt/module/datax

3) Start the sync

python bin/datax.py -p"-Ddt=2022-06-08" job/base_province.json

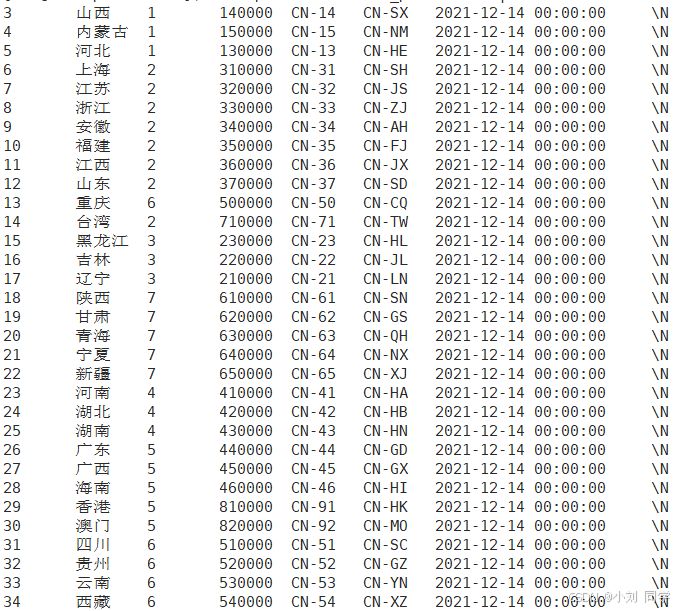
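Since the job is meant to run daily, the commands in this section can be derived from a date instead of being typed by hand. The sketch below builds them for a given run date. daily_commands is a hypothetical helper, and it uses -mkdir -p rather than the plain -mkdir shown above so that a rerun on the same day does not fail on an existing directory.

```python
from datetime import date


def daily_commands(run_date, base_path="/base_province",
                   job_file="job/base_province.json"):
    """Return the HDFS mkdir and DataX commands for one day's sync."""
    dt = run_date.isoformat()  # e.g. 2022-06-08
    return [
        ["hadoop", "fs", "-mkdir", "-p", f"{base_path}/{dt}"],
        ["python", "bin/datax.py", f"-p-Ddt={dt}", job_file],
    ]


cmds = daily_commands(date(2022, 6, 8))
for cmd in cmds:
    print(" ".join(cmd))
```

A scheduler could pass each list to subprocess.run(cmd, check=True, cwd="/opt/module/datax") so that a failed mkdir stops the run before DataX starts.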

3.3 Check the result

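II. Case Study: Syncing HDFS Data to MySQL

Requirement: sync the data under the /base_province directory on HDFS to the test_province table in the MySQL gmall database.

Analysis: this task uses HDFSReader and MySQLWriter.

1. Write the configuration file

1) Create the configuration file test_province.json

vim /opt/module/datax/job/test_province.json

The configuration file contents are as follows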

{

"job": {

"content": [

{

"reader": {

"name": "hdfsreader",

"parameter": {

"defaultFS": "hdfs://hadoop102:8020",

"path": "/base_province/2022-06-08",

"column": [

"*"

],

"fileType": "text",

"compress": "gzip",

"encoding": "UTF-8",

"nullFormat": "\\N",

"fieldDelimiter": "\t"

}

},

"writer": {

"name": "mysqlwriter",

"parameter": {

"username": "root",

"password": "000000",

"connection": [

{

"table": [

"test_province"

],

"jdbcUrl": "jdbc:mysql://hadoop102:3306/gmall?useUnicode=true&allowPublicKeyRetrieval=true&characterEncoding=utf-8"

}

],

"column": [

"id",

"name",

"region_id",

"area_code",

"iso_code",

"iso_3166_2",

"create_time",

"operate_time"

],

"writeMode": "replace"

}

}

}

],

"setting": {

"speed": {

"channel": 1

}

}

}

}
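Hand-edited job files easily pick up JSON syntax errors such as a trailing comma before a closing brace, and a job file with such an error cannot be parsed as JSON. A quick check with Python's standard json module catches this before submission. The sketch below is a minimal validator; validate_job and its job.content check are assumptions made for illustration and are not part of DataX.

```python
import json


def validate_job(text):
    """Return (True, "") for parseable JSON with job.content, else (False, reason)."""
    try:
        config = json.loads(text)
    except json.JSONDecodeError as err:
        return False, f"line {err.lineno}: {err.msg}"
    job = config.get("job") if isinstance(config, dict) else None
    if not isinstance(job, dict) or "content" not in job:
        return False, "missing job.content"
    return True, ""


good = '{"job": {"content": [], "setting": {"speed": {"channel": 1}}}}'
bad = '{"job": {"content": [{"reader": {"fieldDelimiter": "\\t",}}]}}'

print(validate_job(good))
print(validate_job(bad))
```

In practice the text would come from reading the job file, for example open("job/test_province.json", encoding="utf-8").read().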

Configuration notes

(1) Reader parameters

"reader": {

"name": "hdfsreader",

"parameter": {

"defaultFS": "hdfs://hadoop102:8020", # address of the HDFS NameNode

"path": "/base_province/2022-06-08", # path of the files to read

"column": [ # columns to sync; * means all columns

"*"

],

"fileType": "text", # file type

"compress": "gzip", # compression type

"encoding": "UTF-8", # file encoding

"nullFormat": "\\N", # null storage format

"fieldDelimiter": "\t" # field delimiter

}

}

(2) Writer parameters

"writer": {

"name": "mysqlwriter",

"parameter": {

"username": "root", # database username

"password": "000000", # database password

"connection": [

{

"table": [

"test_province" # target table

],

"jdbcUrl": "jdbc:mysql://hadoop102:3306/gmall?useUnicode=true&allowPublicKeyRetrieval=true&characterEncoding=utf-8"

}

],

"column": [ # target columns

"id",

"name",

"region_id",

"area_code",

"iso_code",

"iso_3166_2",

"create_time",

"operate_time"

],

"writeMode": "replace" # write mode

}

}

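column: the columns to sync. Columns can be selected by index, for example [{"index":0,"type":"data type"},{"index":1,"type":"data type"}] selects the first two columns; or directly by name, for example ["id","name"]; * selects all columns.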
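Index-based selection can be pictured as picking positions out of each split row. The sketch below applies a column specification to the fields of one row. select_columns is a hypothetical helper, the type names "long" and "string" are assumed for illustration, and only "long" is converted here; the reader's real type handling is not reproduced.

```python
def select_columns(fields, column_spec):
    """Pick fields by index according to column_spec; ["*"] keeps them all."""
    if column_spec == ["*"]:
        return list(fields)
    selected = []
    for col in column_spec:
        value = fields[col["index"]]
        if col.get("type") == "long":  # simplified: only long is converted
            value = int(value)
        selected.append(value)
    return selected


# Hypothetical row, already split on the tab delimiter.
fields = ["3", "Shanxi", "1"]
first_two = select_columns(
    fields,
    [{"index": 0, "type": "long"}, {"index": 1, "type": "string"}],
)
everything = select_columns(fields, ["*"])
print(first_two)
print(everything)
```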

writeMode: controls whether data is written to the target table with insert into (insert), replace into (replace), or ON DUPLICATE KEY UPDATE (update) statements.
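The three modes correspond to three shapes of MySQL statement. The sketch below generates a statement template for each mode so the difference is visible side by side. build_statement is a hypothetical helper written for this illustration; the exact SQL text that MySQLWriter generates internally may differ.

```python
def build_statement(table, columns, write_mode):
    """Return a parameterized MySQL statement for the given write mode."""
    cols = ", ".join(f"`{c}`" for c in columns)
    marks = ", ".join("?" for _ in columns)
    if write_mode == "insert":
        return f"INSERT INTO `{table}` ({cols}) VALUES ({marks})"
    if write_mode == "replace":
        return f"REPLACE INTO `{table}` ({cols}) VALUES ({marks})"
    if write_mode == "update":
        updates = ", ".join(f"`{c}` = VALUES(`{c}`)" for c in columns)
        return (f"INSERT INTO `{table}` ({cols}) VALUES ({marks}) "
                f"ON DUPLICATE KEY UPDATE {updates}")
    raise ValueError(f"unsupported writeMode: {write_mode}")


for mode in ("insert", "replace", "update"):
    print(build_statement("test_province", ["id", "name"], mode))
```

With a primary key on id, as in the table created below, insert fails on a duplicate key, replace deletes the old row and inserts the new one, and update modifies the existing row in place.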

2. Submit the job

1) Create the test_province table in the MySQL gmall database

DROP TABLE IF EXISTS `test_province`;

CREATE TABLE `test_province` (

`id` bigint(20) NOT NULL,

`name` varchar(20) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`region_id` varchar(20) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`area_code` varchar(20) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`iso_code` varchar(20) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`iso_3166_2` varchar(20) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`create_time` varchar(20) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

`operate_time` varchar(20) CHARACTER SET utf8 COLLATE utf8_general_ci NULL DEFAULT NULL,

PRIMARY KEY (`id`)

) ENGINE = InnoDB CHARACTER SET = utf8 COLLATE = utf8_general_ci ROW_FORMAT = Dynamic;

2) Enter the DataX root directory

cd /opt/module/datax

3) Start the sync

python bin/datax.py job/test_province.json

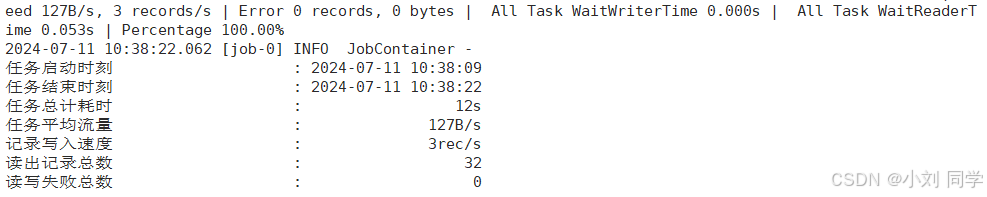

4) Check the result

View the data in the MySQL target table