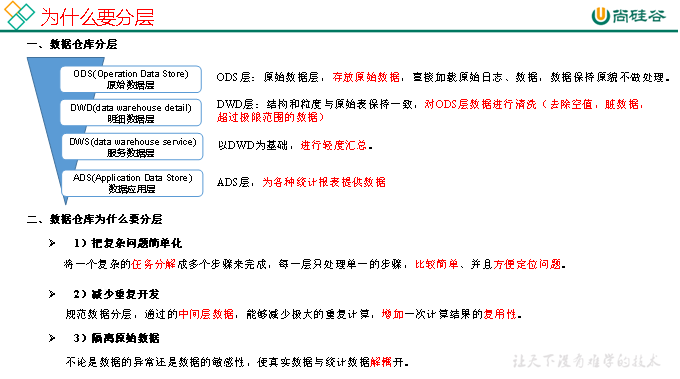

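Chapter 1 Data Warehouse Layering Concepts

1.1 Why Layer the Warehouse

1.2 Data Warehouse Naming Conventions

- The ODS layer database is named ods

- The DWD layer database is named dwd

- The DWS layer database is named dws

- The ADS layer database is named ads

- Temporary-table databases are named xxx_tmp

- Backup-data databases are named xxx_bak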

For details, see: 尚硅谷大数据技术之Hive

2.1 Hive & MySQL Installation

2.1.1 Hive & MySQL Installation

2.1.2 Modify hive-site.xml

1) Disable metastore schema verification

[atguigu@hadoop102 conf]$ pwd

/opt/module/hive/conf

[atguigu@hadoop102 conf]$ vim hive-site.xml

Add the following configuration:

<property>

<name>hive.metastore.schema.verification</name>

<value>false</value>

</property>

Chapter 2 Environment Preparation

JDK 1.8, hadoop-2.7.2, Hive, Spark 2.1.1

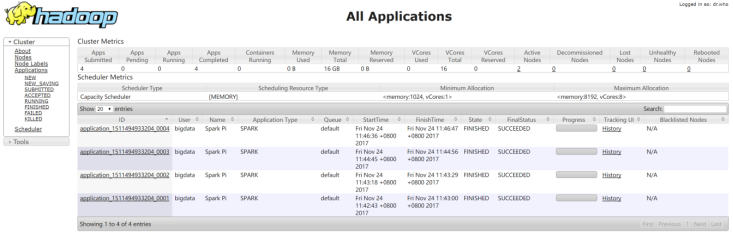

Chapter 3 Spark Cluster Installation

Machine Preparation

Prepare three Linux servers with JDK 1.8 installed.

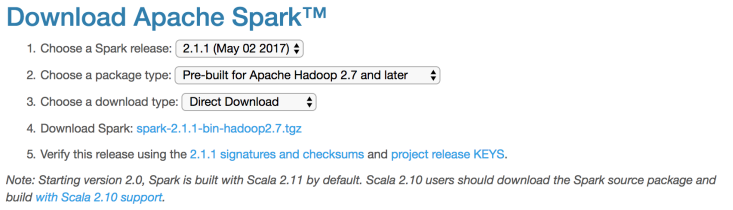

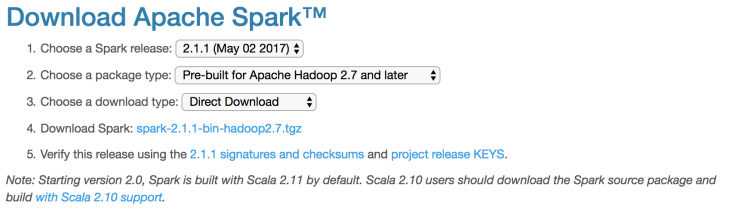

Download the Spark Installation Package

Upload and Extract the Installation Package

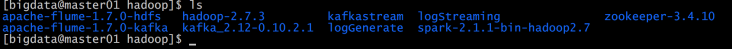

Upload the spark-2.1.1-bin-hadoop2.7.tgz package to the Linux server.

Extract the package to the target directory:

tar -xf spark-2.1.1-bin-hadoop2.7.tgz -C /home/bigdata/hadoop

Configure Spark [YARN]

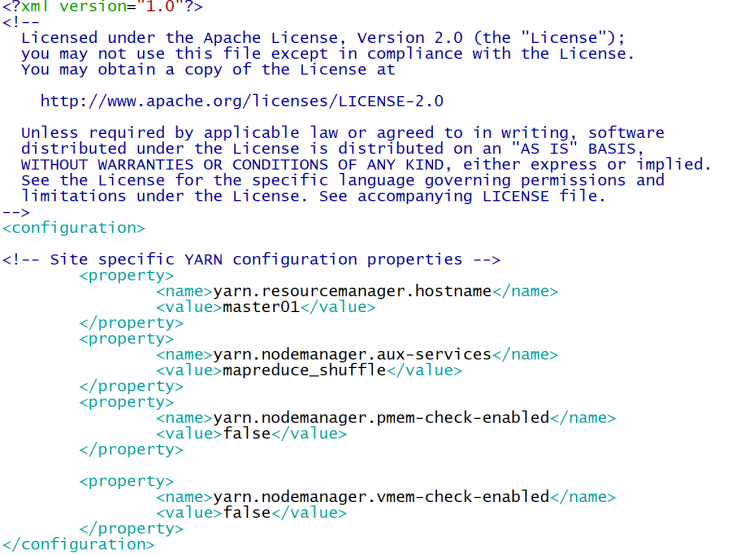

Modify yarn-site.xml in the Hadoop configuration directory:

<configuration>
  <!-- Site specific YARN configuration properties -->
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>master01</value>
  </property>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <!-- Whether to start a thread that checks the physical memory used by each task and kills the task if it exceeds its allocation; the default is true -->
  <property>
    <name>yarn.nodemanager.pmem-check-enabled</name>
    <value>false</value>
  </property>
  <!-- Whether to start a thread that checks the virtual memory used by each task and kills the task if it exceeds its allocation; the default is true -->
  <property>
    <name>yarn.nodemanager.vmem-check-enabled</name>
    <value>false</value>
  </property>
</configuration>

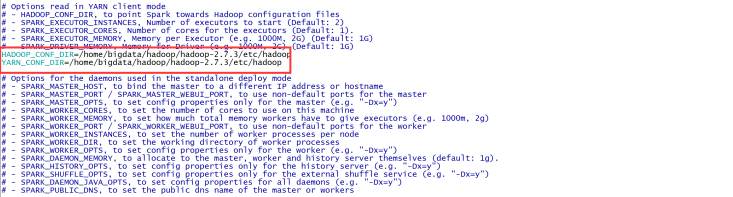

Edit spark-env.sh and add the following so that Spark can find the Hadoop configuration files:

HADOOP_CONF_DIR=/home/bigdata/hadoop/hadoop-2.7.3/etc/hadoop
YARN_CONF_DIR=/home/bigdata/hadoop/hadoop-2.7.3/etc/hadoop

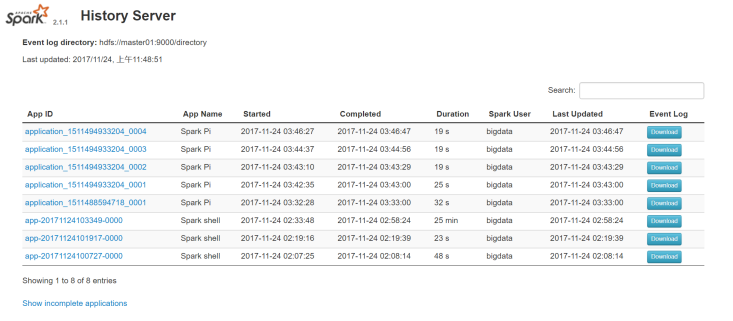

Start the Spark history server so that the logs can be viewed.

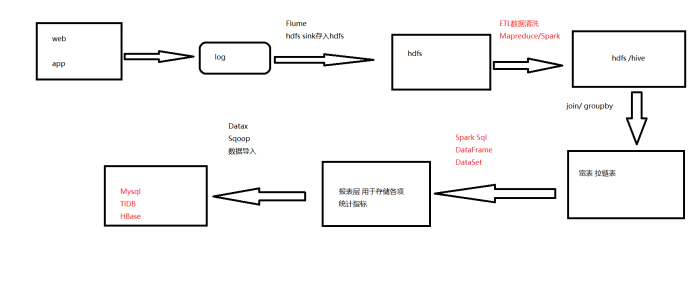

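Chapter 4 Flow Diagram

Chapter 5 User Registration Module Requirements

5.1 Raw Data Format and Field Meanings

- baseadlog: raw JSON data for the base advertisement table

{
"adid": "0", // advertisement id in the base advertisement table
"adname": "注册弹窗广告0", // advertisement name
"dn": "webA" // site partition
}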

- basewebsitelog: raw JSON data for the base website table

{
"createtime": "2000-01-01",
"creator": "admin",
"delete": "0",
"dn": "webC", // site partition
"siteid": "2", // site id
"sitename": "114", // site name
"siteurl": "www.114.com/webC" // site URL
}

- memberRegtype: user registration redirect URL table

{
"appkey": "-",
"appregurl": "http:www.webA.com/product/register/index.html", // redirect URL used at registration
"bdp_uuid": "-",
"createtime": "2015-05-11",
"dt":"20190722", // date partition
"dn": "webA", // site partition
"domain": "-",
"isranreg": "-",
"regsource": "4", // platform: 1.PC 2.MOBILE 3.APP 4.WECHAT
"uid": "0", // user id
"websiteid": "0" // corresponds to siteid in basewebsitelog
}

- pcentermempaymoneylog: user payment amount table

{
"dn": "webA", // site partition
"paymoney": "162.54", // payment amount
"siteid": "1", // site id, corresponds to siteid in basewebsitelog
"dt":"20190722", // date partition
"uid": "4376695", // user id
"vip_id": "0" // corresponds to vip_id in pcentermemviplevellog
}

- pcentermemviplevellog: base table of user VIP levels

{
"discountval": "-",
"dn": "webA", // site partition
"end_time": "2019-01-01", // VIP end time
"last_modify_time": "2019-01-01",
"max_free": "-",
"min_free": "-",
"next_level": "-",
"operator": "update",
"start_time": "2015-02-07", // VIP start time
"vip_id": "2", // VIP id
"vip_level": "银卡" // VIP level name
}

- memberlog: user basic information table

{
"ad_id": "0", // advertisement id
"birthday": "1981-08-14", // date of birth
"dt":"20190722", // date partition
"dn": "webA", // site partition
"email": "[email protected]",
"fullname": "王69239", // user name
"iconurl": "-",
"lastlogin": "-",
"mailaddr": "-",
"memberlevel": "6", // user level
"password": "123456", // password
"paymoney": "-",
"phone": "13711235451", // phone number
"qq": "10000",
"register": "2016-08-15", // registration time
"regupdatetime": "-",
"uid": "69239", // user id
"unitname": "-",
"userip": "123.235.75.48", // IP address
"zipcode": "-"
}

The remaining fields are not used in any statistics and can simply be stored with the default value "-".

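5.2 Data Layering

Create the ods directory on the Hadoop cluster:

hadoop fs -mkdir -p /user/atguigu/ods

Create three databases in Hive, dwd, dws and ads, which store the ETL-cleaned data, the wide and zipper tables, and the report-layer metrics respectively.

create database dwd;

create database dws;

create database ads;

The layers are:

ods: stores the raw data

dwd: keeps the same structure as the raw tables and holds the cleaned ods data

dws: light aggregation built on top of dwd

ads: report layer that supplies data for the various statistical reports

DDL for each layer: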

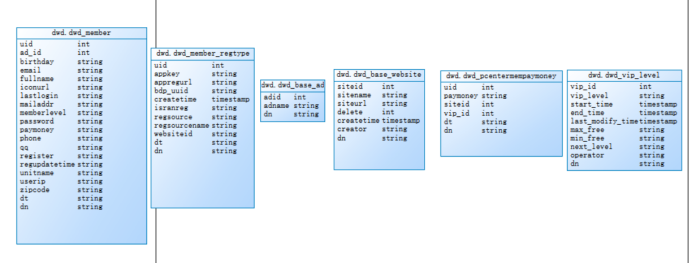

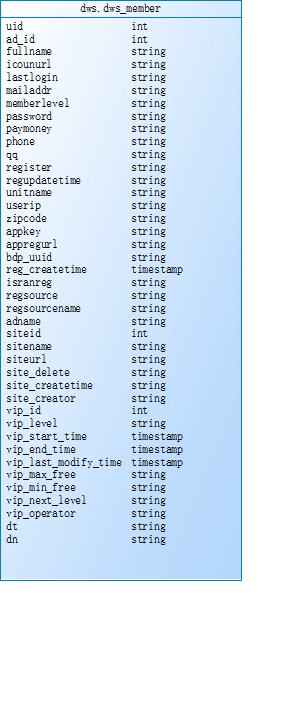

Table model:

dwd layer: 6 base tables

dws layer: wide table and zipper table

Wide table

Zipper table

Report-layer statistics tables

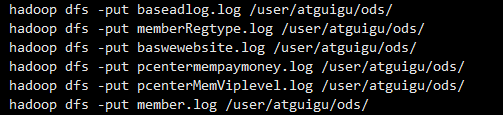

5.3 Simulated Data Collection and Upload

To simulate data collection, upload the log files directly to the Hadoop cluster.

5.4 ETL Data Cleaning

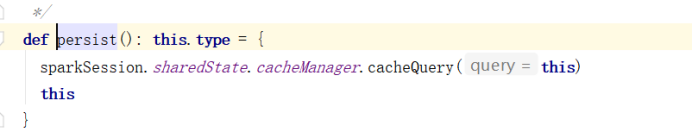

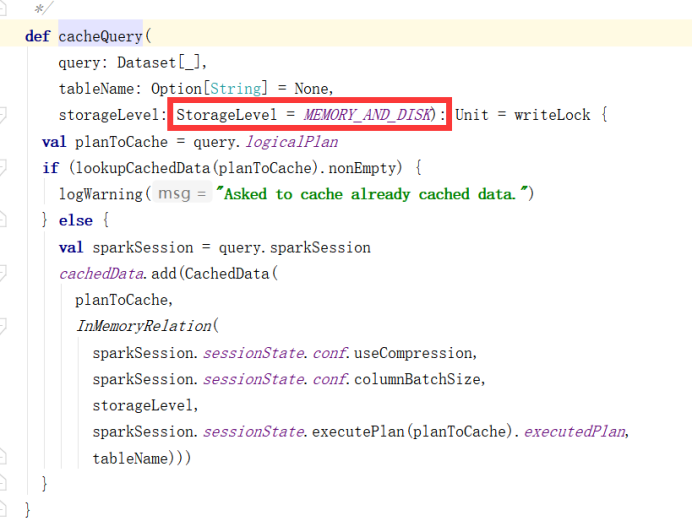

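Requirement 1: Data cleaning must be done with Spark. Mask the user name, phone number and password, and use Spark to load the data into the dwd-layer Hive tables.

Cleaning rules: user name becomes 王XX; phone number becomes 137*****789; password is replaced outright with ******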
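The masking rules can be sketched as three small pure functions. This is only an illustration: the object and method names are hypothetical, and the phone rule follows the sample in the rule text (first three and last three characters kept), which is an assumption about how many trailing digits should survive.

```scala
// Hypothetical helper, not part of the original project code.
object MaskingSketch {

  /** Keep the first character (the family name) and replace the rest with "XX". */
  def maskName(fullname: String): String =
    if (fullname == null || fullname.isEmpty) fullname
    else fullname.substring(0, 1) + "XX"

  /** Keep the first 3 and last 3 characters with 5 asterisks in between (assumed rule). */
  def maskPhone(phone: String): String =
    if (phone == null || phone.length < 6) "*****" // too short to keep any digits safely
    else phone.take(3) + "*****" + phone.takeRight(3)

  /** The password is never kept; it is always replaced by a fixed mask. */
  def maskPassword(password: String): String = "******"
}
```

Keeping the functions free of Spark types means they can be unit tested on their own and then called from inside a `map` or `mapPartitions` step.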

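5.5 Building the dws Wide Table from the dwd Tables

Requirement 2: Join the 6 dwd tables into a single wide table, first with Spark SQL. Students who have time should also implement it with the DataFrame API and optimize the joins.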

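5.6 Zipper Table

Requirement 3: Build a zipper table for the two fields of the dws wide table that change over time, the payment amount (paymoney) and the VIP level (vip_level). It must be updated once a day.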
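The daily zipper update can be sketched on plain Scala collections. This is a minimal sketch under stated assumptions, not the project's implementation: `ZipRow`, `merge` and the "9999-12-31" open-ended marker are hypothetical, and a record is identified by (uid, dn).

```scala
// Hypothetical in-memory model of a zipper (history) table.
object ZipperSketch {
  val OpenEnd = "9999-12-31" // assumed marker for "this version is still current"

  final case class ZipRow(uid: Int, dn: String, paymoney: String, vipLevel: String,
                          startTime: String, endTime: String)

  /** Merge one day's snapshot into the history: close changed versions, open new ones. */
  def merge(history: Seq[ZipRow], today: Seq[ZipRow], dt: String): Seq[ZipRow] = {
    // Current (open) version per user and site
    val openByKey = history.filter(_.endTime == OpenEnd).map(h => (h.uid, h.dn) -> h).toMap

    // A snapshot row matters if the user is new or one of the tracked fields changed
    def changed(t: ZipRow): Boolean =
      openByKey.get((t.uid, t.dn)).forall(h => h.paymoney != t.paymoney || h.vipLevel != t.vipLevel)

    val changedRows = today.filter(changed)
    val changedKeys = changedRows.map(t => (t.uid, t.dn)).toSet

    // Close the open version of every changed user as of dt
    val closed = history.map { h =>
      if (h.endTime == OpenEnd && changedKeys.contains((h.uid, h.dn))) h.copy(endTime = dt) else h
    }
    // Append the new versions, valid from dt onwards
    closed ++ changedRows.map(_.copy(startTime = dt, endTime = OpenEnd))
  }
}
```

Running `merge` again with an unchanged snapshot returns the history as it was, so the daily job adds rows only when paymoney or vip_level actually changed.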

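5.7 Report-Layer Metrics

Requirement 4: Use the Spark DataFrame API to count the users who registered through each registration redirect URL (appregurl); write the Spark SQL version if time permits.

Requirement 5: Use the Spark DataFrame API to count the users of each site (sitename); write the Spark SQL version if time permits.

Requirement 6: Use the Spark DataFrame API to count the users of each platform (regsourcename); write the Spark SQL version if time permits.

Requirement 7: Use the Spark DataFrame API to count the users who arrived through each advertisement (adname); write the Spark SQL version if time permits.

Requirement 8: Use the Spark DataFrame API to count the users at each user level (memberlevel); write the Spark SQL version if time permits.

Requirement 9: Use the Spark DataFrame API to find the top 3 users within each site partition and user level (dn, memberlevel); write the Spark SQL version if time permits.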

Chapter 6 User Question-Answering Module Requirements

6.1 Raw Data Format and Field Meanings

- QzWebsite.log: question site log data

{

"createtime": "2019-07-22 11:47:18", //创建时间

"creator": "admin", //创建者

"dn": "webA", //网站分区

"domain": "-",

"dt": "20190722", //日期分区

"multicastgateway": "-",

"multicastport": "-",

"multicastserver": "-",

"sequence": "-",

"siteid": 0, //网站id

"sitename": "sitename0", //网站名称

"status": "-",

"templateserver": "-"

}

- QzSiteCourse.log: site course log data

{

"boardid": 64, //课程模板id

"coursechapter": "-",

"courseid": 66, //课程id

"createtime": "2019-07-22 11:43:32", //创建时间

"creator": "admin", //创建者

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"helpparperstatus": "-",

"sequence": "-",

"servertype": "-",

"showstatus": "-",

"sitecourseid": 2, //网站课程id

"sitecoursename": "sitecoursename2", //网站课程名称

"siteid": 77, //网站id

"status": "-"

}

- QzQuestionType.log: question type data

{

"createtime": "2019-07-22 10:42:47", //创建时间

"creator": "admin", //创建者

"description": "-",

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"papertypename": "-",

"questypeid": 0, //做题类型id

"quesviewtype": 0,

"remark": "-",

"sequence": "-",

"splitscoretype": "-",

"status": "-",

"viewtypename": "viewtypename0"

}

- QzQuestion.log: question log data

{

"analysis": "-",

"answer": "-",

"attanswer": "-",

"content": "-",

"createtime": "2019-07-22 11:33:46", //创建时间

"creator": "admin", //创建者

"difficulty": "-",

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"lecture": "-",

"limitminute": "-",

"modifystatus": "-",

"optnum": 8,

"parentid": 57,

"quesskill": "-",

"questag": "-",

"questionid": 0, //题id

"questypeid": 57, //题目类型id

"quesviewtype": 44,

"score": 24.124501582742543, //题的分数

"splitscore": 0.0,

"status": "-",

"vanalysisaddr": "-",

"vdeoaddr": "-"

}

- QzPointQuestion.log: question to knowledge point mapping data

{

"createtime": "2019-07-22 09:16:46", //创建时间

"creator": "admin", //创建者

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"pointid": 0, //知识点id

"questionid": 0, //题id

"questype": 0

}

- QzPoint.log: knowledge point log data

{

"chapter": "-", //所属章节

"chapterid": 0, //章节id

"courseid": 0, //课程id

"createtime": "2019-07-22 09:08:52", //创建时间

"creator": "admin", //创建者

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"excisenum": 73,

"modifystatus": "-",

"pointdescribe": "-",

"pointid": 0, //知识点id

"pointlevel": "9", //知识点级别

"pointlist": "-",

"pointlistid": 82, //知识点列表id

"pointname": "pointname0", //知识点名称

"pointnamelist": "-",

"pointyear": "2019", //知识点所属年份

"remid": "-",

"score": 83.86880766562163, //知识点分数

"sequece": "-",

"status": "-",

"thought": "-",

"typelist": "-"

}

- QzPaperView.log: paper view data

{

"contesttime": "2019-07-22 19:02:19",

"contesttimelimit": "-",

"createtime": "2019-07-22 19:02:19", //创建时间

"creator": "admin", //创建者

"dayiid": 94,

"description": "-",

"dn": "webA", //网站分区

"downurl": "-",

"dt": "20190722", //日期分区

"explainurl": "-",

"iscontest": "-",

"modifystatus": "-",

"openstatus": "-",

"paperdifficult": "-",

"paperid": 83, //试卷id

"paperparam": "-",

"papertype": "-",

"paperuse": "-",

"paperuseshow": "-",

"paperviewcatid": 1,

"paperviewid": 0, //试卷视图id

"paperviewname": "paperviewname0", //试卷视图名称

"testreport": "-"

}

- QzPaper.log: paper log data

{

"chapter": "-", //章节

"chapterid": 33, //章节id

"chapterlistid": 69, //所属章节列表id

"courseid": 72, //课程id

"createtime": "2019-07-22 19:14:27", //创建时间

"creator": "admin", //创建者

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"papercatid": 92,

"paperid": 0, //试卷id

"papername": "papername0", //试卷名称

"paperyear": "2019", //试卷所属年份

"status": "-",

"suitnum": "-",

"totalscore": 93.16710017696484 //试卷总分

}

- QzMemberPaperQuestion.log: learner answer detail data

{

"chapterid": 33, //章节id

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"istrue": "-",

"lasttime": "2019-07-22 11:02:30",

"majorid": 77, //主修id

"opertype": "-",

"paperid": 91,//试卷id

"paperviewid": 37, //试卷视图id

"question_answer": 1, //做题结果(0错误 1正确)

"questionid": 94, //题id

"score": 76.6941793631127, //学员成绩分数

"sitecourseid": 1, //网站课程id

"spendtime": 4823, //所用时间单位(秒)

"useranswer": "-",

"userid": 0 //用户id

}

- QzMajor.log: major data

{

"businessid": 41, //主修行业id

"columm_sitetype": "-",

"createtime": "2019-07-22 11:10:20", //创建时间

"creator": "admin", //创建者

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"majorid": 1, //主修id

"majorname": "majorname1", //主修名称

"sequence": "-",

"shortname": "-",

"siteid": 24, //网站id

"status": "-"

}

- QzCourseEduSubject.log: course tutoring subject data

{

"courseeduid": 0, //课程辅导id

"courseid": 0, //课程id

"createtime": "2019-07-22 11:14:43", //创建时间

"creator": "admin", //创建者

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"edusubjectid": 44, //辅导科目id

"majorid": 38 //主修id

}

- QzCourse.log: question bank course data

{

"chapterlistid": 45, //章节列表id

"courseid": 0, //课程id

"coursename": "coursename0", //课程名称

"createtime": "2019-07-22 11:08:15", //创建时间

"creator": "admin", //创建者

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"isadvc": "-",

"majorid": 39, //主修id

"pointlistid": 92, //知识点列表id

"sequence": "8128f2c6-2430-42c7-9cb4-787e52da2d98",

"status": "-"

}

- QzChapterList.log: chapter list data

{

"chapterallnum": 0, //章节总个数

"chapterlistid": 0, //章节列表id

"chapterlistname": "chapterlistname0", //章节列表名称

"courseid": 71, //课程id

"createtime": "2019-07-22 16:22:19", //创建时间

"creator": "admin", //创建者

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"status": "-"

}

- QzChapter.log: chapter data

{

"chapterid": 0, //章节id

"chapterlistid": 0, //所属章节列表id

"chaptername": "chaptername0", //章节名称

"chapternum": 10, //章节个数

"courseid": 61, //课程id

"createtime": "2019-07-22 16:37:24", //创建时间

"creator": "admin", //创建者

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"outchapterid": 0,

"sequence": "-",

"showstatus": "-",

"status": "-"

}

- QzCenterPaper.log: paper to topic mapping data

{

"centerid": 55, //主题id

"createtime": "2019-07-22 10:48:30", //创建时间

"creator": "admin", //创建者

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"openstatus": "-",

"paperviewid": 2, //视图id

"sequence": "-"

}

- QzCenter.log: topic data

{

"centerid": 0, //主题id

"centername": "centername0", //主题名称

"centerparam": "-",

"centertype": "3", //主题类型

"centerviewtype": "-",

"centeryear": "2019", //主题年份

"createtime": "2019-07-22 19:13:09", //创建时间

"creator": "-",

"description": "-",

"dn": "webA",

"dt": "20190722", //日期分区

"openstatus": "1",

"provideuser": "-",

"sequence": "-",

"stage": "-"

}

centerid: topic id; centername: topic name; centertype: topic type; centeryear: topic year; createtime: creation time; dn: site partition; dt: date partition

- QzBusiness.log: industry data

{

"businessid": 0, //行业id

"businessname": "bsname0", //行业名称

"createtime": "2019-07-22 10:40:54", //创建时间

"creator": "admin", //创建者

"dn": "webA", //网站分区

"dt": "20190722", //日期分区

"sequence": "-",

"siteid": 1, //所属网站id

"status": "-"

}

6.2 Simulated Data Collection and Upload

6.3 Parsing the Data

Requirement 1: Use Spark to parse the ods-layer data and store it in the corresponding Hive tables. Every score field must be rounded half up to 1 decimal place.
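Half-up rounding of a score can be sketched with Scala's `BigDecimal`. The helper name `roundScore` is hypothetical, and the number of decimal places is left as a parameter so the sketch does not assume a particular precision.

```scala
import scala.math.BigDecimal.RoundingMode

// Hypothetical helper for rounding score fields before they are written to Hive.
object ScoreRounding {
  /** Round a raw score half up to the given number of decimal places. */
  def roundScore(score: Double, digits: Int): BigDecimal =
    BigDecimal(score).setScale(digits, RoundingMode.HALF_UP)
}
```

For the sample score 24.124501582742543 this gives 24.1 with one decimal place and 24.12 with two.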

6.4 Dimension Degeneration

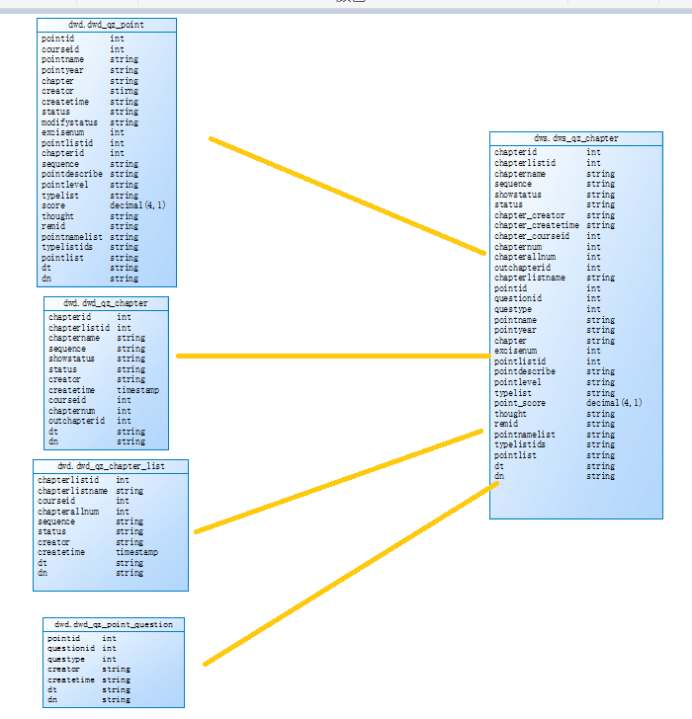

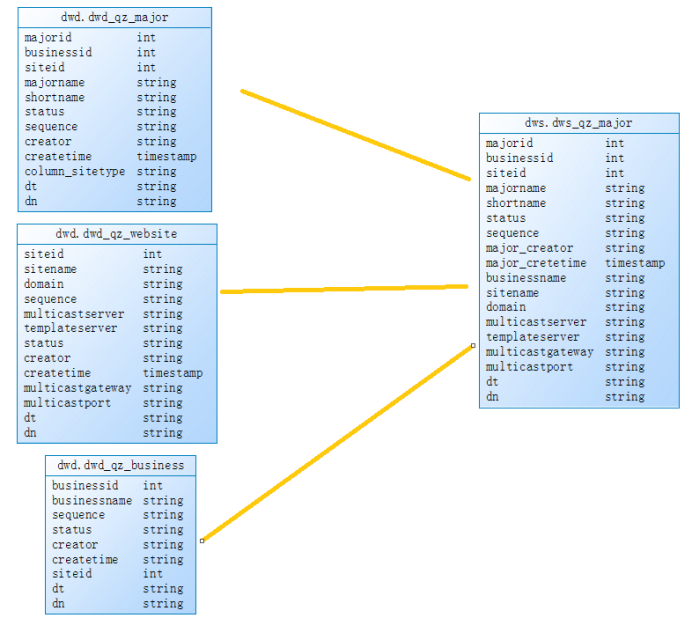

Requirement 2: Based on the dwd base tables, degenerate the dimensions by joining tables into dws.dws_qz_chapter (chapter dimension table), dws.dws_qz_course (course dimension table), dws.dws_qz_major (major dimension table), dws.dws_qz_paper (paper dimension table) and dws.dws_qz_question (question dimension table), using both Spark SQL and the DataFrame API.

dws.dws_qz_chapter: 4-table join. dwd.dwd_qz_chapter inner join dwd.qz_chapter_list on chapterlistid and dn, inner join dwd.dwd_qz_point on chapterid and dn, inner join dwd.dwd_qz_point_question on pointid and dn

dws.dws_qz_course: 3-table join. dwd.dwd_qz_site_course inner join dwd.qz_course on courseid and dn, inner join dwd.qz_course_edusubject on courseid and dn

dws.dws_qz_major: 3-table join. dwd.dwd_qz_major inner join dwd.dwd_qz_website on siteid and dn, inner join dwd.dwd_qz_business on siteid and dn

dws.dws_qz_paper: 4-table join. qz_paperview left join qz_center_paper on paperviewid and dn, left join qz_center on centerid and dn, inner join qz_paper on paperid and dn

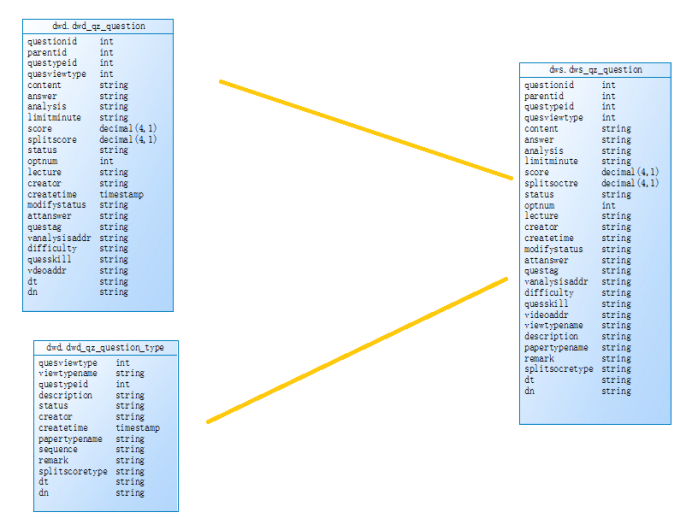

dws.dws_qz_question: 2-table join. qz_question inner join qz_questiontype on questypeid and dn

6.5 Wide Table Composition

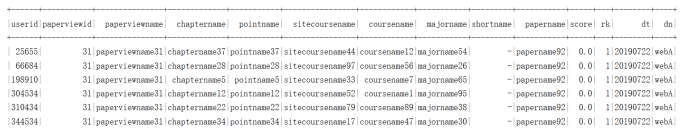

Requirement 3: Build the wide table dws.user_paper_detail from dws.dws_qz_chapter, dws.dws_qz_course, dws.dws_qz_major, dws.dws_qz_paper, dws.dws_qz_question and dwd.dwd_qz_member_paper_question, using both Spark SQL and the DataFrame API.

dws.user_paper_detail: dwd_qz_member_paper_question inner join dws_qz_chapter on chapterid and dn, inner join dws_qz_course on sitecourseid and dn, inner join dws_qz_major on majorid and dn, inner join dws_qz_paper on paperviewid and dn, inner join dws_qz_question on questionid and dn

6.6 Report-Layer Metrics

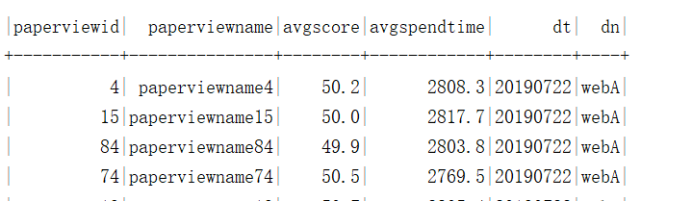

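Requirement 4: Based on the wide table, compute the average time spent and the average score for each paper; first with Spark SQL, then with the Spark DataFrame API.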

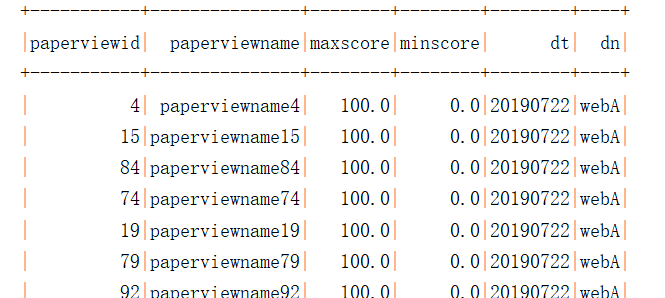

Requirement 5: Compute the highest and lowest score for each paper; first with Spark SQL, then with the Spark DataFrame API.
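The per-paper aggregates (average time, average score, highest and lowest score) reduce to a group-by followed by simple folds. The sketch below shows the logic on plain Scala collections rather than Spark; `Detail`, `Stat` and `statsByPaper` are hypothetical names, and using paperviewid as the grouping key is an assumption.

```scala
// Hypothetical in-memory version of the per-paper statistics.
object PaperStats {
  final case class Detail(paperviewid: Int, userid: Int, score: BigDecimal, spendtime: Long)
  final case class Stat(avgScore: BigDecimal, avgSpendtime: BigDecimal,
                        maxScore: BigDecimal, minScore: BigDecimal)

  /** Group the detail rows by paper and compute the four aggregates per group. */
  def statsByPaper(rows: Seq[Detail]): Map[Int, Stat] =
    rows.groupBy(_.paperviewid).map { case (paperviewid, rs) =>
      val n = BigDecimal(rs.size) // groupBy never yields an empty group
      val scores = rs.map(_.score)
      paperviewid -> Stat(
        avgScore = scores.sum / n,
        avgSpendtime = BigDecimal(rs.map(_.spendtime).sum) / n,
        maxScore = scores.max,
        minScore = scores.min)
    }
}
```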

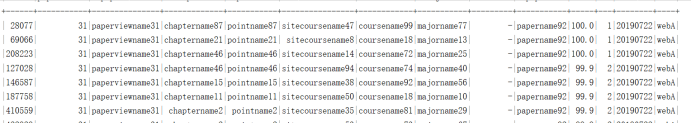

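Requirement 6: Grouped by paper, list the details of the top three users of each paper; first with Spark SQL, then with the Spark DataFrame API.

Requirement 7: Grouped by paper, list the details of the bottom three users of each paper; first with Spark SQL, then with the Spark DataFrame API.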
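Top-N and bottom-N per paper follow the same pattern: group by paper, sort within the group, keep the first N rows. A sketch on plain Scala collections, with hypothetical names and with ties broken by userid (an assumption, since the requirement does not say how ties are ranked):

```scala
// Hypothetical in-memory ranking; in Spark this is usually a window function per paper.
object PaperRanking {
  final case class UserScore(paperviewid: Int, userid: Int, score: BigDecimal)

  /** The n highest-scoring users of every paper, best first. */
  def topN(rows: Seq[UserScore], n: Int): Map[Int, Seq[UserScore]] =
    rows.groupBy(_.paperviewid).map { case (id, rs) =>
      id -> rs.sortBy(r => (-r.score, r.userid)).take(n)
    }

  /** The n lowest-scoring users of every paper, worst first. */
  def bottomN(rows: Seq[UserScore], n: Int): Map[Int, Seq[UserScore]] =
    rows.groupBy(_.paperviewid).map { case (id, rs) =>
      id -> rs.sortBy(r => (r.score, r.userid)).take(n)
    }
}
```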

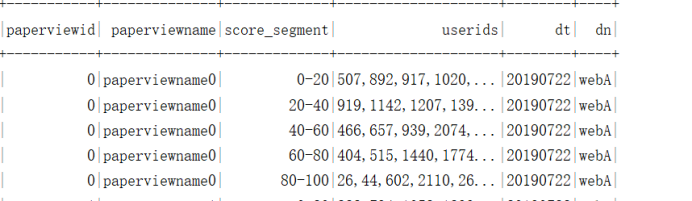

Requirement 8: List the user ids in each score segment of each paper; the segments are 0-20, 20-40, 40-60, 60-80 and 80-100.
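Assigning scores to segments can be sketched as below. The requirement does not say which segment a boundary value such as 20 belongs to, so this sketch assumes each segment includes its lower bound and excludes its upper bound, except that 100 falls into 80-100; the names are hypothetical and scores are assumed to lie in 0 to 100.

```scala
// Hypothetical segmentation helper; boundary handling is an assumption.
object ScoreSegments {
  /** Map a score in [0, 100] to its segment label. */
  def segment(score: Double): String =
    if (score < 20) "0-20"
    else if (score < 40) "20-40"
    else if (score < 60) "40-60"
    else if (score < 80) "60-80"
    else "80-100"

  /** rows are (paperviewid, userid, score); the result lists user ids per paper and segment. */
  def usersBySegment(rows: Seq[(Int, Int, Double)]): Map[(Int, String), Seq[Int]] =
    rows.groupBy { case (paper, _, score) => (paper, segment(score)) }
      .map { case (key, rs) => key -> rs.map(_._2).sorted }
}
```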

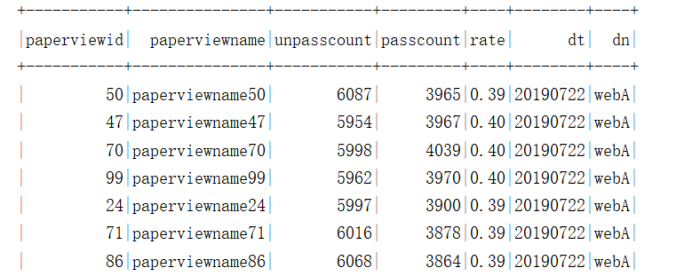

Requirement 9: For each paper, count the users who failed and the users who passed, and compute the pass rate; the pass mark is 60.
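The pass-rate arithmetic for one paper is sketched below. It assumes that a score equal to the pass mark counts as a pass and rounds the rate to two decimal places; both choices, like the names, are illustrative rather than taken from the source.

```scala
import scala.math.BigDecimal.RoundingMode

// Hypothetical pass-rate calculation for the scores of a single paper.
object PassRate {
  final case class Result(failed: Int, passed: Int, rate: BigDecimal)

  def passRate(scores: Seq[Double], passMark: Double = 60.0): Result = {
    val passed = scores.count(_ >= passMark) // assumption: exactly 60 is a pass
    val failed = scores.size - passed
    val rate =
      if (scores.isEmpty) BigDecimal(0) // avoid dividing by zero for an empty paper
      else (BigDecimal(passed) / BigDecimal(scores.size)).setScale(2, RoundingMode.HALF_UP)
    Result(failed, passed, rate)
  }
}
```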

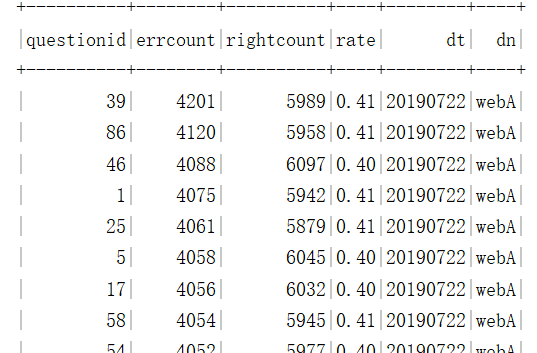

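Requirement 10: For each question, compute the number of wrong answers, the number of correct answers and the error rate.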
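The per-question error rate can be sketched from (questionid, question_answer) pairs, where question_answer is 0 for wrong and 1 for correct as in the QzMemberPaperQuestion.log sample. The names and the two-decimal rounding are assumptions.

```scala
import scala.math.BigDecimal.RoundingMode

// Hypothetical per-question error statistics on plain collections.
object QuestionErrorRate {
  final case class QStat(errors: Int, rights: Int, errorRate: BigDecimal)

  /** answers are (questionid, question_answer) pairs with 0 = wrong, 1 = correct. */
  def byQuestion(answers: Seq[(Int, Int)]): Map[Int, QStat] =
    answers.groupBy(_._1).map { case (questionid, as) =>
      val rights = as.count(_._2 == 1)
      val errors = as.size - rights
      val rate = (BigDecimal(errors) / BigDecimal(as.size)).setScale(2, RoundingMode.HALF_UP)
      questionid -> QStat(errors, rights, rate)
    }
}
```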

6.7 Importing the Data into MySQL

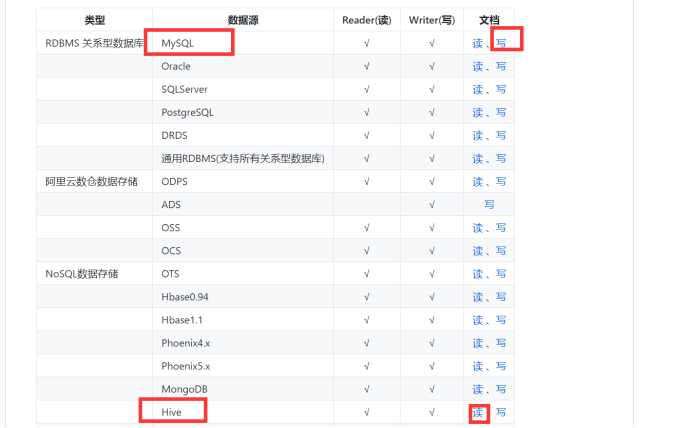

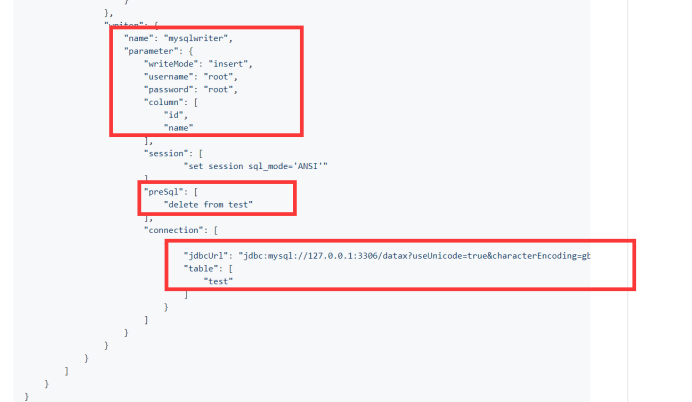

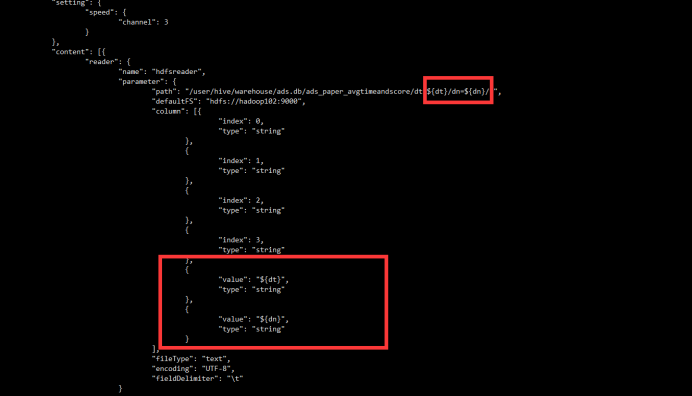

Requirement 11: After the metrics have been loaded into the ads layer, use DataX to import the ads-layer data into MySQL.

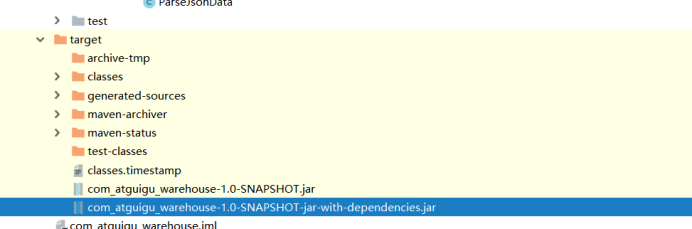

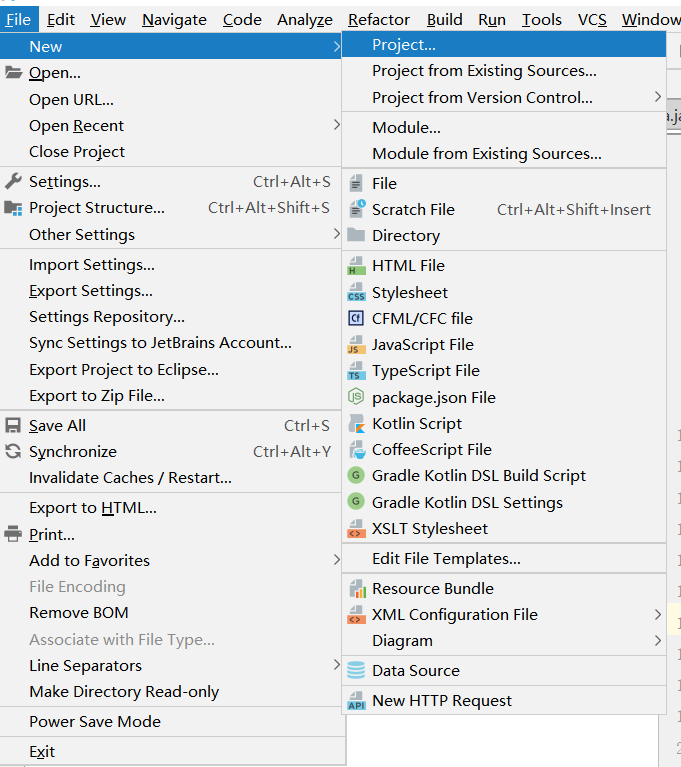

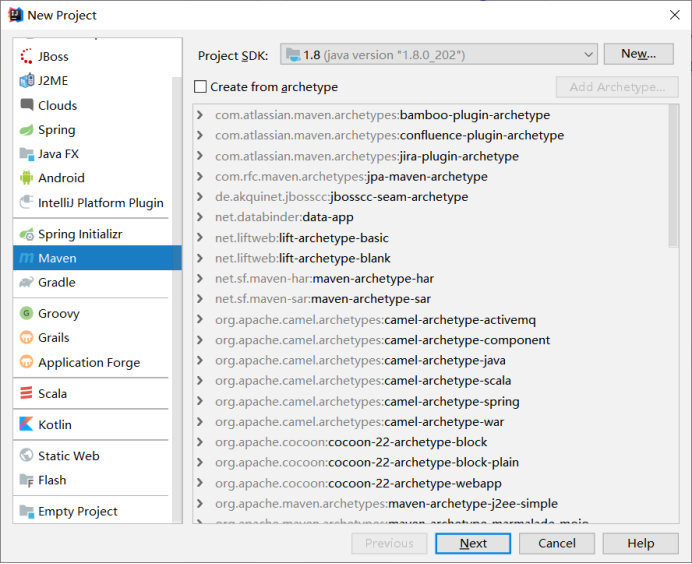

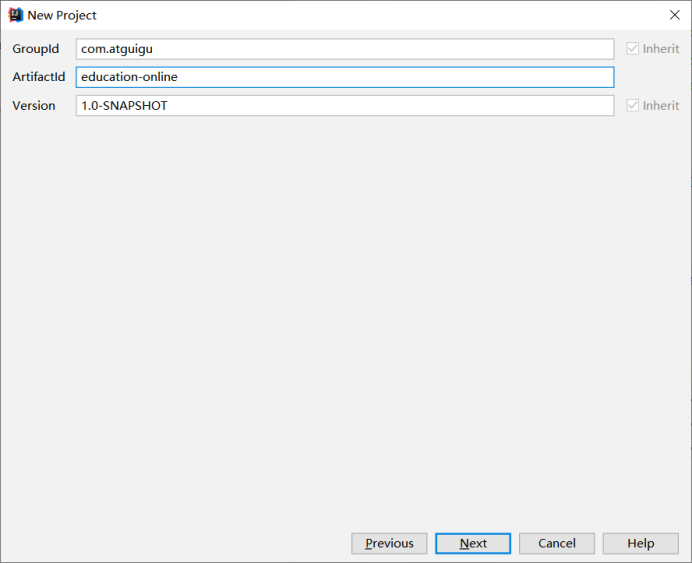

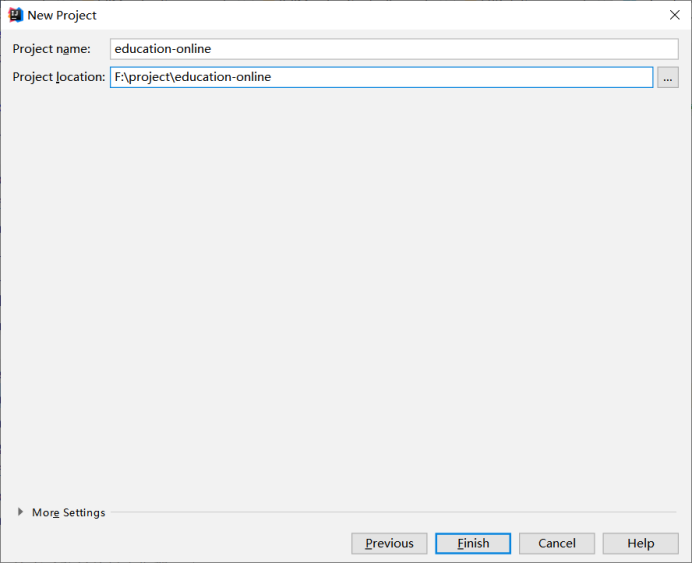

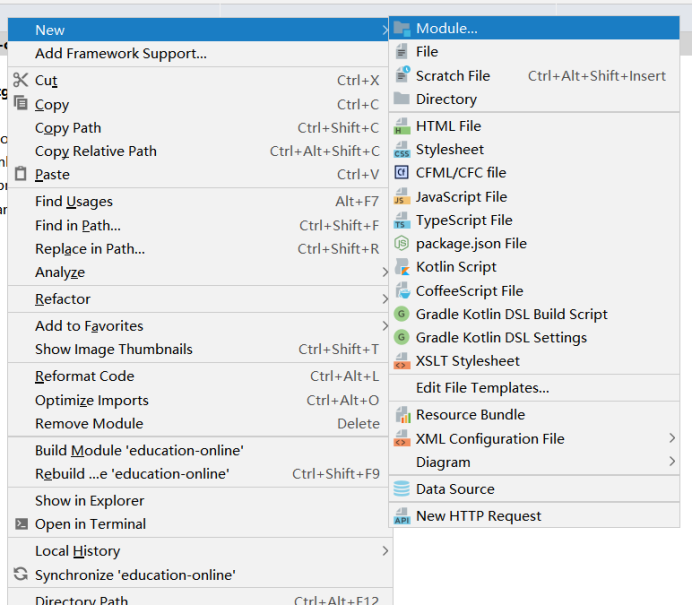

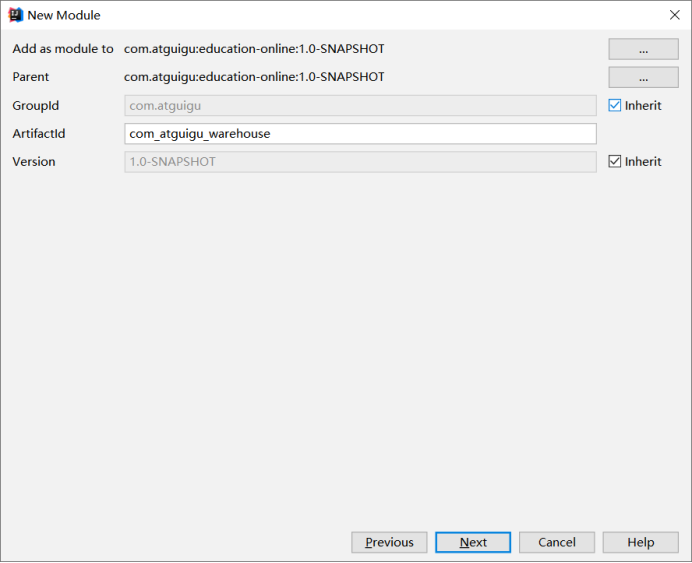

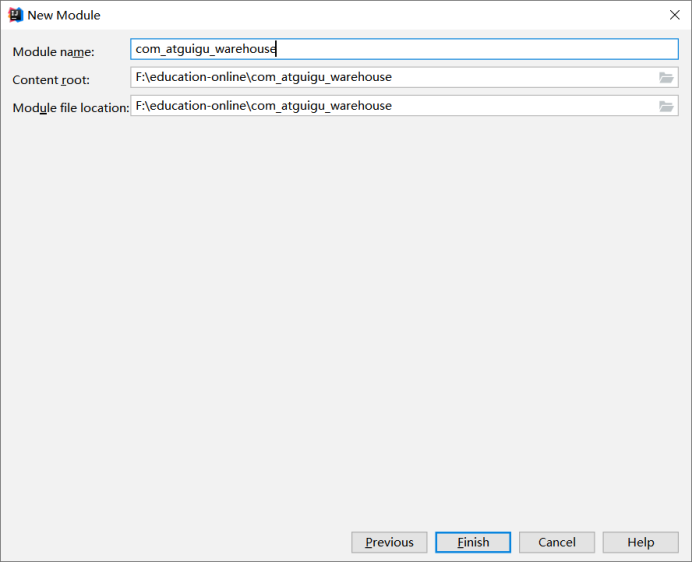

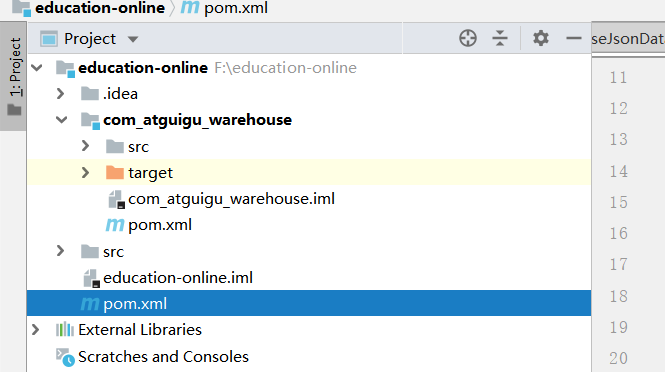

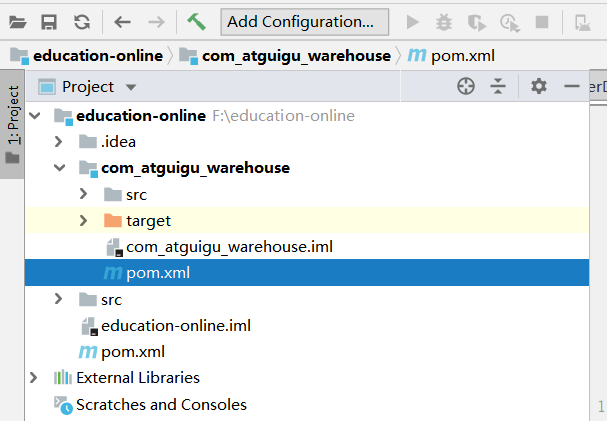

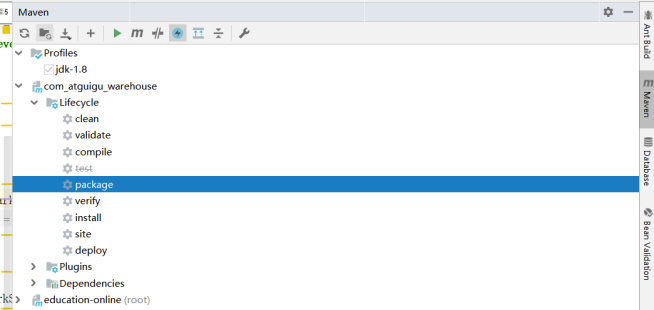

Chapter 7 Creating the Maven Project

Create the Submodule

Configure the Parent pom.xml

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>
    <groupId>com.atguigu</groupId>
    <artifactId>education-online</artifactId>
    <packaging>pom</packaging>
    <version>1.0-SNAPSHOT</version>
    <modules>
        <module>com_atguigu_warehouse</module>
    </modules>
    <properties>
        <spark.version>2.1.1</spark.version>
        <scala.version>2.11.8</scala.version>
        <log4j.version>1.2.17</log4j.version>
        <slf4j.version>1.7.22</slf4j.version>
    </properties>
    <dependencies>
        <!-- Logging framework shared by all submodules -->
        <dependency>
            <groupId>org.slf4j</groupId>
            <artifactId>jcl-over-slf4j</artifactId>
            <version>${slf4j.version}</version>
        </dependency>
        <dependency>
            <groupId>org.slf4j</groupId>
            <artifactId>slf4j-api</artifactId>
            <version>${slf4j.version}</version>
        </dependency>
        <dependency>
            <groupId>org.slf4j</groupId>
            <artifactId>slf4j-log4j12</artifactId>
            <version>${slf4j.version}</version>
        </dependency>
        <!-- Concrete logging implementation -->
        <dependency>
            <groupId>log4j</groupId>
            <artifactId>log4j</artifactId>
            <version>${log4j.version}</version>
        </dependency>
        <!-- Logging End -->
    </dependencies>
    <dependencyManagement>
        <dependencies>
            <!-- Spark jars -->
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-core_2.11</artifactId>
                <version>${spark.version}</version>
                <!-- With scope provided, the jar is expected to be supplied by the runtime environment: it is not packaged into the final artifact and is only used at compile time -->
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-sql_2.11</artifactId>
                <version>${spark.version}</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-streaming_2.11</artifactId>
                <version>${spark.version}</version>
            </dependency>
            <dependency>
                <groupId>org.scala-lang</groupId>
                <artifactId>scala-library</artifactId>
                <version>${scala.version}</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-hive_2.11</artifactId>
                <version>${spark.version}</version>
            </dependency>
        </dependencies>
    </dependencyManagement>
    <!-- Build settings -->
    <build>
        <!-- Declares and applies the plugins shared by all submodules (a plugin is the concrete implementation attached to a Maven lifecycle phase) -->
        <plugins>
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-compiler-plugin</artifactId>
                <version>3.6.1</version>
                <!-- Compile everything against JDK 1.8 -->
                <configuration>
                    <source>1.8</source>
                    <target>1.8</target>
                </configuration>
            </plugin>
        </plugins>
        <!-- Only declares the plugins available to submodules; a submodule that needs one must declare it itself -->
        <pluginManagement>
            <plugins>
                <!-- Compiles Scala sources into class files -->
                <plugin>
                    <groupId>net.alchim31.maven</groupId>
                    <artifactId>scala-maven-plugin</artifactId>
                    <version>3.2.2</version>
                    <executions>
                        <execution>
                            <!-- Bound to Maven's compile phase -->
                            <goals>
                                <goal>compile</goal>
                                <goal>testCompile</goal>
                            </goals>
                        </execution>
                    </executions>
                </plugin>
                <!-- Packaging plugin for the project -->
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-assembly-plugin</artifactId>
                    <version>3.0.0</version>
                    <executions>
                        <execution>
                            <id>make-assembly</id>
                            <phase>package</phase>
                            <goals>
                                <goal>single</goal>
                            </goals>
                        </execution>
                    </executions>
                </plugin>
            </plugins>
        </pluginManagement>
    </build>
</project>

Configure the Submodule pom.xml

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <parent>
        <artifactId>education-online</artifactId>
        <groupId>com.atguigu</groupId>
        <version>1.0-SNAPSHOT</version>
    </parent>
    <modelVersion>4.0.0</modelVersion>
    <artifactId>com_atguigu_warehouse</artifactId>
    <dependencies>
        <!-- Spark dependencies -->
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-core_2.11</artifactId>
            <version>${spark.version}</version>
            <scope>provided</scope>
        </dependency>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-sql_2.11</artifactId>
            <version>${spark.version}</version>
            <scope>provided</scope>
        </dependency>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-hive_2.11</artifactId>
            <version>${spark.version}</version>
            <scope>provided</scope>
        </dependency>
        <!-- Scala -->
        <dependency>
            <groupId>org.scala-lang</groupId>
            <artifactId>scala-library</artifactId>
            <scope>provided</scope>
        </dependency>
        <dependency>
            <groupId>com.alibaba</groupId>
            <artifactId>fastjson</artifactId>
            <version>1.2.47</version>
        </dependency>
    </dependencies>
    <build>
        <plugins>
            <plugin>
                <groupId>org.scala-tools</groupId>
                <artifactId>maven-scala-plugin</artifactId>
                <version>2.15.1</version>
                <executions>
                    <execution>
                        <id>compile-scala</id>
                        <goals>
                            <goal>add-source</goal>
                            <goal>compile</goal>
                        </goals>
                    </execution>
                    <execution>
                        <id>test-compile-scala</id>
                        <goals>
                            <goal>add-source</goal>
                            <goal>testCompile</goal>
                        </goals>
                    </execution>
                </executions>
            </plugin>
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-assembly-plugin</artifactId>
                <configuration>
                    <archive>
                        <manifest>
                        </manifest>
                    </archive>
                    <descriptorRefs>
                        <descriptorRef>jar-with-dependencies</descriptorRef>
                    </descriptorRefs>
                </configuration>
            </plugin>
        </plugins>
    </build>
</project>

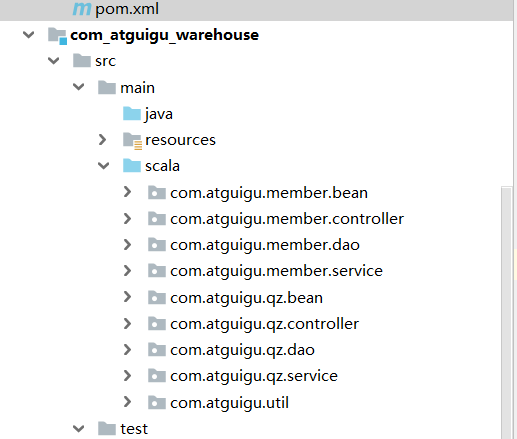

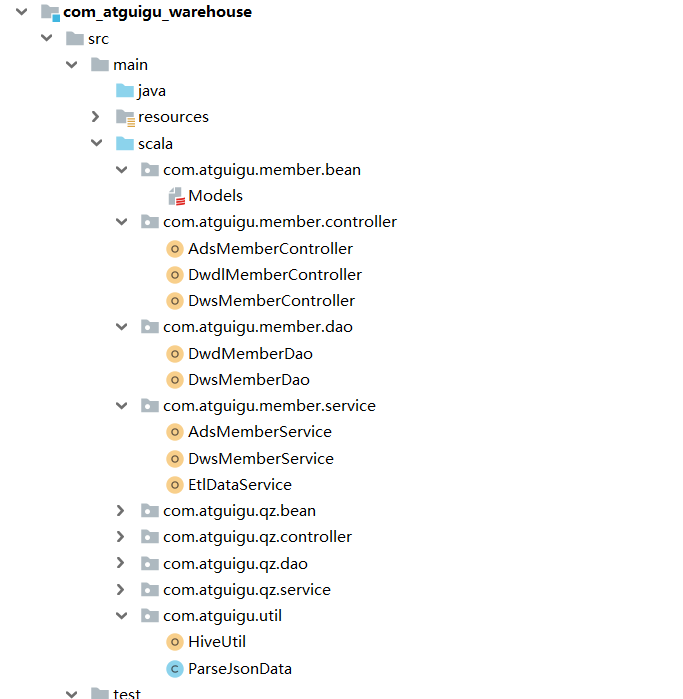

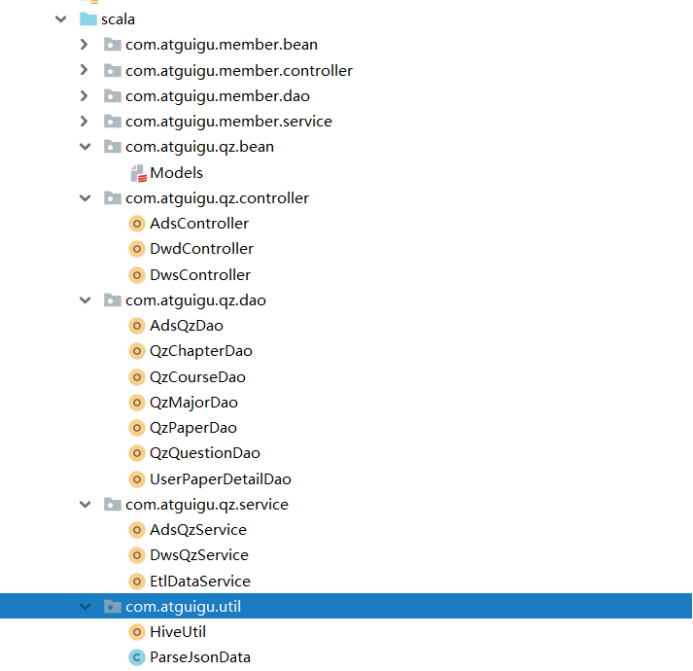

Create the corresponding project packages

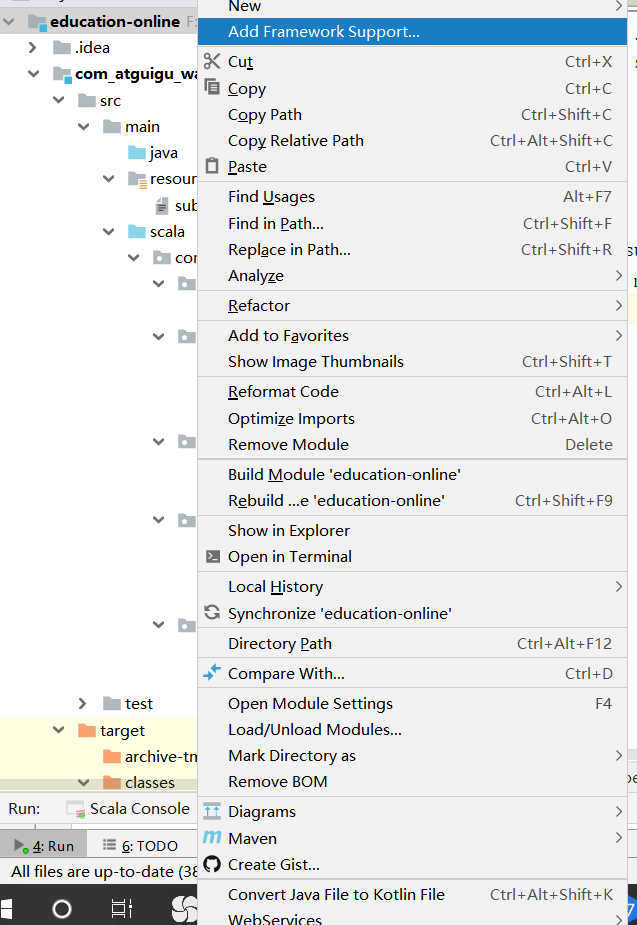

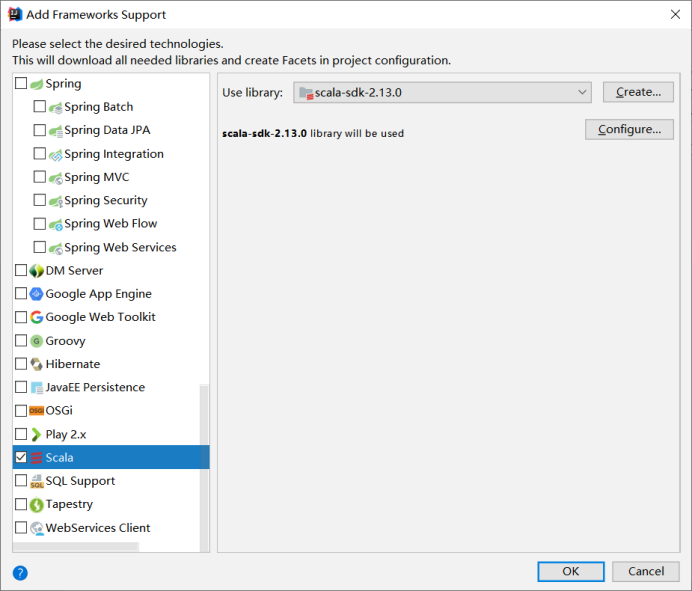

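Add Scala library support

The bean package holds the entity classes

The controller package holds the program entry-point classes

The dao package holds the SQL classes for each table

The service package holds the business classes for each table

The util package holds the utility classes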

Chapter 8 User Registration Module Implementation

8.1 Overall Class Structure

8.2 Preparing the Case Classes

package com.atguigu.member.bean

case class MemberZipper(
    uid: Int,
    var paymoney: String,
    vip_level: String,
    start_time: String,
    var end_time: String,
    dn: String
)

case class MemberZipperResult(list: List[MemberZipper])

case class QueryResult(
    uid: Int, ad_id: Int, memberlevel: String, register: String,
    appregurl: String, // registration source URL
    regsource: String, regsourcename: String, adname: String,
    siteid: String, sitename: String, vip_level: String,
    paymoney: BigDecimal, dt: String, dn: String
)

case class DwsMember(
    uid: Int, ad_id: Int, fullname: String, iconurl: String,
    lastlogin: String, mailaddr: String, memberlevel: String, password: String,
    paymoney: BigDecimal, phone: String, qq: String, register: String,
    regupdatetime: String, unitname: String, userip: String, zipcode: String,
    appkey: String, appregurl: String, bdp_uuid: String, reg_createtime: String,
    isranreg: String, regsource: String, regsourcename: String, adname: String,
    siteid: String, sitename: String, siteurl: String, site_delete: String,
    site_createtime: String, site_creator: String, vip_id: String, vip_level: String,
    vip_start_time: String, vip_end_time: String, vip_last_modify_time: String,
    vip_max_free: String, vip_min_free: String, vip_next_level: String,
    vip_operator: String, dt: String, dn: String
)

case class DwsMember_Result(
    uid: Int, ad_id: Int, fullname: String, icounurl: String,
    lastlogin: String, mailaddr: String, memberlevel: String, password: String,
    paymoney: String, phone: String, qq: String, register: String,
    regupdatetime: String, unitname: String, userip: String, zipcode: String,
    appkey: String, appregurl: String, bdp_uuid: String, reg_createtime: String,
    isranreg: String, regsource: String, regsourcename: String, adname: String,
    siteid: String, sitename: String, siteurl: String, site_delete: String,
    site_createtime: String, site_creator: String, vip_id: String, vip_level: String,
    vip_start_time: String, vip_end_time: String, vip_last_modify_time: String,
    vip_max_free: String, vip_min_free: String, vip_next_level: String,
    vip_operator: String, dt: String, dn: String
)

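8.3 Creating the Utility Classes

JSON is parsed with fastjson. Create the ParseJsonData utility class in the util package:

package com.atguigu.util;

import com.alibaba.fastjson.JSONObject;

public class ParseJsonData {

    // Returns the parsed object, or null if the line is not valid JSON
    public static JSONObject getJsonData(String data) {
        try {
            return JSONObject.parseObject(data);
        } catch (Exception e) {
            return null;
        }
    }
}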

Create the Hive utility class HiveUtil in the util package:

package com.atguigu.util

import org.apache.spark.sql.SparkSession

object HiveUtil {

  /**
    * Raise the maximum number of dynamic partitions
    */
  def setMaxpartitions(spark: SparkSession) = {
    spark.sql("set hive.exec.dynamic.partition=true")
    spark.sql("set hive.exec.dynamic.partition.mode=nonstrict")
    spark.sql("set hive.exec.max.dynamic.partitions=100000")
    spark.sql("set hive.exec.max.dynamic.partitions.pernode=100000")
    spark.sql("set hive.exec.max.created.files=100000")
  }

  /**
    * Enable output compression
    */
  def openCompression(spark: SparkSession) = {
    spark.sql("set mapred.output.compress=true")
    spark.sql("set hive.exec.compress.output=true")
  }

  /**
    * Enable dynamic partitioning in non-strict mode
    */
  def openDynamicPartition(spark: SparkSession) = {
    spark.sql("set hive.exec.dynamic.partition=true")
    spark.sql("set hive.exec.dynamic.partition.mode=nonstrict")
  }

  /**
    * Use LZO compression
    */
  def useLzoCompression(spark: SparkSession) = {
    spark.sql("set io.compression.codec.lzo.class=com.hadoop.compression.lzo.LzoCodec")
    spark.sql("set mapred.output.compression.codec=com.hadoop.compression.lzo.LzopCodec")
  }

  /**
    * Use Snappy compression
    */
  def useSnappyCompression(spark: SparkSession) = {
    spark.sql("set mapreduce.map.output.compress.codec=org.apache.hadoop.io.compress.SnappyCodec")
    spark.sql("set mapreduce.output.fileoutputformat.compress=true")
    spark.sql("set mapreduce.output.fileoutputformat.compress.codec=org.apache.hadoop.io.compress.SnappyCodec")
  }
}

8.4 Cleaning and Loading the Logs

After the raw log data has been collected, it must be cleaned, and the cleaned data is stored in the dwd-layer tables.

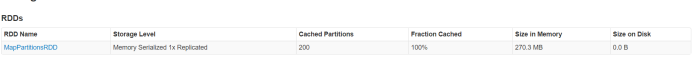

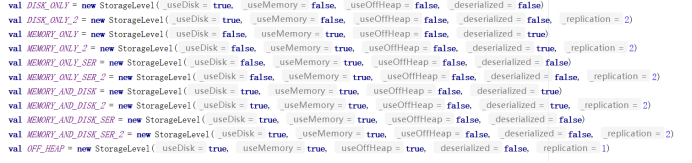

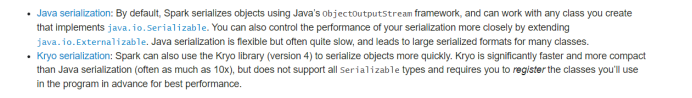

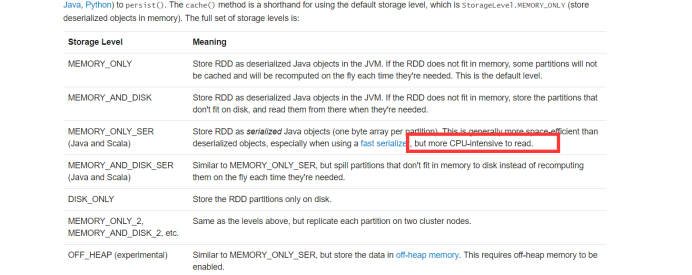

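Create the EtlDataService cleaning class. It reads the raw log data from HDFS, cleans it, and masks the sensitive fields name and phone number. filter drops log lines that cannot be parsed as JSON; mapPartitions then maps over the records of each partition to assemble the fields required by the target table; finally coalesce reduces the number of partitions (and therefore the number of output files) before the data is written to the target table.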

package com.atguigu.member.service

import com.alibaba.fastjson.JSONObject
import com.atguigu.util.ParseJsonData
import org.apache.spark.SparkContext
import org.apache.spark.sql.{SaveMode, SparkSession}

object EtlDataService {

  /**
    * ETL for the user registration information
    */
  def etlMemberRegtypeLog(ssc: SparkContext, sparkSession: SparkSession) = {
    import sparkSession.implicits._ // implicit conversions
    ssc.textFile("/user/atguigu/ods/memberRegtype.log")
      .filter(item => {
        val obj = ParseJsonData.getJsonData(item)
        obj.isInstanceOf[JSONObject]
      }).mapPartitions(partition => {
      partition.map(item => {
        val jsonObject = ParseJsonData.getJsonData(item)
        val appkey = jsonObject.getString("appkey")
        val appregurl = jsonObject.getString("appregurl")
        val bdp_uuid = jsonObject.getString("bdp_uuid")
        val createtime = jsonObject.getString("createtime")
        val isranreg = jsonObject.getString("isranreg")
        val regsource = jsonObject.getString("regsource")
        val regsourceName = regsource match {
          case "1" => "PC"
          case "2" => "Mobile"
          case "3" => "App"
          case "4" => "WeChat"
          case _ => "other"
        }
        val uid = jsonObject.getIntValue("uid")
        val websiteid = jsonObject.getIntValue("websiteid")
        val dt = jsonObject.getString("dt")
        val dn = jsonObject.getString("dn")
        (uid, appkey, appregurl, bdp_uuid, createtime, isranreg, regsource, regsourceName, websiteid, dt, dn)
      })
    }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_member_regtype")
  }

  /**
    * ETL for the user table
    */
  def etlMemberLog(ssc: SparkContext, sparkSession: SparkSession) = {
    import sparkSession.implicits._ // implicit conversions
    ssc.textFile("/user/atguigu/ods/member.log").filter(item => {
      val obj = ParseJsonData.getJsonData(item)
      obj.isInstanceOf[JSONObject]
    }).mapPartitions(partition => {
      partition.map(item => {
        val jsonObject = ParseJsonData.getJsonData(item)
        val ad_id = jsonObject.getIntValue("ad_id")
        val birthday = jsonObject.getString("birthday")
        val email = jsonObject.getString("email")
        // mask the name: keep only the first character
        val fullname = jsonObject.getString("fullname").substring(0, 1) + "xx"
        val iconurl = jsonObject.getString("iconurl")
        val lastlogin = jsonObject.getString("lastlogin")
        val mailaddr = jsonObject.getString("mailaddr")
        val memberlevel = jsonObject.getString("memberlevel")
        val password = "******" // never keep the real password
        val paymoney = jsonObject.getString("paymoney")
        val phone = jsonObject.getString("phone")
        // mask the phone number; assumes an 11-character phone number
        val newphone = phone.substring(0, 3) + "*****" + phone.substring(7, 11)
        val qq = jsonObject.getString("qq")
        val register = jsonObject.getString("register")
        val regupdatetime = jsonObject.getString("regupdatetime")
        val uid = jsonObject.getIntValue("uid")
        val unitname = jsonObject.getString("unitname")
        val userip = jsonObject.getString("userip")
        val zipcode = jsonObject.getString("zipcode")
        val dt = jsonObject.getString("dt")
        val dn = jsonObject.getString("dn")
        (uid, ad_id, birthday, email, fullname, iconurl, lastlogin, mailaddr, memberlevel, password, paymoney, newphone, qq,
          register, regupdatetime, unitname, userip, zipcode, dt, dn)
      })
    }).toDF().coalesce(2).write.mode(SaveMode.Append).insertInto("dwd.dwd_member")
  }

  /**
    * Load the base advertisement table
    */
  def etlBaseAdLog(ssc: SparkContext, sparkSession: SparkSession) = {
    import sparkSession.implicits._ // implicit conversions
    val result = ssc.textFile("/user/atguigu/ods/baseadlog.log").filter(item => {
      val obj = ParseJsonData.getJsonData(item)
      obj.isInstanceOf[JSONObject]
    }).mapPartitions(partition => {
      partition.map(item => {
        val jsonObject = ParseJsonData.getJsonData(item)
        val adid = jsonObject.getIntValue("adid")
        val adname = jsonObject.getString("adname")
        val dn = jsonObject.getString("dn")
        (adid, adname, dn)
      })
    }).toDF().coalesce(1).write.mode(SaveMode.Overwrite).insertInto("dwd.dwd_base_ad")
  }

  /**
    * Load the base website table
    */
  def etlBaseWebSiteLog(ssc: SparkContext, sparkSession: SparkSession) = {
    import sparkSession.implicits._ // implicit conversions
    ssc.textFile("/user/atguigu/ods/baswewebsite.log").filter(item => {
      val obj = ParseJsonData.getJsonData(item)
      obj.isInstanceOf[JSONObject]
    }).mapPartitions(partition => {
      partition.map(item => {
        val jsonObject = ParseJsonData.getJsonData(item)
        val siteid = jsonObject.getIntValue("siteid")
        val sitename = jsonObject.getString("sitename")
        val siteurl = jsonObject.getString("siteurl")
        val delete = jsonObject.getIntValue("delete")
        val createtime = jsonObject.getString("createtime")
        val creator = jsonObject.getString("creator")
        val dn = jsonObject.getString("dn")
        (siteid, sitename, siteurl, delete, createtime, creator, dn)
      })
    }).toDF().coalesce(1).write.mode(SaveMode.Overwrite).insertInto("dwd.dwd_base_website")
  }

  /**
    * Load the user payment information
    */
  def etlMemPayMoneyLog(ssc: SparkContext, sparkSession: SparkSession) = {
    import sparkSession.implicits._ // implicit conversions
    ssc.textFile("/user/atguigu/ods/pcentermempaymoney.log").filter(item => {
      val obj = ParseJsonData.getJsonData(item)
      obj.isInstanceOf[JSONObject]
    }).mapPartitions(partition => {
      partition.map(item => {
        val jSONObject = ParseJsonData.getJsonData(item)
        val paymoney = jSONObject.getString("paymoney")
        val uid = jSONObject.getIntValue("uid")
        val vip_id = jSONObject.getIntValue("vip_id")
        val site_id = jSONObject.getIntValue("siteid")
        val dt = jSONObject.getString("dt")
        val dn = jSONObject.getString("dn")
        (uid, paymoney, site_id, vip_id, dt, dn)
      })
    }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_pcentermempaymoney")
  }

  /**
    * Load the base VIP level data
    */
  def etlMemVipLevelLog(ssc: SparkContext, sparkSession: SparkSession) = {
    import sparkSession.implicits._ // implicit conversions
    ssc.textFile("/user/atguigu/ods/pcenterMemViplevel.log").filter(item => {
      val obj = ParseJsonData.getJsonData(item)
      obj.isInstanceOf[JSONObject]
    }).mapPartitions(partition => {
      partition.map(item => {
        val jSONObject = ParseJsonData.getJsonData(item)
        val discountval = jSONObject.getString("discountval")
        val end_time = jSONObject.getString("end_time")
        val last_modify_time = jSONObject.getString("last_modify_time")
        val max_free = jSONObject.getString("max_free")
        val min_free = jSONObject.getString("min_free")
        val next_level = jSONObject.getString("next_level")
        val operator = jSONObject.getString("operator")
        val start_time = jSONObject.getString("start_time")
        val vip_id = jSONObject.getIntValue("vip_id")
        val vip_level = jSONObject.getString("vip_level")
        val dn = jSONObject.getString("dn")
        (vip_id, vip_level, start_time, end_time, last_modify_time, max_free, min_free, next_level, operator, dn)
      })
    }).toDF().coalesce(1).write.mode(SaveMode.Overwrite).insertInto("dwd.dwd_vip_level")
  }
}

8.5 Creating DwdMemberController

package com.atguigu.member.controller

import com.atguigu.member.service.EtlDataService
import com.atguigu.util.HiveUtil
import org.apache.spark.SparkConf
import org.apache.spark.sql.SparkSession

object DwdMemberController {
  def main(args: Array[String]): Unit = {
    System.setProperty("HADOOP_USER_NAME", "atguigu")
    val sparkConf = new SparkConf().setAppName("dwd_member_import").setMaster("local[*]")
    val sparkSession = SparkSession.builder().config(sparkConf).enableHiveSupport().getOrCreate()
    val ssc = sparkSession.sparkContext
    HiveUtil.openDynamicPartition(sparkSession) // enable dynamic partitioning
    HiveUtil.openCompression(sparkSession) // enable compression
    HiveUtil.useSnappyCompression(sparkSession) // use Snappy compression
    // clean the raw user data and store it in the dwd-layer tables
    EtlDataService.etlBaseAdLog(ssc, sparkSession) // load the base advertisement table
    EtlDataService.etlBaseWebSiteLog(ssc, sparkSession) // load the base website table
    EtlDataService.etlMemberLog(ssc, sparkSession) // clean the user data
    EtlDataService.etlMemberRegtypeLog(ssc, sparkSession) // clean the user registration data
    EtlDataService.etlMemPayMoneyLog(ssc, sparkSession) // load the user payment records
    EtlDataService.etlMemVipLevelLog(ssc, sparkSession) // load the base VIP data
  }
}

8.6 Creating DwdMemberDao

package com.atguigu.member.dao

import org.apache.spark.sql.SparkSession

object DwdMemberDao {
  def getDwdMember(sparkSession: SparkSession) = {
    sparkSession.sql("select uid,ad_id,email,fullname,iconurl,lastlogin,mailaddr,memberlevel," +
      "password,phone,qq,register,regupdatetime,unitname,userip,zipcode,dt,dn from dwd.dwd_member")
  }

  def getDwdMemberRegType(sparkSession: SparkSession) = {
    sparkSession.sql("select uid,appkey,appregurl,bdp_uuid,createtime as reg_createtime,domain,isranreg," +
      "regsource,regsourcename,websiteid as siteid,dn from dwd.dwd_member_regtype ")
  }

  def getDwdBaseAd(sparkSession: SparkSession) = {
    sparkSession.sql("select adid as ad_id,adname,dn from dwd.dwd_base_ad")
  }

  def getDwdBaseWebSite(sparkSession: SparkSession) = {
    sparkSession.sql("select siteid,sitename,siteurl,delete as site_delete," +
      "createtime as site_createtime,creator as site_creator,dn from dwd.dwd_base_website")
  }

  def getDwdVipLevel(sparkSession: SparkSession) = {
    sparkSession.sql("select vip_id,vip_level,start_time as vip_start_time,end_time as vip_end_time," +
      "last_modify_time as vip_last_modify_time,max_free as vip_max_free,min_free as vip_min_free," +
      "next_level as vip_next_level,operator as vip_operator,dn from dwd.dwd_vip_level")
  }

  def getDwdPcentermemPayMoney(sparkSession: SparkSession) = {
    sparkSession.sql("select uid,cast(paymoney as decimal(10,4)) as paymoney,vip_id,dn from dwd.dwd_pcentermempaymoney")
  }
}

8.7 Build the DWS-layer wide table and zipper table from the DWD-layer tables

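The wide table can be implemented in two ways:

The first queries each source table into its own DataFrame, combines them with the join operator, and then uses the groupByKey operator to deduplicate rows and compute aggregates such as maximum and minimum values.

The second does everything directly in Spark SQL.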
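The groupByKey step of the first approach can be pictured with plain Scala collections. The sketch below is only an illustration, not the project's code: `JoinedRow` and `WideRow` are hypothetical, trimmed-down stand-ins for the joined rows, and `groupBy` on a `Seq` stands in for Spark's `groupByKey`/`mapGroups`. It collapses the duplicate rows that a left join produces for one user into a single row, summing the payments and keeping the maximum VIP level.

```scala
// Hypothetical, trimmed-down row types; the real wide table has many more columns.
final case class JoinedRow(uid: Int, dn: String, fullname: String, paymoney: BigDecimal, vipLevel: String)
final case class WideRow(uid: Int, dn: String, fullname: String, paymoney: BigDecimal, vipLevel: String)

object WideTableSketch {
  // Group by the (uid, dn) pair, then reduce each group to one row.
  def toWide(rows: Seq[JoinedRow]): Seq[WideRow] =
    rows.groupBy(r => (r.uid, r.dn)).toSeq.map { case ((uid, dn), group) =>
      WideRow(
        uid = uid,
        dn = dn,
        fullname = group.head.fullname,       // descriptive columns: take the first value
        paymoney = group.map(_.paymoney).sum, // payments: add them up
        vipLevel = group.map(_.vipLevel).max  // VIP level: keep the maximum (string ordering)
      )
    }.sortBy(w => (w.uid, w.dn))
}
```

Grouping by a tuple rather than a concatenated string means the key never has to be split apart again.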

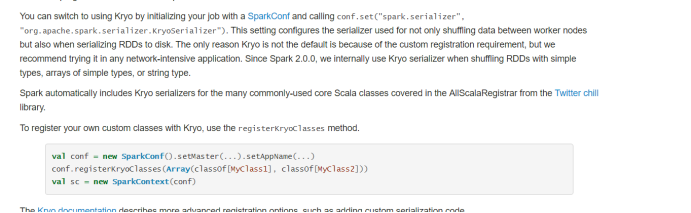

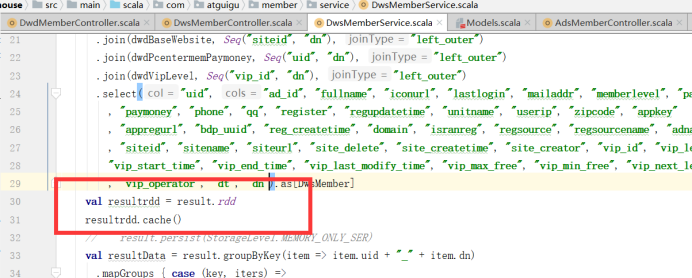

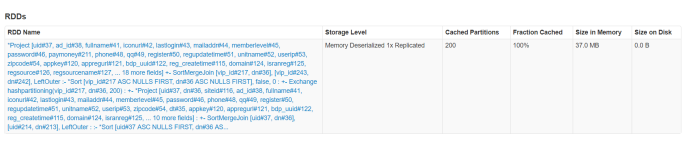

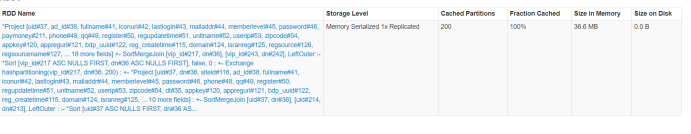

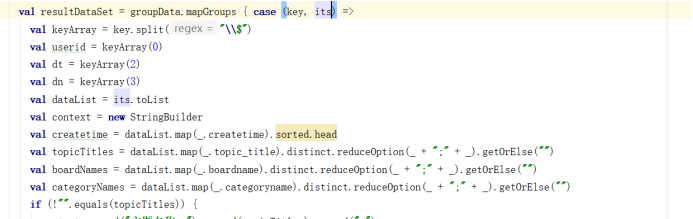

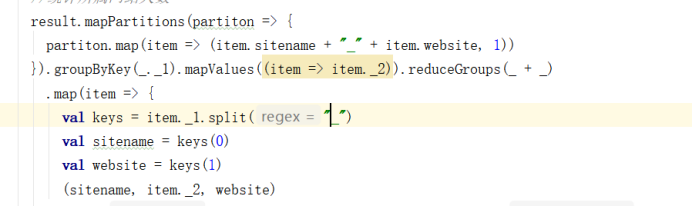

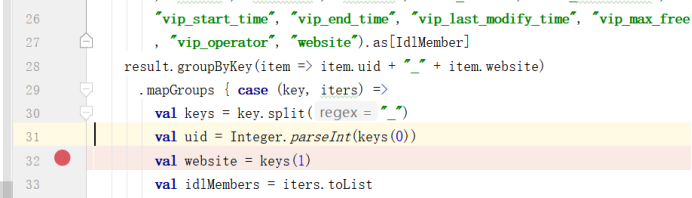

package com.atguigu.member.service

import com.atguigu.member.bean.{DwsMember, DwsMember_Result, MemberZipper, MemberZipperResult}
import com.atguigu.member.dao.DwdMemberDao
import org.apache.spark.sql.{SaveMode, SparkSession}

object DwsMemberService {

  def importMemberUseApi(sparkSession: SparkSession, dt: String) = {
    import sparkSession.implicits._ // implicit conversions
    val dwdMember = DwdMemberDao.getDwdMember(sparkSession).where(s"dt='${dt}'") // main table: member table
    val dwdMemberRegtype = DwdMemberDao.getDwdMemberRegType(sparkSession)
    val dwdBaseAd = DwdMemberDao.getDwdBaseAd(sparkSession)
    val dwdBaseWebsite = DwdMemberDao.getDwdBaseWebSite(sparkSession)
    val dwdPcentermemPaymoney = DwdMemberDao.getDwdPcentermemPayMoney(sparkSession)
    val dwdVipLevel = DwdMemberDao.getDwdVipLevel(sparkSession)
    import org.apache.spark.sql.functions.broadcast
    val result = dwdMember.join(dwdMemberRegtype, Seq("uid", "dn"), "left_outer")
      .join(broadcast(dwdBaseAd), Seq("ad_id", "dn"), "left_outer")
      .join(broadcast(dwdBaseWebsite), Seq("siteid", "dn"), "left_outer")
      .join(broadcast(dwdPcentermemPaymoney), Seq("uid", "dn"), "left_outer")
      .join(broadcast(dwdVipLevel), Seq("vip_id", "dn"), "left_outer")
      .select("uid", "ad_id", "fullname", "iconurl", "lastlogin", "mailaddr", "memberlevel", "password",
        "paymoney", "phone", "qq", "register", "regupdatetime", "unitname", "userip", "zipcode", "appkey",
        "appregurl", "bdp_uuid", "reg_createtime", "domain", "isranreg", "regsource", "regsourcename", "adname",
        "siteid", "sitename", "siteurl", "site_delete", "site_createtime", "site_creator", "vip_id", "vip_level",
        "vip_start_time", "vip_end_time", "vip_last_modify_time", "vip_max_free", "vip_min_free", "vip_next_level",
        "vip_operator", "dt", "dn").as[DwsMember]
    result.groupByKey(item => item.uid + "_" + item.dn)
      .mapGroups { case (key, iters) =>
        val keys = key.split("_")
        val uid = Integer.parseInt(keys(0))
        val dn = keys(1)
        val dwsMembers = iters.toList
        val paymoney = dwsMembers.filter(_.paymoney != null).map(_.paymoney).reduceOption(_ + _).getOrElse(BigDecimal.apply(0.00)).toString
        val ad_id = dwsMembers.map(_.ad_id).head
        val fullname = dwsMembers.map(_.fullname).head
        val icounurl = dwsMembers.map(_.iconurl).head
        val lastlogin = dwsMembers.map(_.lastlogin).head
        val mailaddr = dwsMembers.map(_.mailaddr).head
        val memberlevel = dwsMembers.map(_.memberlevel).head
        val password = dwsMembers.map(_.password).head
        val phone = dwsMembers.map(_.phone).head
        val qq = dwsMembers.map(_.qq).head
        val register = dwsMembers.map(_.register).head
        val regupdatetime = dwsMembers.map(_.regupdatetime).head
        val unitname = dwsMembers.map(_.unitname).head
        val userip = dwsMembers.map(_.userip).head
        val zipcode = dwsMembers.map(_.zipcode).head
        val appkey = dwsMembers.map(_.appkey).head
        val appregurl = dwsMembers.map(_.appregurl).head
        val bdp_uuid = dwsMembers.map(_.bdp_uuid).head
        val reg_createtime = dwsMembers.map(_.reg_createtime).head
        val domain = dwsMembers.map(_.domain).head
        val isranreg = dwsMembers.map(_.isranreg).head
        val regsource = dwsMembers.map(_.regsource).head
        val regsourcename = dwsMembers.map(_.regsourcename).head
        val adname = dwsMembers.map(_.adname).head
        val siteid = dwsMembers.map(_.siteid).head
        val sitename = dwsMembers.map(_.sitename).head
        val siteurl = dwsMembers.map(_.siteurl).head
        val site_delete = dwsMembers.map(_.site_delete).head
        val site_createtime = dwsMembers.map(_.site_createtime).head
        val site_creator = dwsMembers.map(_.site_creator).head
        val vip_id = dwsMembers.map(_.vip_id).head
        val vip_level = dwsMembers.map(_.vip_level).max
        val vip_start_time = dwsMembers.map(_.vip_start_time).min
        val vip_end_time = dwsMembers.map(_.vip_end_time).max
        val vip_last_modify_time = dwsMembers.map(_.vip_last_modify_time).max
        val vip_max_free = dwsMembers.map(_.vip_max_free).head
        val vip_min_free = dwsMembers.map(_.vip_min_free).head
        val vip_next_level = dwsMembers.map(_.vip_next_level).head
        val vip_operator = dwsMembers.map(_.vip_operator).head
        DwsMember_Result(uid, ad_id, fullname, icounurl, lastlogin, mailaddr, memberlevel, password, paymoney,
          phone, qq, register, regupdatetime, unitname, userip, zipcode, appkey, appregurl,
          bdp_uuid, reg_createtime, domain, isranreg, regsource, regsourcename, adname, siteid,
          sitename, siteurl, site_delete, site_createtime, site_creator, vip_id, vip_level,
          vip_start_time, vip_end_time, vip_last_modify_time, vip_max_free, vip_min_free,
          vip_next_level, vip_operator, dt, dn)
      }.show()
  }

  def importMember(sparkSession: SparkSession, time: String) = {
    import sparkSession.implicits._ // implicit conversions
    // Query the full data set and refresh the wide table
    sparkSession.sql("select uid,first(ad_id),first(fullname),first(iconurl),first(lastlogin)," +
      "first(mailaddr),first(memberlevel),first(password),sum(cast(paymoney as decimal(10,4))),first(phone),first(qq)," +
      "first(register),first(regupdatetime),first(unitname),first(userip),first(zipcode)," +
      "first(appkey),first(appregurl),first(bdp_uuid),first(reg_createtime),first(domain)," +
      "first(isranreg),first(regsource),first(regsourcename),first(adname),first(siteid),first(sitename)," +
      "first(siteurl),first(site_delete),first(site_createtime),first(site_creator),first(vip_id),max(vip_level)," +
      "min(vip_start_time),max(vip_end_time),max(vip_last_modify_time),first(vip_max_free),first(vip_min_free),max(vip_next_level)," +
      "first(vip_operator),dt,dn from" +
      "(select a.uid,a.ad_id,a.fullname,a.iconurl,a.lastlogin,a.mailaddr,a.memberlevel," +
      "a.password,e.paymoney,a.phone,a.qq,a.register,a.regupdatetime,a.unitname,a.userip," +
      "a.zipcode,a.dt,b.appkey,b.appregurl,b.bdp_uuid,b.createtime as reg_createtime,b.domain,b.isranreg,b.regsource," +
      "b.regsourcename,c.adname,d.siteid,d.sitename,d.siteurl,d.delete as site_delete,d.createtime as site_createtime," +
      "d.creator as site_creator,f.vip_id,f.vip_level,f.start_time as vip_start_time,f.end_time as vip_end_time," +
      "f.last_modify_time as vip_last_modify_time,f.max_free as vip_max_free,f.min_free as vip_min_free," +
      "f.next_level as vip_next_level,f.operator as vip_operator,a.dn " +
      s"from dwd.dwd_member a left join dwd.dwd_member_regtype b on a.uid=b.uid " +
      "and a.dn=b.dn left join dwd.dwd_base_ad c on a.ad_id=c.adid and a.dn=c.dn left join " +
      " dwd.dwd_base_website d on b.websiteid=d.siteid and b.dn=d.dn left join dwd.dwd_pcentermempaymoney e" +
      s" on a.uid=e.uid and a.dn=e.dn left join dwd.dwd_vip_level f on e.vip_id=f.vip_id and e.dn=f.dn where a.dt='${time}')r " +
      "group by uid,dn,dt").coalesce(3).write.mode(SaveMode.Overwrite).insertInto("dws.dws_member")

    // Query the day's incremental data
    val dayResult = sparkSession.sql(s"select a.uid,sum(cast(a.paymoney as decimal(10,4))) as paymoney,max(b.vip_level) as vip_level," +
      s"from_unixtime(unix_timestamp('$time','yyyyMMdd'),'yyyy-MM-dd') as start_time,'9999-12-31' as end_time,first(a.dn) as dn " +
      " from dwd.dwd_pcentermempaymoney a join " +
      s"dwd.dwd_vip_level b on a.vip_id=b.vip_id and a.dn=b.dn where a.dt='$time' group by uid").as[MemberZipper]

    // Query the historical zipper-table data
    val historyResult = sparkSession.sql("select * from dws.dws_member_zipper").as[MemberZipper]

    // Group both data sets by user id and rewrite end_time
    val reuslt = dayResult.union(historyResult).groupByKey(item => item.uid + "_" + item.dn)
      .mapGroups { case (key, iters) =>
        val keys = key.split("_")
        val uid = keys(0)
        val dn = keys(1)
        val list = iters.toList.sortBy(item => item.start_time) // sort by start time
        if (list.size > 1 && "9999-12-31".equals(list(list.size - 2).end_time)) {
          // Historical data exists, so its end_time must be updated
          // Get the last record of the historical data
          val oldLastModel = list(list.size - 2)
          // Get the latest record for the current date
          val lastModel = list(list.size - 1)
          oldLastModel.end_time = lastModel.start_time
          lastModel.paymoney = list.map(item => BigDecimal.apply(item.paymoney)).sum.toString
        }
        MemberZipperResult(list)
      }.flatMap(_.list).coalesce(3).write.mode(SaveMode.Overwrite).insertInto("dws.dws_member_zipper") // flatten the regrouped objects and refresh the zipper table
  }
}
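The zipper-table update in `importMember` is easier to follow on a small in-memory example. The following is a minimal sketch under stated assumptions, not the project's implementation: `ZipRow` is a hypothetical immutable stand-in for `MemberZipper`, and plain Scala collections replace the Dataset operations. For each (uid, dn) key it sorts the records by start time. If the second-to-last record is still open (its end time is `9999-12-31`), it closes that record at the newest record's start time and stores the summed payment on the newest record.

```scala
// Hypothetical stand-in for MemberZipper; dates are yyyy-MM-dd strings,
// so lexicographic order equals chronological order.
final case class ZipRow(uid: Int, paymoney: BigDecimal, vipLevel: String,
                        startTime: String, endTime: String, dn: String)

object ZipperSketch {
  val OpenEnd = "9999-12-31" // marks the currently valid record

  def merge(history: Seq[ZipRow], today: Seq[ZipRow]): Seq[ZipRow] =
    (history ++ today).groupBy(r => (r.uid, r.dn)).toSeq.flatMap { case (_, rows) =>
      val sorted = rows.sortBy(_.startTime)
      if (sorted.size > 1 && sorted(sorted.size - 2).endTime == OpenEnd) {
        val newest = sorted.last
        val previous = sorted(sorted.size - 2)
        // Close the previously open record at the start of the newest one.
        val closed = previous.copy(endTime = newest.startTime)
        // The newest record carries the total of all payments for this key.
        val total = newest.copy(paymoney = sorted.map(_.paymoney).sum)
        sorted.dropRight(2) ++ Seq(closed, total)
      } else sorted
    }.sortBy(r => (r.uid, r.dn, r.startTime))
}
```

A user with a single record, or whose previous record is already closed, passes through unchanged.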

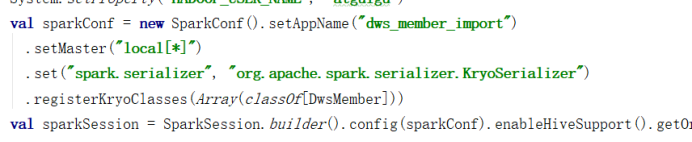

8.8 Create DwsMemberController

package com.atguigu.member.controller

import com.atguigu.member.service.DwsMemberService
import com.atguigu.util.HiveUtil
import org.apache.spark.SparkConf
import org.apache.spark.sql.SparkSession

object DwsMemberController {
  def main(args: Array[String]): Unit = {
    System.setProperty("HADOOP_USER_NAME", "atguigu")
    val sparkConf = new SparkConf().setAppName("dws_member_import")
      .setMaster("local[*]")
    val sparkSession = SparkSession.builder().config(sparkConf).enableHiveSupport().getOrCreate()
    val ssc = sparkSession.sparkContext
    HiveUtil.openDynamicPartition(sparkSession) // enable dynamic partitioning
    HiveUtil.openCompression(sparkSession) // enable compression
    HiveUtil.useSnappyCompression(sparkSession) // use Snappy compression
    DwsMemberService.importMember(sparkSession, "20190722") // aggregate the member table data per user
    // DwsMemberService.importMemberUseApi(sparkSession, "20190722")
  }
}

8.9 Create DwsMemberDao

package com.atguigu.member.dao

import org.apache.spark.sql.SparkSession

object DwsMemberDao {

  /**
    * Queries the member wide-table data.
    *
    * @param sparkSession
    * @return
    */
  def queryIdlMemberData(sparkSession: SparkSession) = {
    sparkSession.sql("select uid,ad_id,memberlevel,register,appregurl,regsource,regsourcename,adname," +
      "siteid,sitename,vip_level,cast(paymoney as decimal(10,4)) as paymoney,dt,dn from dws.dws_member ")
  }

  /**
    * Counts users per registration source URL.
    *
    * @param sparkSession
    */
  def queryAppregurlCount(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql(s"select appregurl,count(uid),dn,dt from dws.dws_member where dt='${dt}' group by appregurl,dn,dt")
  }

  // Count users per website
  def querySiteNameCount(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql(s"select sitename,count(uid),dn,dt from dws.dws_member where dt='${dt}' group by sitename,dn,dt")
  }

  // Count users per registration source
  def queryRegsourceNameCount(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql(s"select regsourcename,count(uid),dn,dt from dws.dws_member where dt='${dt}' group by regsourcename,dn,dt ")
  }

  // Count users who registered through each ad
  def queryAdNameCount(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql(s"select adname,count(uid),dn,dt from dws.dws_member where dt='${dt}' group by adname,dn,dt")
  }

  // Count users per member level
  def queryMemberLevelCount(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql(s"select memberlevel,count(uid),dn,dt from dws.dws_member where dt='${dt}' group by memberlevel,dn,dt")
  }

  // Count users per VIP level
  def queryVipLevelCount(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql(s"select vip_level,count(uid),dn,dt from dws.dws_member where dt='${dt}' group by vip_level,dn,dt")
  }

  // Top three users by payment amount within each memberlevel
  def getTop3MemberLevelPayMoneyUser(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql("select * from (select uid,ad_id,memberlevel,register,appregurl,regsource" +
      ",regsourcename,adname,siteid,sitename,vip_level,cast(paymoney as decimal(10,4)),row_number() over" +
      s" (partition by dn,memberlevel order by cast(paymoney as decimal(10,4)) desc) as rownum,dn from dws.dws_member where dt='${dt}') " +
      " where rownum<4 order by memberlevel,rownum")
  }
}
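The `row_number() over (partition by dn,memberlevel order by ... desc)` query in `getTop3MemberLevelPayMoneyUser` ranks users inside each (dn, memberlevel) group by payment amount and keeps ranks 1 to 3. As a rough illustration of that ranking, here is a sketch on plain Scala collections; `Payer` and `RankedPayer` are hypothetical types introduced only for this example, and no Spark is involved.

```scala
// Hypothetical record types for illustration only.
final case class Payer(uid: Int, dn: String, memberlevel: String, paymoney: BigDecimal)
final case class RankedPayer(payer: Payer, rownum: Int)

object Top3Sketch {
  def top3(payers: Seq[Payer]): Seq[RankedPayer] =
    payers.groupBy(p => (p.dn, p.memberlevel)).toSeq.flatMap { case (_, group) =>
      group.sortBy(_.paymoney)(Ordering[BigDecimal].reverse) // order by paymoney desc
        .take(3)                                             // rownum < 4
        .zipWithIndex
        .map { case (p, i) => RankedPayer(p, i + 1) }        // row numbers start at 1
    }.sortBy(r => (r.payer.memberlevel, r.rownum))           // order by memberlevel, rownum
}
```

Like `row_number()`, this assigns distinct ranks to ties, so exactly three rows per group survive whenever the group has at least three members.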

8.10 Compute the report-layer metrics

package com.atguigu.member.service

import com.atguigu.member.bean.QueryResult
import com.atguigu.member.dao.DwsMemberDao
import org.apache.spark.sql.expressions.Window
import org.apache.spark.sql.{SaveMode, SparkSession}

object AdsMemberService {

  /**
    * Computes each metric using the Dataset API.
    *
    * @param sparkSession
    */
  def queryDetailApi(sparkSession: SparkSession, dt: String) = {
    import sparkSession.implicits._ // implicit conversions
    val result = DwsMemberDao.queryIdlMemberData(sparkSession).as[QueryResult].where(s"dt='${dt}'")
    result.cache()

    // Count users per registration source URL
    val a = result.mapPartitions(partition => {
      partition.map(item => (item.appregurl + "_" + item.dn + "_" + item.dt, 1))
    }).groupByKey(_._1)
      .mapValues(item => item._2).reduceGroups(_ + _)
      .map(item => {
        val keys = item._1.split("_")
        val appregurl = keys(0)
        val dn = keys(1)
        val dt = keys(2)
        (appregurl, item._2, dt, dn)
      }).toDF().coalesce(1).write.mode(SaveMode.Overwrite).insertInto("ads.ads_register_appregurlnum")

    // Count users per website
    result.mapPartitions(partiton => {
      partiton.map(item => (item.sitename + "_" + item.dn + "_" + item.dt, 1))
    }).groupByKey(_._1).mapValues((item => item._2)).reduceGroups(_ + _)
      .map(item => {
        val keys = item._1.split("_")
        val sitename = keys(0)
        val dn = keys(1)
        val dt = keys(2)
        (sitename, item._2, dt, dn)
      }).toDF().coalesce(1).write.mode(SaveMode.Overwrite).insertInto("ads.ads_register_sitenamenum")

    // Count users per registration source: pc, mobile, wechat, app
    result.mapPartitions(partition => {
      partition.map(item => (item.regsourcename + "_" + item.dn + "_" + item.dt, 1))
    }).groupByKey(_._1).mapValues(item => item._2).reduceGroups(_ + _)
      .map(item => {
        val keys = item._1.split("_")
        val regsourcename = keys(0)
        val dn = keys(1)
        val dt = keys(2)
        (regsourcename, item._2, dt, dn)
      }).toDF().coalesce(1).write.mode(SaveMode.Overwrite).insertInto("ads.ads_register_regsourcenamenum")

    // Count users who came in through each ad
    result.mapPartitions(partition => {
      partition.map(item => (item.adname + "_" + item.dn + "_" + item.dt, 1))
    }).groupByKey(_._1).mapValues(_._2).reduceGroups(_ + _)
      .map(item => {
        val keys = item._1.split("_")
        val adname = keys(0)
        val dn = keys(1)
        val dt = keys(2)
        (adname, item._2, dt, dn)
      }).toDF().coalesce(1).write.mode(SaveMode.Overwrite).insertInto("ads.ads_register_adnamenum")

    // Count users per member level
    result.mapPartitions(partition => {
      partition.map(item => (item.memberlevel + "_" + item.dn + "_" + item.dt, 1))
    }).groupByKey(_._1).mapValues(_._2).reduceGroups(_ + _)
      .map(item => {
        val keys = item._1.split("_")
        val memberlevel = keys(0)
        val dn = keys(1)
        val dt = keys(2)
        (memberlevel, item._2, dt, dn)
      }).toDF().coalesce(1).write.mode(SaveMode.Overwrite).insertInto("ads.ads_register_memberlevelnum")

    // Count users per VIP level
    result.mapPartitions(partition => {
      partition.map(item => (item.vip_level + "_" + item.dn + "_" + item.dt, 1))
    }).groupByKey(_._1).mapValues(_._2).reduceGroups(_ + _)
      .map(item => {
        val keys = item._1.split("_")
        val vip_level = keys(0)
        val dn = keys(1)
        val dt = keys(2)
        (vip_level, item._2, dt, dn)
      }).toDF().coalesce(1).write.mode(SaveMode.Overwrite).insertInto("ads.ads_register_viplevelnum")

    // Top three users by payment amount within each memberlevel
    import org.apache.spark.sql.functions._
    result.withColumn("rownum", row_number().over(Window.partitionBy("dn", "memberlevel").orderBy(desc("paymoney"))))
      .where("rownum<4").orderBy("memberlevel", "rownum")
      .select("uid", "memberlevel", "register", "appregurl", "regsourcename", "adname",
        "sitename", "vip_level", "paymoney", "rownum", "dt", "dn")
      .coalesce(1).write.mode(SaveMode.Overwrite).insertInto("ads.ads_register_top3memberpay")
  }

  /**
    * Computes each metric using SQL.
    *
    * @param sparkSession
    */
  def queryDetailSql(sparkSession: SparkSession, dt: String) = {
    val appregurlCount = DwsMemberDao.queryAppregurlCount(sparkSession, dt)
    val siteNameCount = DwsMemberDao.querySiteNameCount(sparkSession, dt)
    val regsourceNameCount = DwsMemberDao.queryRegsourceNameCount(sparkSession, dt)
    val adNameCount = DwsMemberDao.queryAdNameCount(sparkSession, dt)
    val memberLevelCount = DwsMemberDao.queryMemberLevelCount(sparkSession, dt)
    val vipLevelCount = DwsMemberDao.queryVipLevelCount(sparkSession, dt)
    val top3MemberLevelPayMoneyUser = DwsMemberDao.getTop3MemberLevelPayMoneyUser(sparkSession, dt).show()
  }
}
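One detail of `queryDetailApi` deserves care: each grouping key is built by joining three fields with `_` and is later split apart again. If a field value itself contains an underscore, which seems plausible for a registration URL, the split yields more than three parts and `dn` and `dt` are read from the wrong positions. The sketch below shows one possible alternative, grouping by a tuple so that nothing has to be split. It uses plain Scala collections, and `Reg` and `CountSketch` are hypothetical names; whether the real data contains underscores is an assumption, not something the source states.

```scala
// Hypothetical record with only the three fields that form the key.
final case class Reg(appregurl: String, dn: String, dt: String)

object CountSketch {
  // Counts registrations per (appregurl, dn, dt) without building a string key.
  def countByUrl(regs: Seq[Reg]): Map[(String, String, String), Int] =
    regs.groupBy(r => (r.appregurl, r.dn, r.dt)).map { case (k, v) => k -> v.size }

  // The string-key approach, shown only to demonstrate the failure mode.
  def splitKey(key: String): Array[String] = key.split("_")
}
```

For example, `CountSketch.splitKey("http://a.com/reg_page_webA_20190722")` returns four parts rather than three, so index 1 would hold `page` instead of the `dn` value.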

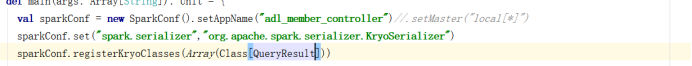

8.11 Create AdsMemberController

package com.atguigu.member.controller

import com.atguigu.member.service.AdsMemberService
import com.atguigu.util.HiveUtil
import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.sql.SparkSession

object AdsMemberController {
  def main(args: Array[String]): Unit = {
    System.setProperty("HADOOP_USER_NAME", "atguigu")
    val sparkConf = new SparkConf().setAppName("ads_member_controller").setMaster("local[*]")
    val sparkSession = SparkSession.builder().config(sparkConf).enableHiveSupport().getOrCreate()
    val ssc = sparkSession.sparkContext
    HiveUtil.openDynamicPartition(sparkSession) // enable dynamic partitioning
    AdsMemberService.queryDetailApi(sparkSession, "20190722")
    // AdsMemberService.queryDetailSql(sparkSession, "20190722")
  }
}

Chapter 9 User Quiz Module: Code Implementation

9.1 Overall class structure

9.2 Prepare the case classes

package com.atguigu.qz.bean

case class DwdQzPoint(pointid: Int, courseid: Int, pointname: String, pointyear: String, chapter: String,
                      creator: String, createtime: String, status: String, modifystatus: String, excisenum: Int,
                      pointlistid: Int, chapterid: Int, sequence: String, pointdescribe: String, pointlevel: String,
                      typelist: String, score: BigDecimal, thought: String, remid: String, pointnamelist: String,
                      typelistids: String, pointlist: String, dt: String, dn: String)

case class DwdQzPaperView(paperviewid: Int, paperid: Int, paperviewname: String, paperparam: String, openstatus: String,
                          explainurl: String, iscontest: String, contesttime: String, conteststarttime: String,
                          contestendtime: String, contesttimelimit: String, dayiid: Int, status: String, creator: String,
                          createtime: String, paperviewcatid: Int, modifystatus: String, description: String,
                          papertype: String, downurl: String, paperuse: String, paperdifficult: String,
                          testreport: String, paperuseshow: String, dt: String, dn: String)

case class DwdQzQuestion(questionid: Int, parentid: Int, questypeid: Int, quesviewtype: Int, content: String,
                         answer: String, analysis: String, limitminute: String, scoe: BigDecimal, splitcore: BigDecimal,
                         status: String, optnum: Int, lecture: String, creator: String, createtime: String,
                         modifystatus: String, attanswer: String, questag: String, vanalysisaddr: String,
                         difficulty: String, quesskill: String, vdeoaddr: String, dt: String, dn: String)

9.3 Parse the raw log data

package com.atguigu.qz.serviceimport com.alibaba.fastjson.JSONObjectimport com.atguigu.qz.bean.{DwdQzPaperView, DwdQzPoint, DwdQzQuestion}import com.atguigu.util.ParseJsonDataimport org.apache.spark.SparkContextimport org.apache.spark.sql.{SaveMode, SparkSession}/** * etl用户做题信息 */object EtlDataService { /** * 解析章节数据 * * @param ssc * @param sparkSession * @return */ def etlQzChapter(ssc: SparkContext, sparkSession: SparkSession) = { import sparkSession.implicits._ //隐式转换 ssc.textFile("/user/atguigu/ods/QzChapter.log").filter(item => { val obj = ParseJsonData.getJsonData(item) obj.isInstanceOf[JSONObject] }).mapPartitions(partitions => { partitions.map(item => { val jsonObject = ParseJsonData.getJsonData(item) val chapterid = jsonObject.getIntValue("chapterid") val chapterlistid = jsonObject.getIntValue("chapterlistid") val chaptername = jsonObject.getString("chaptername") val sequence = jsonObject.getString("sequence") val showstatus = jsonObject.getString("showstatus") val status = jsonObject.getString("status") val creator = jsonObject.getString("creator") val createtime = jsonObject.getString("createtime") val courseid = jsonObject.getIntValue("courseid") val chapternum = jsonObject.getIntValue("chapternum") val outchapterid = jsonObject.getIntValue("outchapterid") val dt = jsonObject.getString("dt") val dn = jsonObject.getString("dn") (chapterid, chapterlistid, chaptername, sequence, showstatus, status, creator, createtime, courseid, chapternum, outchapterid, dt, dn) }) }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_chapter") } /** * 解析章节列表数据 * * @param ssc * @param sparkSession */ def etlQzChapterList(ssc: SparkContext, sparkSession: SparkSession) = { import sparkSession.implicits._ ssc.textFile("/user/atguigu/ods/QzChapterList.log").filter(item => { val obj = ParseJsonData.getJsonData(item) obj.isInstanceOf[JSONObject] }).mapPartitions(partitions => { partitions.map(item => { val jsonObject = ParseJsonData.getJsonData(item) val 
chapterlistid = jsonObject.getIntValue("chapterlistid") val chapterlistname = jsonObject.getString("chapterlistname") val courseid = jsonObject.getIntValue("courseid") val chapterallnum = jsonObject.getIntValue("chapterallnum") val sequence = jsonObject.getString("sequence") val status = jsonObject.getString("status") val creator = jsonObject.getString("creator") val createtime = jsonObject.getString("createtime") val dt = jsonObject.getString("dt") val dn = jsonObject.getString("dn") (chapterlistid, chapterlistname, courseid, chapterallnum, sequence, status, creator, createtime, dt, dn) }) }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_chapter_list") } /** * 解析做题数据 * * @param ssc * @param sparkSession */ def etlQzPoint(ssc: SparkContext, sparkSession: SparkSession) = { import sparkSession.implicits._ ssc.textFile("/user/atguigu/ods/QzPoint.log").filter(item => { val obj = ParseJsonData.getJsonData(item) obj.isInstanceOf[JSONObject] }).mapPartitions(partitions => { partitions.map(item => { val jsonObject = ParseJsonData.getJsonData(item) val pointid = jsonObject.getIntValue("pointid") val courseid = jsonObject.getIntValue("courseid") val pointname = jsonObject.getString("pointname") val pointyear = jsonObject.getString("pointyear") val chapter = jsonObject.getString("chapter") val creator = jsonObject.getString("creator") val createtime = jsonObject.getString("createtime") val status = jsonObject.getString("status") val modifystatus = jsonObject.getString("modifystatus") val excisenum = jsonObject.getIntValue("excisenum") val pointlistid = jsonObject.getIntValue("pointlistid") val chapterid = jsonObject.getIntValue("chapterid") val sequence = jsonObject.getString("sequence") val pointdescribe = jsonObject.getString("pointdescribe") val pointlevel = jsonObject.getString("pointlevel") val typeslist = jsonObject.getString("typelist") val score = BigDecimal(jsonObject.getDouble("score")).setScale(1, BigDecimal.RoundingMode.HALF_UP) //保留1位小数 
并四舍五入 val thought = jsonObject.getString("thought") val remid = jsonObject.getString("remid") val pointnamelist = jsonObject.getString("pointnamelist") val typelistids = jsonObject.getString("typelistids") val pointlist = jsonObject.getString("pointlist") val dt = jsonObject.getString("dt") val dn = jsonObject.getString("dn") DwdQzPoint(pointid, courseid, pointname, pointyear, chapter, creator, createtime, status, modifystatus, excisenum, pointlistid, chapterid, sequence, pointdescribe, pointlevel, typeslist, score, thought, remid, pointnamelist, typelistids, pointlist, dt, dn) }) }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_point") } /** * 解析知识点下的题数据 * * @param ssc * @param sparkSession * @return */ def etlQzPointQuestion(ssc: SparkContext, sparkSession: SparkSession) = { import sparkSession.implicits._ ssc.textFile("/user/atguigu/ods/QzPointQuestion.log").filter(item => { val obj = ParseJsonData.getJsonData(item) obj.isInstanceOf[JSONObject] }).mapPartitions(partitions => { partitions.map(item => { val jsonObject = ParseJsonData.getJsonData(item) val pointid = jsonObject.getIntValue("pointid") val questionid = jsonObject.getIntValue("questionid") val questtype = jsonObject.getIntValue("questtype") val creator = jsonObject.getString("creator") val createtime = jsonObject.getString("createtime") val dt = jsonObject.getString("dt") val dn = jsonObject.getString("dn") (pointid, questionid, questtype, creator, createtime, dt, dn) }) }).toDF().write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_point_question") } /** * 解析网站课程 * * @param ssc * @param sparkSession */ def etlQzSiteCourse(ssc: SparkContext, sparkSession: SparkSession) = { import sparkSession.implicits._ ssc.textFile("/user/atguigu/ods/QzSiteCourse.log").filter(item => { val obj = ParseJsonData.getJsonData(item) obj.isInstanceOf[JSONObject] }).mapPartitions(partitions => { partitions.map(item => { val jsonObject = ParseJsonData.getJsonData(item) val sitecourseid = 
jsonObject.getIntValue("sitecourseid") val siteid = jsonObject.getIntValue("siteid") val courseid = jsonObject.getIntValue("courseid") val sitecoursename = jsonObject.getString("sitecoursename") val coursechapter = jsonObject.getString("coursechapter") val sequence = jsonObject.getString("sequence") val status = jsonObject.getString("status") val creator = jsonObject.getString("creator") val createtime = jsonObject.getString("createtime") val helppaperstatus = jsonObject.getString("helppaperstatus") val servertype = jsonObject.getString("servertype") val boardid = jsonObject.getIntValue("boardid") val showstatus = jsonObject.getString("showstatus") val dt = jsonObject.getString("dt") val dn = jsonObject.getString("dn") (sitecourseid, siteid, courseid, sitecoursename, coursechapter, sequence, status, creator , createtime, helppaperstatus, servertype, boardid, showstatus, dt, dn) }) }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_site_course") } /** * 解析课程数据 * * @param ssc * @param sparkSession */ def etlQzCourse(ssc: SparkContext, sparkSession: SparkSession) = { import sparkSession.implicits._ ssc.textFile("/user/atguigu/ods/QzCourse.log").filter(item => { val obj = ParseJsonData.getJsonData(item) obj.isInstanceOf[JSONObject] }).mapPartitions(partitions => { partitions.map(item => { val jsonObject = ParseJsonData.getJsonData(item) val courseid = jsonObject.getIntValue("courseid") val majorid = jsonObject.getIntValue("majorid") val coursename = jsonObject.getString("coursename") val coursechapter = jsonObject.getString("coursechapter") val sequence = jsonObject.getString("sequnece") val isadvc = jsonObject.getString("isadvc") val creator = jsonObject.getString("creator") val createtime = jsonObject.getString("createtime") val status = jsonObject.getString("status") val chapterlistid = jsonObject.getIntValue("chapterlistid") val pointlistid = jsonObject.getIntValue("pointlistid") val dt = jsonObject.getString("dt") val dn = 
jsonObject.getString("dn") (courseid, majorid, coursename, coursechapter, sequence, isadvc, creator, createtime, status , chapterlistid, pointlistid, dt, dn) }) }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_course") } /** * 解析课程辅导数据 * * @param ssc * @param sparkSession */ def etlQzCourseEdusubject(ssc: SparkContext, sparkSession: SparkSession) = { import sparkSession.implicits._ ssc.textFile("/user/atguigu/ods/QzCourseEduSubject.log").filter(item => { val obj = ParseJsonData.getJsonData(item) obj.isInstanceOf[JSONObject] }).mapPartitions(partitions => { partitions.map(item => { val jsonObject = ParseJsonData.getJsonData(item) val courseeduid = jsonObject.getIntValue("courseeduid") val edusubjectid = jsonObject.getIntValue("edusubjectid") val courseid = jsonObject.getIntValue("courseid") val creator = jsonObject.getString("creator") val createtime = jsonObject.getString("createtime") val majorid = jsonObject.getIntValue("majorid") val dt = jsonObject.getString("dt") val dn = jsonObject.getString("dn") (courseeduid, edusubjectid, courseid, creator, createtime, majorid, dt, dn) }) }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_course_edusubject") } /** * 解析课程网站 * * @param ssc * @param sparkSession */ def etlQzWebsite(ssc: SparkContext, sparkSession: SparkSession) = { import sparkSession.implicits._ ssc.textFile("/user/atguigu/ods/QzWebsite.log").filter(item => { val obj = ParseJsonData.getJsonData(item) obj.isInstanceOf[JSONObject] }).mapPartitions(partitions => { partitions.map(item => { val jsonObject = ParseJsonData.getJsonData(item) val siteid = jsonObject.getIntValue("siteid") val sitename = jsonObject.getString("sitename") val domain = jsonObject.getString("domain") val sequence = jsonObject.getString("sequence") val multicastserver = jsonObject.getString("multicastserver") val templateserver = jsonObject.getString("templateserver") val status = jsonObject.getString("status") val creator = 
jsonObject.getString("creator") val createtime = jsonObject.getString("createtime") val multicastgateway = jsonObject.getString("multicastgateway") val multicastport = jsonObject.getString("multicastport") val dt = jsonObject.getString("dt") val dn = jsonObject.getString("dn") (siteid, sitename, domain, sequence, multicastserver, templateserver, status, creator, createtime, multicastgateway, multicastport, dt, dn) }) }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_website") } /** * 解析主修数据 * * @param ssc * @param sparkSession */ def etlQzMajor(ssc: SparkContext, sparkSession: SparkSession) = { import sparkSession.implicits._ ssc.textFile("/user/atguigu/ods/QzMajor.log").filter(item => { val obj = ParseJsonData.getJsonData(item) obj.isInstanceOf[JSONObject] }).mapPartitions(partitions => { partitions.map(item => { val jsonObject = ParseJsonData.getJsonData(item) val majorid = jsonObject.getIntValue("majorid") val businessid = jsonObject.getIntValue("businessid") val siteid = jsonObject.getIntValue("siteid") val majorname = jsonObject.getString("majorname") val shortname = jsonObject.getString("shortname") val status = jsonObject.getString("status") val sequence = jsonObject.getString("sequence") val creator = jsonObject.getString("creator") val createtime = jsonObject.getString("createtime") val columm_sitetype = jsonObject.getString("columm_sitetype") val dt = jsonObject.getString("dt") val dn = jsonObject.getString("dn") (majorid, businessid, siteid, majorname, shortname, status, sequence, creator, createtime, columm_sitetype, dt, dn) }) }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_major") } /** * 解析做题业务 * * @param ssc * @param sparkSession */ def etlQzBusiness(ssc: SparkContext, sparkSession: SparkSession) = { import sparkSession.implicits._ ssc.textFile("/user/atguigu/ods/QzBusiness.log").filter(item => { val obj = ParseJsonData.getJsonData(item) obj.isInstanceOf[JSONObject] }).mapPartitions(partitions => { 
      partitions.map(item => {
        val jsonObject = ParseJsonData.getJsonData(item)
        val businessid = jsonObject.getIntValue("businessid")
        val businessname = jsonObject.getString("businessname")
        val sequence = jsonObject.getString("sequence")
        val status = jsonObject.getString("status")
        val creator = jsonObject.getString("creator")
        val createtime = jsonObject.getString("createtime")
        val siteid = jsonObject.getIntValue("siteid")
        val dt = jsonObject.getString("dt")
        val dn = jsonObject.getString("dn")
        (businessid, businessname, sequence, status, creator, createtime, siteid, dt, dn)
      })
    }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_business")
  }

  def etlQzPaperView(ssc: SparkContext, sparkSession: SparkSession) = {
    import sparkSession.implicits._
    ssc.textFile("/user/atguigu/ods/QzPaperView.log").filter(item => {
      val obj = ParseJsonData.getJsonData(item)
      obj.isInstanceOf[JSONObject]
    }).mapPartitions(partitions => {
      partitions.map(item => {
        val jsonObject = ParseJsonData.getJsonData(item)
        val paperviewid = jsonObject.getIntValue("paperviewid")
        val paperid = jsonObject.getIntValue("paperid")
        val paperviewname = jsonObject.getString("paperviewname")
        val paperparam = jsonObject.getString("paperparam")
        val openstatus = jsonObject.getString("openstatus")
        val explainurl = jsonObject.getString("explainurl")
        val iscontest = jsonObject.getString("iscontest")
        val contesttime = jsonObject.getString("contesttime")
        val conteststarttime = jsonObject.getString("conteststarttime")
        val contestendtime = jsonObject.getString("contestendtime")
        val contesttimelimit = jsonObject.getString("contesttimelimit")
        val dayiid = jsonObject.getIntValue("dayiid")
        val status = jsonObject.getString("status")
        val creator = jsonObject.getString("creator")
        val createtime = jsonObject.getString("createtime")
        val paperviewcatid = jsonObject.getIntValue("paperviewcatid")
        val modifystatus = jsonObject.getString("modifystatus")
        val description = jsonObject.getString("description")
        val papertype = jsonObject.getString("papertype")
        val downurl = jsonObject.getString("downurl")
        val paperuse = jsonObject.getString("paperuse")
        val paperdifficult = jsonObject.getString("paperdifficult")
        val testreport = jsonObject.getString("testreport")
        val paperuseshow = jsonObject.getString("paperuseshow")
        val dt = jsonObject.getString("dt")
        val dn = jsonObject.getString("dn")
        DwdQzPaperView(paperviewid, paperid, paperviewname, paperparam, openstatus, explainurl, iscontest,
          contesttime, conteststarttime, contestendtime, contesttimelimit, dayiid, status, creator, createtime,
          paperviewcatid, modifystatus, description, papertype, downurl, paperuse, paperdifficult, testreport,
          paperuseshow, dt, dn)
      })
    }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_paper_view")
  }

  def etlQzCenterPaper(ssc: SparkContext, sparkSession: SparkSession) = {
    import sparkSession.implicits._
    ssc.textFile("/user/atguigu/ods/QzCenterPaper.log").filter(item => {
      val obj = ParseJsonData.getJsonData(item)
      obj.isInstanceOf[JSONObject]
    }).mapPartitions(partitions => {
      partitions.map(item => {
        val jsonObject = ParseJsonData.getJsonData(item)
        val paperviewid = jsonObject.getIntValue("paperviewid")
        val centerid = jsonObject.getIntValue("centerid")
        val openstatus = jsonObject.getString("openstatus")
        val sequence = jsonObject.getString("sequence")
        val creator = jsonObject.getString("creator")
        val createtime = jsonObject.getString("createtime")
        val dt = jsonObject.getString("dt")
        val dn = jsonObject.getString("dn")
        (paperviewid, centerid, openstatus, sequence, creator, createtime, dt, dn)
      })
    }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_center_paper")
  }

  def etlQzPaper(ssc: SparkContext, sparkSession: SparkSession) = {
    import sparkSession.implicits._
    ssc.textFile("/user/atguigu/ods/QzPaper.log").filter(item => {
      val obj = ParseJsonData.getJsonData(item)
      obj.isInstanceOf[JSONObject]
    }).mapPartitions(partitions => {
      partitions.map(item => {
        val jsonObject = ParseJsonData.getJsonData(item)
        val paperid = jsonObject.getIntValue("paperid")
        val papercatid = jsonObject.getIntValue("papercatid")
        val courseid = jsonObject.getIntValue("courseid")
        val paperyear = jsonObject.getString("paperyear")
        val chapter = jsonObject.getString("chapter")
        val suitnum = jsonObject.getString("suitnum")
        val papername = jsonObject.getString("papername")
        val status = jsonObject.getString("status")
        val creator = jsonObject.getString("creator")
        val createtime = jsonObject.getString("createtime")
        // Keep one decimal place, rounding half up
        val totalscore = BigDecimal.apply(jsonObject.getString("totalscore")).setScale(1, BigDecimal.RoundingMode.HALF_UP)
        val chapterid = jsonObject.getIntValue("chapterid")
        val chapterlistid = jsonObject.getIntValue("chapterlistid")
        val dt = jsonObject.getString("dt")
        val dn = jsonObject.getString("dn")
        (paperid, papercatid, courseid, paperyear, chapter, suitnum, papername, status, creator, createtime,
          totalscore, chapterid, chapterlistid, dt, dn)
      })
    }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_paper")
  }

  def etlQzCenter(ssc: SparkContext, sparkSession: SparkSession) = {
    import sparkSession.implicits._
    ssc.textFile("/user/atguigu/ods/QzCenter.log").filter(item => {
      val obj = ParseJsonData.getJsonData(item)
      obj.isInstanceOf[JSONObject]
    }).mapPartitions(partitions => {
      partitions.map(item => {
        val jsonObject = ParseJsonData.getJsonData(item)
        val centerid = jsonObject.getIntValue("centerid")
        val centername = jsonObject.getString("centername")
        val centeryear = jsonObject.getString("centeryear")
        val centertype = jsonObject.getString("centertype")
        val openstatus = jsonObject.getString("openstatus")
        val centerparam = jsonObject.getString("centerparam")
        val description = jsonObject.getString("description")
        val creator = jsonObject.getString("creator")
        val createtime = jsonObject.getString("createtime")
        val sequence = jsonObject.getString("sequence")
        val provideuser = jsonObject.getString("provideuser")
        val centerviewtype = jsonObject.getString("centerviewtype")
        val stage = jsonObject.getString("stage")
        val dt = jsonObject.getString("dt")
        val dn = jsonObject.getString("dn")
        (centerid, centername, centeryear, centertype, openstatus, centerparam, description, creator, createtime,
          sequence, provideuser, centerviewtype, stage, dt, dn)
      })
    }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_center")
  }

  def etlQzQuestion(ssc: SparkContext, sparkSession: SparkSession) = {
    import sparkSession.implicits._
    ssc.textFile("/user/atguigu/ods/QzQuestion.log").filter(item => {
      val obj = ParseJsonData.getJsonData(item)
      obj.isInstanceOf[JSONObject]
    }).mapPartitions(partitions => {
      partitions.map(item => {
        val jsonObject = ParseJsonData.getJsonData(item)
        val questionid = jsonObject.getIntValue("questionid")
        val parentid = jsonObject.getIntValue("parentid")
        val questypeid = jsonObject.getIntValue("questypeid")
        val quesviewtype = jsonObject.getIntValue("quesviewtype")
        val content = jsonObject.getString("content")
        val answer = jsonObject.getString("answer")
        val analysis = jsonObject.getString("analysis")
        val limitminute = jsonObject.getString("limitminute")
        // Keep one decimal place, rounding half up
        val score = BigDecimal.apply(jsonObject.getDoubleValue("score")).setScale(1, BigDecimal.RoundingMode.HALF_UP)
        val splitscore = BigDecimal.apply(jsonObject.getDoubleValue("splitscore")).setScale(1, BigDecimal.RoundingMode.HALF_UP)
        val status = jsonObject.getString("status")
        val optnum = jsonObject.getIntValue("optnum")
        val lecture = jsonObject.getString("lecture")
        val creator = jsonObject.getString("creator")
        val createtime = jsonObject.getString("createtime")
        val modifystatus = jsonObject.getString("modifystatus")
        val attanswer = jsonObject.getString("attanswer")
        val questag = jsonObject.getString("questag")
        val vanalysisaddr = jsonObject.getString("vanalysisaddr")
        val difficulty = jsonObject.getString("difficulty")
        val quesskill = jsonObject.getString("quesskill")
        val vdeoaddr = jsonObject.getString("vdeoaddr")
        val dt = jsonObject.getString("dt")
        val dn = jsonObject.getString("dn")
        DwdQzQuestion(questionid, parentid, questypeid, quesviewtype, content, answer, analysis, limitminute,
          score, splitscore, status, optnum, lecture, creator, createtime, modifystatus, attanswer, questag,
          vanalysisaddr, difficulty, quesskill, vdeoaddr, dt, dn)
      })
    }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_question")
  }

  def etlQzQuestionType(ssc: SparkContext, sparkSession: SparkSession) = {
    import sparkSession.implicits._
    ssc.textFile("/user/atguigu/ods/QzQuestionType.log").filter(item => {
      val obj = ParseJsonData.getJsonData(item)
      obj.isInstanceOf[JSONObject]
    }).mapPartitions(partitions => {
      partitions.map(item => {
        val jsonObject = ParseJsonData.getJsonData(item)
        val quesviewtype = jsonObject.getIntValue("quesviewtype")
        val viewtypename = jsonObject.getString("viewtypename")
        val questiontypeid = jsonObject.getIntValue("questypeid")
        val description = jsonObject.getString("description")
        val status = jsonObject.getString("status")
        val creator = jsonObject.getString("creator")
        val createtime = jsonObject.getString("createtime")
        val papertypename = jsonObject.getString("papertypename")
        val sequence = jsonObject.getString("sequence")
        val remark = jsonObject.getString("remark")
        val splitscoretype = jsonObject.getString("splitscoretype")
        val dt = jsonObject.getString("dt")
        val dn = jsonObject.getString("dn")
        (quesviewtype, viewtypename, questiontypeid, description, status, creator, createtime, papertypename,
          sequence, remark, splitscoretype, dt, dn)
      })
    }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_question_type")
  }

  /**
   * Parses the records of how users answered questions.
   *
   * @param ssc
   * @param sparkSession
   */
  def etlQzMemberPaperQuestion(ssc: SparkContext, sparkSession: SparkSession) = {
    import sparkSession.implicits._
    ssc.textFile("/user/atguigu/ods/QzMemberPaperQuestion.log").filter(item => {
      val obj = ParseJsonData.getJsonData(item)
      obj.isInstanceOf[JSONObject]
    }).mapPartitions(partitions => {
      partitions.map(item => {
        val jsonObject = ParseJsonData.getJsonData(item)
        val userid = jsonObject.getIntValue("userid")
        val paperviewid = jsonObject.getIntValue("paperviewid")
        val chapterid = jsonObject.getIntValue("chapterid")
        val sitecourseid = jsonObject.getIntValue("sitecourseid")
        val questionid = jsonObject.getIntValue("questionid")
        val majorid = jsonObject.getIntValue("majorid")
        val useranswer = jsonObject.getString("useranswer")
        val istrue = jsonObject.getString("istrue")
        val lasttime = jsonObject.getString("lasttime")
        val opertype = jsonObject.getString("opertype")
        val paperid = jsonObject.getIntValue("paperid")
        val spendtime = jsonObject.getIntValue("spendtime")
        // Keep one decimal place, rounding half up
        val score = BigDecimal.apply(jsonObject.getString("score")).setScale(1, BigDecimal.RoundingMode.HALF_UP)
        val question_answer = jsonObject.getIntValue("question_answer")
        val dt = jsonObject.getString("dt")
        val dn = jsonObject.getString("dn")
        (userid, paperviewid, chapterid, sitecourseid, questionid, majorid, useranswer, istrue, lasttime,
          opertype, paperid, spendtime, score, question_answer, dt, dn)
      })
    }).toDF().coalesce(1).write.mode(SaveMode.Append).insertInto("dwd.dwd_qz_member_paper_question")
  }
}
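
The score fields in the ETL functions above are rounded to one decimal place with `HALF_UP`. Where the value is read with `getString`, a missing field would presumably arrive as null, and building a `BigDecimal` from a null string throws. The block below is a minimal sketch in plain Scala (no Spark or fastjson dependency) of the same rounding behind a null-safe wrapper. The object `ScoreRounding` and the helper `roundScore` are hypothetical names introduced here for illustration and are not part of the tutorial code.

```scala
import scala.math.BigDecimal.RoundingMode
import scala.util.Try

object ScoreRounding {
  // Hypothetical helper (an assumption, not from the tutorial):
  // returns None for a null or non-numeric value instead of throwing,
  // otherwise the value rounded to one decimal place, half up.
  def roundScore(raw: String): Option[BigDecimal] =
    Option(raw)                                      // null becomes None
      .flatMap(s => Try(BigDecimal(s.trim)).toOption) // malformed text becomes None
      .map(_.setScale(1, RoundingMode.HALF_UP))
}
```

With this sketch, `ScoreRounding.roundScore("2.25")` yields `Some(2.3)` and `ScoreRounding.roundScore(null)` yields `None`. Whether a missing score should become a default value or cause the record to be dropped is a decision the tutorial does not state.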

9.4 Create DwdController

package com.atguigu.qz.controller

import com.atguigu.qz.service.EtlDataService
import com.atguigu.util.HiveUtil
import org.apache.spark.SparkConf
import org.apache.spark.sql.SparkSession

/**
 * Parses the quiz data and loads it into the dwd layer.
 */
object DwdController {
  def main(args: Array[String]): Unit = {
    System.setProperty("HADOOP_USER_NAME", "atguigu")
    val sparkConf = new SparkConf().setAppName("dwd_qz_controller").setMaster("local[*]")
    val sparkSession = SparkSession.builder().config(sparkConf).enableHiveSupport().getOrCreate()
    val ssc = sparkSession.sparkContext
    HiveUtil.openDynamicPartition(sparkSession) // enable dynamic partitioning
    HiveUtil.openCompression(sparkSession) // enable compression
    HiveUtil.useSnappyCompression(sparkSession) // use Snappy compression
    EtlDataService.etlQzChapter(ssc, sparkSession)
    EtlDataService.etlQzChapterList(ssc, sparkSession)
    EtlDataService.etlQzPoint(ssc, sparkSession)
    EtlDataService.etlQzPointQuestion(ssc, sparkSession)
    EtlDataService.etlQzSiteCourse(ssc, sparkSession)
    EtlDataService.etlQzCourse(ssc, sparkSession)
    EtlDataService.etlQzCourseEdusubject(ssc, sparkSession)
    EtlDataService.etlQzWebsite(ssc, sparkSession)
    EtlDataService.etlQzMajor(ssc, sparkSession)
    EtlDataService.etlQzBusiness(ssc, sparkSession)
    EtlDataService.etlQzPaperView(ssc, sparkSession)
    EtlDataService.etlQzCenterPaper(ssc, sparkSession)
    EtlDataService.etlQzPaper(ssc, sparkSession)
    EtlDataService.etlQzCenter(ssc, sparkSession)
    EtlDataService.etlQzQuestion(ssc, sparkSession)
    EtlDataService.etlQzQuestionType(ssc, sparkSession)
    EtlDataService.etlQzMemberPaperQuestion(ssc, sparkSession)
  }
}
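
The controller above calls the seventeen ETL functions one after another, so an exception in any of them ends the job without saying which table was being loaded. One possible variation, shown here only as a sketch in plain Scala, is to give each step a name and run the steps in order, so that a failure reports the step that caused it. The object `EtlStepRunner` and the method `runInOrder` are hypothetical names and are not part of the tutorial code.

```scala
import scala.collection.mutable.ArrayBuffer
import scala.util.{Failure, Success, Try}

object EtlStepRunner {
  // Hypothetical helper (an assumption, not from the tutorial):
  // runs the named steps in the given order and returns the names of the
  // steps that completed. Stops at the first failure and rethrows it with
  // the step name attached, so later steps are not executed.
  def runInOrder(steps: Seq[(String, () => Unit)]): Seq[String] = {
    val done = ArrayBuffer.empty[String]
    steps.foreach { case (name, step) =>
      Try(step()) match {
        case Success(_) => done += name
        case Failure(e) =>
          throw new RuntimeException(
            s"ETL step '$name' failed after ${done.size} completed step(s)", e)
      }
    }
    done.toList
  }
}
```

Inside `main`, a step could then be registered as `"etlQzChapter" -> (() => EtlDataService.etlQzChapter(ssc, sparkSession))`. The order of the list is the order of execution, which matches the behavior of the original sequence of calls.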

9.5 Create QzChapterDao, the DAO class for the chapter tables

package com.atguigu.qz.dao

import org.apache.spark.sql.SparkSession

object QzChapterDao {

  /**
   * Queries the base data of qz_chapter.
   *
   * @param sparkSession
   * @return
   */
  def getDwdQzChapter(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql("select chapterid,chapterlistid,chaptername,sequence,showstatus,status,creator as " +
      "chapter_creator,createtime as chapter_createtime,courseid as chapter_courseid,chapternum,outchapterid,dt,dn from dwd.dwd_qz_chapter where " +
      s"dt='$dt'")
  }

  /**
   * Queries the base data of qz_chapter_list.
   *
   * @param sparkSession
   * @param dt
   */
  def getDwdQzChapterList(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql("select chapterlistid,chapterlistname,chapterallnum,dn from dwd.dwd_qz_chapter_list " +
      s"where dt='$dt'")
  }

  /**
   * Queries the base data of qz_point.
   *
   * @param sparkSession
   * @param dt
   */
  def getDwdQzPoint(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql("select pointid,pointname,pointyear,chapter,excisenum,pointlistid,chapterid," +
      "pointdescribe,pointlevel,typelist,score as point_score,thought,remid,pointnamelist,typelistids,pointlist,dn from " +
      s"dwd.dwd_qz_point where dt='$dt'")
  }

  /**
   * Queries the base data of qz_point_question.
   *
   * @param sparkSession
   * @param dt
   */
  def getDwdQzPointQuestion(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql(s"select pointid,questionid,questype,dn from dwd.dwd_qz_point_question where dt='$dt'")
  }
}
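
Each DAO method above builds its SQL by interpolating `dt` straight into the `where` clause. As a minimal sketch of how the query text is composed, the helper below assembles the same kind of statement and rejects a `dt` that does not look like a partition value. It assumes that `dt` is an eight-digit `yyyyMMdd` string, which this section does not confirm. The object `PartitionQuery` and the method `byDt` are hypothetical names and are not part of the tutorial code.

```scala
object PartitionQuery {
  // Assumption: dt partition values are eight digits (yyyyMMdd).
  private val DtPattern = "\\d{8}".r

  // Hypothetical helper (not from the tutorial): builds
  // "select <columns> from <table> where dt='<dt>'" and fails fast with
  // an IllegalArgumentException when dt does not match the pattern.
  def byDt(table: String, columns: Seq[String], dt: String): String = {
    require(DtPattern.pattern.matcher(dt).matches(), s"unexpected dt value: $dt")
    s"select ${columns.mkString(",")} from $table where dt='$dt'"
  }
}
```

For example, `PartitionQuery.byDt("dwd.dwd_qz_point_question", Seq("pointid", "questionid", "questype", "dn"), "20190722")` produces the same text that `getDwdQzPointQuestion` passes to `sparkSession.sql` for that date.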

9.6 Create QzCourseDao, the DAO class for the course tables

package com.atguigu.qz.dao

import org.apache.spark.sql.SparkSession

object QzCourseDao {

  def getDwdQzSiteCourse(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql("select sitecourseid,siteid,courseid,sitecoursename,coursechapter,sequence,status," +
      "creator as sitecourse_creator,createtime as sitecourse_createtime,helppaperstatus,servertype,boardid,showstatus,dt,dn " +
      s"from dwd.dwd_qz_site_course where dt='${dt}'")
  }

  def getDwdQzCourse(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql("select courseid,majorid,coursename,isadvc,chapterlistid,pointlistid,dn from " +
      s"dwd.dwd_qz_course where dt='${dt}'")
  }

  def getDwdQzCourseEduSubject(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql("select courseeduid,edusubjectid,courseid,dn from dwd.dwd_qz_course_edusubject " +
      s"where dt='${dt}'")
  }
}

9.7 Create QzMajorDao, the DAO class for the major tables

package com.atguigu.qz.dao

import org.apache.spark.sql.SparkSession

object QzMajorDao {

  def getQzMajor(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql("select majorid,businessid,siteid,majorname,shortname,status,sequence,creator as major_creator," +
      s"createtime as major_createtime,dt,dn from dwd.dwd_qz_major where dt='$dt'")
  }

  def getQzWebsite(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql("select siteid,sitename,domain,multicastserver,templateserver,creator," +
      s"createtime,multicastgateway,multicastport,dn from dwd.dwd_qz_website where dt='$dt'")
  }

  def getQzBusiness(sparkSession: SparkSession, dt: String) = {
    sparkSession.sql(s"select businessid,businessname,dn from dwd.dwd_qz_business where dt='$dt'")
  }
}

9.8 Create QzPaperDao, the DAO class for the exam paper tables