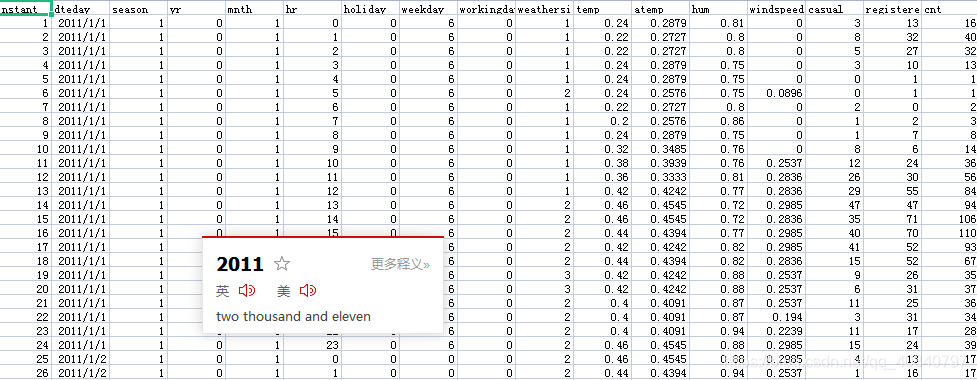

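1. Data source: This example uses a public bike-sharing dataset from abroad (Capital Bikeshare). The dataset can be downloaded from www.capitalbikeshare.com/system-data. (A copy is also available among my uploaded resources: https://download.csdn.net/download/qq_40840797/20325952)

Data description: the features include factors such as year, season, day of the week, temperature and wind speed; the target variables are the total user count, the casual user count and the registered user count.

2. Data processing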

import numpy as np

import pandas as pd  # library used to read the CSV file

from matplotlib import pyplot as plt

import torch

import torch.optim as optim

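data_path = 'Bike-Sharing-Dataset/hour.csv'  # location of the data file

rides = pd.read_csv(data_path)  # read the data file

rides.head()  # take a look at the data

2.1. Categorical fields in the raw data, such as the day of the week and the weather situation, need to be encoded so that their values are meaningful.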
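To make the idea of one-hot encoding concrete, here is a minimal sketch on a toy table. The frame `toy` and its values are made up for illustration and are not taken from the dataset; `dtype=int` is passed only so that the indicator columns print as 0/1.

```python
import pandas as pd

# Toy frame standing in for the real data; the values are made up for illustration.
toy = pd.DataFrame({'season': [1, 2, 4, 2], 'cnt': [16, 40, 32, 13]})

# One indicator column per distinct value of 'season'.
dummies = pd.get_dummies(toy['season'], prefix='season', dtype=int)

# Append the indicator columns to the original table.
encoded = pd.concat([toy, dummies], axis=1)

print(encoded)
```

Each row has exactly one indicator set to 1, so the network no longer sees season 4 as "four times" season 1. Only values that actually occur get a column, which is why there is no `season_3` in this toy example.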

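# season=1,2,3,4, weathersit=1,2,3,4, mnth=1,2,...,12, hr=0,1,...,23, weekday=0,1,...,6

# After the processing below, several new features appear; for example, season becomes season_1, season_2, season_3, season_4

dummy_fields = ['season', 'weathersit', 'mnth', 'hr', 'weekday']  # the five categorical features to encode

for each in dummy_fields:

    # pandas makes it easy to one-hot encode a categorical column into several indicator columns

    # prefix sets the prefix of the generated column names; drop_first controls whether the first generated column is dropped

    dummies = pd.get_dummies(rides[each], prefix=each, drop_first=False)

    rides = pd.concat([rides, dummies], axis=1)  # concatenate along the column axis

2.2. Remove the original columns that were encoded, together with the irrelevant columns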

# Drop the original categorical columns and some irrelevant features

fields_to_drop = ['instant', 'dteday', 'season', 'weathersit',

                  'weekday', 'atemp', 'mnth', 'workingday', 'hr']  # names of the columns to drop

data = rides.drop(fields_to_drop, axis=1)  # drop them from the table

data.head()

2.4. Normalize the four columns 'cnt', 'temp', 'hum', 'windspeed'
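Normalization here means subtracting a column's mean and dividing by its standard deviation. The following sketch shows the transform and its inverse on a toy column; the numbers are made up and only illustrate that the original values can be recovered as long as the mean and standard deviation are kept.

```python
import numpy as np
import pandas as pd

# Made-up values standing in for one numeric column.
toy = pd.DataFrame({'cnt': [10.0, 20.0, 30.0, 40.0]})

# pandas computes the sample standard deviation (ddof=1) by default.
mean, std = toy['cnt'].mean(), toy['cnt'].std()

scaled = (toy['cnt'] - mean) / std   # zero mean, unit standard deviation
restored = scaled * std + mean       # inverse transform, back to the original units

print(scaled.values)
print(restored.values)
```

The inverse transform is what is needed later to turn the network's output back into rider counts.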

quant_features = ['cnt', 'temp', 'hum', 'windspeed']  # the four columns to normalize

#quant_features = ['temp', 'hum', 'windspeed']

# Store the mean and standard deviation of each variable in scaled_features.

scaled_features = {}  # empty dict

for each in quant_features:

    mean, std = data[each].mean(), data[each].std()  # mean and standard deviation

    scaled_features[each] = [mean, std]  # store the mean and standard deviation in the dict

    data[each] = (data[each] - mean) / std  # write the normalized values back into data

2.5. Splitting into training and test data
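Because the records are ordered in time, the split is a plain slice rather than a random shuffle: the model is trained on the past and tested on the most recent rows. A minimal sketch on a toy table, assuming exactly one row per hour (the names `hours_per_day` and `test_days` are mine, for illustration only):

```python
import numpy as np
import pandas as pd

hours_per_day = 24
test_days = 21

# 30 made-up days of hourly rows, in chronological order.
toy = pd.DataFrame({'hour_index': np.arange(30 * hours_per_day)})

n_test = test_days * hours_per_day
train = toy[:-n_test]   # everything except the last 21 * 24 rows
test = toy[-n_test:]    # the last 21 * 24 rows

print(len(train), len(test))
```

If some hours are missing from the real file, the last 21*24 rows cover slightly more than 21 calendar days, which does not affect the code but is worth keeping in mind when reading the date axis of the final plot.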

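train_data = data[:-21*24]

test_data = data[-21*24:]  # the last 21 days (21*24 hourly rows) form the test set

print('Training samples:', len(train_data), 'Test samples:', len(test_data))

2.6. Building the training features and targets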
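One detail in this step is that the target is reshaped from a flat vector into a single column. The sketch below, with made-up arrays, shows why that matters: subtracting a flat target from a column of predictions silently broadcasts into a square matrix, which would make the mean squared error meaningless.

```python
import numpy as np

pred = np.zeros((4, 1))          # network output: one column, one row per sample
y_flat = np.arange(4.0)          # target as a flat vector, shape (4,)
y_col = y_flat.reshape(-1, 1)    # target as a column, shape (4, 1)

bad_shape = (pred - y_flat).shape   # broadcasting pairs every prediction with every target
good_shape = (pred - y_col).shape   # element-wise difference, one value per sample

print(bad_shape, good_shape)
```

PyTorch tensors follow the same broadcasting rules, so the same precaution applies to the tensors built from `X` and `Y`.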

target_fields = ['cnt', 'casual', 'registered']  # target columns: total users, casual users, registered users

features, targets = train_data.drop(target_fields, axis=1), train_data[target_fields]

test_features, test_targets = test_data.drop(target_fields, axis=1), test_data[target_fields]

# Convert the data from pandas DataFrames to NumPy arrays

X = features.values.astype(float)  # feature matrix as floats (the one-hot columns may be boolean)

Y = targets['cnt'].values  # target values

Y = Y.astype(float)  # convert to float

Y = np.reshape(Y, [len(Y),1])  # reshape from (16875,) to (16875, 1)

losses = []

features.head()

3. Two ways to build the neural network

3.1. Writing the neural network by hand
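The hand-written version relies on the same three autograd steps in every iteration: clear the old gradient, call `backward()` on the loss, then move the parameters against the gradient. Here is a minimal sketch of that cycle on a one-parameter toy problem. The values of `x`, `y` and `learning_rate` are assumptions for illustration, and the update is written with `torch.no_grad()`, which is an alternative to updating through `.data`.

```python
import torch

# Toy problem (values are assumptions): find w such that w * x == y.
x = torch.tensor(2.0)
y = torch.tensor(6.0)
w = torch.tensor(0.0, requires_grad=True)  # the only trainable parameter

learning_rate = 0.1
for step in range(20):
    loss = (w * x - y) ** 2      # squared error
    if w.grad is not None:
        w.grad.zero_()           # clear the gradient left over from the previous step
    loss.backward()              # autograd fills w.grad with d(loss)/dw
    with torch.no_grad():        # update the value without recording it in the graph
        w -= learning_rate * w.grad

print(w.item())  # approaches 3.0
```

Without the zeroing step the gradients of successive iterations would accumulate in `w.grad`, which is why the network in this section defines its own `zero_grad()` helper.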

input_size = features.shape[1]  # number of input units

hidden_size = 10  # number of hidden units

output_size = 1  # number of output units

batch_size = 128  # number of records per batch

weights1 = torch.randn([input_size, hidden_size], dtype = torch.double, requires_grad = True)  # input-to-hidden weights

biases1 = torch.randn([hidden_size], dtype = torch.double, requires_grad = True)  # hidden-layer biases

weights2 = torch.randn([hidden_size, output_size], dtype = torch.double, requires_grad = True)  # hidden-to-output weights

def neu(x):

    # Compute the hidden-layer output.

    # x is a batch_size * input_size matrix, weights1 is an input_size * hidden_size matrix,

    # biases1 is a vector of length hidden_size; the result is a batch_size * hidden_size matrix

    hidden = x.mm(weights1) + biases1.expand(x.size()[0], hidden_size)

    hidden = torch.sigmoid(hidden)

    # The batch_size * hidden_size matrix is multiplied (mm) by weights2, a hidden_size * output_size matrix,

    # giving a batch_size * output_size matrix

    output = hidden.mm(weights2)

    return output

def cost(x, y):

    # Loss function: mean squared error

    error = torch.mean((x - y)**2)

    return error

def zero_grad():

    # Clear the gradient of every parameter

    if weights1.grad is not None and biases1.grad is not None and weights2.grad is not None:

        weights1.grad.data.zero_()

        weights2.grad.data.zero_()

        biases1.grad.data.zero_()

def optimizer_step(learning_rate):

    # Gradient descent: move each parameter against its gradient

    weights1.data.add_(- learning_rate * weights1.grad.data)

    weights2.data.add_(- learning_rate * weights2.grad.data)

    biases1.data.add_(- learning_rate * biases1.grad.data)

losses = []

for i in range(1000):

    # Every 128 samples form one batch; the loop reads the data batch by batch

    batch_loss = []  # losses of the individual batches

    # start and end are the first and last index of the current batch

    for start in range(0, len(X), batch_size):  # one batch per 128 points

        end = start + batch_size if start + batch_size < len(X) else len(X)  # end index of the batch

        xx = torch.tensor(X[start:end], dtype = torch.double)  # feature batch

        yy = torch.tensor(Y[start:end], dtype = torch.double)  # target batch

        predict = neu(xx)  # forward pass

        loss = cost(predict, yy)  # loss

        zero_grad()  # clear the gradients

        loss.backward()  # backpropagate the loss

        optimizer_step(0.01)  # gradient step with learning rate 0.01

        batch_loss.append(loss.data.numpy())  # collect the loss of this batch

    # Every 100 epochs, record and print the loss

    if i % 100==0:

        losses.append(np.mean(batch_loss))  # mean batch loss of this epoch

        print(i, np.mean(batch_loss))

# Plot the recorded loss values

fig = plt.figure(figsize=(10, 7))

plt.plot(np.arange(len(losses))*100,losses, 'o-')  # draw the loss curve

plt.xlabel('epoch')

plt.ylabel('MSE')

3.2. Using PyTorch's built-in network modules
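With `torch.nn.Sequential` the weights and biases are created by the layers themselves instead of being declared by hand. The sketch below, using toy sizes that are assumptions for illustration, checks that such a network computes the same kind of expression as the hand-written one and lists the parameter shapes it creates.

```python
import torch

torch.manual_seed(0)
input_size, hidden_size, output_size = 5, 3, 1  # toy sizes, not those of the dataset

net = torch.nn.Sequential(
    torch.nn.Linear(input_size, hidden_size),
    torch.nn.Sigmoid(),
    torch.nn.Linear(hidden_size, output_size),
)

x = torch.randn(4, input_size)  # a made-up batch of 4 samples

# Recompute the forward pass by hand from the layers' own parameters.
lin1, lin2 = net[0], net[2]
manual = torch.sigmoid(x @ lin1.weight.T + lin1.bias) @ lin2.weight.T + lin2.bias

shapes = [tuple(p.shape) for p in net.parameters()]
print(torch.allclose(net(x), manual, atol=1e-6))
print(shapes)
```

Two differences from the hand-written network are worth noting: `torch.nn.Linear` stores its weight as (out_features, in_features), and it adds a bias to the output layer by default, which the hand-written version does not have.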

input_size = features.shape[1]

hidden_size = 10

output_size = 1

batch_size = 128

neu = torch.nn.Sequential(

    torch.nn.Linear(input_size, hidden_size),

    torch.nn.Sigmoid(),

    torch.nn.Linear(hidden_size, output_size),

)

cost = torch.nn.MSELoss()

optimizer = torch.optim.SGD(neu.parameters(), lr = 0.01)

losses = []

for i in range(1000):

    # Every 128 samples form one batch; the loop reads the data batch by batch

    batch_loss = []

    # start and end are the first and last index of the current batch

    for start in range(0, len(X), batch_size):

        end = start + batch_size if start + batch_size < len(X) else len(X)

        xx = torch.tensor(X[start:end], dtype = torch.float)

        yy = torch.tensor(Y[start:end], dtype = torch.float)

        predict = neu(xx)

        loss = cost(predict, yy)

        optimizer.zero_grad()

        loss.backward()

        optimizer.step()

        batch_loss.append(loss.data.numpy())

    # Every 100 epochs, record and print the loss

    if i % 100==0:

        losses.append(np.mean(batch_loss))

        print(i, np.mean(batch_loss))

4. Testing
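The network predicts normalized values, so they have to be mapped back to rider counts before they can be compared with the real data. A minimal sketch with made-up numbers follows; `mean` and `std` stand in for the values stored in `scaled_features['cnt']`, and the RMSE at the end is an optional numeric summary that I add here as an assumption, not something the original experiment reports.

```python
import numpy as np

mean, std = 190.0, 180.0  # made-up stand-ins for scaled_features['cnt']

pred_scaled = np.array([[-0.5], [0.0], [1.0]])   # hypothetical network outputs
true_scaled = np.array([[-0.4], [0.1], [0.8]])   # hypothetical normalized targets

# Undo the normalization: multiply by the standard deviation, add the mean.
pred_counts = pred_scaled * std + mean
true_counts = true_scaled * std + mean

# Root mean squared error, expressed in riders per hour.
rmse = float(np.sqrt(np.mean((pred_counts - true_counts) ** 2)))

print(pred_counts.ravel(), true_counts.ravel(), rmse)
```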

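targets = test_targets['cnt']  # read the cnt values of the test set

targets = targets.values.reshape([len(targets),1])  # reshape into a column so it can become a tensor

targets = targets.astype(float)  # make sure the values are floats

# Use dtype = torch.double with the network built in 3.1 and dtype = torch.float with the network built in 3.2

x = torch.tensor(test_features.values.astype(float), dtype = torch.float)

y = torch.tensor(targets, dtype = torch.float)

print(x[:10])

# Predict with the neural network

predict = neu(x)

predict = predict.data.numpy()

mean, std = scaled_features['cnt']  # statistics that were used to normalize cnt

print((predict * std + mean)[:10])  # first predictions converted back to counts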

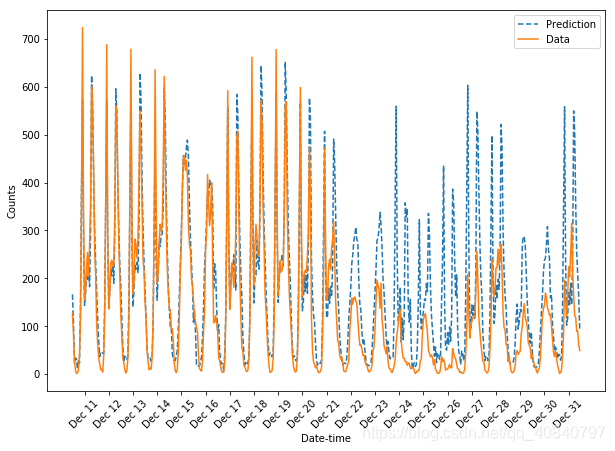

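# Plot the predictions for the last 21 days together with the real data for comparison

# The horizontal axis shows the dates; the vertical axis shows the predicted or real values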

fig, ax = plt.subplots(figsize = (10, 7))

mean, std = scaled_features['cnt']

ax.plot(predict * std + mean, label='Prediction', linestyle = '--')

ax.plot(targets * std + mean, label='Data', linestyle = '-')

ax.legend()

ax.set_xlabel('Date-time')

ax.set_ylabel('Counts')

# Label the horizontal axis with dates

dates = pd.to_datetime(rides.loc[test_data.index]['dteday'])

dates = dates.apply(lambda d: d.strftime('%b %d'))

ax.set_xticks(np.arange(len(dates))[12::24])

_ = ax.set_xticklabels(dates[12::24], rotation=45)

5. Result plot