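Approach: use the requests, bs4, and re libraries together.

Goal: collect the name and trading information of every stock listed on the Shanghai and Shenzhen stock exchanges.

Output: save the results to a local file.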

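Candidate data sources are Sina Stock and Baidu Stock. Viewing the page source shows that Sina Stock fills in its data with JavaScript, so it cannot be parsed with the approach above.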
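
One rough way to make the "view the page source" check repeatable is to search the raw HTML for a value you can see in the browser, ignoring anything inside `<script>` blocks. The sketch below is only a heuristic, and the helper name `appears_in_static_html` and both page fragments are made up for illustration:

```python
import re

def appears_in_static_html(html: str, needle: str) -> bool:
    """Return True if `needle` occurs in the HTML outside of <script> blocks."""
    # Remove every <script>...</script> block before searching.
    without_scripts = re.sub(r"<script\b.*?</script>", "", html, flags=re.S | re.I)
    return needle in without_scripts

# Hypothetical page fragments, not copied from any real site.
static_page = "<html><body><dd>12.34</dd></body></html>"
scripted_page = (
    "<html><body><dd id='price'></dd>"
    "<script>setPrice('12.34')</script></body></html>"
)

print(appears_in_static_html(static_page, "12.34"))    # True
print(appears_in_static_html(scripted_page, "12.34"))  # False
```

If the value only shows up inside a script, or not at all, the page most likely builds it in the browser and requests + bs4 will not see it.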

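Well, put another way, the sites that requests + bs4 + re can crawl are those where the information sits statically in the HTML page, is not generated by JavaScript, and is not restricted by the Robots protocol.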
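
The Robots protocol condition can be checked in code with the standard library's `urllib.robotparser`. The sketch below parses a hypothetical robots.txt (the rules and the example.com URLs are made up); for a real site you would instead call `set_url()` with the site's robots.txt address and then `read()`:

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, not taken from any real site.
sample_robots = """\
User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(sample_robots.splitlines())

# can_fetch(useragent, url) reports whether the rules allow the request.
print(parser.can_fetch("*", "http://example.com/stock/sh600000.html"))  # True
print(parser.can_fetch("*", "http://example.com/private/data.html"))    # False
```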

So the data sources finally chosen are East Money (东方财富网) plus Baidu Stock.

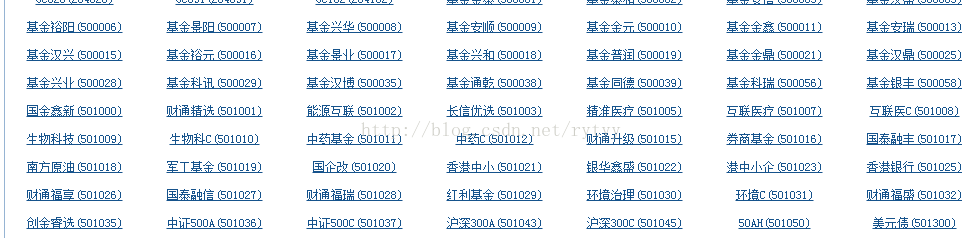

East Money: http://quote.eastmoney.com/stocklist.html

Baidu Stock: https://gupiao.baidu.com/stock/

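Program structure:

1. Get the stock list from East Money.

2. For each stock in the list, fetch its details from Baidu Stock.

3. Save the results to a file.

Wrap each step in a function and write the code: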

    import re
    import traceback

    import requests
    from bs4 import BeautifulSoup

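    def getHTMLText(url, code='utf-8'):
        """Fetch url and return its text decoded as `code`; return "" on failure."""
        try:
            r = requests.get(url, timeout=30)
            r.raise_for_status()
            r.encoding = code
            return r.text
        except requests.RequestException:
            # Network errors and HTTP error statuses both end up here.
            return ""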
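
The `code` parameter matters because the text only comes out right when the bytes are decoded with the encoding the page was written in. A small offline illustration, using the site name 东方财富网 as sample text rather than a real response:

```python
text = "东方财富网"

# The bytes a GB2312-encoded page would send for this text.
raw = text.encode("gb2312")

as_gb2312 = raw.decode("gb2312")
as_utf8 = raw.decode("utf-8", errors="replace")

print(as_gb2312 == text)  # True: the right encoding restores the text
print(as_utf8 == text)    # False: the wrong encoding yields garbled characters
```

That is why the stock list page is fetched with 'GB2312' while the default stays 'utf-8'.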

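    def getStockList(lst, stockURL):
        """Append each stock code (sh or sz plus six digits) found in the page's links to lst."""
        html = getHTMLText(stockURL, 'GB2312')
        soup = BeautifulSoup(html, 'html.parser')
        for a in soup.find_all('a'):
            # Links with no href, or with no stock code in it, are skipped.
            href = a.attrs.get('href', '')
            codes = re.findall(r"s[hz]\d{6}", href)
            if codes:
                lst.append(codes[0])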
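
To see what the regular expression keeps and what it drops, here is the same pattern run on a few href values. The hrefs are hypothetical examples, not scraped from the real page:

```python
import re

# s, then h or z, then exactly six digits.
STOCK_CODE = re.compile(r"s[hz]\d{6}")

# Hypothetical href values for illustration.
hrefs = [
    "http://quote.eastmoney.com/sh600000.html",
    "http://quote.eastmoney.com/sz000001.html",
    "http://quote.eastmoney.com/center/",
]

codes = []
for href in hrefs:
    match = STOCK_CODE.search(href)
    if match:
        codes.append(match.group())

print(codes)  # ['sh600000', 'sz000001']
```

Links that carry no stock code simply produce no match, so they never reach the list.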

    def getStockInfo(lst, stockURL, fpath):
        """Fetch each stock's page and append its info to fpath, one dict per line."""
        for stock in lst:
            url = stockURL + stock + ".html"
            html = getHTMLText(url)
            if html == "":
                continue
            try:
                infoDict = {}
                soup = BeautifulSoup(html, 'html.parser')
                stockInfo = soup.find('div', attrs={'class': 'stock-bets'})
                name = stockInfo.find_all(attrs={'class': 'bets-name'})[0]
                infoDict['股票名称'] = name.text.split()[0]  # key means "stock name"

                # Each <dt> holds a field name and the matching <dd> holds its value.
                keyList = stockInfo.find_all('dt')
                valueList = stockInfo.find_all('dd')
                for i in range(len(keyList)):
                    infoDict[keyList[i].text] = valueList[i].text

                with open(fpath, 'a', encoding='utf-8') as f:
                    f.write(str(infoDict) + '\n')
            except Exception:
                # Pages without the expected layout raise here; log and move on.
                traceback.print_exc()
                continue
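
Since every line of the output file is the `str()` of a dict, it can be loaded back with `ast.literal_eval`. A minimal sketch, assuming the same one-dict-per-line format; the records, the 今开 field, and the temporary path are made up for illustration:

```python
import ast
import os
import tempfile

# Made-up records standing in for scraped results.
records = [
    {"股票名称": "示例A", "今开": "10.00"},
    {"股票名称": "示例B", "今开": "20.50"},
]

# Write them the same way the scraper does: one str(dict) per line.
path = os.path.join(tempfile.mkdtemp(), "BaiduStockInfo.txt")
with open(path, "a", encoding="utf-8") as f:
    for record in records:
        f.write(str(record) + "\n")

# Read the file back, turning each non-empty line into a dict again.
with open(path, encoding="utf-8") as f:
    loaded = [ast.literal_eval(line) for line in f if line.strip()]

print(loaded == records)  # True
```

Writing one JSON object per line with `json.dumps` would be a more portable format, but that would be a change from what the code here does.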

    def main():
        stock_list_url = 'http://quote.eastmoney.com/stocklist.html'
        stock_info_url = 'https://gupiao.baidu.com/stock/'
        output_file = 'C://Users//kfc//Desktop//BaiduStockInfo.txt'
        slist = []
        getStockList(slist, stock_list_url)
        getStockInfo(slist, stock_info_url, output_file)

    if __name__ == '__main__':
        main()