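Introduction

ELK stands for Elasticsearch, Logstash, and Kibana. Together they form a centralized logging system for production services. This article shows how to use ELK to collect the logs produced by a Spring Boot application.

What each ELK component does

Elasticsearch: stores the collected log data;

Logstash: collects logs. Once the Spring Boot application is integrated with Logstash, it sends its logs to Logstash, which forwards them to Elasticsearch;

Kibana: provides a web UI for browsing the logs.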

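1. Find the Elasticsearch, Logstash, and Kibana versions that match your Spring Boot version

(1) If the project imports spring-boot-dependencies, declare the Elasticsearch starter with an explicit version

<!-- Spring Boot version -->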

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-data-elasticsearch</artifactId>

<version>${spring-boot.version}</version>

</dependency>

(2) If the project inherits from spring-boot-starter-parent, the parent manages the version, so the version element can be omitted

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-data-elasticsearch</artifactId>

</dependency>

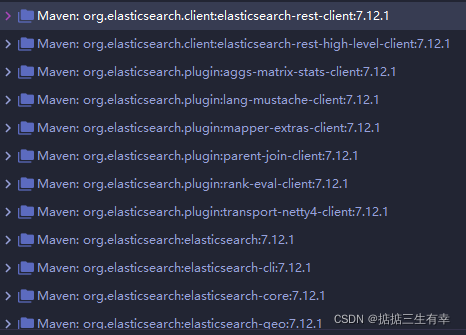

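(3) After refreshing Maven, the dependency list shows the version that corresponds to the project's Spring Boot version

2. Set up the ELK environment with Docker Compose

(1) Pull the images that match the version your project depends on. Note: the Elasticsearch, Logstash, and Kibana versions must be identical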

docker pull elasticsearch:7.12.1

docker pull logstash:7.12.1

docker pull kibana:7.12.1

(2) Create a directory for the Logstash configuration and upload the configuration file

Create the configuration directories

mkdir -p /mydata/elk/logstash

mkdir -p /mydata/elk/es

mkdir -p /mydata/elk/kibana

cd /mydata/elk/logstash

vim logstash-springboot.conf

Contents of logstash-springboot.conf

input {

tcp {

mode => "server"

host => "0.0.0.0"

port => 4560

codec => json_lines

type => "debug"

}

tcp {

mode => "server"

host => "0.0.0.0"

port => 4561

codec => json_lines

type => "error"

}

tcp {

mode => "server"

host => "0.0.0.0"

port => 4562

codec => json_lines

type => "business"

}

tcp {

mode => "server"

host => "0.0.0.0"

port => 4563

codec => json_lines

type => "record"

}

}

filter{

if [type] == "record" {

mutate {

remove_field => "port"

remove_field => "host"

remove_field => "@version"

}

json {

source => "message"

remove_field => ["message"]

}

}

}

output {

elasticsearch {

hosts => "es:9200"

index => "soboot-%{type}-%{+YYYY.MM.dd}"

}

}
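The index option in the output block builds one index per log type per day. The following is a minimal Java sketch of how that name resolves for a single event. The class and method names are hypothetical, and it assumes that Logstash formats %{+YYYY.MM.dd} from the event's @timestamp in UTC.

```java
import java.time.Instant;
import java.time.ZoneOffset;
import java.time.format.DateTimeFormatter;

/** Sketch only: mimics how soboot-%{type}-%{+YYYY.MM.dd} is expanded for one event. */
class IndexNameSketch {

    // Assumption: the date part is taken from @timestamp in UTC.
    // java.time needs "yyyy" here; "YYYY" would mean week-based year in java.time.
    private static final DateTimeFormatter DAY =
            DateTimeFormatter.ofPattern("yyyy.MM.dd").withZone(ZoneOffset.UTC);

    /** Returns the index name for an event of the given type and timestamp. */
    static String indexFor(String type, Instant timestamp) {
        return "soboot-" + type + "-" + DAY.format(timestamp);
    }

    public static void main(String[] args) {
        // 00:30 on 2022-03-16 in Asia/Shanghai is 16:30 on 2022-03-15 in UTC.
        Instant ts = Instant.parse("2022-03-15T16:30:00Z");
        for (String type : new String[] {"debug", "error", "business", "record"}) {
            System.out.println(indexFor(type, ts));
        }
    }
}
```

Under that assumption, an event logged shortly after midnight Shanghai time lands in the previous day's index, which is worth keeping in mind when searching by index name.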

(3) Start the ELK services with a docker-compose.yml file

cd /mydata/elk

vim docker-compose.yml

Contents of docker-compose.yml

version: '3'
services:
  elasticsearch:
    image: elasticsearch:7.12.1
    container_name: elasticsearch
    environment:
      - "cluster.name=elasticsearch" # set the cluster name to elasticsearch
      - "discovery.type=single-node" # start in single-node mode
      - "ES_JAVA_OPTS=-Xms512m -Xmx512m" # JVM heap size
    volumes:
      - /mydata/elk/es/plugins:/usr/share/elasticsearch/plugins # plugin directory mount
      - /mydata/elk/es/data:/usr/share/elasticsearch/data # data directory mount
    ports:
      - 9200:9200
      - 9300:9300
  kibana:
    image: kibana:7.12.1
    container_name: kibana
    links:
      - elasticsearch:es # the elasticsearch service is reachable under the hostname es
    depends_on:
      - elasticsearch # start kibana after elasticsearch
    environment:
      - "elasticsearch.hosts=http://es:9200" # address used to reach elasticsearch
    ports:
      - 5601:5601
  logstash:
    image: logstash:7.12.1
    container_name: logstash
    volumes:
      - /mydata/elk/logstash/logstash-springboot.conf:/usr/share/logstash/pipeline/logstash.conf # mount the logstash configuration file
    depends_on:
      - elasticsearch # start logstash after elasticsearch
    links:
      - elasticsearch:es # the elasticsearch service is reachable under the hostname es
    ports:
      - 4560:4560
      - 4561:4561
      - 4562:4562
      - 4563:4563

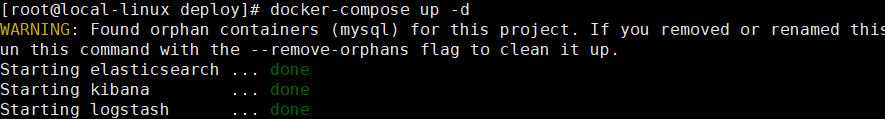

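(4) Run docker-compose to start ELK

docker-compose up -d

Note: Elasticsearch can take several minutes to start, so be patient.

(5) Once everything is up, install the json_lines plugin in Logstash

# Enter the logstash container

docker exec -it logstash /bin/bash

# Go to the bin directory

cd /bin/

# Install the plugin

logstash-plugin install logstash-codec-json_lines

# Leave the container

exit

# Restart the logstash service

docker restart logstash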

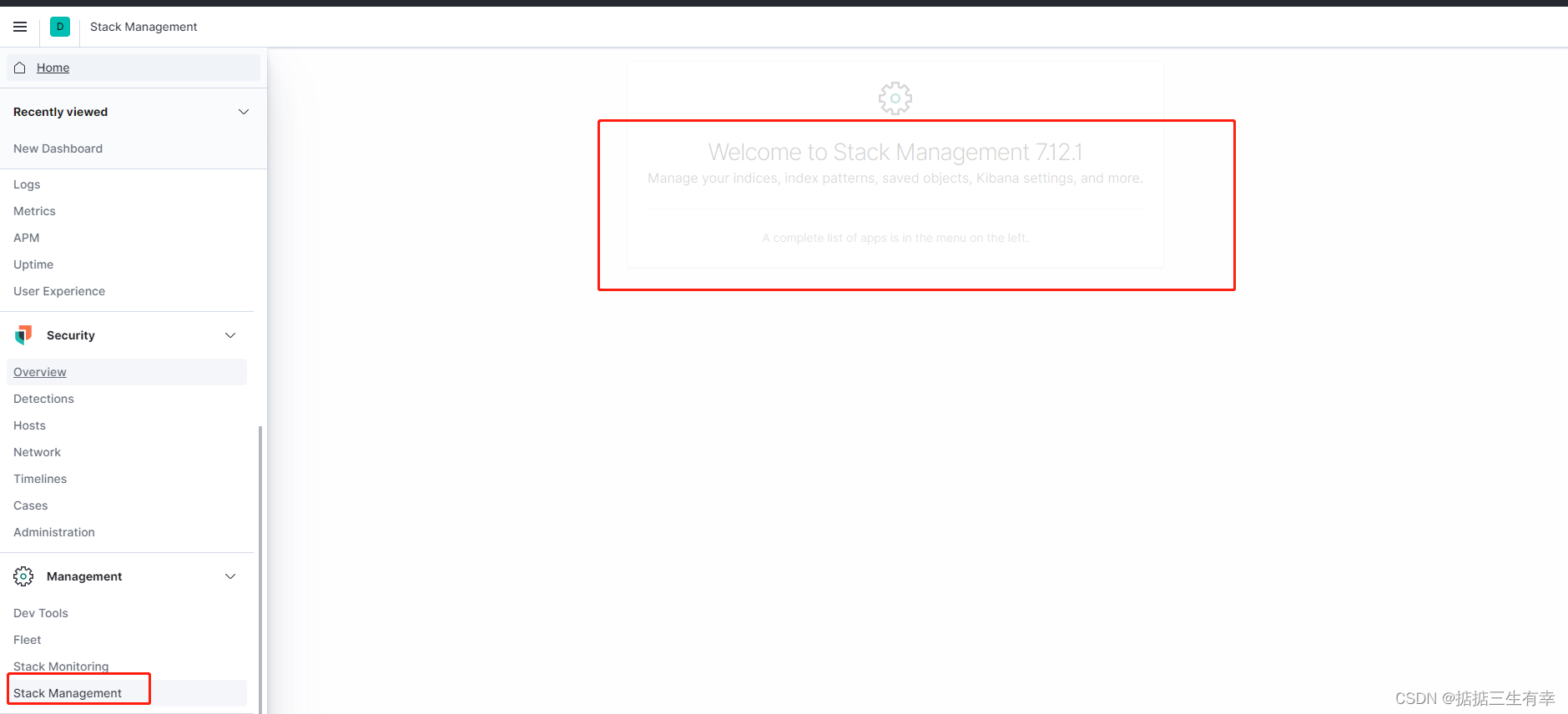

(6) Open Kibana

Note: disable the firewall or configure the security group so that port 5601 is reachable

URL: http://SERVER_IP:5601
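Before wiring up the application, the TCP inputs defined in logstash-springboot.conf can be exercised by hand. The sketch below is not part of the original setup: it is a hypothetical smoke test that writes a single newline-terminated JSON object to port 4560, which is the framing the json_lines codec expects. The class name, the field values, and the default host 127.0.0.1 are assumptions, and the escaping covers only the most common characters.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.net.Socket;
import java.nio.charset.StandardCharsets;

/** Sketch only: sends one json_lines event to the Logstash tcp input on port 4560. */
class LogstashSmokeTest {

    /** Minimal escaping for quotes, backslashes and common whitespace control characters. */
    static String escape(String s) {
        StringBuilder sb = new StringBuilder();
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"':  sb.append("\\\""); break;
                case '\\': sb.append("\\\\"); break;
                case '\n': sb.append("\\n"); break;
                case '\r': sb.append("\\r"); break;
                case '\t': sb.append("\\t"); break;
                default:   sb.append(c);
            }
        }
        return sb.toString();
    }

    /** Builds one frame: a single JSON object followed by a newline. */
    static String toJsonLine(String level, String message) {
        return "{\"project\":\"soboot\",\"level\":\"" + escape(level)
                + "\",\"message\":\"" + escape(message) + "\"}\n";
    }

    /** Opens a TCP connection, writes the frame as UTF-8, and closes the connection. */
    static void send(String host, int port, String line) throws IOException {
        try (Socket socket = new Socket(host, port);
             OutputStream out = socket.getOutputStream()) {
            out.write(line.getBytes(StandardCharsets.UTF_8));
            out.flush();
        }
    }

    public static void main(String[] args) throws IOException {
        // Assumption: pass the Logstash server IP as the first argument.
        String host = args.length > 0 ? args[0] : "127.0.0.1";
        send(host, 4560, toJsonLine("INFO", "hello from the smoke test"));
        System.out.println("sent one event to " + host + ":4560");
    }
}
```

If the pipeline is working, the event should show up in that day's soboot-debug index once the index pattern exists in Kibana.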

3. Integrate the Spring Boot application with ELK

(1) Add the logstash-logback-encoder dependency to pom.xml

<!-- Logstash integration -->

<dependency>

<groupId>net.logstash.logback</groupId>

<artifactId>logstash-logback-encoder</artifactId>

<version>5.3</version>

</dependency>

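(2) Add a logback-spring.xml configuration file so that Logback sends its logs to Logstash

Note: change the destination under each appender node to the address of your own Logstash service, for example 10.12.13.14:4560

Contents of logback-spring.xml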

<?xml version="1.0" encoding="UTF-8"?>

<!DOCTYPE configuration>

<configuration>

<!-- Import the default logging configuration -->

<include resource="org/springframework/boot/logging/logback/defaults.xml"/>

<!-- Use the default console appender -->

<include resource="org/springframework/boot/logging/logback/console-appender.xml"/>

<!-- Application name -->

<springProperty scope="context" name="APP_NAME" source="spring.application.name" defaultValue="springBoot"/>

<!-- Directory where log files are stored; the file appenders below reference LOG_FILE_PATH, so it must be defined -->

<property name="LOG_FILE_PATH" value="${LOG_FILE:-${LOG_PATH:-${LOG_TEMP:-${java.io.tmpdir:-/tmp}}}/logs}"/>

<!-- Logstash host -->

<springProperty name="LOG_STASH_HOST" scope="context" source="logstash.host" defaultValue="10.12.13.14"/>

<!-- Write DEBUG logs to a file -->

<appender name="FILE_DEBUG"

class="ch.qos.logback.core.rolling.RollingFileAppender">

<!-- Accept DEBUG level and above -->

<filter class="ch.qos.logback.classic.filter.ThresholdFilter">

<level>DEBUG</level>

</filter>

<encoder>

<!-- Use the default file log pattern -->

<pattern>${FILE_LOG_PATTERN}</pattern>

<charset>UTF-8</charset>

</encoder>

<rollingPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedRollingPolicy">

<!-- File name pattern -->

<fileNamePattern>${LOG_FILE_PATH}/debug/${APP_NAME}-%d{yyyy-MM-dd}-%i.log</fileNamePattern>

<!-- Maximum size of a log file before a new one is started, 10 MB by default -->

<maxFileSize>${LOG_FILE_MAX_SIZE:-10MB}</maxFileSize>

<!-- Number of days of log files to keep, 30 by default -->

<maxHistory>${LOG_FILE_MAX_HISTORY:-30}</maxHistory>

</rollingPolicy>

</appender>

<!-- Write ERROR logs to a file -->

<appender name="FILE_ERROR"

class="ch.qos.logback.core.rolling.RollingFileAppender">

<!-- Accept ERROR level only -->

<filter class="ch.qos.logback.classic.filter.LevelFilter">

<level>ERROR</level>

<onMatch>ACCEPT</onMatch>

<onMismatch>DENY</onMismatch>

</filter>

<encoder>

<!-- Use the default file log pattern -->

<pattern>${FILE_LOG_PATTERN}</pattern>

<charset>UTF-8</charset>

</encoder>

<rollingPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedRollingPolicy">

<!-- File name pattern -->

<fileNamePattern>${LOG_FILE_PATH}/error/${APP_NAME}-%d{yyyy-MM-dd}-%i.log</fileNamePattern>

<!-- Maximum size of a log file before a new one is started, 10 MB by default -->

<maxFileSize>${LOG_FILE_MAX_SIZE:-10MB}</maxFileSize>

<!-- Number of days of log files to keep, 30 by default -->

<maxHistory>${LOG_FILE_MAX_HISTORY:-30}</maxHistory>

</rollingPolicy>

</appender>

<!-- Asynchronous real-time output -->

<appender name="ASYNC_CONSOLE_APPENDER" class="ch.qos.logback.classic.AsyncAppender">

<!-- Do not drop logs. By default, TRACE, DEBUG, and INFO events are discarded once the queue is 80% full -->

<discardingThreshold>0</discardingThreshold>

<!-- Queue depth; this value affects performance. The default is 256 -->

<queueSize>256</queueSize>

<!-- Attach the wrapped appender; at most one can be attached -->

<appender-ref ref="FILE_DEBUG"/>

</appender>

<!-- Send DEBUG logs to Logstash -->

<appender name="LOG_STASH_DEBUG" class="net.logstash.logback.appender.LogstashTcpSocketAppender">

<filter class="ch.qos.logback.classic.filter.ThresholdFilter">

<level>DEBUG</level>

</filter>

<destination>${LOG_STASH_HOST}:4560</destination>

<encoder charset="UTF-8" class="net.logstash.logback.encoder.LoggingEventCompositeJsonEncoder">

<providers>

<timestamp>

<timeZone>Asia/Shanghai</timeZone>

</timestamp>

<!-- Custom log output format -->

<pattern>

<pattern>

{

"project": "soboot",

"level": "%level",

"service": "${APP_NAME:-}",

"pid": "${PID:-}",

"thread": "%thread",

"class": "%logger",

"message": "%message",

"stack_trace": "%exception{20}"

}

</pattern>

</pattern>

</providers>

</encoder>

</appender>

<!-- Send ERROR logs to Logstash -->

<appender name="LOG_STASH_ERROR" class="net.logstash.logback.appender.LogstashTcpSocketAppender">

<filter class="ch.qos.logback.classic.filter.LevelFilter">

<level>ERROR</level>

<onMatch>ACCEPT</onMatch>

<onMismatch>DENY</onMismatch>

</filter>

<destination>${LOG_STASH_HOST}:4561</destination>

<encoder charset="UTF-8" class="net.logstash.logback.encoder.LoggingEventCompositeJsonEncoder">

<providers>

<timestamp>

<timeZone>Asia/Shanghai</timeZone>

</timestamp>

<!-- Custom log output format -->

<pattern>

<pattern>

{

"project": "soboot",

"level": "%level",

"service": "${APP_NAME:-}",

"pid": "${PID:-}",

"thread": "%thread",

"class": "%logger",

"message": "%message",

"stack_trace": "%exception{20}"

}

</pattern>

</pattern>

</providers>

</encoder>

</appender>

<!-- Send business logs to Logstash -->

<appender name="LOG_STASH_BUSINESS" class="net.logstash.logback.appender.LogstashTcpSocketAppender">

<destination>${LOG_STASH_HOST}:4562</destination>

<encoder charset="UTF-8" class="net.logstash.logback.encoder.LoggingEventCompositeJsonEncoder">

<providers>

<timestamp>

<timeZone>Asia/Shanghai</timeZone>

</timestamp>

<!-- Custom log output format -->

<pattern>

<pattern>

{

"project": "soboot",

"level": "%level",

"service": "${APP_NAME:-}",

"pid": "${PID:-}",

"thread": "%thread",

"class": "%logger",

"message": "%message",

"stack_trace": "%exception{20}"

}

</pattern>

</pattern>

</providers>

</encoder>

</appender>

<!-- Send API access-record logs to Logstash -->

<appender name="LOG_STASH_RECORD" class="net.logstash.logback.appender.LogstashTcpSocketAppender">

<destination>${LOG_STASH_HOST}:4563</destination>

<encoder charset="UTF-8" class="net.logstash.logback.encoder.LoggingEventCompositeJsonEncoder">

<providers>

<timestamp>

<timeZone>Asia/Shanghai</timeZone>

</timestamp>

<!-- Custom log output format -->

<pattern>

<pattern>

{

"project": "soboot",

"level": "%level",

"service": "${APP_NAME:-}",

"class": "%logger",

"message": "%message"

}

</pattern>

</pattern>

</providers>

</encoder>

</appender>

<!-- Control the log level of framework loggers -->

<logger name="org.slf4j" level="INFO"/>

<logger name="springfox" level="INFO"/>

<logger name="io.swagger" level="INFO"/>

<logger name="org.springframework" level="INFO"/>

<logger name="org.hibernate.validator" level="INFO"/>

<root level="DEBUG">

<appender-ref ref="CONSOLE"/>

<appender-ref ref="FILE_DEBUG"/>

<appender-ref ref="FILE_ERROR"/>

<appender-ref ref="LOG_STASH_RECORD"/>

<appender-ref ref="LOG_STASH_DEBUG"/>

<appender-ref ref="LOG_STASH_ERROR"/>

</root>

<logger name="com.soboot.common.log.aspect.WebLogAspect" level="DEBUG">

<appender-ref ref="LOG_STASH_RECORD"/>

</logger>

<logger name="com.soboot" level="DEBUG">

<appender-ref ref="LOG_STASH_BUSINESS"/>

</logger>

</configuration>
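The configuration uses two different filter types, and the difference decides which events reach which Logstash port. The following Java sketch is a simplified model written for this article, not Logback's own code: the enum and method names are hypothetical. It treats ThresholdFilter as "this level or more severe" and LevelFilter with onMatch=ACCEPT and onMismatch=DENY as "exactly this level".

```java
/** Sketch only: models the two filter styles used by the appenders in logback-spring.xml. */
class LogbackFilterSketch {

    /** Log levels in increasing order of severity. */
    enum Level { TRACE, DEBUG, INFO, WARN, ERROR }

    /** ThresholdFilter: events below the threshold are denied, the rest pass. */
    static boolean thresholdAccepts(Level threshold, Level event) {
        return event.compareTo(threshold) >= 0;
    }

    /** LevelFilter with onMatch=ACCEPT and onMismatch=DENY: only an exact match passes. */
    static boolean levelFilterAccepts(Level configured, Level event) {
        return event == configured;
    }

    public static void main(String[] args) {
        // LOG_STASH_DEBUG uses a DEBUG threshold; LOG_STASH_ERROR uses an ERROR level filter.
        for (Level event : Level.values()) {
            System.out.printf("%-5s  LOG_STASH_DEBUG=%b  LOG_STASH_ERROR=%b%n",
                    event,
                    thresholdAccepts(Level.DEBUG, event),
                    levelFilterAccepts(Level.ERROR, event));
        }
    }
}
```

Read this way, an ERROR event passes both filters, so it is sent to port 4560 as well as port 4561 and ends up in both the debug and the error index.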

(3) Write a unified logging aspect

Contents of WebLog.java

package com.soboot.common.log.domain;

import lombok.Data;

import lombok.EqualsAndHashCode;

/**

* Log wrapper class for the controller layer

* Created by liu on 2022/03/15.

* @author liuy

*/

@Data

@EqualsAndHashCode(callSuper = false)

public class WebLog {

/**

* Description of the operation

*/

private String description;

/**

* User who performed the operation

*/

private String username;

/**

* Time the operation started (epoch milliseconds)

*/

private Long startTime;

/**

* Elapsed time (milliseconds)

*/

private Integer spendTime;

/**

* Base path

*/

private String basePath;

/**

* URI

*/

private String uri;

/**

* URL

*/

private String url;

/**

* HTTP request method

*/

private String method;

/**

* IP address

*/

private String ip;

/**

* Request parameters

*/

private Object parameter;

/**

* Response result

*/

private Object result;

}

Contents of WebLogAspect.java

package com.soboot.common.log.aspect;

import cn.hutool.core.util.StrUtil;

import cn.hutool.core.util.URLUtil;

import cn.hutool.json.JSONUtil;

import com.soboot.common.log.domain.WebLog;

import io.swagger.annotations.ApiOperation;

import net.logstash.logback.marker.Markers;

import org.aspectj.lang.JoinPoint;

import org.aspectj.lang.ProceedingJoinPoint;

import org.aspectj.lang.Signature;

import org.aspectj.lang.annotation.*;

import org.aspectj.lang.reflect.MethodSignature;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import org.springframework.core.annotation.Order;

import org.springframework.stereotype.Component;

import org.springframework.util.StringUtils;

import org.springframework.web.bind.annotation.RequestBody;

import org.springframework.web.bind.annotation.RequestParam;

import org.springframework.web.context.request.RequestContextHolder;

import org.springframework.web.context.request.ServletRequestAttributes;

import javax.servlet.http.HttpServletRequest;

import java.lang.reflect.Method;

import java.lang.reflect.Parameter;

import java.util.ArrayList;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

/**

* Unified logging aspect

* Created by liu on 2022/03/15.

* @author liuy

*/

@Aspect

@Component

@Order(1)

public class WebLogAspect {

private static final Logger LOGGER = LoggerFactory.getLogger(WebLogAspect.class);

@Pointcut("execution(public * com.soboot.controller.*.*(..))||execution(public * com.soboot.*.controller.*.*(..))")

public void webLog() {

}

@Before("webLog()")

public void doBefore(JoinPoint joinPoint) throws Throwable {

}

@AfterReturning(value = "webLog()", returning = "ret")

public void doAfterReturning(Object ret) throws Throwable {

}

@Around("webLog()")

public Object doAround(ProceedingJoinPoint joinPoint) throws Throwable {

long startTime = System.currentTimeMillis();

// Get the current request object

ServletRequestAttributes attributes = (ServletRequestAttributes) RequestContextHolder.getRequestAttributes();

HttpServletRequest request = attributes.getRequest();

// Record the request details (sent to Elasticsearch through Logstash)

WebLog webLog = new WebLog();

Object result = joinPoint.proceed();

Signature signature = joinPoint.getSignature();

MethodSignature methodSignature = (MethodSignature) signature;

Method method = methodSignature.getMethod();

if (method.isAnnotationPresent(ApiOperation.class)) {

ApiOperation log = method.getAnnotation(ApiOperation.class);

webLog.setDescription(log.value());

}

long endTime = System.currentTimeMillis();

String urlStr = request.getRequestURL().toString();

webLog.setBasePath(StrUtil.removeSuffix(urlStr, URLUtil.url(urlStr).getPath()));

webLog.setUsername(request.getRemoteUser());

webLog.setIp(request.getRemoteAddr());

webLog.setMethod(request.getMethod());

webLog.setParameter(getParameter(method, joinPoint.getArgs()));

webLog.setResult(result);

webLog.setSpendTime((int) (endTime - startTime));

webLog.setStartTime(startTime);

webLog.setUri(request.getRequestURI());

webLog.setUrl(request.getRequestURL().toString());

Map<String,Object> logMap = new HashMap<>();

logMap.put("url",webLog.getUrl());

logMap.put("method",webLog.getMethod());

logMap.put("parameter",webLog.getParameter());

logMap.put("spendTime",webLog.getSpendTime());

logMap.put("description",webLog.getDescription());

LOGGER.info(Markers.appendEntries(logMap), JSONUtil.parse(webLog).toString());

return result;

}

/**

* Collect the request parameters from the method and the arguments passed to it

*/

private Object getParameter(Method method, Object[] args) {

List<Object> argList = new ArrayList<>();

Parameter[] parameters = method.getParameters();

for (int i = 0; i < parameters.length; i++) {

// Treat parameters annotated with @RequestBody as request parameters

RequestBody requestBody = parameters[i].getAnnotation(RequestBody.class);

if (requestBody != null) {

argList.add(args[i]);

}

// Treat parameters annotated with @RequestParam as request parameters

RequestParam requestParam = parameters[i].getAnnotation(RequestParam.class);

if (requestParam != null) {

Map<String, Object> map = new HashMap<>();

String key = parameters[i].getName();

if (!StringUtils.isEmpty(requestParam.value())) {

key = requestParam.value();

}

map.put(key, args[i]);

argList.add(map);

}

}

if (argList.size() == 0) {

return null;

} else if (argList.size() == 1) {

return argList.get(0);

} else {

return argList;

}

}

}
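The shape of the parameter field that getParameter produces depends on how many annotated arguments the controller method has: nothing, a single value, or a list. The sketch below reproduces that rule with plain reflection so it can run without Spring. The annotations Body and Param and the DemoController class are hypothetical stand-ins for @RequestBody, @RequestParam, and a real controller.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Method;
import java.lang.reflect.Parameter;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

/** Sketch only: shows the three shapes the logged "parameter" value can take. */
class ParameterShapeSketch {

    /** Hypothetical stand-in for @RequestBody. */
    @Retention(RetentionPolicy.RUNTIME)
    @Target(ElementType.PARAMETER)
    @interface Body {}

    /** Hypothetical stand-in for @RequestParam with an explicit name. */
    @Retention(RetentionPolicy.RUNTIME)
    @Target(ElementType.PARAMETER)
    @interface Param { String value(); }

    /** Hypothetical controller with zero, one, and two annotated parameters. */
    static class DemoController {
        public void ping() {}
        public void find(@Param("id") long id) {}
        public void update(@Param("id") long id, @Body String payload) {}
    }

    /** Same rule as getParameter: null for none, the value itself for one, a list otherwise. */
    static Object collect(Method method, Object[] args) {
        List<Object> collected = new ArrayList<>();
        Parameter[] parameters = method.getParameters();
        for (int i = 0; i < parameters.length; i++) {
            if (parameters[i].isAnnotationPresent(Body.class)) {
                collected.add(args[i]);
            }
            Param param = parameters[i].getAnnotation(Param.class);
            if (param != null) {
                collected.add(Collections.singletonMap(param.value(), args[i]));
            }
        }
        if (collected.isEmpty()) {
            return null;
        }
        return collected.size() == 1 ? collected.get(0) : collected;
    }

    /** Looks up a DemoController method by name and collects its parameters. */
    static Object describeCall(String methodName, Object... args) {
        for (Method m : DemoController.class.getDeclaredMethods()) {
            if (m.getName().equals(methodName)) {
                return collect(m, args);
            }
        }
        throw new IllegalArgumentException("no such method: " + methodName);
    }

    public static void main(String[] args) {
        System.out.println(describeCall("ping"));
        System.out.println(describeCall("find", 42L));
        System.out.println(describeCall("update", 7L, "new-name"));
    }
}
```

Because the logged value can be null, a single object, or a list, the parameter field may not map to one consistent type in Elasticsearch across different endpoints.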

(4) Add the Elasticsearch and Logstash settings to the application's YAML configuration

YAML configuration

spring:
  data:
    elasticsearch:
      repositories:
        enabled: true
  elasticsearch:
    rest:
      # IP of the server where Elasticsearch is deployed, port 9200
      uris: 10.12.13.14:9200
logging:
  level:
    root: info
    com.soboot: debug
logstash:
  # IP of the server where Logstash is deployed
  host: 10.12.13.14

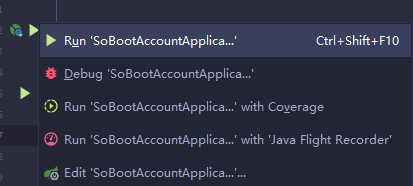

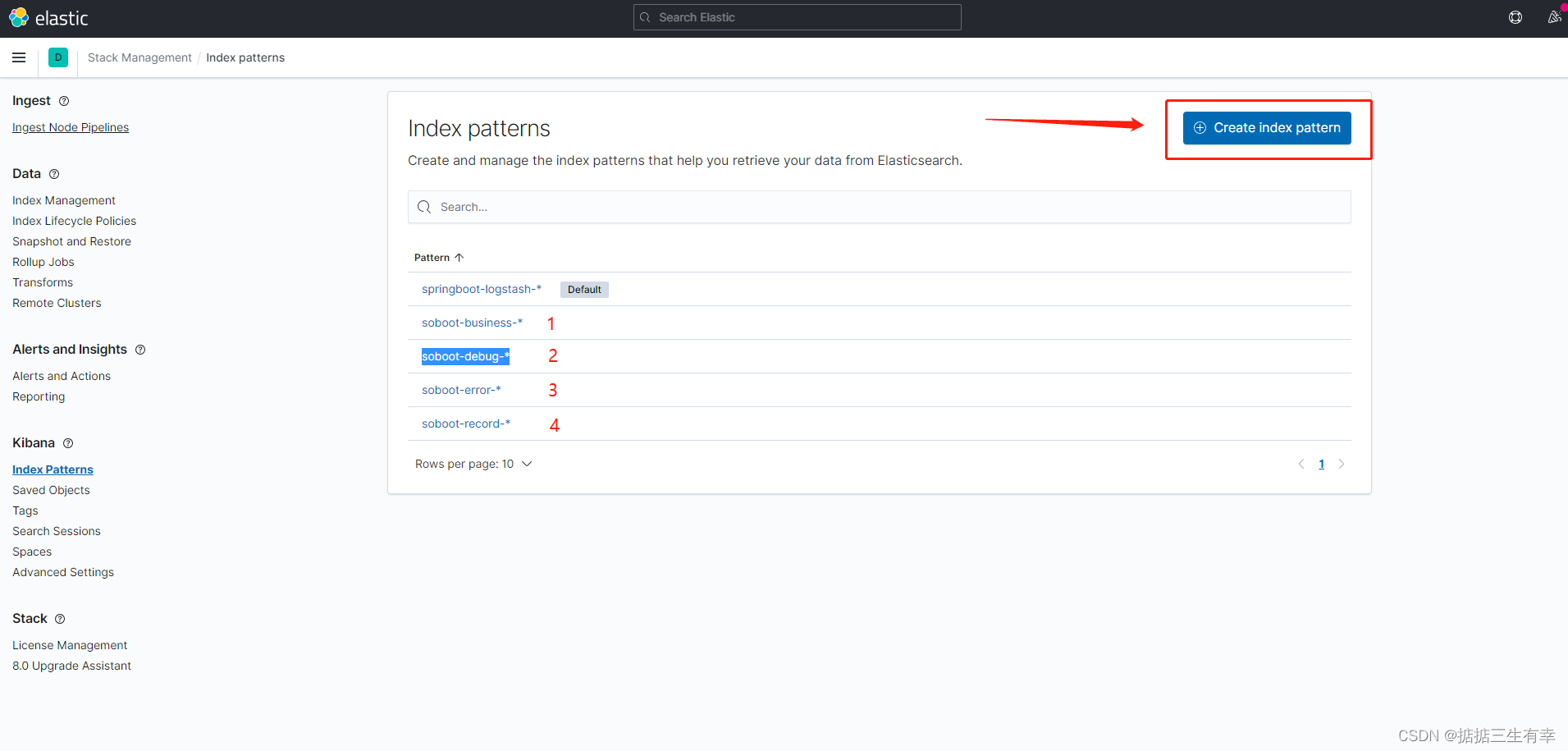

(5) Run the Spring Boot application and view the logs in Kibana

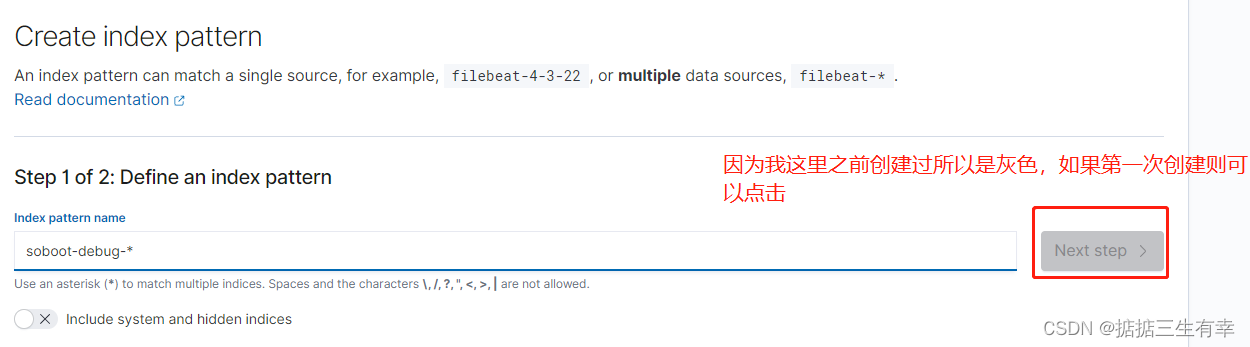

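Create the index patterns

Create one index pattern for each of the four indices, which correspond to the four log types (debug, error, business, record)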

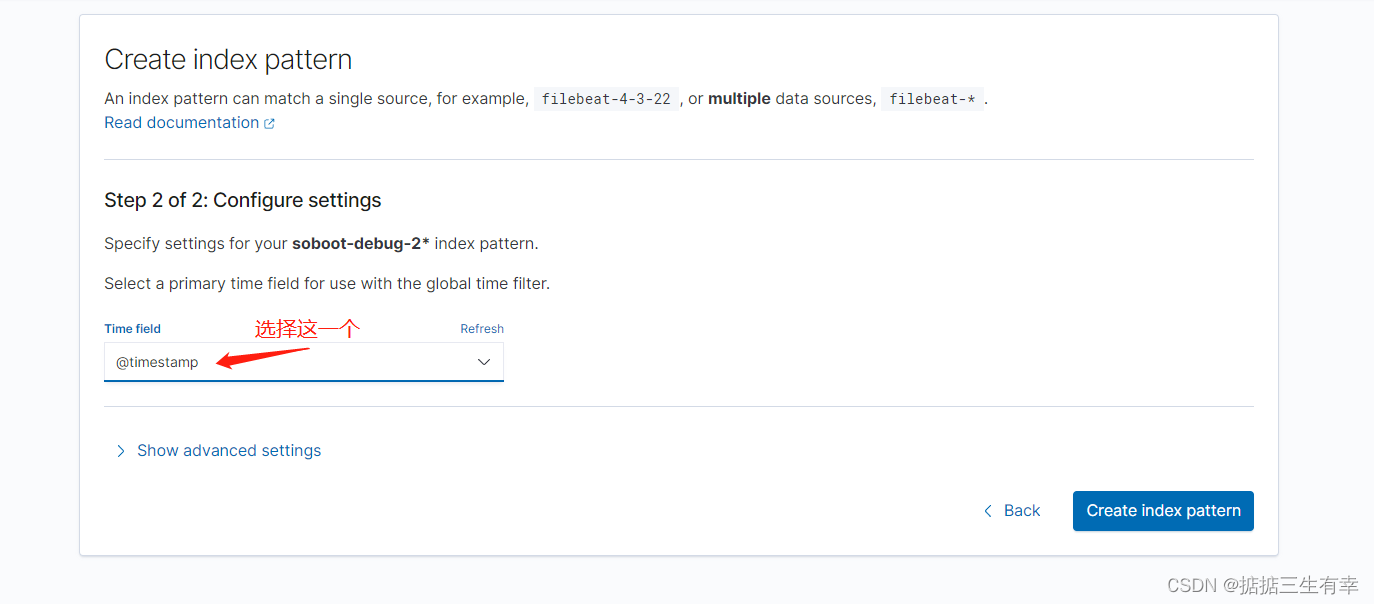

After clicking Next step

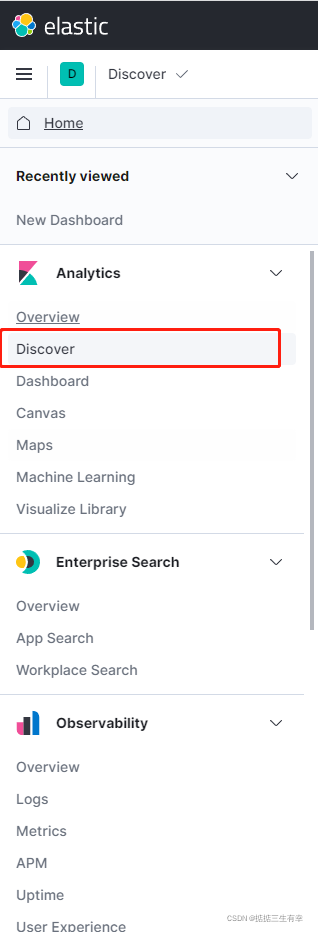

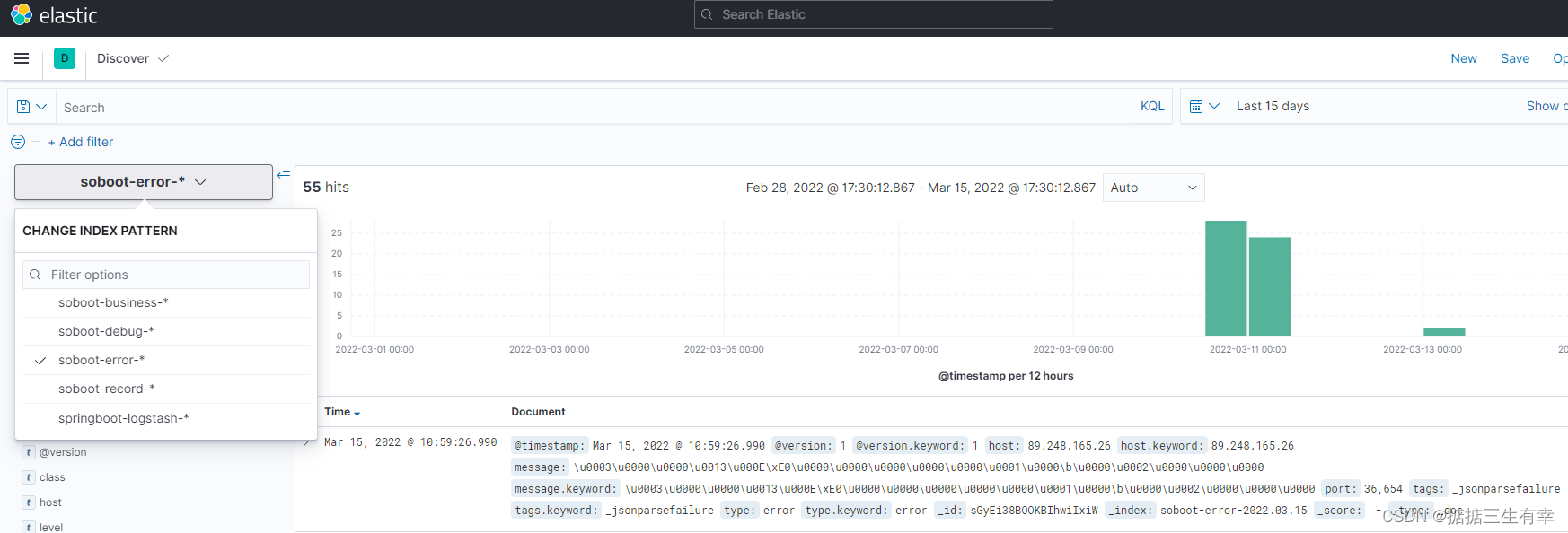

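4. View the collected logs

Deliberately trigger an error in an endpoint, then look it up in the error index

5. Summary

With the ELK log collection system in place, you no longer need to open log files to inspect a Spring Boot application's logs; you can view them directly in Kibana.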