
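Creating namespaces with the Linux kernel

The ip netns command

The ip netns command handles all kinds of operations on a Network Namespace. It comes from the iproute package, which most distributions install by default; if it is missing, install it yourself.

#Check whether iproute (which provides ip netns) is installed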

[root@localhost ~]# rpm -qa | grep iproute

iproute-5.3.0-1.el8.x86_64

iproute-tc-5.3.0-1.el8.x86_64

Note: ip netns needs root (sudo) privileges whenever it changes the network configuration.

ip netns help lists the available subcommands:

[root@localhost ~]# ip netns help

Usage: ip netns list //list all named namespaces

ip netns add NAME //create a namespace

ip netns attach NAME PID

ip netns set NAME NETNSID

ip [-all] netns delete [NAME]

ip netns identify [PID]

ip netns pids NAME

ip [-all] netns exec [NAME] cmd ...

ip netns monitor

ip netns list-id

NETNSID := auto | POSITIVE-INT

By default a Linux system has no named Network Namespaces (the host's own network lives in the unnamed initial namespace), so ip netns list prints nothing.

Creating a Network Namespace

Create a namespace named ns0:

[root@localhost ~]# ip netns add ns0

[root@localhost ~]# ip netns list

ns0

A newly created Network Namespace appears as a file under /var/run/netns/. If a namespace with the same name already exists, the command fails with Cannot create namespace file "/var/run/netns/ns0": File exists.

[root@localhost ~]# ls /var/run/netns/

ns0

[root@localhost ~]# ip netns add ns0

Cannot create namespace file "/var/run/netns/ns0": File exists

Each Network Namespace has its own set of network resources: network interfaces, routing table, ARP table, iptables rules, and so on.
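Because ip netns add fails when the name is already taken, a script that may run more than once can check for the namespace file first. A minimal sketch, assuming bash and root privileges; ensure_netns and the NETNS_DIR override are hypothetical names introduced here:

```bash
# ensure_netns NAME: create the named network namespace only if it does not exist yet.
# Hypothetical helper; requires root and the iproute package.
ensure_netns() {
    local name=$1
    # Directory where `ip netns add` creates its namespace files (overridable for testing)
    local dir=${NETNS_DIR:-/var/run/netns}
    if [ -e "$dir/$name" ]; then
        echo "netns $name already exists"
        return 0
    fi
    ip netns add "$name"
}
```

For example, running ensure_netns ns0 twice creates ns0 once and then only reports that it exists, instead of failing with File exists.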

Working inside a Network Namespace

The ip netns exec subcommand runs a command inside a given Network Namespace.

View the interfaces of the newly created Network Namespace:

[root@localhost ~]# ip netns exec ns0 ip addr

1: lo: <LOOPBACK> mtu 65536 qdisc noop state DOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

A new Network Namespace contains only the loopback interface lo, and it is down. Pinging the loopback address at this point fails with Network is unreachable:

[root@localhost ~]# ip netns exec ns0 ping 127.0.0.1

connect: Network is unreachable

Bring the loopback interface up with the following command:

[root@localhost ~]# ip netns exec ns0 ip link set lo up //bring lo up

[root@localhost ~]# ip netns exec ns0 ip addr //lo is now UP and has its addresses

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

[root@localhost ~]# ip netns exec ns0 ping 127.0.0.1 //now the ping succeeds

PING 127.0.0.1 (127.0.0.1) 56(84) bytes of data.

64 bytes from 127.0.0.1: icmp_seq=1 ttl=64 time=0.026 ms

64 bytes from 127.0.0.1: icmp_seq=2 ttl=64 time=0.048 ms

^C

--- 127.0.0.1 ping statistics ---

2 packets transmitted, 2 received, 0% packet loss, time 61ms

rtt min/avg/max/mdev = 0.026/0.037/0.048/0.011 ms
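The two steps above (bring lo up, then ping it) can be wrapped in a small function. A sketch assuming bash, root privileges and the iputils ping shipped with the distribution; netns_lo_up is a hypothetical name:

```bash
# netns_lo_up NS: bring up the loopback interface in namespace NS,
# then check that 127.0.0.1 answers inside that namespace.
netns_lo_up() {
    local ns=$1
    ip netns exec "$ns" ip link set lo up || return 1
    # One echo request with a 1-second timeout; the exit status reports success
    ip netns exec "$ns" ping -c 1 -W 1 127.0.0.1 >/dev/null
}
```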

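Moving devices

Devices such as veth can be moved between Network Namespaces. Because a device belongs to exactly one Network Namespace at a time, once it is moved it is no longer visible in the namespace it came from.

veth devices can be moved, whereas many other devices (such as lo, vxlan, ppp and bridge) cannot.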

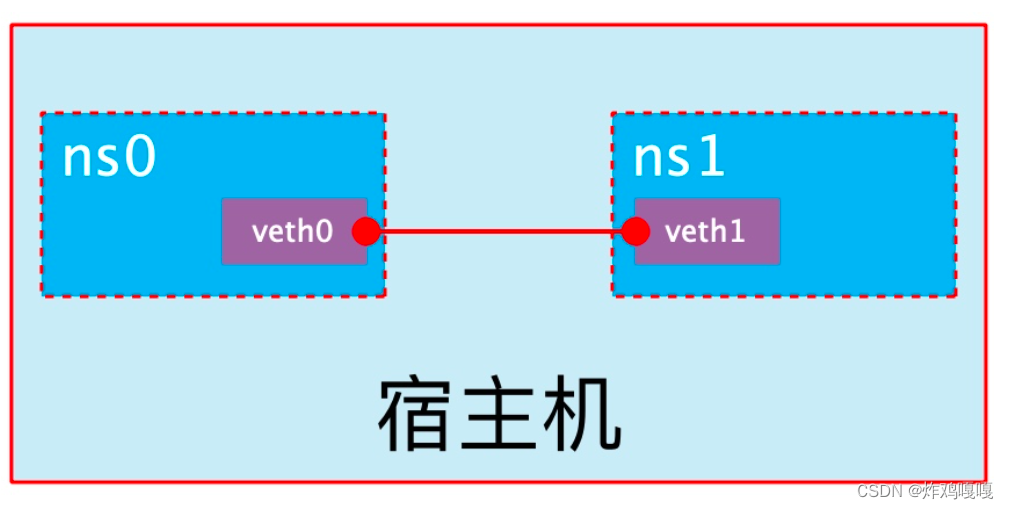

veth pair

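veth pair, short for Virtual Ethernet Pair, is a pair of connected ports: every packet that enters one end comes out of the other, and vice versa.

veth pairs exist so that different Network Namespaces can communicate directly: a veth pair connects two Network Namespaces to each other.

Creating a veth pair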

[root@localhost ~]# ip link add type veth

[root@localhost ~]# ip a

10: veth0@veth1: <BROADCAST,MULTICAST,M-DOWN> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether b6:c4:97:9b:0d:06 brd ff:ff:ff:ff:ff:ff

11: veth1@veth0: <BROADCAST,MULTICAST,M-DOWN> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 46:a3:da:cb:c9:87 brd ff:ff:ff:ff:ff:ff

The system now has a new veth pair linking the two virtual interfaces veth0 and veth1; both ends are still down.
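ip link add type veth lets the kernel choose the names veth0 and veth1. iproute2 also accepts explicit names through peer name. A sketch assuming bash and root privileges; make_veth is a hypothetical helper:

```bash
# make_veth NAME_A NAME_B: create a veth pair with explicitly chosen end names.
# Refuses to run if NAME_A is already in use in the current namespace.
make_veth() {
    local a=$1 b=$2
    if ip link show "$a" >/dev/null 2>&1; then
        echo "interface $a already exists" >&2
        return 1
    fi
    ip link add "$a" type veth peer name "$b"
}
```

Both ends start in the DOWN state, just like the pair created above.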

Communication between Network Namespaces

Now let's use the veth pair to connect two different Network Namespaces. We already created ns0; next, create another Network Namespace named ns1:

#Create a new namespace named ns1

[root@localhost ~]# ip netns add ns1

[root@localhost ~]# ip netns list

ns1

ns0

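Next, move veth0 into ns0 and veth1 into ns1.

Separate namespaces, like separate containers, cannot reach each other by default; placing one end of the veth pair in each of them links the two.

[root@localhost ~]# ip link set veth0 netns ns0 //move veth0 into ns0

[root@localhost ~]# ip link set veth1 netns ns1 //move veth1 into ns1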

Then assign an IP address to each end of the veth pair and bring them up:


[root@localhost ~]# ip netns exec ns0 ip link set veth0 up //bring veth0 up

[root@localhost ~]# ip netns exec ns0 ip addr add 1.1.1.1/24 dev veth0 //assign 1.1.1.1/24 to veth0

[root@localhost ~]# ip netns exec ns1 ip link set lo up //bring up ns1's loopback interface

[root@localhost ~]# ip netns exec ns1 ip link set veth1 up //bring veth1 up

[root@localhost ~]# ip netns exec ns1 ip addr add 1.1.1.2/24 dev veth1 //assign 1.1.1.2/24 to veth1

Check the state of the veth pair:

[root@localhost ~]# ip netns exec ns0 ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

10: veth0@if11: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether b6:c4:97:9b:0d:06 brd ff:ff:ff:ff:ff:ff link-netns ns1

inet 1.1.1.1/24 scope global veth0 //veth0 now has 1.1.1.1/24

valid_lft forever preferred_lft forever

inet6 fe80::b4c4:97ff:fe9b:d06/64 scope link

valid_lft forever preferred_lft forever

[root@localhost ~]# ip netns exec ns1 ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

11: veth1@if10: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether 46:a3:da:cb:c9:87 brd ff:ff:ff:ff:ff:ff link-netns ns0

inet 1.1.1.2/24 scope global veth1 //veth1 now has 1.1.1.2/24

valid_lft forever preferred_lft forever

inet6 fe80::44a3:daff:fecb:c987/64 scope link

valid_lft forever preferred_lft forever

The veth pair is up and each end has its own IP address. To test it, ping the ns0 address from ns1:

[root@localhost ~]# ip netns exec ns1 ping 1.1.1.1

PING 1.1.1.1 (1.1.1.1) 56(84) bytes of data.

64 bytes from 1.1.1.1: icmp_seq=1 ttl=64 time=0.045 ms

64 bytes from 1.1.1.1: icmp_seq=2 ttl=64 time=0.051 ms

^C

--- 1.1.1.1 ping statistics ---

2 packets transmitted, 2 received, 0% packet loss, time 38ms

rtt min/avg/max/mdev = 0.045/0.048/0.051/0.003 ms

The veth pair lets the two Network Namespaces exchange traffic.
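The whole procedure can be collected into one function. A minimal sketch, assuming bash, root privileges, and that both namespaces already exist; connect_netns and its default interface names are hypothetical:

```bash
# connect_netns NS_A NS_B ADDR_A ADDR_B [VETH_A] [VETH_B]
# Hypothetical helper that repeats the manual steps above: create a veth pair,
# move one end into each namespace, assign the addresses, bring everything up.
# Requires root; both namespaces must already exist.
connect_netns() {
    local ns_a=$1 ns_b=$2 addr_a=$3 addr_b=$4
    local veth_a=${5:-veth0} veth_b=${6:-veth1}
    local ns

    # 1. Create the pair in the current (root) namespace
    ip link add "$veth_a" type veth peer name "$veth_b" || return 1

    # 2. Move each end into its namespace
    ip link set "$veth_a" netns "$ns_a" || return 1
    ip link set "$veth_b" netns "$ns_b" || return 1

    # 3. Assign an address to each end
    ip netns exec "$ns_a" ip addr add "$addr_a" dev "$veth_a" || return 1
    ip netns exec "$ns_b" ip addr add "$addr_b" dev "$veth_b" || return 1

    # 4. Bring up loopback in both namespaces, then the veth ends
    for ns in "$ns_a" "$ns_b"; do
        ip netns exec "$ns" ip link set lo up || return 1
    done
    ip netns exec "$ns_a" ip link set "$veth_a" up || return 1
    ip netns exec "$ns_b" ip link set "$veth_b" up
}
```

For example, connect_netns ns0 ns1 1.1.1.1/24 1.1.1.2/24 followed by ip netns exec ns1 ping -c 2 1.1.1.1 should reproduce the test above. The function stops at the first failing step without cleaning up, so a partial failure can leave a veth pair behind; deleting either end removes the whole pair.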

Renaming a veth device

#Rename the interface in ns0

[root@localhost ~]# ip netns exec ns0 ip link set veth0 down //the interface must be down before it can be renamed

[root@localhost ~]# ip netns exec ns0 ip link set dev veth0 name eth0

[root@localhost ~]# ip netns exec ns0 ifconfig -a

eth0: flags=4098<BROADCAST,MULTICAST> mtu 1500

inet 1.1.1.1 netmask 255.255.255.0 broadcast 0.0.0.0

ether b6:c4:97:9b:0d:06 txqueuelen 1000 (Ethernet)

RX packets 17 bytes 1286 (1.2 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 17 bytes 1286 (1.2 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10<host>

loop txqueuelen 1000 (Local Loopback)

RX packets 4 bytes 336 (336.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 4 bytes 336 (336.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@localhost ~]# ip netns exec ns0 ip link set eth0 up

#Rename the interface in ns1

[root@localhost ~]# ip netns exec ns1 ip link set veth1 down

[root@localhost ~]# ip netns exec ns1 ip link set dev veth1 name eth1

[root@localhost ~]# ip netns exec ns1 ifconfig -a

eth1: flags=4098<BROADCAST,MULTICAST> mtu 1500

inet 1.1.1.2 netmask 255.255.255.0 broadcast 0.0.0.0

ether 46:a3:da:cb:c9:87 txqueuelen 1000 (Ethernet)

RX packets 26 bytes 2012 (1.9 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 19 bytes 1466 (1.4 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10<host>

loop txqueuelen 1000 (Local Loopback)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@localhost ~]# ip netns exec ns1 ip link set eth1 up
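The down, rename, up sequence used in both namespaces can be expressed as one function. A sketch assuming bash and root privileges; rename_in_netns is a hypothetical name:

```bash
# rename_in_netns NS OLD NEW: rename interface OLD to NEW inside namespace NS.
# As noted above, the interface must be down while it is renamed.
rename_in_netns() {
    local ns=$1 old=$2 new=$3
    ip netns exec "$ns" ip link set "$old" down || return 1
    ip netns exec "$ns" ip link set dev "$old" name "$new" || return 1
    ip netns exec "$ns" ip link set "$new" up
}
```

With it, the two renames above become rename_in_netns ns0 veth0 eth0 and rename_in_netns ns1 veth1 eth1.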

Configuring the four network modes

bridge mode

#Pull a busybox image for testing

[root@localhost ~]# docker pull busybox

Using default tag: latest

latest: Pulling from library/busybox

5cc84ad355aa: Pull complete

Digest: sha256:5acba83a746c7608ed544dc1533b87c737a0b0fb730301639a0179f9344b1678

Status: Downloaded newer image for busybox:latest

docker.io/library/busybox:latest

[root@localhost ~]# docker run -it --name b1 --rm busybox

/ # ifconfig

eth0 Link encap:Ethernet HWaddr 02:42:AC:11:00:02

inet addr:172.17.0.2 Bcast:172.17.255.255 Mask:255.255.0.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:16 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:2050 (2.0 KiB) TX bytes:0 (0.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)


[root@localhost ~]# docker container ls -a //list all containers, including stopped ones

# Passing --network bridge when creating a container is the same as omitting --network: bridge is the default mode

[root@localhost ~]# docker run -it --name b2 --network bridge --rm busybox

/ # ifconfig

eth0 Link encap:Ethernet HWaddr 02:42:AC:11:00:02

inet addr:172.17.0.2 Bcast:172.17.255.255 Mask:255.255.0.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:15 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:1949 (1.9 KiB) TX bytes:0 (0.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

none mode

#This mode isolates the container completely: it has only a loopback interface and can reach nothing but itself

[root@localhost ~]# docker run -it --network none --rm busybox

/ # ifconfig -a

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

container mode

Start the first container:

[root@localhost ~]# docker run -it --name b1 --rm busybox

/ # ifconfig -a

eth0 Link encap:Ethernet HWaddr 02:42:AC:11:00:02

inet addr:172.17.0.2 Bcast:172.17.255.255 Mask:255.255.0.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:23 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:2762 (2.6 KiB) TX bytes:0 (0.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

Start the second container:

[root@localhost ~]# docker run -it --name b2 --rm busybox

/ # ifconfig -a

eth0 Link encap:Ethernet HWaddr 02:42:AC:11:00:03

inet addr:172.17.0.3 Bcast:172.17.255.255 Mask:255.255.0.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:21 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:2591 (2.5 KiB) TX bytes:0 (0.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

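The container b2 has the IP address 172.17.0.3, which differs from the first container's 172.17.0.2, so the two do not share a network. If we start the second container differently (after exiting the previous b2), b2 gets the same IP address as b1: the two share the network, but not the file system.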

[root@localhost ~]# docker run -it --name b2 --rm --network container:b1 busybox

/ # ifconfig

eth0 Link encap:Ethernet HWaddr 02:42:AC:11:00:02

inet addr:172.17.0.2 Bcast:172.17.255.255 Mask:255.255.0.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:29 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:3275 (3.1 KiB) TX bytes:0 (0.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

Now create a directory in container b1:

/ # mkdir /tmp/data

/ # ls /tmp/

data

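Checking /tmp in container b2 shows that the directory is not there: the file systems remain isolated, and only the network is shared.

Deploy a site in container b2: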

/ # echo 'hello world' > /tmp/index.html

/ # ls /tmp/

index.html

/ # netstat -antl

Active Internet connections (servers and established)

Proto Recv-Q Send-Q Local Address Foreign Address State

/ # /bin/httpd -h /tmp/

/ # netstat -antl

Active Internet connections (servers and established)

Proto Recv-Q Send-Q Local Address Foreign Address State

tcp 0 0 :::80 :::* LISTEN

From container b1, access the site through the loopback address:

/ # wget -O - -q 127.0.0.1:80

hello world

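So in container mode, two containers relate to each other like two processes on the same host: they share the network stack, including 127.0.0.1, but not the file system.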
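The shared-network behavior can also be checked non-interactively. A sketch assuming bash, Docker and the busybox image; unlike the interactive steps above, it starts b1 detached with sleep 3600 so that b1 keeps running, and show_shared_ip is a hypothetical name:

```bash
# show_shared_ip: start b1 in the background, then print eth0's addresses as seen
# from b1 and from a throwaway container that joins b1's network namespace.
# With --network container:b1 both listings should show the same IP address.
show_shared_ip() {
    docker run -d --rm --name b1 busybox sleep 3600 >/dev/null || return 1
    echo "== b1 =="
    docker exec b1 ip addr show eth0
    echo "== container sharing b1's network =="
    docker run --rm --network container:b1 busybox ip addr show eth0
    docker rm -f b1 >/dev/null   # stop and remove b1 again
}
```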

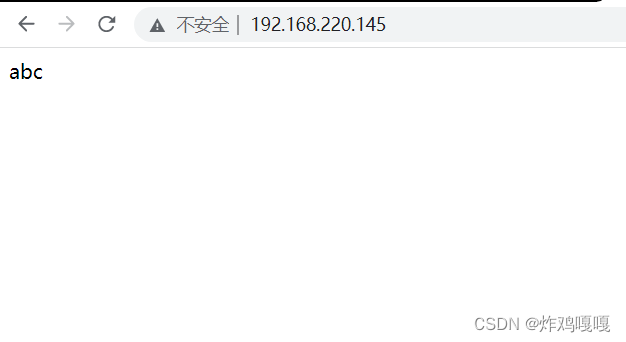

host mode

Specify host as the network mode when starting the container:

[root@localhost ~]# docker run -it --name b1 --rm --network host busybox

/ # ifconfig

ens160 Link encap:Ethernet HWaddr 00:0C:29:88:45:00

inet addr:192.168.220.145 Bcast:192.168.220.255 Mask:255.255.255.0

inet6 addr: fe80::25e8:73ad:fbe9:f338/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:326653 errors:0 dropped:0 overruns:0 frame:0

TX packets:525616 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:25930635 (24.7 MiB) TX bytes:126028332 (120.1 MiB)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:98 errors:0 dropped:0 overruns:0 frame:0

TX packets:98 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:7590 (7.4 KiB) TX bytes:7590 (7.4 KiB)

virbr0 Link encap:Ethernet HWaddr 52:54:00:67:69:5A

UP BROADCAST MULTICAST MTU:1500 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

If we now start an HTTP server in this container, the site can be opened in a browser directly at the host's IP address.

[root@localhost ~]# docker run -it --name b1 --rm --network host busybox

/ # mkdir test

/ # echo "abc" > /test/index.html //create a test file

/ # /bin/httpd -h /test/ //start httpd, listening on port 80

/ # netstat -antl

tcp 0 0 :::80 :::* LISTEN

#In a new terminal on the host, stop the firewall and switch SELinux to permissive mode

[root@localhost ~]# systemctl stop firewalld.service

[root@localhost ~]# setenforce 0

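Test: open the host's IP address (192.168.220.145 in this example) in a browser.

Common container operations

Check the container's hostname. By default it is the container's short ID:

b732caf0f051

Set the hostname when the container starts: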

[root@localhost ~]# docker run -it --name b1 --network bridge --hostname kurumi --rm busybox

/ # hostname

kurumi

/ # cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.17.0.2 kurumi

/ # cat /etc/resolv.conf

# Generated by NetworkManager

search localdomain

nameserver 192.168.220.2

Specify the DNS server the container should use:

[root@localhost ~]# docker run -it --rm --hostname kurumi --dns 114.114.114.114 busybox

/ # cat /etc/resolv.conf

search localdomain

nameserver 114.114.114.114

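Manually add a hostname-to-IP mapping to the container's /etc/hosts:

Start node1 first. After node2 is created, ping node1 from it; if that works, all is well. If not, open a new terminal and ping node1 or node2 from the host; if that still fails, roll the VM back to a snapshot.

#On node1

[root@localhost ~]# docker run -it --rm --hostname node1 --network bridge busybox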

/ # mkdir data

/ # echo "abc" > /data/index.html

/ # cat /data/index.html

abc

/ # /bin/httpd -h /data/

/ # netstat -antl

Active Internet connections (servers and established)

Proto Recv-Q Send-Q Local Address Foreign Address State

tcp 0 0 :::80 :::* LISTEN

#On node2

[root@localhost ~]# docker run -it --rm --hostname node2 --add-host node1:172.17.0.2 --network bridge busybox

/ # ping node1

PING node1 (172.17.0.2): 56 data bytes

64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.109 ms

64 bytes from 172.17.0.2: seq=1 ttl=64 time=0.210 ms

^C

--- node1 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.109/0.159/0.210 ms

/ # wget http://node1

Connecting to node1 (172.17.0.2:80)

saving to 'index.html'

index.html 100% |****************************| 4 0:00:00 ETA

'index.html' saved
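Instead of typing node1's address by hand, a script can look it up with docker inspect. A sketch assuming bash and that node1 was started with --name node1 (the original only sets --hostname); run_with_host_entry is a hypothetical helper, and .NetworkSettings.IPAddress is the field holding the container's address on the default bridge network:

```bash
# run_with_host_entry TARGET: start an interactive busybox container whose /etc/hosts
# maps the name TARGET to TARGET's current IP address on the default bridge.
# Assumes TARGET is a running container attached to the default bridge network.
run_with_host_entry() {
    local target=$1 addr
    addr=$(docker inspect -f '{{.NetworkSettings.IPAddress}}' "$target") || return 1
    if [ -z "$addr" ]; then
        echo "no default-bridge IP found for $target" >&2
        return 1
    fi
    docker run -it --rm --add-host "$target:$addr" --network bridge busybox
}
```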

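Publishing container ports

docker run has a -p option that maps an application port inside the container to a port on the host, so external hosts can reach the application by connecting to that host port.

-p can be given multiple times. For a mapping to be useful, the container must actually be listening on the published port.

Formats of the -p option:

-p <containerPort>: map the container port to a dynamic port on all host addresses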

[root@localhost ~]# docker run -d --rm --name web -p 80 httpd

0c767fc66426d42dd87636d64b7234066ddc2409675ff955a7a42e7987be690f

[root@localhost ~]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

0c767fc66426 httpd "httpd-foreground" 4 seconds ago Up 3 seconds 0.0.0.0:49154->80/tcp, :::49154->80/tcp web

-p <hostPort>:<containerPort>: map the container port to the given host port

[root@localhost ~]# docker run -d --rm --name web -p 80:80 httpd

af5356fa1082fdf2496f42f8f93a3093c74f441452642dd8df7a6a1f0ae9f09c

[root@localhost ~]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

af5356fa1082 httpd "httpd-foreground" 4 seconds ago Up 3 seconds 0.0.0.0:80->80/tcp, :::80->80/tcp web

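-p <ip>::<containerPort>: map the container port to a dynamic port on the given host IP

#Useful when the host has several IP addresses and access must go through a specific one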

[root@localhost ~]# ip addr add 192.168.220.254/24 dev ens160

[root@localhost ~]# ip addr show ens160

2: ens160: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:88:45:00 brd ff:ff:ff:ff:ff:ff

inet 192.168.220.145/24 brd 192.168.220.255 scope global dynamic noprefixroute ens160

valid_lft 1292sec preferred_lft 1292sec

inet 192.168.220.254/24 scope global secondary ens160

valid_lft forever preferred_lft forever

inet6 fe80::25e8:73ad:fbe9:f338/64 scope link noprefixroute

valid_lft forever preferred_lft forever

[root@localhost ~]# docker run -d --name web --rm -p 192.168.220.254::80 httpd

cfcf972bf3680c5da4f5b40447b2b923a03599737aaaf2543aa973515b999b81

[root@localhost ~]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

cfcf972bf368 httpd "httpd-foreground" 3 seconds ago Up 2 seconds 192.168.220.254:49153->80/tcp web

-p <ip>:<hostPort>:<containerPort>: map the container port to the given port on the given host IP

[root@localhost ~]# docker run --name web -d --rm -p 192.168.220.254:8080:80 httpd

770b52056347843bf31deea38933fb37fef611d1541e34388d364ef3bd419af0

[root@localhost ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 0.0.0.0:111 0.0.0.0:*

LISTEN 0 128 192.168.220.254:8080 0.0.0.0:*

LISTEN 0 32 192.168.122.1:53 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 5 127.0.0.1:631 0.0.0.0:*

LISTEN 0 128 [::]:111 [::]:*

LISTEN 0 128 [::]:22 [::]:*

LISTEN 0 5 [::1]:631 [::]:*

[root@localhost ~]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

770b52056347 httpd "httpd-foreground" 23 seconds ago Up 22 seconds 192.168.220.254:8080->80/tcp web
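The four -p forms differ only in which parts are filled in. A small bash sketch that builds the argument from its parts; publish_spec is a hypothetical helper, and an empty string stands for an omitted part:

```bash
# publish_spec HOST_IP HOST_PORT CONTAINER_PORT
# Build the value for docker run -p; pass '' for HOST_IP or HOST_PORT to omit it.
publish_spec() {
    local host_ip=$1 host_port=$2 container_port=$3
    if [ -n "$host_ip" ]; then
        # <ip>:<hostPort>:<containerPort>, or <ip>::<containerPort> when HOST_PORT is empty
        echo "$host_ip:$host_port:$container_port"
    elif [ -n "$host_port" ]; then
        echo "$host_port:$container_port"      # <hostPort>:<containerPort>
    else
        echo "$container_port"                 # <containerPort>, dynamic host port
    fi
}
```

For example, docker run -d --rm --name web -p "$(publish_spec 192.168.220.254 8080 80)" httpd reproduces the last example above, and docker port web lists the resulting mapping.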

Customizing the network properties of the docker0 bridge

Official documentation: https://docs.docker.com/network/bridge/

[root@localhost ~]# ip addr show docker0

5: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:e2:78:45:7e brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

[root@localhost ~]# vim /etc/docker/daemon.json

[root@localhost ~]# cat /etc/docker/daemon.json

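{

"registry-mirrors": ["https://docker.mirrors.ustc.edu.cn/"],

"bip": "1.1.1.1/24"

}

#"bip" sets docker0's IP address and prefix (here 1.1.1.1/24). daemon.json is plain JSON, so the file itself must not contain comments

[root@localhost ~]# systemctl restart docker //restart the Docker service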

[root@localhost ~]# ip addr show docker0

5: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:e2:78:45:7e brd ff:ff:ff:ff:ff:ff

inet 1.1.1.1/24 brd 1.1.1.255 scope global docker0

valid_lft forever preferred_lft forever

#Restarting Docker also stops running containers. With --restart=always, the container is started again when Docker restarts

[root@localhost ~]# docker run -d --name web --restart=always httpd

4cb3c839461eaed1b269242b28a55b968de1c13933f129aa6ed08980fd55f411

[root@localhost ~]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

4cb3c839461e httpd "httpd-foreground" 12 seconds ago Up 10 seconds 80/tcp web

[root@localhost ~]# systemctl restart docker

[root@localhost ~]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

4cb3c839461e httpd "httpd-foreground" 27 seconds ago Up 4 seconds 80/tcp web

#Once docker0's IP has been set, removing the bip entry does not revert it (as shown below); to change it, set bip again

[root@localhost ~]# cat /etc/docker/daemon.json

{

"registry-mirrors": ["https://docker.mirrors.ustc.edu.cn/"]

}

[root@localhost ~]# systemctl restart docker

[root@localhost ~]# ip addr show docker0

5: docker0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:e2:78:45:7e brd ff:ff:ff:ff:ff:ff

inet 1.1.1.1/24 brd 1.1.1.255 scope global docker0

valid_lft forever preferred_lft forever

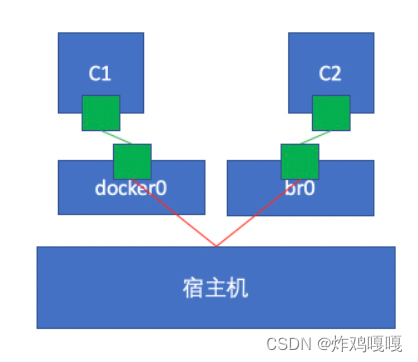

Creating a custom bridge

[root@localhost ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

0cfebacda74b bridge bridge local

322c4224124b host host local

c69dc8eed0ec none null local

[root@localhost ~]# docker network create -d bridge --subnet "1.1.1.0/24" --gateway "1.1.1.1" br0

e75fc1f15e4c47d7e75f0d2c407291cdcbe695394ce64ecdc368138dd6f07f79

[root@localhost ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

e75fc1f15e4c br0 bridge local

0cfebacda74b bridge bridge local

322c4224124b host host local

c69dc8eed0ec none null local
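Creating a network whose name already exists fails, so a setup script can check with docker network inspect first. A sketch assuming bash; ensure_bridge_net is a hypothetical helper. docker network create will likely also reject a subnet that overlaps an existing network, for example docker0 if it was moved to 1.1.1.1/24 as shown earlier.

```bash
# ensure_bridge_net NAME SUBNET GATEWAY: create a user-defined bridge network
# unless a network with that name already exists.
ensure_bridge_net() {
    local name=$1 subnet=$2 gateway=$3
    if docker network inspect "$name" >/dev/null 2>&1; then
        echo "network $name already exists"
        return 0
    fi
    docker network create -d bridge --subnet "$subnet" --gateway "$gateway" "$name"
}
```

Here, ensure_bridge_net br0 1.1.1.0/24 1.1.1.1 is equivalent to the docker network create command above.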

Create a container on the new custom bridge:

[root@localhost ~]# docker run -it --name b1 --rm --network br0 busybox

/ # ifconfig

eth0 Link encap:Ethernet HWaddr 02:42:01:01:01:02

inet addr:1.1.1.2 Bcast:1.1.1.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:36 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:4921 (4.8 KiB) TX bytes:0 (0.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

Create a container on the default bridge:

[root@localhost ~]# docker run -it --rm --name b2 busybox

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

9: eth0@if10: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

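The two containers are on different subnets. To let them communicate, give one of them an extra interface on the other network:

#In another terminal, attach b2 to br0, which adds an interface on br0's subnet

[root@localhost ~]# docker network connect br0 b2 //the added interface is persistent: it remains after the container, the Docker service or the host restarts (for containers not started with --rm)

#In b2

/ # ip a //b2 now also has an address in br0's 1.1.1.0/24 subnet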

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

9: eth0@if10: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

11: eth1@if12: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 02:42:01:01:01:03 brd ff:ff:ff:ff:ff:ff

inet 1.1.1.3/24 brd 1.1.1.255 scope global eth1

valid_lft forever preferred_lft forever

/ # ping 1.1.1.2 //ping b1: it answers

PING 1.1.1.2 (1.1.1.2): 56 data bytes

64 bytes from 1.1.1.2: seq=0 ttl=64 time=0.140 ms

64 bytes from 1.1.1.2: seq=1 ttl=64 time=0.109 ms

64 bytes from 1.1.1.2: seq=2 ttl=64 time=0.295 ms

64 bytes from 1.1.1.2: seq=3 ttl=64 time=0.082 ms

64 bytes from 1.1.1.2: seq=4 ttl=64 time=0.067 ms

#In b1

/ # ping 172.17.0.2 //pinging b2's 172.17.0.2 from b1 fails: b1 is attached only to br0, and Docker isolates different bridge networks from each other

PING 172.17.0.2 (172.17.0.2): 56 data bytes

^C

--- 172.17.0.2 ping statistics ---

13 packets transmitted, 0 packets received, 100% packet loss
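To make the ping from b1 succeed as well, b1 can be attached to b2's network in the same way. Alternatively, b1 can reach b2 at its br0 address 1.1.1.3 without any change. A sketch assuming bash and the running containers b1 and b2 from above; connect_back is a hypothetical name:

```bash
# connect_back CONTAINER NETWORK TARGET_IP: attach CONTAINER to NETWORK, then
# ping TARGET_IP from inside CONTAINER to confirm the new path works.
connect_back() {
    local container=$1 network=$2 target=$3
    docker network connect "$network" "$container" || return 1
    docker exec "$container" ping -c 3 "$target"
}
```

For this example, connect_back b1 bridge 172.17.0.2 gives b1 an interface on the default bridge, after which 172.17.0.2 is on one of b1's own subnets.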