CanalAdmin Deployment Guide

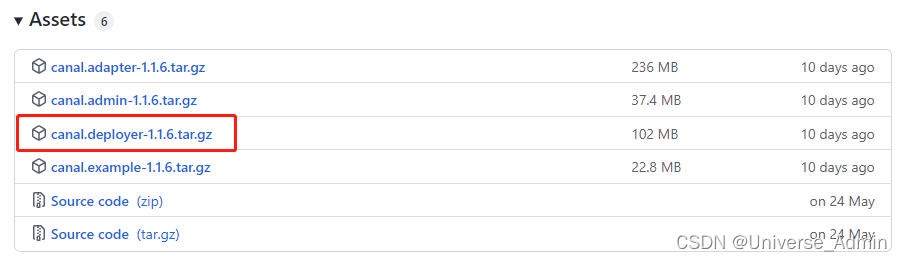

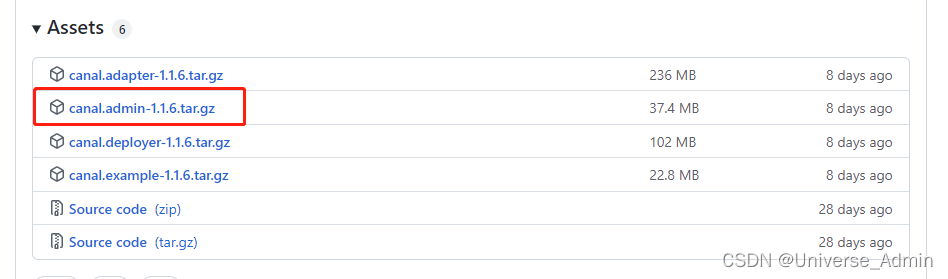

1. Download

Download canal.admin-1.1.6.tar.gz from the Releases page of the alibaba/canal repository on GitHub.

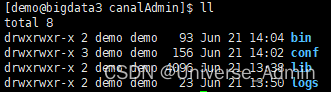

2. Extract

mkdir canalAdmin

tar zxvf canal.admin-1.1.6.tar.gz -C canalAdmin

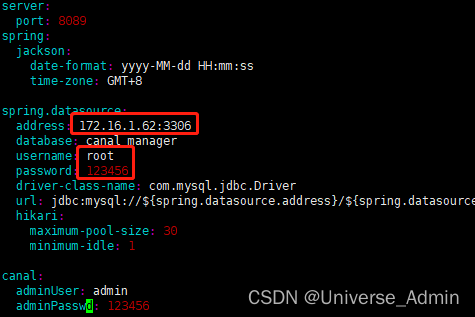

3. Edit the configuration

vim canalAdmin/conf/application.yml

Configure the database connection: set address, username, and password to the values of the database you actually use; database must stay canal_manager.

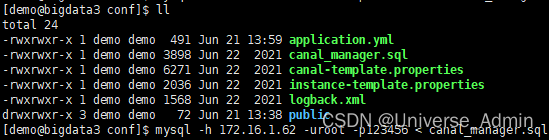
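
As a quick sanity check after editing, the database name can be verified from the shell. This is a minimal sketch: the helper name check_canal_admin_db is hypothetical, and it assumes the setting appears in application.yml as a plain "database: canal_manager" line.

```shell
# Hypothetical helper: succeeds only if the given application.yml
# contains a line of the form "database: canal_manager".
check_canal_admin_db() {
  grep -Eq '^[[:space:]]*database:[[:space:]]*canal_manager[[:space:]]*$' "$1"
}

# Example (path taken from the vim command above):
#   check_canal_admin_db canalAdmin/conf/application.yml && echo "database OK"
```

The check only looks at the database name; address, username, and password still have to be compared by eye against the real database.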

4. Initialize the metadata database

mysql -h 172.16.1.186 -uroot -p123456 < canalAdmin/conf/canal_manager.sql

If no mysql client is installed locally, copy the contents of canal_manager.sql and run them from any other MySQL client.

5. Start Canal Admin

sh canalAdmin/bin/startup.sh

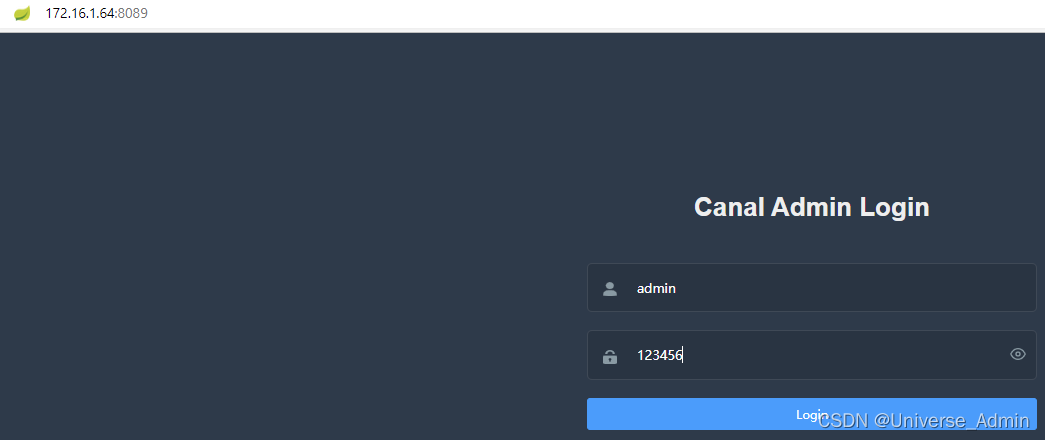

6. Log in to the web page

The default port is 8089, the user name is admin, and the default password is 123456.

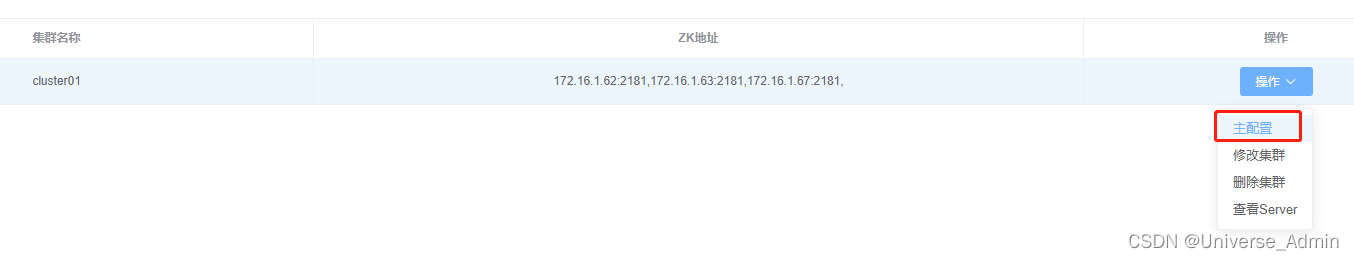
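
Before opening the login page in a browser, it can help to confirm that the port is reachable. A minimal sketch, assuming bash and the coreutils timeout command are installed; the function name port_open is hypothetical.

```shell
# Hypothetical helper: returns 0 if a TCP connection to host:port
# can be opened within 3 seconds, non-zero otherwise.
# Relies on bash's /dev/tcp pseudo-device, hence the explicit "bash -c".
port_open() {
  timeout 3 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# Example (address as used for canal.admin.manager in step 8):
#   port_open 172.16.1.4 8089 && echo "Canal Admin is listening"
```

The same helper can be pointed at the canalServer ports (11110, 11111, 11112) once the server is started in step 11.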

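7. Cluster Management -> New Cluster

Cluster name: any name you choose

ZK address: the address of the ZooKeeper cluster that is already installed

8. Add the main configuration parameters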

#################################################

######### common argument #############

#################################################

canal.ip =

canal.register.ip =

canal.port = 11111

canal.metrics.pull.port = 11112

# OS user name on the Linux host where canalServer is installed, and the MD5-hashed password

canal.user = demo

canal.passwd = e369853df766fa44e1ed0ff613f563bd

# IP:port of the host where canalAdmin is installed

canal.admin.manager = 172.16.1.4:8089

# Default is 11110; usually no need to change

canal.admin.port = 11110

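# User name and password hash for the canalAdmin front-end login (the 40-hex-digit value below is in MySQL PASSWORD() format, SHA-1 applied twice, without the leading *; it is not MD5)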

canal.admin.user = admin

canal.admin.passwd = 6BB4837EB74329105EE4568DDA7DC67ED2CA2AD9

canal.admin.register.auto = true

# Must match the cluster name created under [Cluster Management]

canal.admin.register.cluster = cluster01

# Must match the Server name created under [Server Management]

canal.admin.register.name = bigdata5

# ZooKeeper cluster addresses for the deployment that writes to Kafka

canal.zkServers = bigdata1:2181,bigdata2:2181,bigdata5:2181

# flush data to zk

canal.zookeeper.flush.period = 1000

canal.withoutNetty = false

# tcp, kafka, rocketMQ, rabbitMQ, pulsarMQ

canal.serverMode = kafka

# flush meta cursor/parse position to file

canal.file.data.dir = ${canal.conf.dir}

canal.file.flush.period = 1000

## memory store RingBuffer size, should be Math.pow(2,n)

canal.instance.memory.buffer.size = 16384

## memory store RingBuffer used memory unit size , default 1kb

canal.instance.memory.buffer.memunit = 1024

## memory store gets mode used MEMSIZE or ITEMSIZE

canal.instance.memory.batch.mode = MEMSIZE

canal.instance.memory.rawEntry = true

## detecting config

canal.instance.detecting.enable = false

#canal.instance.detecting.sql = insert into retl.xdual values(1,now()) on duplicate key update x=now()

canal.instance.detecting.sql = select 1

canal.instance.detecting.interval.time = 3

canal.instance.detecting.retry.threshold = 3

canal.instance.detecting.heartbeatHaEnable = false

# support maximum transaction size, more than the size of the transaction will be cut into multiple transactions delivery

canal.instance.transaction.size = 1024

# mysql fallback connected to new master should fallback times

canal.instance.fallbackIntervalInSeconds = 60

# network config

canal.instance.network.receiveBufferSize = 16384

canal.instance.network.sendBufferSize = 16384

canal.instance.network.soTimeout = 30

# binlog filter config

canal.instance.filter.druid.ddl = true

canal.instance.filter.query.dcl = false

canal.instance.filter.query.dml = false

canal.instance.filter.query.ddl = false

canal.instance.filter.table.error = false

canal.instance.filter.rows = false

canal.instance.filter.transaction.entry = false

canal.instance.filter.dml.insert = false

canal.instance.filter.dml.update = false

canal.instance.filter.dml.delete = false

# binlog format/image check

canal.instance.binlog.format = ROW,STATEMENT,MIXED

canal.instance.binlog.image = FULL,MINIMAL,NOBLOB

# binlog ddl isolation

canal.instance.get.ddl.isolation = false

# parallel parser config

canal.instance.parser.parallel = true

## concurrent thread number, default 60% available processors, suggest not to exceed Runtime.getRuntime().availableProcessors()

#canal.instance.parser.parallelThreadSize = 16

## disruptor ringbuffer size, must be power of 2

canal.instance.parser.parallelBufferSize = 256

canal.instance.tsdb.enable = true

#canal.instance.tsdb.dir =

# JDBC URL, user name, and password of the database that stores canal's binlog DDL metadata

canal.instance.tsdb.url = jdbc:mysql://172.16.1.62:3306/canal_tsdb

canal.instance.tsdb.dbUsername = root

canal.instance.tsdb.dbPassword = Pw#123456

# dump snapshot interval, default 24 hour

canal.instance.tsdb.snapshot.interval = 24

# purge snapshot expire , default 360 hour(15 days)

canal.instance.tsdb.snapshot.expire = 360

#################################################

######### destinations #############

#################################################

canal.destinations =

# canalServer configuration directory on the Linux host

canal.conf.dir = /opt/canal.deployer-1.1.6/conf

# auto scan instance dir add/remove and start/stop instance

canal.auto.scan = true

canal.auto.scan.interval = 5

# set this value to 'true' means that when binlog pos not found, skip to latest.

# WARN: pls keep 'false' in production env, or if you know what you want.

canal.auto.reset.latest.pos.mode = false

#canal.instance.tsdb.spring.xml = classpath:spring/tsdb/h2-tsdb.xml

canal.instance.tsdb.spring.xml = classpath:spring/tsdb/mysql-tsdb.xml

canal.instance.global.mode = spring

canal.instance.global.lazy = false

canal.instance.global.manager.address = ${canal.admin.manager}

#canal.instance.global.spring.xml = classpath:spring/memory-instance.xml

#canal.instance.global.spring.xml = classpath:spring/file-instance.xml

canal.instance.global.spring.xml = classpath:spring/default-instance.xml

##################################################

######### MQ Properties #############

##################################################

# aliyun ak/sk , support rds/mq

canal.aliyun.accessKey =

canal.aliyun.secretKey =

canal.aliyun.uid=

canal.mq.flatMessage = true

canal.mq.canalBatchSize = 50

canal.mq.canalGetTimeout = 100

# Set this value to "cloud", if you want open message trace feature in aliyun.

canal.mq.accessChannel = local

canal.mq.database.hash = true

canal.mq.send.thread.size = 30

canal.mq.build.thread.size = 8

##################################################

######### Kafka #############

##################################################

# Kafka cluster addresses

kafka.bootstrap.servers = bigdata4:9092,bigdata5:9092,bigdata6:9092

kafka.acks = all

kafka.compression.type = none

kafka.batch.size = 16384

kafka.linger.ms = 1

kafka.max.request.size = 1048576

kafka.buffer.memory = 33554432

kafka.max.in.flight.requests.per.connection = 1

kafka.retries = 0

kafka.kerberos.enable = false

kafka.kerberos.krb5.file = "../conf/kerberos/krb5.conf"

kafka.kerberos.jaas.file = "../conf/kerberos/jaas.conf"

##################################################

######### RocketMQ #############

##################################################

rocketmq.producer.group = test

rocketmq.enable.message.trace = false

rocketmq.customized.trace.topic =

rocketmq.namespace =

rocketmq.namesrv.addr = 127.0.0.1:9876

rocketmq.retry.times.when.send.failed = 0

rocketmq.vip.channel.enabled = false

rocketmq.tag =

##################################################

######### RabbitMQ #############

##################################################

rabbitmq.host =

rabbitmq.virtual.host =

rabbitmq.exchange =

rabbitmq.username =

rabbitmq.password =

rabbitmq.deliveryMode =

##################################################

######### Pulsar #############

##################################################

pulsarmq.serverUrl =

pulsarmq.roleToken =

pulsarmq.topicTenantPrefix =
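
The comments in the configuration above say that canal.instance.memory.buffer.size and canal.instance.parser.parallelBufferSize must be powers of 2. When changing either value, a small check like the following can catch a mistake before the configuration is saved. This is only a sketch; the function name is hypothetical.

```shell
# Hypothetical helper: succeeds if the argument is a positive power of 2.
# A power of 2 has exactly one bit set, so n & (n - 1) is 0.
is_power_of_two() {
  [ "$1" -gt 0 ] && [ $(( $1 & ($1 - 1) )) -eq 0 ]
}

# Example:
#   is_power_of_two 16384 && echo "valid buffer size"
```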

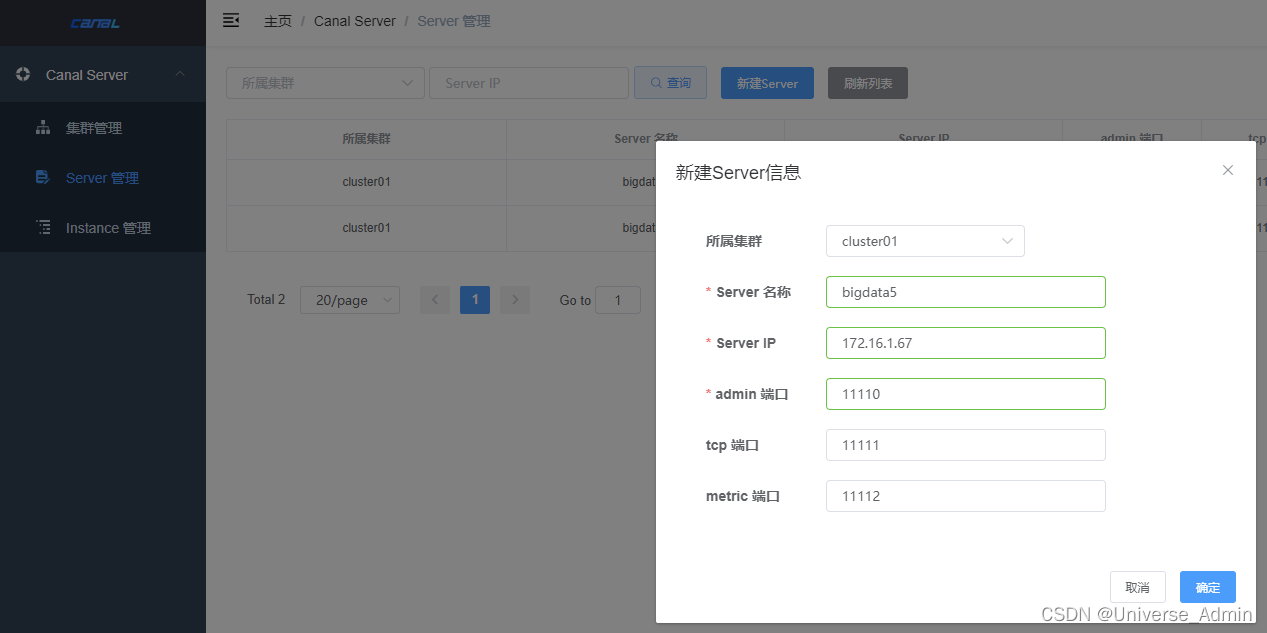

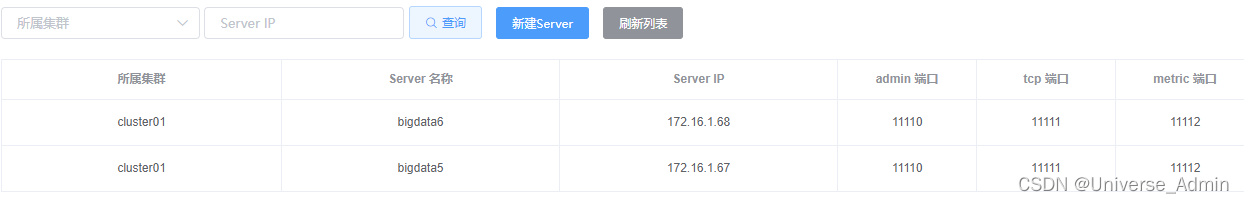
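
The 40-character value used above for canal.admin.passwd has the format of MySQL's PASSWORD() function: SHA-1 applied twice, printed as uppercase hex without the leading *. The sketch below reproduces that format for a plaintext password. The function names are hypothetical, and it assumes sha1sum, cut, and tr from coreutils are available.

```shell
# Hypothetical helper: write the raw bytes of a hex string to stdout.
hex_to_bytes() {
  hex="$1"
  while [ -n "$hex" ]; do
    pair=${hex%"${hex#??}"}   # first two hex digits
    hex=${hex#??}             # remainder
    # Turn the pair into an octal escape and let printf emit that byte.
    printf "\\$(printf '%03o' "0x$pair")"
  done
}

# Hypothetical helper: uppercase hex of SHA1(SHA1(password)).
canal_password_hash() {
  first=$(printf '%s' "$1" | sha1sum | cut -d' ' -f1)
  hex_to_bytes "$first" | sha1sum | cut -d' ' -f1 | tr 'a-f' 'A-F'
}

# Example:
#   canal_password_hash 123456
```

For the plaintext 123456 this yields 6BB4837EB74329105EE4568DDA7DC67ED2CA2AD9, the value shown in the configuration.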

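9. Server Management -> New Server

Cluster: the cluster configured under Cluster Management

Server name: any name you choose

Server IP: IP of the Linux machine where canalServer is installed

admin port: default 11110

tcp port: default 11111

metric port: default 11112

A newly created Server is shown as stopped. Once canalServer is started on the Linux host, its status is detected dynamically and it is associated with this entry.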

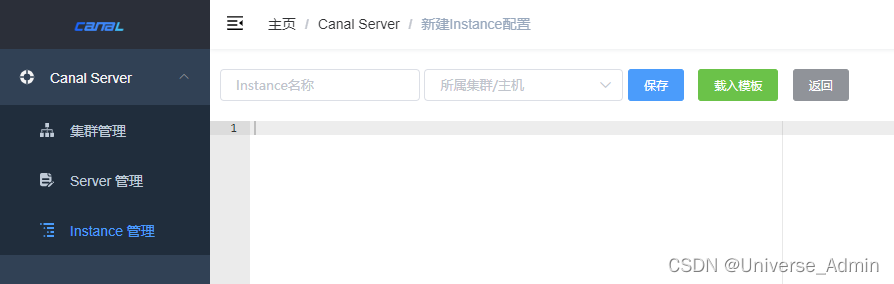

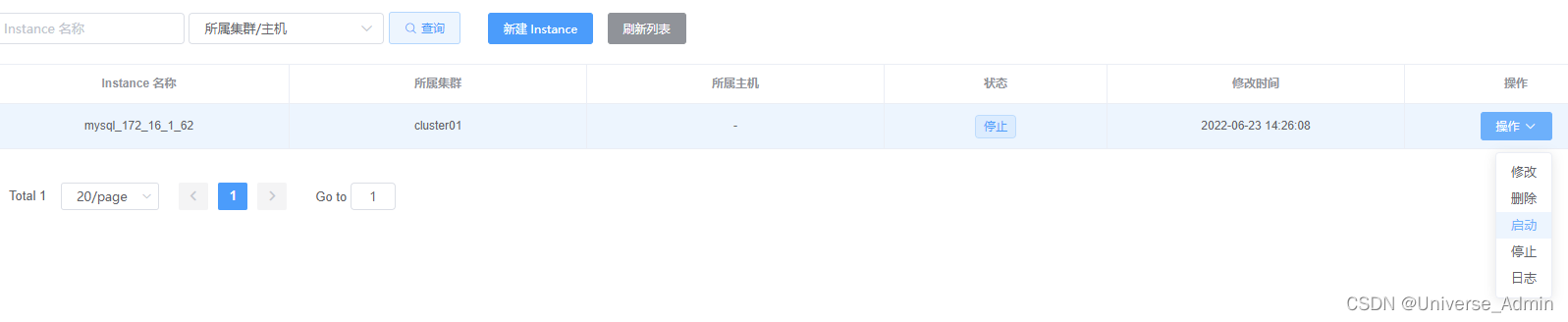

10. Instance Management -> New Instance

#################################################

## mysql serverId , v1.0.26+ will autoGen

# canal.instance.mysql.slaveId=0

# enable gtid use true/false (whether to enable global transaction IDs)

canal.instance.gtidon=false

#canal.instance.master.gtid=

# position info

# Address and port of the database whose binlog is to be synchronized

canal.instance.master.address=172.16.1.62:3306

# Binlog file to start reading from

canal.instance.master.journal.name=mysql-bin.000001

# Offset within the starting binlog file

canal.instance.master.position=0

# Timestamp of the starting binlog position

canal.instance.master.timestamp=1655049600000

# username/password of the database whose binlog is to be synchronized

canal.instance.dbUsername=root

canal.instance.dbPassword=Pw#123456

canal.instance.connectionCharset = UTF-8

# enable druid Decrypt database password

canal.instance.enableDruid=false

#canal.instance.pwdPublicKey=MFwwDQYJKoZIhvcNAQEBBQADSwAwSAJBALK4BUxdDltRRE5/zXpVEVPUgunvscYFtEip3pmLlhrWpacX7y7GCMo2/JM6LeHmiiNdH1FWgGCpUfircSwlWKUCAwEAAQ==

# table meta tsdb info

# Whether to record time-series versions of table metadata

canal.instance.tsdb.enable=true

# JDBC URL of the database where canal stores its table-structure log

canal.instance.tsdb.url=jdbc:mysql://172.16.1.62:3306/canal_tsdb

canal.instance.tsdb.dbUsername=root

canal.instance.tsdb.dbPassword=Pw#123456

#canal.instance.standby.address =

#canal.instance.standby.journal.name =

#canal.instance.standby.position =

#canal.instance.standby.timestamp =

#canal.instance.standby.gtid=

# rds oss binlog

#canal.instance.rds.accesskey=

#canal.instance.rds.secretkey=

#canal.instance.rds.instanceId=

# table regex

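# Tables whose MySQL data should be parsed, as Perl regular expressions; .*\\..* (the default) matches all tables in all databases

canal.instance.filter.regex=testDataBase\\..*

# Example 1: testA\\.test parses only table test in database testA

# Example 2: .*\\.test,.*\\.testA parses tables test and testA in all databases

# table black regex

# Tables matching this blacklist are excluded: their data is neither parsed nor delivered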

canal.instance.filter.black.regex=mysql\\.slave_.*

# table field filter(format: schema1.tableName1:field1/field2,schema2.tableName2:field1/field2)

#canal.instance.filter.field=test1.t_product:id/subject/keywords,test2.t_company:id/name/contact/ch

# table field black filter(format: schema1.tableName1:field1/field2,schema2.tableName2:field1/field2)

#canal.instance.filter.black.field=test1.t_product:subject/product_image,test2.t_company:id/name/contact/ch

# Kafka topic to write to

canal.mq.topic=testTopic2

# dynamic topic route by schema or table regex

#canal.mq.dynamicTopic=mytest1.user,topic2:mytest2\\..*,.*\\..*

canal.mq.partition=0

# hash partition config

canal.mq.enableDynamicQueuePartition=true

canal.mq.partitionsNum=3

#canal.mq.dynamicTopicPartitionNum=test.*:4,mycanal:6

canal.mq.partitionHash=.*\\..*

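# Example 1: test\\.test:pk1^pk2 matches a single table; the hash fields are pk1 + pk2

# Example 2: .*\\..*:id regex match; every matched table is hashed on field id

# Example 3: .*\\..*:$pk$ regex match; every matched table is hashed on its primary key (looked up automatically)

# Example 4: with no matching rule at all, messages go to partition 0 by default

# Example 5: .*\\..* regex match without pk information; every matched table is hashed on its table name

# Example 6: test\\.test:id,.*\\..* hashes table test.test by id and all other tables by table name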

#################################################
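
The table filters above are regular expressions matched against names of the form schema.table. In the properties text each backslash is doubled (\\.), which a Java properties file reads as the single-backslash regex \. when it is loaded. The sketch below approximates the matching with grep -E so that a filter can be tried out before the instance is saved. It is only an illustration: the function name is hypothetical, POSIX extended regular expressions stand in for the Perl syntax, and whole-name matching is an assumption.

```shell
# Hypothetical helper: succeeds if NAME (schema.table) fully matches at
# least one of the comma-separated regexes in FILTERS.
# FILTERS uses single backslashes, i.e. the value after properties loading.
# The body runs in a subshell so the IFS and globbing changes do not leak.
matches_filter() (
  filters="$1"
  name="$2"
  set -f          # no filename globbing while splitting on commas
  IFS=','
  for pattern in $filters; do
    if printf '%s\n' "$name" | grep -Eq "^(${pattern})\$"; then
      exit 0
    fi
  done
  exit 1
)

# Example:
#   matches_filter 'testDataBase\..*' 'testDataBase.orders' && echo "parsed"
#   matches_filter 'mysql\.slave_.*' 'mysql.slave_master_info' && echo "blacklisted"
```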

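11. Install canalServer

11.1 Download

Download canal.deployer-1.1.6.tar.gz from the Releases page of the alibaba/canal repository on GitHub.

11.2 Extract

Upload canal.deployer-1.1.6.tar.gz to the /opt directory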

cd /opt

mkdir -p /opt/canal.deployer-1.1.6

tar -zxvf canal.deployer-1.1.6.tar.gz -C /opt/canal.deployer-1.1.6

11.3 Set up the configuration file

cd /opt/canal.deployer-1.1.6/conf

mv canal.properties canal.properties_bak

cp canal_local.properties canal.properties

vim canal.properties

# register ip

canal.register.ip =

# canalAdmin address, port, user name, and password hash (the 40-hex-digit value below is in MySQL PASSWORD() format, not MD5)

canal.admin.manager = 172.16.1.64:8089

canal.admin.port = 11110

canal.admin.user = admin

canal.admin.passwd = 6BB4837EB74329105EE4568DDA7DC67ED2CA2AD9

# admin auto register

canal.admin.register.auto = true

# Cluster to register with in canalAdmin; must match the cluster name created under Cluster Management

canal.admin.register.cluster = cluster01

# Name of this canalServer as created under Server Management

canal.admin.register.name = bigdata6
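
After editing, the registration settings can be read back to confirm they match what was created in canalAdmin. A minimal sketch; the function name get_prop is hypothetical, and it assumes one "key = value" pair per line as in the listing above.

```shell
# Hypothetical helper: print the value of KEY from a .properties FILE.
# Commented-out lines are ignored; if the key occurs more than once,
# the last occurrence wins.
get_prop() {
  escaped=$(printf '%s' "$2" | sed 's/\./\\./g')   # make dots literal
  sed -n "s/^[[:space:]]*${escaped}[[:space:]]*=[[:space:]]*//p" "$1" | tail -n 1
}

# Example:
#   get_prop /opt/canal.deployer-1.1.6/conf/canal.properties canal.admin.register.cluster
#   get_prop /opt/canal.deployer-1.1.6/conf/canal.properties canal.admin.register.name
```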

11.4 Start canalServer

sh /opt/canal.deployer-1.1.6/bin/startup.sh

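After startup, the Server Management module of canalAdmin detects the corresponding Server entry dynamically and its status changes to started.

12. Set up canal Server HA

In Server Management, create a second Server that belongs to the same cluster as the first one, for example cluster01 for both.

Then repeat step 11 on another machine to install and start the canalServer back-end service.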

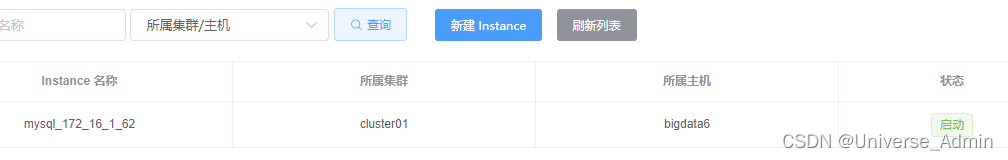

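13. Start the Instance

Once started, the instance is dynamically assigned to the HA server bigdata6 for execution.

Canal HA is now up and running; you can consume data from the configured topic with a Kafka client.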
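
One way to spot-check the output is Kafka's console consumer. The wrapper below is a sketch rather than part of the original setup: the function name and the KAFKA_CONSOLE_CONSUMER override variable are hypothetical, and it assumes kafka-console-consumer.sh from the Kafka distribution is on the PATH.

```shell
# Hypothetical helper: read the first few messages of a topic.
# Set KAFKA_CONSOLE_CONSUMER to the full path of the script if it is
# not on the PATH.
consume_canal_topic() {
  "${KAFKA_CONSOLE_CONSUMER:-kafka-console-consumer.sh}" \
    --bootstrap-server "$1" --topic "$2" --from-beginning --max-messages 5
}

# Example (broker and topic taken from the configuration in steps 8 and 10):
#   consume_canal_topic bigdata4:9092 testTopic2
```

If nothing arrives, make a change in a table covered by canal.instance.filter.regex and run the command again.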