pom.xml

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.SparkStream</groupId>

<artifactId>SparkStreamspace</artifactId>

<version>1.0-SNAPSHOT</version>

<build>

<sourceDirectory>src/main/scala</sourceDirectory>

<testSourceDirectory>src/test/scala</testSourceDirectory>

<plugins>

<plugin>

<groupId>net.alchim31.maven</groupId>

<artifactId>scala-maven-plugin</artifactId>

<version>3.2.2</version>

<executions>

<execution>

<goals>

<goal>compile</goal>

<goal>testCompile</goal>

</goals>

<configuration>

<args>

<arg>-dependencyfile</arg>

<arg>${project.build.directory}/.scala_dependencies</arg>

</args>

</configuration>

</execution>

</executions>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-shade-plugin</artifactId>

<version>2.4.3</version>

<executions>

<execution>

<phase>package</phase>

<goals>

<goal>shade</goal>

</goals>

<configuration>

<filters>

<filter>

<artifact>*:*</artifact>

<excludes>

<exclude>META-INF/*.SF</exclude>

<exclude>META-INF/*.DSA</exclude>

<exclude>META-INF/*.RSA</exclude>

</excludes>

</filter>

</filters>

<transformers>

<transformer implementation=

"org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">

<mainClass></mainClass>

</transformer>

</transformers>

</configuration>

</execution>

</executions>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<configuration>

<source>1.8</source>

<target>1.8</target>

</configuration>

</plugin>

</plugins>

</build>

<properties>

<scala.version>2.11.8</scala.version>

<hadoop.version>2.7.4</hadoop.version>

<spark.version>2.3.2</spark.version>

</properties>

<dependencies>

<!--Scala-->

<dependency>

<groupId>org.scala-lang</groupId>

<artifactId>scala-library</artifactId>

<version>${scala.version}</version>

</dependency>

<!--Spark-->

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<!--Hadoop-->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>${hadoop.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.46</version>

</dependency>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-clients</artifactId>

<version>2.0.0</version>

</dependency>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-streams</artifactId>

<version>2.0.0</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming-kafka-0-8_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

</dependencies>

</project>

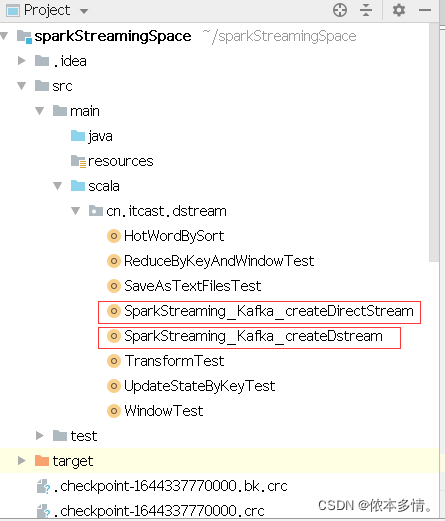

SparkStreaming_Kafka_createDstream.scala

import org.apache.spark.streaming.dstream.{DStream, ReceiverInputDStream}

import org.apache.spark.streaming.kafka.KafkaUtils

import org.apache.spark.streaming.{Seconds, StreamingContext}

import org.apache.spark.{SparkConf, SparkContext}

import scala.collection.immutable

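/**

* Pulls data from Kafka using the receiver-based approach.

* As data is read, the offsets recorded by the consumer are committed back and stored in ZooKeeper.

* Enable the write-ahead log (WAL) to prevent data loss; this requires a checkpoint directory.

*/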

object SparkStreaming_Kafka_createDstream {

def main(args: Array[String]): Unit = {

// 1. Initialize the SparkConf, SparkContext, and StreamingContext

val sparkConf: SparkConf = new SparkConf()

.setAppName("SparkStreaming_Kafka_createDstream")

.setMaster("local[4]")

.set("spark.streaming.receiver.writeAheadLog.enable", "true")

val sc: SparkContext = new SparkContext(sparkConf)

// Set the log level

sc.setLogLevel("WARN")

// Create the StreamingContext with a 5-second batch interval

val ssc: StreamingContext = new StreamingContext(sc, Seconds(5))

// Set the checkpoint directory; it is required once the WAL is enabled

ssc.checkpoint("./Kafka_Receiver")

// 2. Pull data from Kafka with KafkaUtils

val zkQuorum = "hadoop01:2181,hadoop02:2181,hadoop03:2181"

val groupId = "spark_receiver"

// The value 1 is the number of consumer threads each receiver uses for this topic

val topics = Map("kafka_spark" -> 1)

val receiverDstream: immutable.IndexedSeq[ReceiverInputDStream[(String, String)]] = (1 to 3)

.map(x => {

val stream: ReceiverInputDStream[(String, String)] = KafkaUtils

.createStream(ssc, zkQuorum, groupId, topics)

stream

})

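// 3. Union the receiver streams into one DStream of (key, value) pairs; the value is the message payload and the key is the Kafka message key, not the offset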

val unionDstream: DStream[(String, String)] = ssc.union(receiverDstream)

// 4. Word count over the message values

val topicData: DStream[String] = unionDstream.map(_._2)

val wordAndOne: DStream[(String, Int)] = topicData.flatMap(_.split(" ")).map((_, 1))

val result: DStream[(String, Int)] = wordAndOne.reduceByKey(_ + _)

// 5. Print the result

result.print()

// 6. Start the streaming computation

ssc.start()

ssc.awaitTermination()

}

}

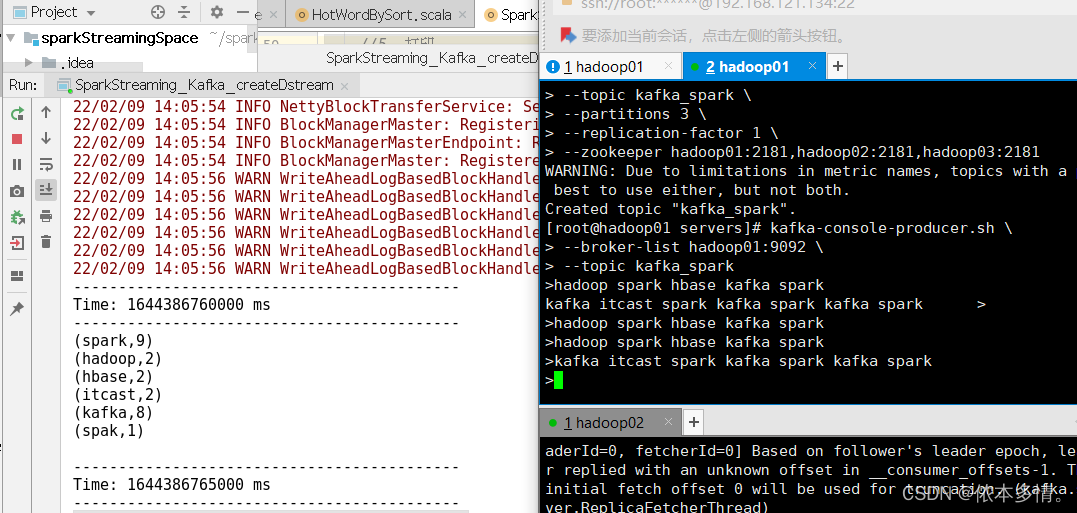

Start the ZooKeeper and Kafka clusters.

Create the topic

kafka-topics.sh --create \

--topic kafka_spark \

--partitions 3 \

--replication-factor 1 \

--zookeeper hadoop01:2181,hadoop02:2181,hadoop03:2181

Start a producer

kafka-console-producer.sh \

--broker-list hadoop01:9092 \

--topic kafka_spark
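Each line typed into the console producer becomes one message value, and each 5-second batch is counted on its own. As a rough sketch of what steps 4 and 5 compute for a single batch, the same flatMap/map/reduce logic can be tried on plain Scala collections, with no Spark or Kafka required. `WordCountSketch` and `countWords` are hypothetical names introduced for illustration, and `groupBy` plus `sum` stands in here for `reduceByKey`.

```scala
object WordCountSketch {
  // Hypothetical helper (not part of the original job): counts the words in
  // one batch of message values, mirroring
  // flatMap(_.split(" ")).map((_, 1)).reduceByKey(_ + _).
  def countWords(batch: Seq[String]): Map[String, Int] =
    batch
      .flatMap(_.split(" "))          // one element per word
      .map(word => (word, 1))         // pair each word with 1
      .groupBy(_._1)                  // group the pairs by word
      .map { case (word, pairs) => (word, pairs.map(_._2).sum) } // sum the 1s

  def main(args: Array[String]): Unit = {
    // Two lines as they might be typed into the console producer.
    val batch = Seq("hello spark", "hello kafka")
    // Prints (hello,2), (kafka,1), (spark,1), one per line.
    countWords(batch).toSeq.sortBy(_._1).foreach(println)
  }
}
```

Because the streaming job keeps no state across batches, a word sent in two different batches is reported once per batch rather than as a running total.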

SparkStreaming_Kafka_createDirectStream.scala

import kafka.serializer.StringDecoder

import org.apache.spark.{SparkConf, SparkContext}

import org.apache.spark.streaming.{Seconds, StreamingContext}

import org.apache.spark.streaming.dstream.{DStream, InputDStream}

import org.apache.spark.streaming.kafka.KafkaUtils

// Word count with Spark Streaming reading from Kafka, using the direct approach (low-level API)

object SparkStreaming_Kafka_createDirectStream {

def main(args: Array[String]): Unit = {

// 1. Create the SparkConf

val sparkConf: SparkConf = new SparkConf()

.setAppName("SparkStreaming_Kafka_createDirectStream")

.setMaster("local[2]")

// 2. Create the SparkContext

val sc = new SparkContext(sparkConf)

sc.setLogLevel("WARN")

// 3. Create the StreamingContext

val ssc = new StreamingContext(sc,Seconds(5))

ssc.checkpoint("./Kafka_Direct")

// 4. Configure the Kafka parameters

val kafkaParams=Map("metadata.broker.list"->"hadoop01:9092,hadoop02:9092,hadoop03:9092","group.id"->"spark_direct")

// 5. Define the topic

val topics=Set("kafka_direct0")

// 6. Receive Kafka data with KafkaUtils.createDirectStream; this uses Kafka's low-level API, so offsets are not managed by ZooKeeper

val dstream: InputDStream[(String, String)] = KafkaUtils.createDirectStream[String,String,StringDecoder,StringDecoder](ssc,kafkaParams,topics)

// 7. Extract the message values from the topic records

val topicData: DStream[String] = dstream.map(_._2)

// 8. Split each line into words and pair each word with 1

val wordAndOne: DStream[(String, Int)] = topicData.flatMap(_.split(" ")).map((_,1))

// 9. Sum the counts for each word

val result: DStream[(String, Int)] = wordAndOne.reduceByKey(_+_)

// 10. Print the output

result.print()

// Start the computation

ssc.start()

ssc.awaitTermination()

}

}

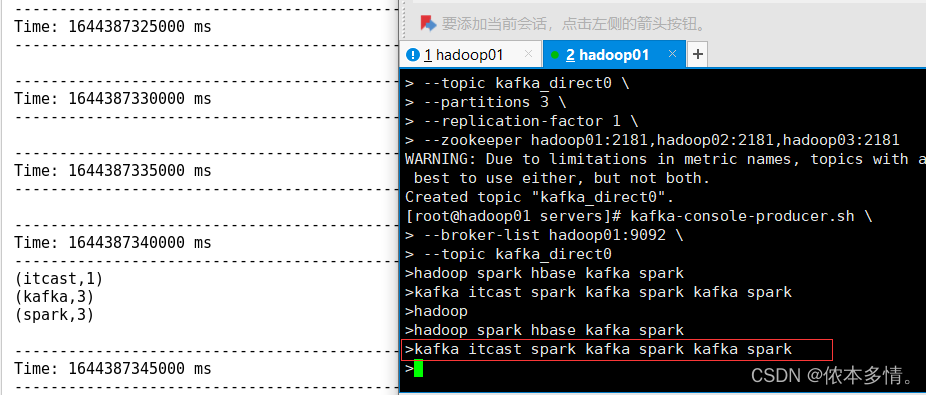

Create the topic

kafka-topics.sh --create \

--topic kafka_direct0 \

--partitions 3 \

--replication-factor 1 \

--zookeeper hadoop01:2181,hadoop02:2181,hadoop03:2181

Start a producer

kafka-console-producer.sh \

--broker-list hadoop01:9092 \

--topic kafka_direct0
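With `createDirectStream`, each batch is defined by a range of offsets per partition, and the application itself (through its checkpoint), rather than ZooKeeper, remembers where it left off. The toy model below sketches that bookkeeping in plain Scala. It is only an illustration: `DirectOffsetSketch`, `nextBatch`, and `advance` are hypothetical names, the `OffsetRange` case class is a simplified stand-in for Spark's own class of the same name, and starting unseen partitions at offset 0 is an assumption made to keep the example short.

```scala
object DirectOffsetSketch {
  // Simplified stand-in for the per-partition offset range of one batch.
  final case class OffsetRange(topic: String, partition: Int, fromOffset: Long, untilOffset: Long) {
    // Number of messages covered by this range.
    def count: Long = untilOffset - fromOffset
  }

  // Given the offsets already consumed and the latest offsets on the brokers
  // (both keyed by partition), return the ranges the next batch should read.
  // Assumption: a partition not seen before starts at offset 0.
  def nextBatch(topic: String, consumed: Map[Int, Long], latest: Map[Int, Long]): Seq[OffsetRange] =
    latest.toSeq.sortBy(_._1).map { case (partition, until) =>
      OffsetRange(topic, partition, consumed.getOrElse(partition, 0L), until)
    }

  // Offsets to remember once the batch has been processed.
  def advance(ranges: Seq[OffsetRange]): Map[Int, Long] =
    ranges.map(r => r.partition -> r.untilOffset).toMap

  def main(args: Array[String]): Unit = {
    val consumed = Map(0 -> 5L, 1 -> 3L)
    val latest   = Map(0 -> 8L, 1 -> 3L, 2 -> 4L)
    val ranges   = nextBatch("kafka_direct0", consumed, latest)
    ranges.foreach(r => println(s"partition ${r.partition}: ${r.count} new messages"))
    println(advance(ranges))
  }
}
```

In this model each Kafka partition maps to exactly one range per batch, which reflects why the direct approach needs no separate receivers and gives one RDD partition per Kafka partition.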