Scraping images with Python (lsp edition)

Preface

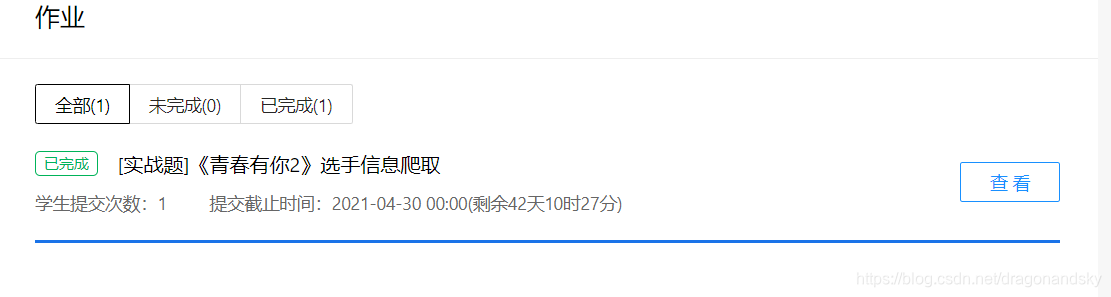

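To finish a homework assignment from my teacher (scraping Youth With You 2, 青春有你2), I was given a template. I reused that template to scrape images, and it has worked every time.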

I. What do you need?

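Basic Python. For software, use whatever you prefer. One option is Anaconda, a Python distribution that bundles Jupyter Notebook, so you can write code in the browser, and it ships with many common packages preinstalled, which is convenient. The code below relies on requests, beautifulsoup4 and lxml.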

II. The assignment template

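1. Analyze the data behind the URL (every crawler has to start by analyzing the target pages; since this is an lsp site, I won't walk through the analysis here)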

2. Apply the template

Step 1: fetch the part of the HTML you need from the URL

import json
import re
import os
import datetime

import requests
from bs4 import BeautifulSoup

# Date stamp used in the JSON file name, e.g. 20200801
today = datetime.date.today().strftime('%Y%m%d')

def crawl_wiki_data(n):
    """Fetch list pages 1..n and hand each page body to parse_wiki_data"""
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/67.0.3396.99 Safari/537.36'
    }
    base_url = 'https://m.mm131.net/more.php?page='
    for page in range(1, int(n) + 1):
        # Build the URL from the base on every pass instead of appending to it
        url = base_url + str(page)
        print(url)
        response = requests.get(url, headers=headers)
        print(response.status_code)
        soup = BeautifulSoup(response.content, 'lxml')
        content = soup.find('body')
        parse_wiki_data(content)
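The loop above only works if the URL is rebuilt from the base for every page; appending the page number to the same string twice would give `...page=12` on the second pass. A minimal sketch of building all page URLs up front, assuming the `more.php?page=N` pattern used above (`build_page_urls` is a hypothetical helper, not part of the original template):

```python
BASE_URL = 'https://m.mm131.net/more.php?page='

def build_page_urls(n):
    """Return the list-page URLs for pages 1..n (hypothetical helper)."""
    return [BASE_URL + str(page) for page in range(1, int(n) + 1)]

# input() returns a string, so the helper accepts '2' as well as 2
for url in build_page_urls('2'):
    print(url)
```

With the URLs in a list, the crawl loop only has to request each one in turn and never touches the base string.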

Step 2: from that HTML, extract the album names and the album links

def parse_wiki_data(content):
    """
    Parse one list page and write its albums to a JSON file under
    C:/Users/19509/Desktop/python/girls/
    """
    girls = []
    bs = BeautifulSoup(str(content), 'lxml')
    all_article = bs.find_all('article')
    for h2_title in all_article:
        title_link = h2_title.find('a', class_="post-title-link")
        girl = {}
        # Album name
        girl["name"] = title_link.text
        # Album link
        girl["link"] = "https://m.mm131.net" + title_link.get('href')
        girls.append(girl)
    # Dump the list directly; converting it with str() and swapping quotes
    # breaks as soon as a name contains a quote character
    with open('C:/Users/19509/Desktop/python/girls/' + today + '.json', 'w', encoding='UTF-8') as f:
        json.dump(girls, f, ensure_ascii=False)
    crawl_pic_urls()
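Writing the album list to JSON is the step most likely to trip on unusual titles. The sketch below compares two ways of serializing the list: passing it straight to the `json` module, and converting it with `str()` and swapping the quotes. The record is made up for illustration, and `to_json_text` and `fragile_round_trip` are hypothetical names.

```python
import json

# Made-up record; the title deliberately contains double quotes
girls = [{"name": 'Album "A"', "link": "https://example.com/album/1.html"}]

def to_json_text(records):
    """Serialize the list directly; json handles the escaping."""
    return json.dumps(records, ensure_ascii=False)

def fragile_round_trip(records):
    """Convert with str() and swap quotes; return None if the result is not valid JSON."""
    try:
        return json.loads(str(records).replace("'", '"'))
    except json.JSONDecodeError:
        return None

print(to_json_text(girls))
print(fragile_round_trip(girls))
```

The direct dump survives a title with quotes in it, while the string-replacement route fails to parse.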

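Step 3: read the JSON file, follow each album link to collect the link of every image, and store those links in a list that is passed to the next function for downloading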

def crawl_pic_urls():
    """
    Collect the image links of every album
    """
    with open('C:/Users/19509/Desktop/python/girls/' + today + '.json', 'r', encoding='UTF-8') as file:
        json_array = json.loads(file.read())
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/67.0.3396.99 Safari/537.36'
    }
    for girl in json_array:
        name = girl['name']
        link = girl['link']
        pic_urls = []
        # Fetch the album page
        response = requests.get(link, headers=headers)
        bs = BeautifulSoup(response.content, 'lxml')
        # Read the pager text; the second number in it is the total image count
        pic = bs.find('div', class_="paging").find('span', class_="rw").text
        pic = re.findall(r"\d+", pic)
        pic_number = int(pic[1]) + 1
        # Get the link of the first image
        pic_url = bs.find('div', class_="post-content single-post-content").find('img').get('src')
        pic_urls.append(pic_url)
        # Build the remaining links by replacing the character at index 33
        # (the image number in the first image's URL) with 2..N
        for m in range(2, pic_number):
            pic_urls.append(pic_url[:33] + str(m) + pic_url[34:])
        # Image requests need a Referer pointing at the album page; use a separate
        # name so the headers for the next album page are not overwritten
        download_headers = {"Referer": link,
                            "User-Agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/84.0.4147.89 Safari/537.36 SLBrowser/7.0.0.9 SLBChan/25"}
        down_pic(name, pic_urls, download_headers)
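Replacing the character at a fixed index only works while every image URL has exactly the same prefix length. A possible alternative is to let a regular expression find the number in the file name. This is only a sketch under assumptions: the pager text (`"1/45"`) and the image URL shape (`.../1.jpg`) are invented for illustration, and `parse_total` and `build_pic_urls` are hypothetical helpers.

```python
import re

def parse_total(pager_text):
    """Return the second number in the pager text, taken to be the total image count."""
    numbers = re.findall(r"\d+", pager_text)
    return int(numbers[1])

def build_pic_urls(first_url, total):
    """Swap the number in the file name of first_url for 1..total."""
    # The lookahead matches only digits that sit directly before the file extension
    pattern = r"\d+(?=\.\w+$)"
    return [re.sub(pattern, str(m), first_url) for m in range(1, total + 1)]

total = parse_total("1/45")  # assumed pager text
urls = build_pic_urls("https://img.example.com/pic/5678/1.jpg", total)
print(len(urls), urls[0], urls[-1])
```

Because the pattern is anchored to the file extension, digits elsewhere in the URL (album id, host name) are left alone, and two-digit page numbers need no special handling.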

Step 4: download the images and save them

def down_pic(name, pic_urls, headers):
    """Download the images of one album into their own folder"""
    path = 'C:/Users/19509/Desktop/python/girls/' + 'pic/' + name + '/'
    if not os.path.exists(path):
        os.makedirs(path)
    for i, pic_url in enumerate(pic_urls):
        try:
            pic = requests.get(pic_url, headers=headers)
            # Treat 4xx/5xx responses as failures instead of saving an error page as .jpg
            pic.raise_for_status()
            string = str(i + 1) + '.jpg'
            with open(path + string, 'wb') as f:
                f.write(pic.content)
            print('Downloaded image %s: %s' % (str(i + 1), str(pic_url)))
        except Exception as e:
            print('Failed to download image %s: %s' % (str(i + 1), str(pic_url)))
            print(e)
            continue
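The album name is used directly as a folder name, and Windows rejects names containing any of `\ / : * ? " < > |`. A small sketch of cleaning the name before building the path; `safe_dir_name` is a hypothetical helper, and whether the scraped titles ever contain such characters is an assumption.

```python
import re

def safe_dir_name(name, fallback="untitled"):
    """Replace characters Windows forbids in file names and trim trailing dots and spaces."""
    cleaned = re.sub(r'[\\/:*?"<>|]', '_', name).strip().rstrip('. ')
    # An empty result would point at the parent folder, so fall back to a fixed name
    return cleaned or fallback

print(safe_dir_name('a/b:c?'))
print(safe_dir_name('美女图集'))
```

Chinese characters pass through unchanged; only the reserved punctuation is replaced.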

Finally, print the absolute path of every downloaded image, and write a main block that runs all the functions

def show_pic_path(path):
    """Walk through every downloaded image and print its absolute path"""
    pic_num = 0
    for (dirpath, dirnames, filenames) in os.walk(path):
        for filename in filenames:
            pic_num += 1
            print("Image %d: %s" % (pic_num, os.path.join(dirpath, filename)))
    print("Scraped %d lsp images in total" % pic_num)

if __name__ == '__main__':
    n = input('How many pages: ')
    # crawl_wiki_data returns nothing; it drives parsing and downloading itself
    crawl_wiki_data(n)
    # Print the paths of the downloaded images
    show_pic_path('C:/Users/19509/Desktop/python/girls/pic')
    print("All done! Thanks")

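Note: 'C:/Users/19509/Desktop/python/girls' is my directory, not yours. You need to create your own directory with a girls folder inside it, and change the paths in the code to match.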
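If you would rather not create the folders by hand, `os.makedirs` with `exist_ok=True` can do it when the script starts. A minimal sketch; `ensure_dirs` is a hypothetical helper, and the demo runs in a temporary directory that stands in for your own base folder.

```python
import os
import tempfile

def ensure_dirs(base_dir):
    """Create base_dir and its pic subfolder if they are missing; return the pic path."""
    pic_dir = os.path.join(base_dir, 'pic')
    os.makedirs(pic_dir, exist_ok=True)  # no error if the folders already exist
    return pic_dir

# Demo in a throwaway directory; replace it with your own folder
with tempfile.TemporaryDirectory() as tmp:
    pic_dir = ensure_dirs(os.path.join(tmp, 'girls'))
    print(os.path.isdir(pic_dir))
```

Keeping the base folder in one variable also means the path only has to be changed in one place.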

Summary

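This post involves quite a few small details. For example, the headers used when downloading images differ from the earlier ones (they add a Referer pointing at the album page), and the number of images in an album is extracted with a regular expression. One open problem: I couldn't get the album names to come out in Chinese. If anyone knows how to fix that, please tell me!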
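One possible cause of the album-name problem, offered only as a guess since the post does not diagnose it, is an encoding mismatch: if a page is served in GBK but decoded as UTF-8, Chinese text comes out garbled. The sketch below shows the effect on a made-up byte string; `decode_html` is a hypothetical helper, and the assumption that the site uses GBK is unverified.

```python
def decode_html(raw_bytes, encoding):
    """Decode raw page bytes with an explicit encoding, replacing undecodable bytes."""
    return raw_bytes.decode(encoding, errors='replace')

# Stand-in for the bytes of a GBK-encoded page title
sample = '美女图集'.encode('gbk')

print(decode_html(sample, 'gbk'))    # decoded with the matching encoding
print(decode_html(sample, 'utf-8'))  # decoded with the wrong encoding
```

If this turns out to be the cause, two things worth trying are setting `response.encoding` before reading `response.text`, or passing `from_encoding` to `BeautifulSoup`.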